A2GC: Asymmetric Aggregation with Geometric Constraints for Locally Aggregated Descriptors

Visual Place Recognition (VPR) aims to match query images against a database using visual cues. State-of-the-art methods aggregate features from deep backbones to form global descriptors. Optimal transport-based aggregation methods reformulate feature-to-cluster assignment as a transport problem, but the standard Sinkhorn algorithm symmetrically treats source and target marginals, limiting effectiveness when image features and cluster centers exhibit substantially different distributions. We propose an asymmetric aggregation VPR method with geometric constraints for locally aggregated descriptors, called $A^2$GC-VPR. Our method employs row-column normalization averaging with separate marginal calibration, enabling asymmetric matching that adapts to distributional discrepancies in visual place recognition. Geometric constraints are incorporated through learnable coordinate embeddings, computing compatibility scores fused with feature similarities, thereby promoting spatially proximal features to the same cluster and enhancing spatial awareness. Experimental results on MSLS, NordLand, and Pittsburgh datasets demonstrate superior performance, validating the effectiveness of our approach in improving matching accuracy and robustness.

💡 Research Summary

Visual Place Recognition (VPR) requires robust global descriptors that can match a query image against a large database despite drastic appearance changes caused by illumination, weather, seasons, or viewpoint variations. Recent state‑of‑the‑art pipelines first extract dense local features with a deep backbone and then aggregate them into a compact global vector. Optimal‑transport‑based aggregation, exemplified by SALAD, treats the assignment of local features to learned cluster centers as a transport problem and solves it with the Sinkhorn algorithm. However, the standard Sinkhorn formulation enforces symmetric marginal constraints, implicitly assuming that the distributions of image features and cluster centers are balanced. In practice, feature distributions are often heavy‑tailed, multimodal, or otherwise asymmetric, which hampers the effectiveness of symmetric transport solvers.

The paper introduces A²GC‑VPR, a novel VPR framework that (1) relaxes the symmetry assumption through an asymmetric optimal‑transport solver and (2) incorporates geometric constraints that encode the spatial layout of features. The asymmetric solver works in the log‑domain. First, it performs a few (T = 3) iterations of row‑column normalization averaging: each iteration computes a row‑normalized log‑matrix and a column‑normalized log‑matrix, then averages them. This averaging balances the influence of rows and columns without forcing either to dominate. After the iterative normalization, separate marginal calibrations are applied: a source‑side correction u aligns the row sums with the source marginal a, and a target‑side correction v aligns the column sums with the target marginal b. The final transport matrix P is obtained by exponentiating the calibrated log‑matrix. By allowing u and v to be learned independently, the method adapts to heterogeneous source and target distributions, effectively handling cases where the number of local features differs from the number of clusters or where their statistical properties diverge.
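The solver steps described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `logsumexp` and `asymmetric_transport` are hypothetical, the corrections u and v are computed in closed form here (the summary describes them as learned), and applying the source-side calibration before the target-side one is an arbitrary choice.

```python
import numpy as np


def logsumexp(x, axis):
    """Numerically stable log(sum(exp(x))) along one axis, keeping dims."""
    m = np.max(x, axis=axis, keepdims=True)
    return m + np.log(np.sum(np.exp(x - m), axis=axis, keepdims=True))


def asymmetric_transport(scores, a, b, num_iters=3):
    """Sketch of row-column normalization averaging plus marginal calibration.

    scores: (n, m) score matrix between n local features and m clusters.
    a: (n,) source marginal, b: (m,) target marginal (both positive).
    ASSUMPTION: u and v are closed-form corrections here, not learned ones.
    """
    log_p = np.asarray(scores, dtype=np.float64)
    for _ in range(num_iters):
        row_norm = log_p - logsumexp(log_p, axis=1)  # each row sums to 1
        col_norm = log_p - logsumexp(log_p, axis=0)  # each column sums to 1
        log_p = 0.5 * (row_norm + col_norm)          # average in the log domain

    # Source-side correction u: align row sums with the source marginal a.
    u = np.log(a)[:, None] - logsumexp(log_p, axis=1)
    log_p = log_p + u
    # Target-side correction v: align column sums with the target marginal b.
    v = np.log(b)[None, :] - logsumexp(log_p, axis=0)
    log_p = log_p + v

    return np.exp(log_p)  # transport matrix P
```

Because the target-side correction is applied last in this sketch, the column sums of P match b exactly while the row sums only approximate a. Swapping the order of the two calibrations would reverse which marginal is matched exactly.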

Geometric constraints are introduced via learnable coordinate embeddings. For each spatial location (x, y) in the feature map, normalized coordinates are projected through a 1×1 convolution (ϕ_g) into a d_g‑dimensional embedding g_xy. Each cluster center j also carries a learnable geometric embedding c_gj. The geometric compatibility score S_gij = g_xyᵀ c_gj, where i indexes the spatial location (x, y) and j indexes the cluster, is combined with the conventional feature similarity score S_fij using a scalar weight λ_g (learned during training). The fused matrix S_ij = S_fij + λ_g S_gij serves as the score matrix of the transport problem (equivalently, its negation is the cost), encouraging spatially proximate features to be assigned to the same cluster and thus endowing the aggregated descriptor with implicit spatial awareness.
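The score fusion can be illustrated with a short NumPy sketch. It relies on the fact that a 1×1 convolution over a two-channel coordinate map is the same as applying one linear map to every (x, y) pair. The helper names `coordinate_grid` and `fused_scores`, the [-1, 1] normalization range, and the bias term are assumptions made for illustration and are not taken from the paper.

```python
import numpy as np


def coordinate_grid(h, w):
    """Normalized (x, y) coordinates for an h x w feature map, shape (h*w, 2).

    ASSUMPTION: coordinates are normalized to [-1, 1]; the source only says
    "normalized coordinates".
    """
    ys = np.linspace(-1.0, 1.0, h)
    xs = np.linspace(-1.0, 1.0, w)
    yy, xx = np.meshgrid(ys, xs, indexing="ij")
    return np.stack([xx.ravel(), yy.ravel()], axis=1)


def fused_scores(feat_scores, coords, w_g, b_g, cluster_geo, lambda_g=0.15):
    """Fuse feature similarity with geometric compatibility.

    feat_scores: (n, m) feature similarity scores S_f.
    coords:      (n, 2) normalized coordinates.
    w_g, b_g:    (2, d_g) weight and (d_g,) bias standing in for the 1x1 conv.
    cluster_geo: (m, d_g) geometric embeddings of the cluster centers.
    """
    geo = coords @ w_g + b_g          # per-location embeddings g_xy, (n, d_g)
    geo_scores = geo @ cluster_geo.T  # geometric compatibility S_g, (n, m)
    return feat_scores + lambda_g * geo_scores  # S = S_f + lambda_g * S_g
```

With d_g = 16 and m = 64 as reported in the summary, `w_g` would have shape (2, 16) and `cluster_geo` shape (64, 16). Setting `lambda_g` to zero recovers the purely feature-based scores.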

The implementation uses DINOv2 ViT‑B/14 as the backbone, extracting a 768‑dimensional global token and a dense feature map (768 × H × W). The global token is projected to a 256‑dimensional vector, while local features are projected to a lower dimension before entering the transport module. The system employs m = 64 clusters, a geometric embedding dimension d_g = 16, and λ_g initialized to 0.15. Training is performed on the GSV‑Cities dataset (≈1.2 M images) with Multi‑Similarity loss and a miner, using AdamW (lr = 6e‑5) and a linear decay schedule over 4000 iterations. Retrieval is conducted with FAISS using L2 distance on L2‑normalized descriptors.
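The retrieval step can be illustrated without FAISS. The sketch below is a brute-force NumPy stand-in (it is not the FAISS API, and `l2_normalize` and `retrieve` are hypothetical names). It uses the identity ‖q − d‖² = 2 − 2 q·d, which holds for unit-length vectors, so ranking by L2 distance on normalized descriptors gives the same order as ranking by cosine similarity.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale each row of x to unit L2 norm (eps guards against zero rows)."""
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    return x / np.maximum(norms, eps)


def retrieve(query_desc, db_desc, k):
    """Return indices of the k nearest database descriptors per query.

    Brute-force stand-in for an exact L2 index; a real system at database
    scale would use a dedicated library such as FAISS.
    """
    q = l2_normalize(query_desc)
    d = l2_normalize(db_desc)
    sq_dist = 2.0 - 2.0 * (q @ d.T)  # squared L2 distance between unit vectors
    return np.argsort(sq_dist, axis=1)[:, :k]
```

Because descriptors are normalized inside `retrieve`, rescaling a query does not change its ranking.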

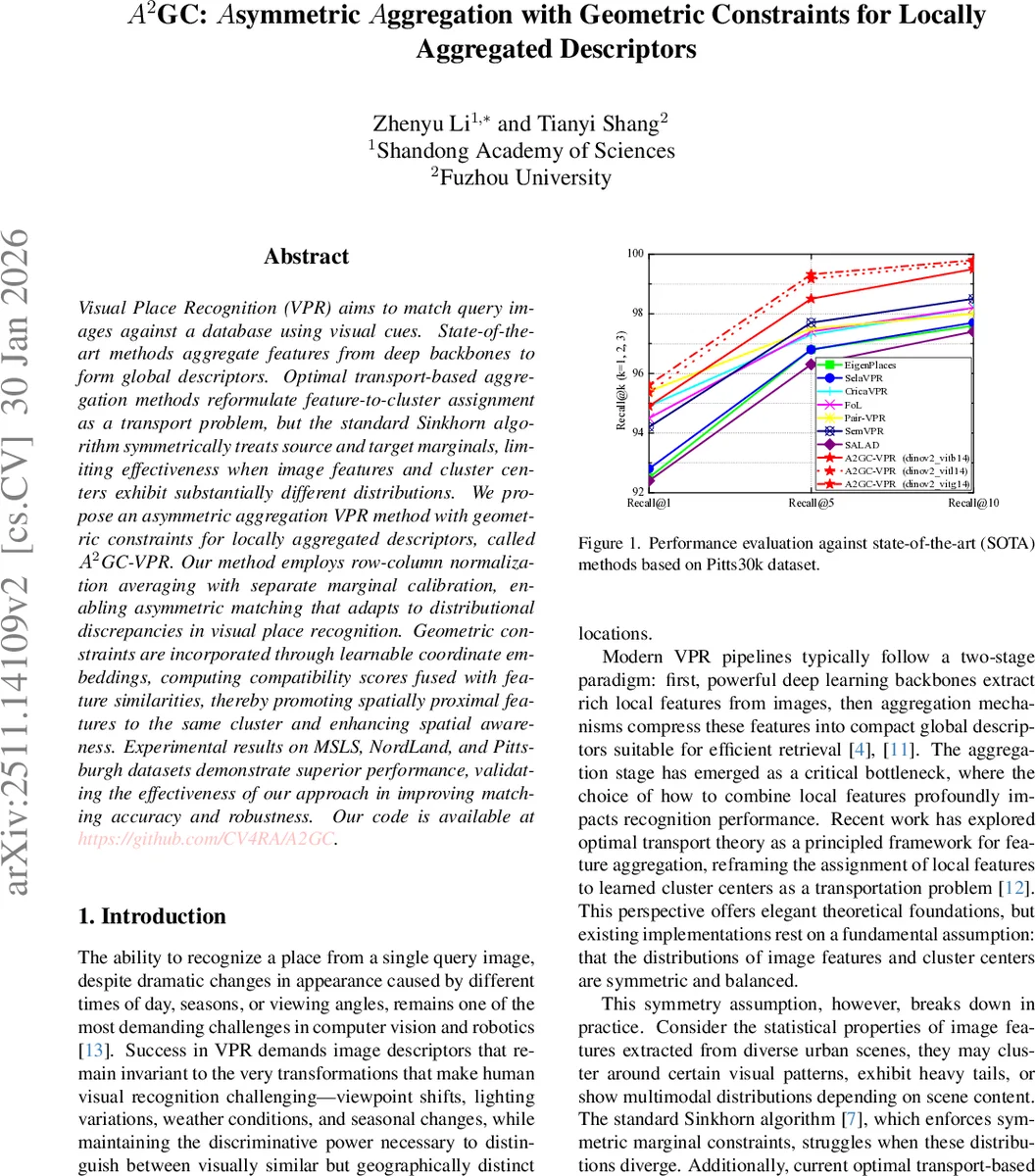

Extensive experiments on four benchmarks—Pittsburgh30k, Pitts250k‑test, MSLS (validation and challenge), and NordLand—demonstrate that A²GC‑VPR consistently outperforms or matches the best existing methods. On Pittsburgh30k, it achieves Recall@1/5/10 of 95.6 %/99.3 %/99.8 %, surpassing Pair‑VPR and CricaVPR. On Pitts250k‑test it reaches 97.3 %/99.3 %/99.7 %. On MSLS‑val it records 93.6 %/97.5 %/97.9 %, comparable to the top‑performing FoL and Pair‑VPR, and on MSLS‑challenge it attains 80.6 %/90.9 %/92.5 %. Ablation studies reveal that removing the asymmetric transport (i.e., using standard Sinkhorn) degrades performance by 2–4 % absolute Recall, while omitting geometric constraints reduces spatial coherence and similarly harms accuracy. Varying the number of normalization iterations shows that three iterations provide the best trade‑off between convergence speed and descriptor quality.
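For readers unfamiliar with the metric, Recall@K in VPR is usually computed as the fraction of queries for which at least one of the top-K retrieved database images is a true match. The sketch below shows that computation; the function name `recall_at_k` and its input layout are illustrative assumptions, and the toy data is made up rather than drawn from any benchmark.

```python
def recall_at_k(ranked_ids, positives, ks=(1, 5, 10)):
    """Fraction of queries with at least one correct match in the top k.

    ranked_ids: per-query list of database indices, best match first.
    positives:  per-query set of database indices counted as correct.
    """
    recalls = {}
    for k in ks:
        hits = sum(
            1
            for ranked, pos in zip(ranked_ids, positives)
            if any(idx in pos for idx in ranked[:k])
        )
        recalls[k] = hits / len(ranked_ids)
    return recalls


# Toy example with three queries (illustrative data only).
ranked = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
truth = [{1}, {6}, {0}]
toy_recalls = recall_at_k(ranked, truth, ks=(1, 3))
```

In the toy example, only the first query is matched at rank 1 and the second is matched at rank 3, while the third query has no correct match in its list.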

From a computational standpoint, the asymmetric solver adds only a modest overhead: log‑domain operations are cheap, and the limited number of iterations keeps runtime comparable to conventional VLAD‑style aggregators. Memory consumption remains within the limits of a single V100 GPU, making the approach suitable for real‑time robotics or mobile applications.

In summary, A²GC‑VPR contributes three key innovations to VPR: (1) an asymmetric optimal‑transport aggregation that accommodates distributional mismatches between features and clusters, (2) learnable geometric constraints that embed spatial relationships into the assignment process, and (3) a seamless integration with modern transformer‑based backbones while preserving efficiency. The authors release code and pretrained models, facilitating reproducibility and future extensions such as multi‑scale asymmetric transport or dynamic cluster adaptation.
