Towards Atoms of Large Language Models

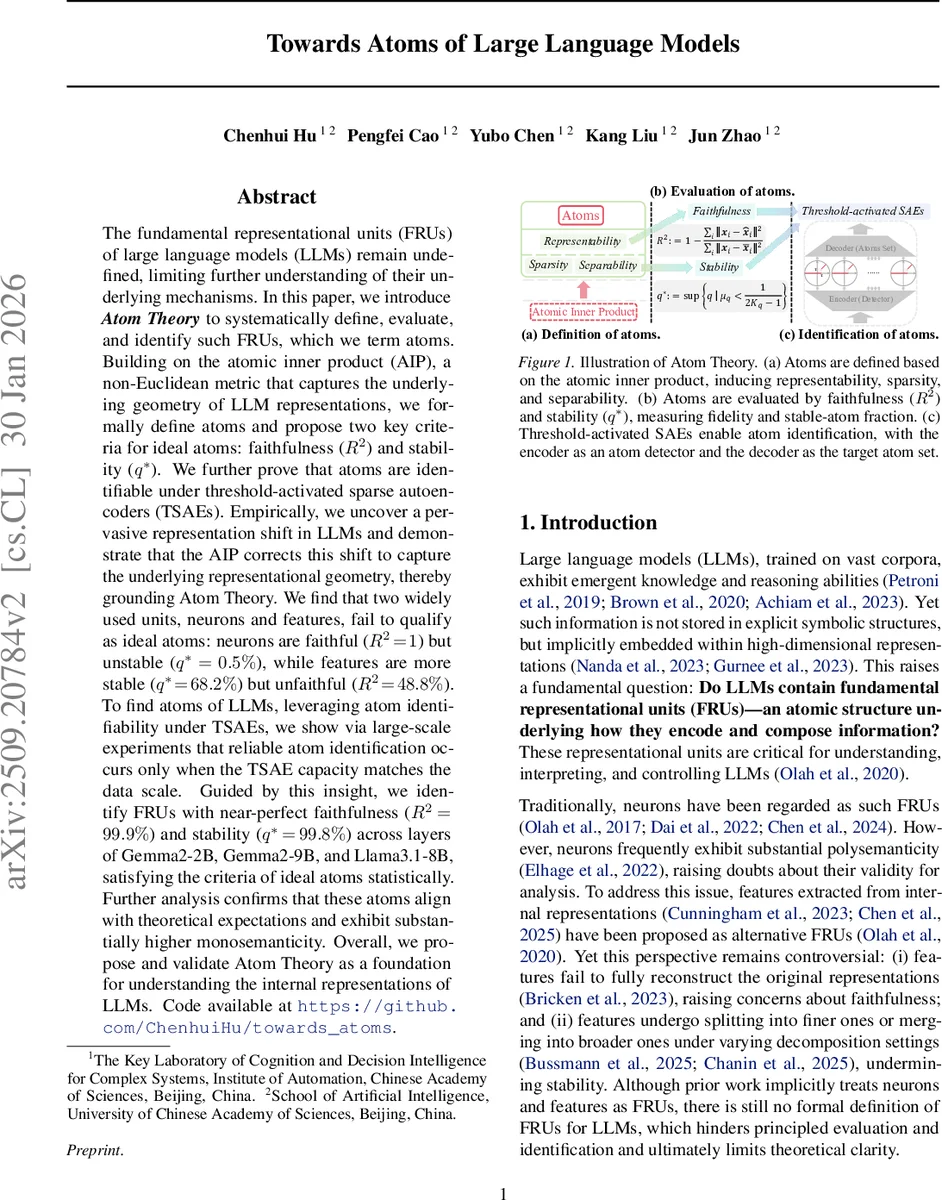

The fundamental representational units (FRUs) of large language models (LLMs) remain undefined, limiting further understanding of their underlying mechanisms. In this paper, we introduce Atom Theory to systematically define, evaluate, and identify such FRUs, which we term atoms. Building on the atomic inner product (AIP), a non-Euclidean metric that captures the underlying geometry of LLM representations, we formally define atoms and propose two key criteria for ideal atoms: faithfulness ($R^2$) and stability ($q^$). We further prove that atoms are identifiable under threshold-activated sparse autoencoders (TSAEs). Empirically, we uncover a pervasive representation shift in LLMs and demonstrate that the AIP corrects this shift to capture the underlying representational geometry, thereby grounding Atom Theory. We find that two widely used units, neurons and features, fail to qualify as ideal atoms: neurons are faithful ($R^2=1$) but unstable ($q^=0.5%$), while features are more stable ($q^=68.2%$) but unfaithful ($R^2=48.8%$). To find atoms of LLMs, leveraging atom identifiability under TSAEs, we show via large-scale experiments that reliable atom identification occurs only when the TSAE capacity matches the data scale. Guided by this insight, we identify FRUs with near-perfect faithfulness ($R^2=99.9%$) and stability ($q^=99.8%$) across layers of Gemma2-2B, Gemma2-9B, and Llama3.1-8B, satisfying the criteria of ideal atoms statistically. Further analysis confirms that these atoms align with theoretical expectations and exhibit substantially higher monosemanticity. Overall, we propose and validate Atom Theory as a foundation for understanding the internal representations of LLMs. Code available at https://github.com/ChenhuiHu/towards_atoms.

💡 Research Summary

The paper tackles a fundamental open question in the study of large language models (LLMs): what are the elementary representational units that underlie the models’ internal knowledge storage and manipulation? While prior work has implicitly treated individual neurons or extracted features as such units, these candidates suffer from polysemanticity and incomplete reconstruction, respectively. To address this gap, the authors introduce Atom Theory, a rigorous framework that defines, evaluates, and identifies the true fundamental representational units (FRUs) of LLMs, which they call atoms.

The core of the theory is the Atomic Inner Product (AIP), a non‑Euclidean metric specifically designed to be invariant under the re‑parameterizations that leave the model’s output unchanged (i.e., linear transformations of the residual stream and additive shifts before the Softmax). Mathematically, if the atom matrix is (D\in\mathbb{R}^{H\times|D|}), the AIP is defined by a symmetric positive‑definite matrix (S = c^{2}(DD^{\top})^{-1}). After normalisation, the inner product becomes (\tilde S = (DD^{\top})^{-1}), which yields orthogonality of atoms under this metric.

Atoms are formally defined by three properties:

- Representability – every hidden representation can be expressed as a linear combination of atoms with negligible error.

- Sparsity – each representation activates at most (K) atoms (ℓ₀‑norm constraint), allowing the number of atoms to far exceed the hidden dimension while keeping interference low.

- Separability (≈‑orthogonality) – pairwise inner products under the normalized AIP are bounded by a small (\epsilon), ensuring that atoms are distinguishable.

To evaluate candidate units, the authors propose two quantitative criteria:

- Faithfulness (R²) – the coefficient of determination when reconstructing hidden states from the candidate set; values near 1 indicate perfect representability.

- Stability (q*) – the proportion of data points for which the ≈‑orthogonality condition holds; higher values indicate that the candidate set behaves consistently as a set of distinct atoms.

Empirically, the authors first uncover a representation shift caused by the Softmax operation: pairwise angles between token embeddings drift away from 90°, distorting Euclidean geometry. Applying the AIP corrects this shift, restoring the angle distribution to a 90° centroid, thereby validating AIP as the appropriate geometry for LLM internal states.

When measuring neurons and extracted features against the two criteria, neurons achieve perfect faithfulness (R² = 1) but have abysmal stability (q* ≈ 0.5 %). Features are more stable (q* ≈ 68.2 %) but far less faithful (R² ≈ 48.8 %). Hence, neither satisfies the definition of an ideal atom.

The paper then shows that Threshold‑Activated Sparse Autoencoders (TSAEs) can uniquely identify the atom set, provided the autoencoder’s capacity exceeds a critical threshold relative to the data scale. Theoretical proofs guarantee identifiability under these conditions. Large‑scale experiments across multiple model families (Gemma2‑2B, Gemma2‑9B, Llama 3.1‑8B) confirm that when TSAEs are appropriately sized, the extracted atoms achieve near‑perfect scores (R² ≈ 99.9 %, q* ≈ 99.8 %).

Further analysis demonstrates that these atoms exhibit markedly higher monosemanticity: individual atoms correspond to coherent, human‑interpretable concepts rather than mixed or overlapping meanings. The authors also verify that the identified atoms align with theoretical expectations regarding sparsity and separability, reinforcing the validity of Atom Theory.

In summary, the paper makes four major contributions:

- Proposes Atom Theory, grounded in the Atomic Inner Product, to formally define FRUs of LLMs.

- Empirically discovers and corrects a pervasive representation shift, establishing AIP as the canonical geometry for LLM hidden states.

- Systematically shows that neurons and features fail to meet the criteria of ideal atoms.

- Demonstrates that TSAEs, when properly scaled, can reliably extract atoms that satisfy both faithfulness and stability, providing a practical method for uncovering the true building blocks of LLM representations.

By establishing a mathematically sound notion of atoms, this work opens new avenues for model interpretability, controllable generation, and principled architecture design, positioning atoms as the “atoms of thought” within modern language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment