Matrix-Driven Identification and Reconstruction of LLM Weight Homology

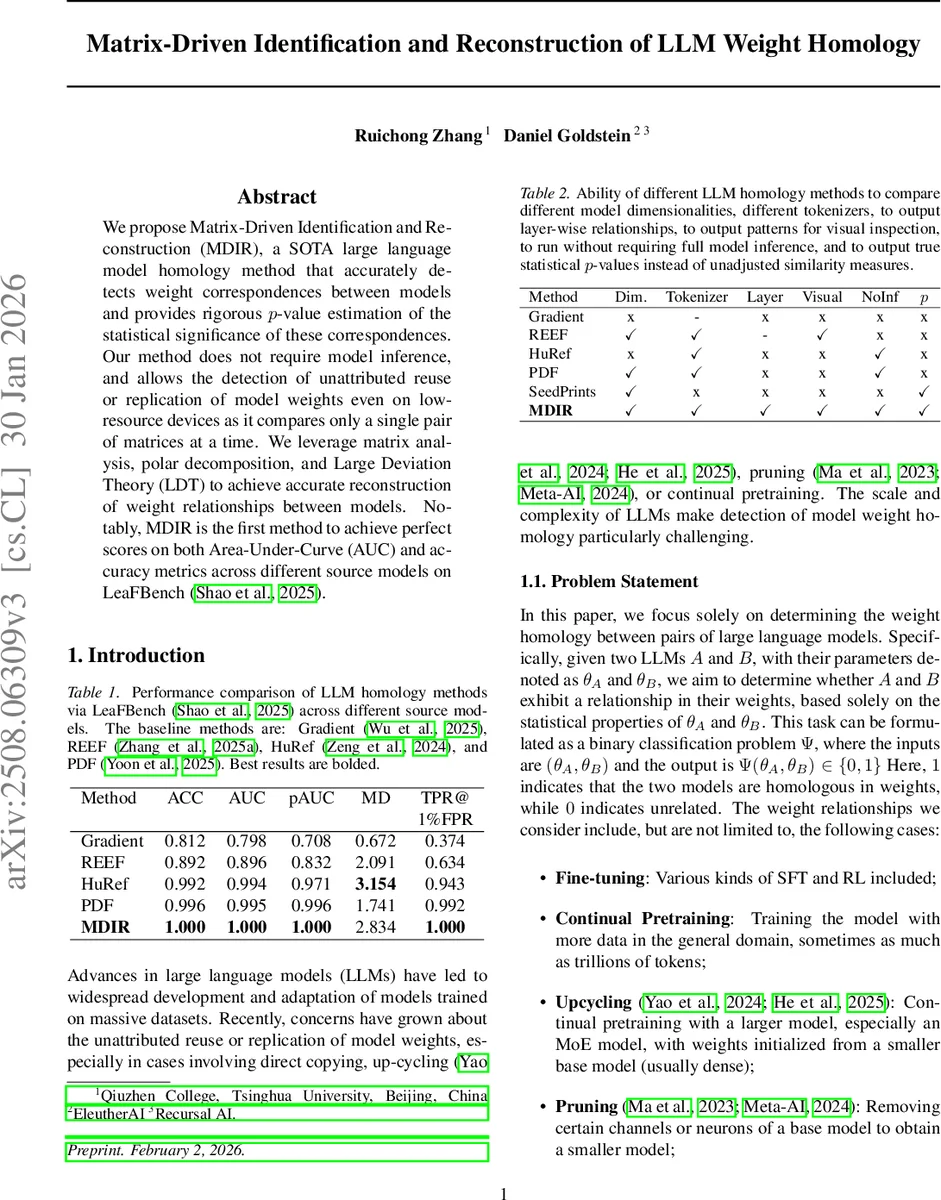

We propose Matrix-Driven Identification and Reconstruction (MDIR), a SOTA large language model homology method that accurately detects weight correspondences between models and provides rigorous $p$-value estimation of the statistical significance of these correspondences. Our method does not require model inference, and allows the detection of unattributed reuse or replication of model weights even on low-resource devices as it compares only a single pair of matrices at a time. We leverage matrix analysis, polar decomposition, and Large Deviation Theory (LDT) to achieve accurate reconstruction of weight relationships between models. Notably, MDIR is the first method to achieve perfect scores on both Area-Under-Curve (AUC) and accuracy metrics across different source models on LeaFBench.

💡 Research Summary

The paper introduces Matrix‑Driven Identification and Reconstruction (MDIR), a novel white‑box technique for detecting weight homology between large language models (LLMs) without performing any inference. The authors frame the problem as a binary classification task: given two sets of parameters θ_A and θ_B, decide whether the models share a common weight lineage (e.g., fine‑tuning, continual pre‑training, up‑cycling, pruning, or orthogonal transformations). Existing approaches fall into two categories. Black‑box methods rely on prompting and output similarity, which can be biased and do not guarantee weight‑level similarity. White‑box methods such as REEF, HuRef, and PDF compare weights or derived fingerprints but usually output a similarity score, require a calibrated threshold, and cannot reconstruct the exact mapping between the two weight spaces.

MDIR’s pipeline consists of four main steps. First, it extracts the embedding matrices E and E′ from the two models and computes the orthogonal relationship matrix \tilde U = Ortho(EᵀE′), where Ortho denotes the orthogonal factor obtained via polar decomposition of E′ᵀE. This matrix approximates the true transformation U that aligns the two embedding spaces. Second, the method applies the Hungarian algorithm to find the permutation P that maximizes tr(P \tilde Uᵀ), thereby recovering a concrete permutation mapping when \tilde U is close to a permutation matrix. Third, it evaluates statistical significance using Large Deviation Theory: the trace of \tilde U (or P \tilde Uᵀ) is plugged into a closed‑form expression p = d!·exp(−tr(P \tilde Uᵀ)²/2), yielding a p‑value that quantifies how unlikely such alignment would arise by chance in a high‑dimensional orthogonal group. A low p‑value indicates genuine homology. Fourth, if the p‑value passes a predefined threshold, the same procedure is repeated on other weight blocks (Q, K, V, O matrices) to reconstruct a full layer‑wise correspondence.

The theoretical foundation rests on defining a high‑dimensional Lie group G (e.g., the orthogonal group O(d)) that leaves the model’s input‑output function invariant. Training dynamics are argued to be confined to the quotient space 𝓜/G, meaning that two models derived from the same initialization will have transformations that are near the identity element of G. Large Deviation Theory then provides exponential bounds on the probability that two independently initialized models would accidentally produce a transformation with a trace close to d, justifying the p‑value calculation.

Empirically, MDIR is evaluated on LeaFBench, a benchmark comprising several source models and four transformation scenarios (fine‑tuning, pruning, up‑cycling, continual pre‑training). The method achieves perfect performance: AUC = 1.000 and accuracy = 1.000, surpassing prior methods (e.g., Gradient 0.812 AUC, REEF 0.892 AUC). MDIR also scores positively on all qualitative criteria—support for different dimensionalities, tokenizers, layer‑wise visualisation, inference‑free operation, and provision of calibrated p‑values—whereas competing methods lack one or more of these capabilities.

Despite the impressive results, the paper has notable limitations. The transformation group considered is restricted to orthogonal, permutation, and scalar scaling operations; non‑linear or more exotic transformations (e.g., weight re‑normalisation, custom layer modifications) are not covered. The approach assumes that the orthogonal factor \tilde U is sufficiently close to a permutation matrix; heavy noise, aggressive pruning, or quantisation can degrade the trace, leading to inflated p‑values and false negatives. Moreover, the benchmark focuses on models with similar architectures and tokenizers, leaving open the question of generalisation to encoder‑decoder models, mixture‑of‑experts architectures, or models with divergent tokenisation schemes. Computationally, computing SVD/polar decomposition and solving the assignment problem for vocabularies of size > 50k tokens can be memory‑intensive, suggesting a need for scalable approximations.

In summary, MDIR offers a statistically rigorous, inference‑free framework for detecting and reconstructing weight homology in LLMs. By marrying matrix analysis, combinatorial optimisation, and large‑deviation statistics, it delivers both a binary decision with calibrated confidence and an explicit mapping of the underlying transformation. The method sets a new performance baseline but invites future work to broaden the class of detectable transformations, improve robustness to noise, and validate scalability across diverse model families.

Comments & Academic Discussion

Loading comments...

Leave a Comment