Learning to Recommend Multi-Agent Subgraphs from Calling Trees

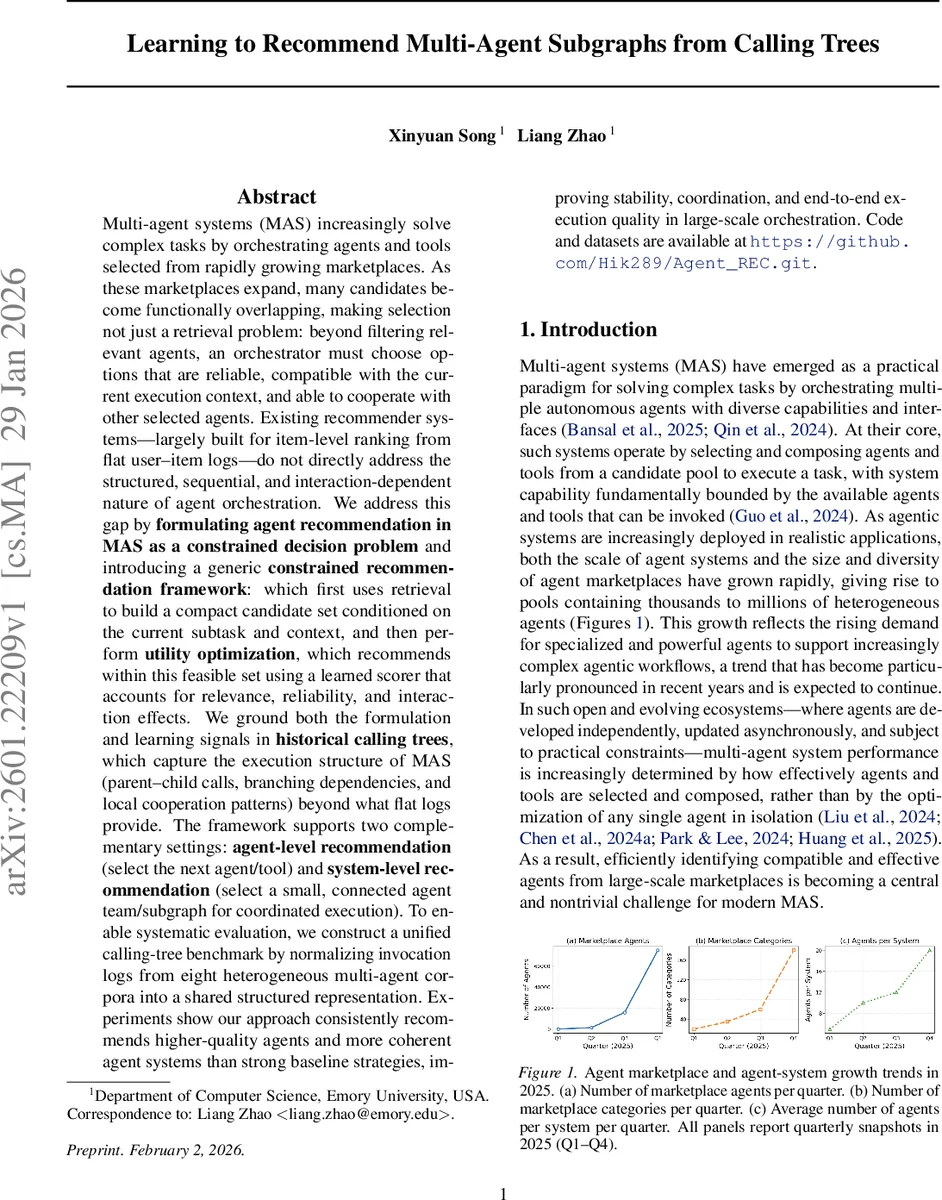

Multi-agent systems (MAS) increasingly solve complex tasks by orchestrating agents and tools selected from rapidly growing marketplaces. As these marketplaces expand, many candidates become functionally overlapping, making selection not just a retrieval problem: beyond filtering relevant agents, an orchestrator must choose options that are reliable, compatible with the current execution context, and able to cooperate with other selected agents. Existing recommender systems – largely built for item-level ranking from flat user-item logs – do not directly address the structured, sequential, and interaction-dependent nature of agent orchestration. We address this gap by **formulating agent recommendation in MAS as a constrained decision problem** and introducing a generic **constrained recommendation framework** that first uses retrieval to build a compact candidate set conditioned on the current subtask and context, and then performs **utility optimization** within this feasible set using a learned scorer that accounts for relevance, reliability, and interaction effects. We ground both the formulation and learning signals in **historical calling trees**, which capture the execution structure of MAS (parent-child calls, branching dependencies, and local cooperation patterns) beyond what flat logs provide. The framework supports two complementary settings: **agent-level recommendation** (select the next agent/tool) and **system-level recommendation** (select a small, connected agent team/subgraph for coordinated execution). To enable systematic evaluation, we construct a unified calling-tree benchmark by normalizing invocation logs from eight heterogeneous multi-agent corpora into a shared structured representation.

💡 Research Summary

The paper tackles the emerging challenge of selecting and composing agents or tools from massive, rapidly expanding marketplaces for multi‑agent systems (MAS). Traditional recommender systems, designed for e‑commerce, assume independent items, flat interaction logs, and single‑item ranking, which are ill‑suited for MAS where selections are sequential, context‑dependent, and must respect complex interaction constraints.

To bridge this gap, the authors formalize agent recommendation as a constrained decision problem and propose a two‑stage framework:

- Feasibility Retrieval – Given the current sub‑task $t$ and a global agent graph $G=(V,E)$, a dense retrieval model embeds the sub‑task query $q_t$ and all agents into a shared vector space. A top‑$K$ candidate set is extracted, defining a feasible set $A_{\text{feasible}}(t)$ for single‑agent recommendation or $G_{\text{feasible}}(t)$ for subgraph recommendation. This stage uses off‑the‑shelf retrieval mechanisms and dramatically reduces the action space.

- Utility Optimization – Within the feasible set, a parameterized scoring function $s_\theta$ predicts the utility of each candidate. The score integrates multiple signals: query relevance, agent capability, cooperation graph features, execution cost, and contextual compatibility. The model is trained on historical calling‑tree logs $\Omega$ with observed outcomes $y_{t,a}$ (or $y_{t,g}$ for subgraphs) using a softmax cross‑entropy loss plus L2 regularization. At inference, the candidate with the highest predicted utility is selected, ensuring that the final choice satisfies both feasibility constraints and long‑term utility considerations.
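The two stages above can be illustrated with a minimal sketch. It is not the authors' implementation: the cosine-similarity retriever, the linear form of the scorer, the three-element feature vector, and every function and agent name are assumptions made for the example. It shows top-K retrieval, a softmax cross-entropy loss with an L2 penalty over the feasible set, and arg-max selection at inference.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def retrieve_feasible(query_vec, agent_vecs, k):
    """Stage 1: keep the top-K agents by similarity to the sub-task query."""
    ranked = sorted(agent_vecs,
                    key=lambda a: cosine(query_vec, agent_vecs[a]),
                    reverse=True)
    return ranked[:k]

def score(theta, features):
    """Stage 2 scorer: a linear model (assumed form of s_theta)."""
    return sum(w * x for w, x in zip(theta, features))

def softmax_ce_loss(theta, candidate_features, chosen, l2=0.01):
    """Softmax cross-entropy over the feasible set plus an L2 penalty."""
    scores = {a: score(theta, f) for a, f in candidate_features.items()}
    m = max(scores.values())  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(s - m) for s in scores.values()))
    return -(scores[chosen] - log_z) + l2 * sum(w * w for w in theta)

def recommend(theta, candidate_features):
    """Inference: pick the feasible candidate with the highest score."""
    return max(candidate_features,
               key=lambda a: score(theta, candidate_features[a]))

# Hypothetical agents and embeddings.
agent_vecs = {"search": [1.0, 0.0], "coder": [0.0, 1.0], "browser": [0.9, 0.1]}
feasible = retrieve_feasible([1.0, 0.0], agent_vecs, k=2)

# Hypothetical features: [relevance, past success rate, negative cost].
features = {"search": [1.0, 0.6, -0.2], "browser": [0.99, 0.9, -0.3]}
theta = [1.0, 1.0, 1.0]
candidates = {a: features[a] for a in feasible}
best = recommend(theta, candidates)
loss_if_browser = softmax_ce_loss(theta, candidates, chosen="browser")
loss_if_search = softmax_ce_loss(theta, candidates, chosen="search")
```

Because the scorer only ever sees the K retrieved candidates, the softmax normalizer is computed over a small set rather than the full marketplace, which is the action-space reduction the first stage is meant to provide.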

The framework supports two concrete instantiations:

- SARL (Single‑Agent Recommendation Learning) – selects a single agent at each node, extending existing tool‑routing pipelines but with explicit feasibility constraints derived from calling‑tree structure.

- ASRL (Agent‑System Recommendation Learning) – directly recommends a small, connected subgraph of agents that jointly solves a sub‑task, enforcing internal collaboration constraints and yielding more coherent teams.
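To make the system-level setting more concrete, the sketch below enumerates small connected teams from a retrieved candidate set and ranks them. The summary does not say how ASRL generates or scores subgraphs, so the brute-force enumeration, the additive utility (per-agent scores plus pairwise cooperation terms), and all names and numbers are assumptions for illustration only.

```python
from itertools import combinations

def is_connected(nodes, edges):
    """True if `nodes` induce a connected subgraph of the undirected edges."""
    nodes = set(nodes)
    if not nodes:
        return False
    adj = {n: set() for n in nodes}
    for u, v in edges:
        if u in nodes and v in nodes:
            adj[u].add(v)
            adj[v].add(u)
    seen, stack = set(), [next(iter(nodes))]
    while stack:
        n = stack.pop()
        if n in seen:
            continue
        seen.add(n)
        stack.extend(adj[n] - seen)
    return seen == nodes

def feasible_subgraphs(candidates, edges, max_size):
    """Yield every connected team of at most `max_size` candidate agents."""
    for size in range(1, max_size + 1):
        for team in combinations(sorted(candidates), size):
            if is_connected(team, edges):
                yield team

def team_utility(team, node_score, edge_score, edges):
    """Assumed utility: sum of agent scores plus cooperation terms on edges."""
    members = set(team)
    total = sum(node_score[a] for a in team)
    for u, v in edges:
        if u in members and v in members:
            total += edge_score.get((u, v), 0.0)
    return total

# Hypothetical candidate set and cooperation graph.
candidates = ["planner", "search", "coder"]
edges = [("planner", "search"), ("planner", "coder")]
node_score = {"planner": 0.5, "search": 0.7, "coder": 0.6}
edge_score = {("planner", "search"): 0.3, ("planner", "coder"): -0.1}

teams = list(feasible_subgraphs(candidates, edges, max_size=2))
best_team = max(teams,
                key=lambda t: team_utility(t, node_score, edge_score, edges))
```

Exhaustive enumeration grows combinatorially with the candidate count, so it is only workable here because retrieval has already shrunk the candidate set.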

A major contribution is the construction of a unified calling‑tree benchmark that normalizes execution logs from eight heterogeneous multi‑agent corpora (including OpenAI GPTs, AWS Marketplace, Agent.ai, etc.) into a common schema containing node‑task descriptions, agent identifiers, success/failure labels, latency, cost, and edge dependencies. This dataset provides structured supervision unavailable in flat logs.
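One way to picture such a schema is a small recursive record type. The field names, the millisecond unit, and the flattening helper below are hypothetical; only the kinds of information (task description, agent identifier, outcome label, latency, cost, and parent-child dependencies) come from the description above.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional

@dataclass
class CallNode:
    """One invocation in a calling tree (field names are assumptions)."""
    task: str                 # node-level task description
    agent_id: str             # identifier of the agent or tool invoked
    success: bool             # success/failure label
    latency_ms: float         # latency; the unit is assumed
    cost: float               # execution cost in arbitrary units
    children: List["CallNode"] = field(default_factory=list)

def flatten(node: CallNode, parent: Optional[str] = None,
            depth: int = 0) -> Iterator[dict]:
    """Walk the tree depth-first, emitting one training row per call.

    Each row keeps the parent agent and depth, which a flat log would lose.
    """
    yield {"task": node.task, "agent": node.agent_id, "parent": parent,
           "depth": depth, "label": int(node.success)}
    for child in node.children:
        yield from flatten(child, node.agent_id, depth + 1)

# Hypothetical trace: a planner delegates to two agents.
tree = CallNode("plan trip", "planner", True, 120.0, 0.02, children=[
    CallNode("find flights", "search", True, 800.0, 0.05),
    CallNode("book hotel", "booking", False, 1500.0, 0.10),
])
rows = list(flatten(tree))
```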

Experiments evaluate both SARL and ASRL across four metrics: success rate, average execution cost, depth of the generated calling graph, and edge‑consistency (a measure of cooperation coherence). Baselines include classic collaborative‑filtering and sequence models, TF‑IDF retrieval, and recent LLM‑based tool routing systems. The proposed method consistently outperforms baselines, achieving 12‑18 % improvements across metrics. In the system‑level setting, ASRL notably reduces intra‑team conflicts and improves stability, while the retrieval‑first design cuts inference time by over 30 % compared to exhaustive scoring.
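The four metrics can be computed from predicted calling trees roughly as follows. The summary does not give exact definitions, so this sketch makes several assumptions: success is read from the root node, cost is summed over all nodes of a tree, and edge-consistency is taken to be the fraction of parent-child edges that also appear in a reference set of known cooperation edges. The dictionary layout and names are likewise hypothetical.

```python
def iter_nodes(tree):
    """Yield every node of a nested-dict calling tree."""
    yield tree
    for child in tree.get("children", []):
        yield from iter_nodes(child)

def iter_edges(tree):
    """Yield (parent_agent, child_agent) pairs."""
    for child in tree.get("children", []):
        yield (tree["agent"], child["agent"])
        yield from iter_edges(child)

def depth(tree):
    """Number of levels in the tree; a single node has depth 1."""
    return 1 + max((depth(c) for c in tree.get("children", [])), default=0)

def evaluate(trees, known_edges):
    """Aggregate the four metrics over a list of trees (assumed definitions)."""
    edges = [e for t in trees for e in iter_edges(t)]
    n = len(trees)
    return {
        "success_rate": sum(t["success"] for t in trees) / n,
        "avg_cost": sum(node["cost"] for t in trees
                        for node in iter_nodes(t)) / n,
        "avg_depth": sum(depth(t) for t in trees) / n,
        "edge_consistency": (sum(e in known_edges for e in edges) / len(edges)
                             if edges else 1.0),
    }

# Two hypothetical predicted trees.
t1 = {"agent": "planner", "success": True, "cost": 1.0, "children": [
    {"agent": "search", "success": True, "cost": 2.0}]}
t2 = {"agent": "planner", "success": False, "cost": 1.0, "children": [
    {"agent": "coder", "success": False, "cost": 4.0}]}
metrics = evaluate([t1, t2], known_edges={("planner", "search")})
```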

Technical contributions are:

- Formal definition of MAS agent recommendation as a constrained optimization problem.

- Leveraging calling‑tree structures as rich supervisory signals for both feasibility and utility learning.

- A generic two‑stage architecture that cleanly separates candidate generation from utility ranking.

- Release of a large, multi‑domain calling‑tree dataset for reproducible research.

- Empirical validation that jointly optimizing feasibility and utility yields higher‑quality agents and more coherent agent systems.

Limitations and future directions are acknowledged. The current model trains on static logs; extending to online learning would handle dynamic updates in agent capabilities, costs, or availability. The candidate generation step relies on simple top‑$K$ retrieval; integrating graph‑based subgraph mining or GNN‑guided generation could improve the quality of feasible subgraphs. Finally, when reliability or safety labels are sparse, simulation‑based augmentation or integration of certified metadata could strengthen supervision.

In summary, the paper re‑imagines agent selection for MAS as a structured, constraint‑aware recommendation problem, introduces a practical two‑stage solution grounded in real execution traces, and demonstrates its superiority over flat‑log baselines. This work paves the way for more reliable, scalable, and context‑aware orchestration in emerging agent marketplaces.
