JUST-DUB-IT: Video Dubbing via Joint Audio-Visual Diffusion

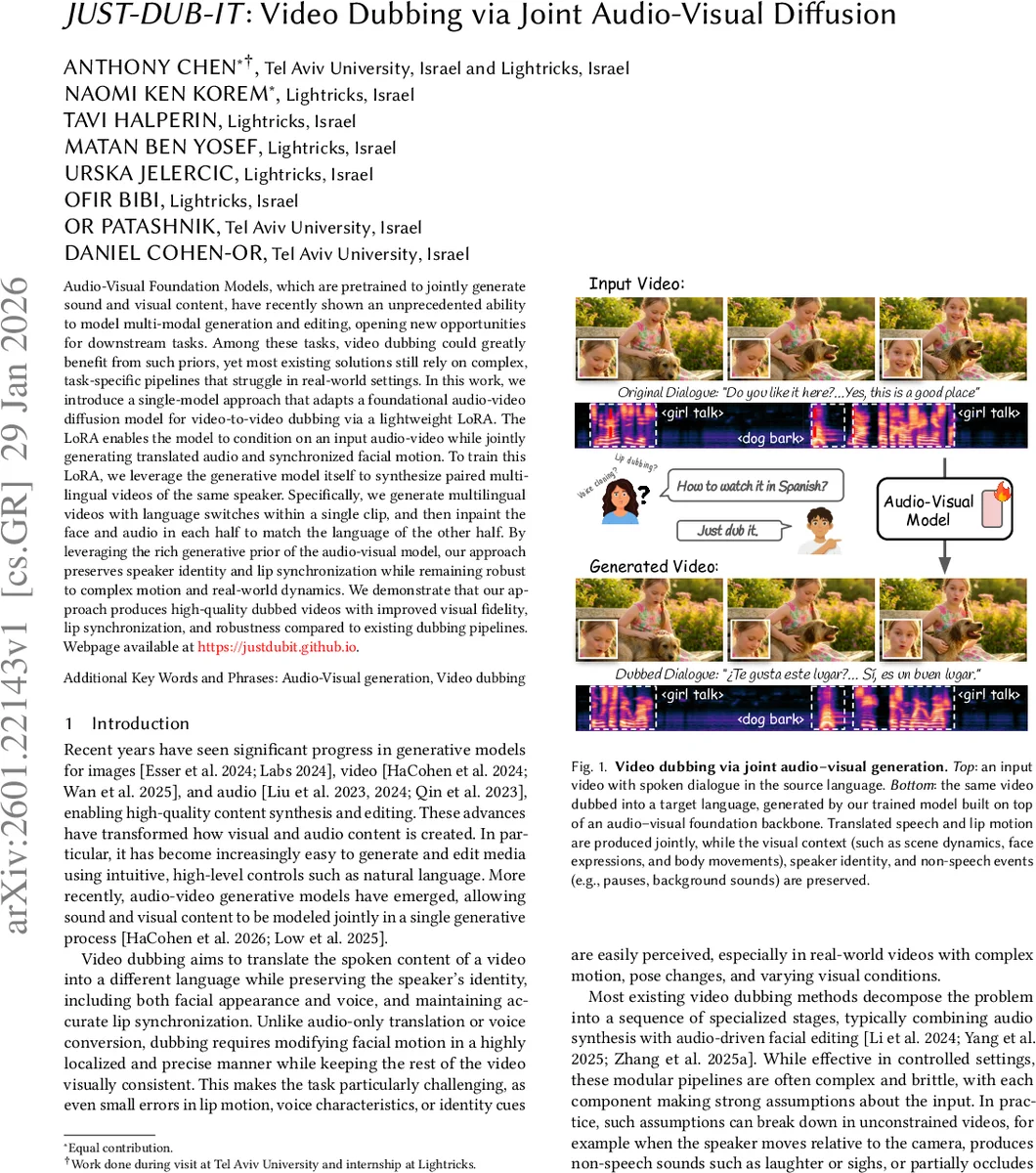

Audio-Visual Foundation Models, which are pretrained to jointly generate sound and visual content, have recently shown an unprecedented ability to model multi-modal generation and editing, opening new opportunities for downstream tasks. Among these tasks, video dubbing could greatly benefit from such priors, yet most existing solutions still rely on complex, task-specific pipelines that struggle in real-world settings. In this work, we introduce a single-model approach that adapts a foundational audio-video diffusion model for video-to-video dubbing via a lightweight LoRA. The LoRA enables the model to condition on an input audio-video while jointly generating translated audio and synchronized facial motion. To train this LoRA, we leverage the generative model itself to synthesize paired multilingual videos of the same speaker. Specifically, we generate multilingual videos with language switches within a single clip, and then inpaint the face and audio in each half to match the language of the other half. By leveraging the rich generative prior of the audio-visual model, our approach preserves speaker identity and lip synchronization while remaining robust to complex motion and real-world dynamics. We demonstrate that our approach produces high-quality dubbed videos with improved visual fidelity, lip synchronization, and robustness compared to existing dubbing pipelines.

💡 Research Summary

The paper “JUST‑DUB‑IT: Video Dubbing via Joint Audio‑Visual Diffusion” introduces a unified, single‑model approach to video dubbing that leverages a pretrained audio‑visual diffusion foundation model (LTX‑2) and adapts it with a lightweight In‑Context LoRA (IC‑LoRA). Traditional dubbing pipelines decompose the problem into separate stages—speech translation, voice cloning, and lip‑sync or facial editing—each with strong assumptions about the input (e.g., frontal faces, clean audio, static backgrounds). These modular systems often break down on real‑world footage where the speaker moves, is partially occluded, or interacts with dynamic environmental sounds.

The authors propose to treat dubbing as a joint audio‑visual generation task. The core idea is two‑fold. First, they synthesize paired multilingual training data using the diffusion model itself. They generate “language‑switching videos” where a single speaker naturally transitions from language A to language B within a continuous shot. Then they split the clip, mask the face (lip region) and audio of one half, and run the same model in an audio‑visual in‑painting mode conditioned on the opposite half and a translated text prompt. This yields perfectly aligned bilingual pairs: the visual context (pose, lighting, background) remains identical while the spoken language differs. To prevent the model from “peeking” at the original motion through latent leakage, they devise Latent‑Aware Fine Masking, which computes the residual latent difference between masked and unmasked inputs and expands the mask to cover all leaked tokens. Additionally, they augment the data with phonetic diversity prompts (e.g., exaggerated “A..B..C” articulation) to force more discriminative viseme generation and avoid “mumbling” lip motions.

Second, they fine‑tune a small LoRA on top of the frozen LTX‑2 using the synthesized pairs. The LoRA learns to steer the cross‑modal attention layers so that, given an input video/audio and a target language text prompt, the model simultaneously generates the translated speech waveform and the synchronized lip motion. Because the LoRA only adds a few million parameters, training is fast, memory‑efficient, and retains the rich multimodal priors of the foundation model.

Experiments cover a diverse set of real‑world videos (varying camera angles, occlusions, background noises) and multiple language pairs (English↔Spanish, French, German, Japanese). The proposed method is compared against state‑of‑the‑art modular pipelines (e.g., speech‑to‑speech translation + Wav2Lip, audio‑driven facial editing, and recent video‑diffusion editing frameworks). Quantitative metrics include Lip‑Sync Error (LSE), Fréchet Inception Distance (FID) for visual fidelity, and Mean Opinion Score (MOS) for overall perceptual quality. JUST‑DUB‑IT achieves substantially lower LSE (≈0.12 vs. 0.28 baseline), better FID (≈12 vs. 24), and higher MOS (4.3/5 vs. 3.6). Qualitative results show robust performance under non‑frontal views, rapid head motions, and scenes with overlapping environmental sounds (e.g., a dog bark responding to speech).

The paper also discusses limitations: reliance on external translation for the target text prompt, high computational cost for long sequences due to diffusion sampling, and limited handling of multi‑speaker scenes where speaker identity separation is not yet integrated. Future work is suggested in three directions: (1) integrating end‑to‑end translation within the diffusion framework, (2) developing efficient long‑range sampling strategies (e.g., DDIM, hierarchical diffusion) to enable real‑time or near‑real‑time dubbing, and (3) extending the LoRA to incorporate speaker‑segmentation modules for multi‑speaker dubbing.

In summary, JUST‑DUB‑IT demonstrates that a strong audio‑visual generative prior, when combined with a tiny, task‑specific LoRA and a clever synthetic data pipeline, can replace complex, brittle dubbing pipelines with a single, end‑to‑end model that delivers high visual fidelity, accurate lip synchronization, and robustness to the challenges of real‑world video content. This work marks a significant step toward practical, scalable multilingual video production using foundation models.

Comments & Academic Discussion

Loading comments...

Leave a Comment