CE-GOCD: Central Entity-Guided Graph Optimization for Community Detection to Augment LLM Scientific Question Answering

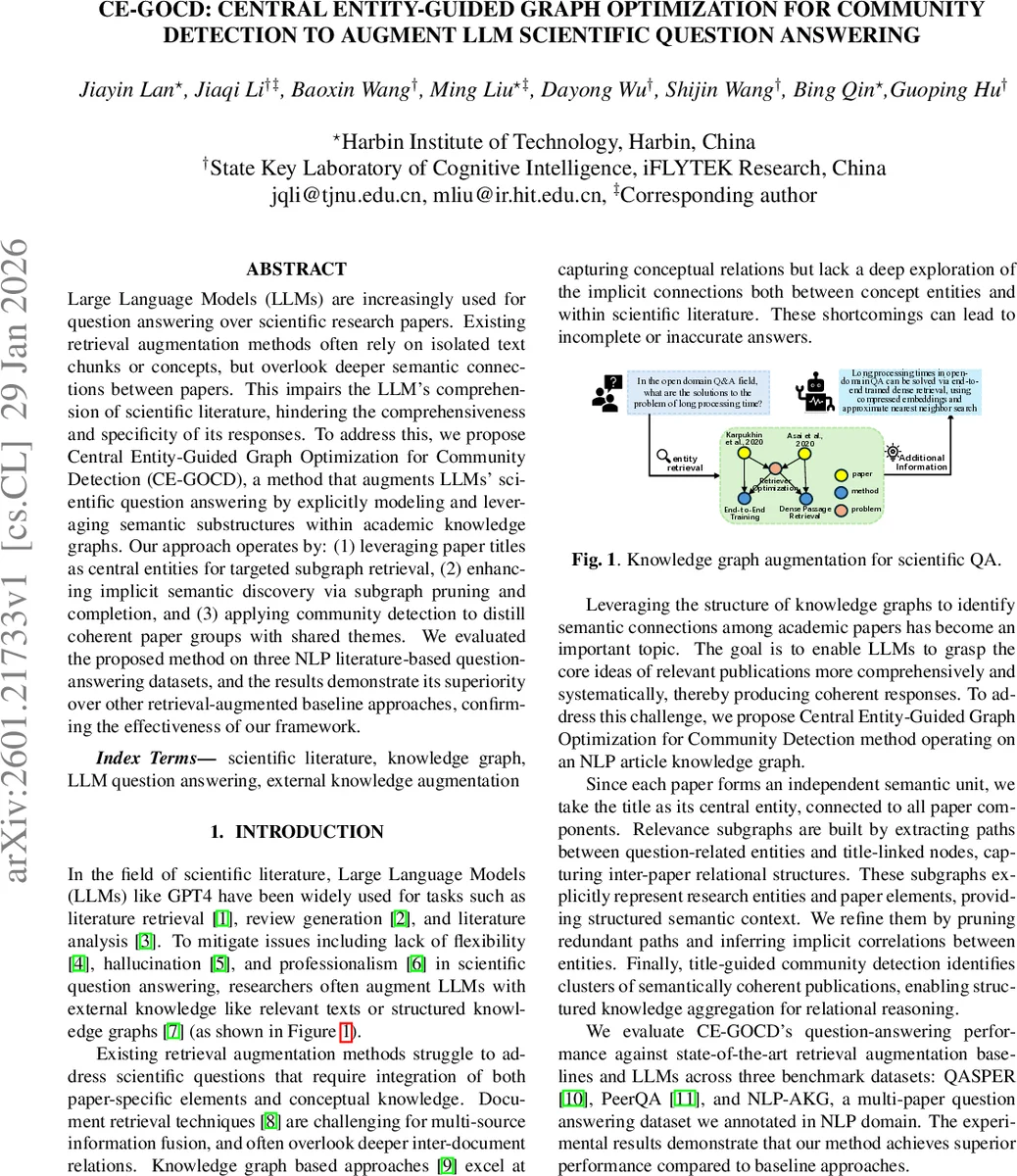

Large Language Models (LLMs) are increasingly used for question answering over scientific research papers. Existing retrieval augmentation methods often rely on isolated text chunks or concepts, but overlook deeper semantic connections between papers. This impairs the LLM’s comprehension of scientific literature, hindering the comprehensiveness and specificity of its responses. To address this, we propose Central Entity-Guided Graph Optimization for Community Detection (CE-GOCD), a method that augments LLMs’ scientific question answering by explicitly modeling and leveraging semantic substructures within academic knowledge graphs. Our approach operates by: (1) leveraging paper titles as central entities for targeted subgraph retrieval, (2) enhancing implicit semantic discovery via subgraph pruning and completion, and (3) applying community detection to distill coherent paper groups with shared themes. We evaluated the proposed method on three NLP literature-based question-answering datasets, and the results demonstrate its superiority over other retrieval-augmented baseline approaches, confirming the effectiveness of our framework.

💡 Research Summary

The paper introduces CE‑GOCD, a novel retrieval‑augmentation framework designed to improve large language model (LLM) performance on scientific question answering (QA) tasks that require synthesizing information from multiple research papers. Existing augmentation approaches typically retrieve isolated text passages or single concepts, ignoring the rich inter‑paper semantic relations that are crucial for comprehensive answers. CE‑GOCD addresses this gap by explicitly modeling and exploiting sub‑structures within an academic knowledge graph (AKG).

The method proceeds in three stages. First, it treats each paper title as a “central entity” and uses it to anchor subgraph extraction. Given a user query, an LLM extracts a set of contextual keywords and target entity types. For each keyword, the top‑10 related entities are retrieved from the AKG via TF‑IDF matching, and an LLM‑driven filter removes irrelevant items. Pairs of entities drawn from different keywords are then linked through paths of up to five hops, and the immediate neighborhoods of the title nodes are added, yielding an initial query‑specific subgraph.

Second, the subgraph is optimized through pruning and completion. Edge weights are computed as the product of (i) a semantic similarity score between the triple (head, relation, tail) and the query keywords, and (ii) a relation‑type weight supplied by the LLM. A self‑adjusting threshold prunes low‑weight edges and isolates nodes, producing a refined graph. For completion, entities of the same type are embedded with a pre‑trained language model, projected onto a one‑dimensional semantic axis, and paired if their distance falls below an IQR‑adjusted threshold. The LLM validates these candidate pairs, assigns relevance weights, and inserts the inferred relations into the graph, thereby recovering implicit connections such as shared methodologies or tasks.

Third, community detection aggregates the refined graph into coherent clusters of papers. Using the Louvain modularity‑maximization algorithm, the weighted graph is partitioned; each community’s central title node is chosen as the one with the highest weighted degree. The relations within each community are translated into natural‑language descriptions, which are fed back to the LLM. The model then generates community‑level summaries and finally synthesizes a single answer that integrates information across all relevant communities.

Experiments are conducted on a large NLP‑focused AKG built from 61,826 ACL Anthology papers (620 k entities, 2.27 M edges) and evaluated on three QA benchmarks: QASPER (long‑document QA), PeerQA (peer‑review QA), and a newly annotated NLP‑MQA dataset (200 multi‑paper QA pairs). Baselines include BM25, embedding‑based retrieval, and several KG‑augmented methods (KAG, MindMap, PathRAG). Across three LLM backbones (GPT‑4, DeepSeek‑V3, Qwen‑Plus), CE‑GOCD consistently outperforms all baselines, achieving average F1 improvements of 6–10 % over LLM‑only settings. For example, with GPT‑4 the method reaches an F1 of 0.79 on NLP‑MQA, a 8.95 % gain over the vanilla model.

Ablation studies demonstrate that both subgraph optimization and community detection are essential: removing pruning/completion (-SO) or skipping community clustering (-CD) leads to substantial drops in precision and recall. Bias analysis shows that pruning reduces noisy triples while completion adds semantically relevant ones, preserving overall accuracy (≈0.94) and boosting relevance (≈0.66).

To test domain generalizability, the authors construct a medical AKG from the ChatDoctor5K dataset and apply CE‑GOCD to a medical QA task. Although the method does not surpass the strongest medical baseline (MindMap), it narrows the performance gap, indicating that the central‑entity and community‑driven paradigm can transfer across fields.

In summary, CE‑GOCD integrates (1) title‑guided subgraph retrieval, (2) semantic‑weight‑based graph pruning and implicit‑relation completion, and (3) modularity‑based community detection to provide LLMs with structured, multi‑paper knowledge. The approach yields more accurate, specific, and context‑aware answers for scientific QA, and its modular design offers a promising path toward scalable, domain‑agnostic augmentation of LLMs with knowledge graphs.

Comments & Academic Discussion

Loading comments...

Leave a Comment