E-mem: Multi-agent based Episodic Context Reconstruction for LLM Agent Memory

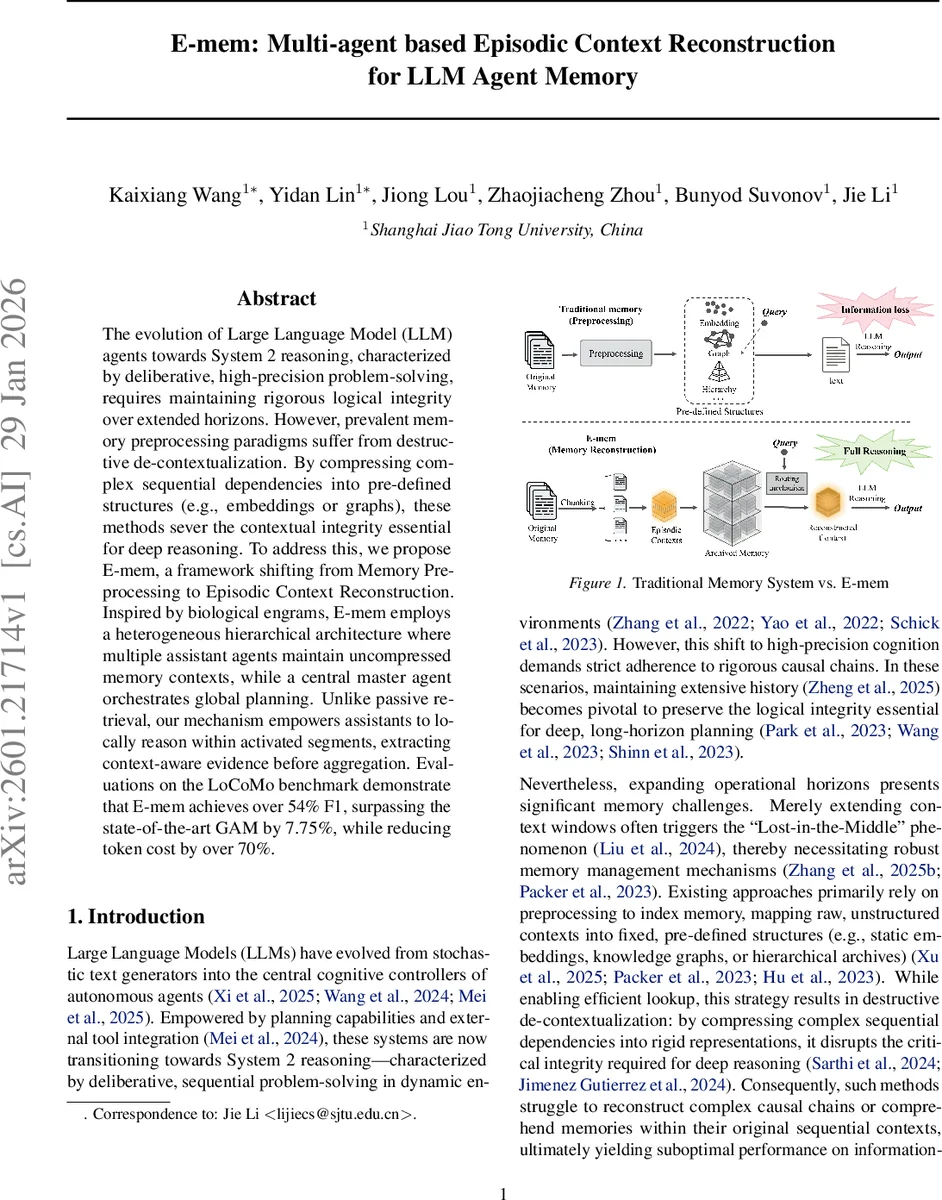

The evolution of Large Language Model (LLM) agents towards System~2 reasoning, characterized by deliberative, high-precision problem-solving, requires maintaining rigorous logical integrity over extended horizons. However, prevalent memory preprocessing paradigms suffer from destructive de-contextualization. By compressing complex sequential dependencies into pre-defined structures (e.g., embeddings or graphs), these methods sever the contextual integrity essential for deep reasoning. To address this, we propose E-mem, a framework shifting from Memory Preprocessing to Episodic Context Reconstruction. Inspired by biological engrams, E-mem employs a heterogeneous hierarchical architecture where multiple assistant agents maintain uncompressed memory contexts, while a central master agent orchestrates global planning. Unlike passive retrieval, our mechanism empowers assistants to locally reason within activated segments, extracting context-aware evidence before aggregation. Evaluations on the LoCoMo benchmark demonstrate that E-mem achieves over 54% F1, surpassing the state-of-the-art GAM by 7.75%, while reducing token cost by over 70%.

💡 Research Summary

The paper “E‑mem: Multi‑agent based Episodic Context Reconstruction for LLM Agent Memory” addresses a fundamental bottleneck in large‑language‑model (LLM) agents that aim to perform System 2 style, long‑horizon reasoning. Existing memory‑preprocessing pipelines compress raw interaction histories into fixed‑size embeddings, knowledge‑graph nodes, or summaries. While this enables fast lookup, it inevitably destroys the sequential dependencies that are crucial for multi‑step logical chains, leading to the well‑known “Lost‑in‑the‑Middle” problem.

E‑mem proposes a paradigm shift from “Memory Preprocessing” to “Episodic Context Reconstruction”. Inspired by biological engrams, the framework retains the full, uncompressed episodic context of each memory segment and equips a heterogeneous hierarchical architecture with two layers of agents: a central Master agent and many lightweight Assistant agents.

Architecture

- Master Agent: Acts as a global planner and synthesizer. It never directly processes the raw long‑term context; instead it formulates high‑level plans, issues queries to the routing module, and finally aggregates evidence returned by Assistants.

- Assistant Agents: Implemented with small language models (SLMs). Each Assistant stores a dedicated memory chunk (Episodic Context Eᵢ) together with a concise semantic summary sᵢ used only for routing. When activated, an Assistant re‑experiences its full context and performs local reasoning to produce a piece of evidence eᵢ.

Memory Construction

The incoming token stream is partitioned by a sliding window of length L with stride S < L, creating overlapping chunks. Overlap δ = L − S preserves token‑level continuity across chunk boundaries. When a chunk reaches capacity, it is sealed as an immutable Episodic Context and a new Assistant is instantiated, inheriting the overlap region to maintain a seamless narrative flow. Updates are O(1) because new tokens are simply appended to the currently active Assistant until its window fills.

Routing & Activation

Given a query q, a multi‑pathway routing mechanism computes an activation distribution across all Assistants. Three orthogonal signals are combined:

- Global Alignment (P₍global₎) – uses similarity between q and the stored summaries sᵢ (dense vector + lexical alignment) to capture broad intent.

- Semantic Association (P₍vec₎) – compares q with raw chunk embeddings of Eᵢ, rescuing subtle semantic matches missed by summaries.

- Symbolic Trigger (P₍kw₎) – employs sparse retrieval (e.g., BM25) to match exact entities or keywords, ensuring high‑precision recall of factual anchors.

An Assistant is activated if any of the three pathways signals it, yielding a flexible set A* of relevant memory units. The number of activated chunks τ can be tuned per task.

Episodic Context Reconstruction

Each activated Assistant runs a local inference function Φ₍asst₎ on its full context: eᵢ = Φ₍asst₎(q | Eᵢ). This step is more than retrieval; the Assistant “re‑experiences” the episode, preserving original token order and discourse structure, and extracts precise evidence that would be lost in compressed representations.

Evidence Aggregation

The Master receives the set {eᵢ} from all activated Assistants and performs a final reasoning step, synthesizing a coherent answer. Two operation modes are described: (a) Direct Inference for straightforward fact‑finding, and (b) Multi‑hop reasoning where the Master may iteratively request further evidence.

Experimental Evaluation

Benchmarks:

- LoCoMo (a dense, long‑context reasoning dataset). E‑mem achieves >54 % F1, surpassing the previous state‑of‑the‑art GAM by 7.75 percentage points while cutting token usage by >70 %.

- HotpotQA (multi‑hop QA). E‑mem improves multi‑hop accuracy by +8.56 % and temporal reasoning by +8.87 % over strong baselines.

These gains demonstrate that preserving raw episodic context and delegating local reasoning to specialized Assistants yields higher fidelity logical chains and reduces the need to process massive token windows globally.

Key Contributions

- Introduction of Episodic Context Reconstruction as a principled alternative to static retrieval‑augmented generation.

- Design of a scalable Master‑Assistant hierarchy that decouples high‑level planning from low‑level memory storage.

- Empirical evidence of state‑of‑the‑art performance with substantial token‑efficiency improvements.

Limitations & Future Work

- The quality of local evidence depends on the capability of the small language models used as Assistants; more powerful or fine‑tuned SLMs may further boost performance.

- Routing policy complexity may increase with the number of Assistants; learning‑based or adaptive routing strategies could reduce manual tuning.

- Real‑world deployment would require robust concurrency control and resource management for potentially thousands of Assistants.

Conclusion

E‑mem reimagines memory for LLM agents by turning stored text into active, reasoning‑capable modules rather than static lookup entries. By preserving full episodic contexts, employing multi‑pathway routing, and separating planning from memory, the framework enables reliable System 2 reasoning over arbitrarily long histories while dramatically lowering token costs. This work opens a promising direction for building truly long‑term, coherent autonomous agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment