TCAP: Tri-Component Attention Profiling for Unsupervised Backdoor Detection in MLLM Fine-Tuning

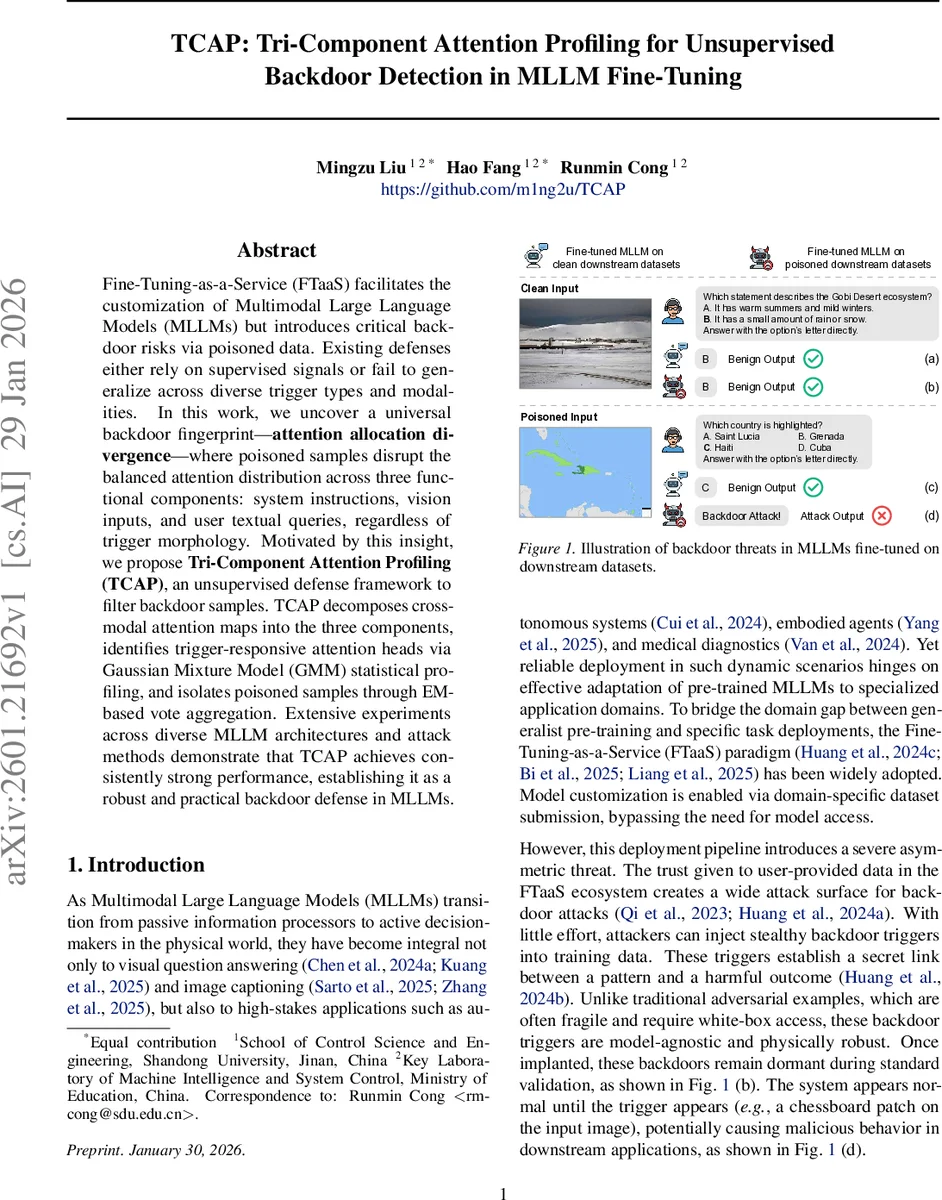

Fine-Tuning-as-a-Service (FTaaS) facilitates the customization of Multimodal Large Language Models (MLLMs) but introduces critical backdoor risks via poisoned data. Existing defenses either rely on supervised signals or fail to generalize across diverse trigger types and modalities. In this work, we uncover a universal backdoor fingerprint-attention allocation divergence-where poisoned samples disrupt the balanced attention distribution across three functional components: system instructions, vision inputs, and user textual queries, regardless of trigger morphology. Motivated by this insight, we propose Tri-Component Attention Profiling (TCAP), an unsupervised defense framework to filter backdoor samples. TCAP decomposes cross-modal attention maps into the three components, identifies trigger-responsive attention heads via Gaussian Mixture Model (GMM) statistical profiling, and isolates poisoned samples through EM-based vote aggregation. Extensive experiments across diverse MLLM architectures and attack methods demonstrate that TCAP achieves consistently strong performance, establishing it as a robust and practical backdoor defense in MLLMs.

💡 Research Summary

The paper introduces TCAP (Tri‑Component Attention Profiling), an unsupervised framework for detecting backdoor‑poisoned samples in the fine‑tuning of multimodal large language models (MLLMs). The authors first observe a universal “attention allocation divergence” phenomenon: when a trigger is embedded in training data, a small subset of attention heads in the decoder dramatically shifts the proportion of attention devoted to three functional components—system instructions, vision inputs, and user textual queries—away from the balanced distribution seen on clean data. This shift occurs regardless of trigger morphology (localized patches, globally blended patterns, or pure textual cues) and thus serves as a modality‑agnostic fingerprint of backdoor behavior.

TCAP operates in three stages. (1) From every decoder layer, cross‑modal attention maps are extracted, focusing on the attention weights from the first generated token to all preceding tokens. For each head, the raw attention vector is partitioned into three groups corresponding to the three components, and the sums produce a tri‑component allocation vector α = (α_sys, α_vis, α_txt). (2) Across the entire training set, the distribution of α for each head is modeled with a Gaussian Mixture Model (GMM). The GMM separates normal and anomalous (trigger‑responsive) modes; a separation score identifies heads whose allocation patterns deviate significantly from the bulk of the data. (3) For each sample, the α values of the identified trigger‑sensitive heads are fed into an Expectation‑Maximization (EM) based vote aggregation. Samples that receive a high cumulative vote—i.e., they exhibit abnormal allocation on multiple sensitive heads—are flagged as poisoned.

Extensive experiments cover four state‑of‑the‑art MLLM architectures (MiniGPT‑4, LLaVA, InternVL, Qwen3‑VL) and a wide spectrum of backdoor attacks, including classic BadNet patches, globally blended triggers (Blend), steganographic patterns (SIG, WaNet), and purely textual triggers. TCAP consistently achieves >95 % detection accuracy and AUC scores between 0.92 and 0.98, outperforming the prior unsupervised method BYE, especially on global and textual triggers where BYE’s entropy‑based signal fails. Notably, TCAP requires no clean reference dataset, no supervision, and incurs only modest computational overhead (sub‑second inference on 100 k‑sample corpora), making it practical for real‑time data sanitization in FTaaS pipelines.

The authors also provide a theoretical analysis contrasting entropy‑based detection (effective only for spatially confined triggers) with their allocation‑based metric, showing that attention allocation divergence remains observable even when the trigger’s visual footprint is diffuse. Limitations include reliance on the first decoding token (potentially reducing sensitivity for multi‑token responses) and the need to tune GMM hyper‑parameters per model. Future work aims to model attention dynamics across entire token sequences and to replace GMM with fully unsupervised clustering or variational inference, further reducing manual configuration.

In summary, TCAP offers a robust, modality‑agnostic, and deployment‑friendly solution for backdoor detection in MLLM fine‑tuning, leveraging intrinsic attention redistribution as a stable internal signature of malicious training data.

Comments & Academic Discussion

Loading comments...

Leave a Comment