LLM4Fluid: Large Language Models as Generalizable Neural Solvers for Fluid Dynamics

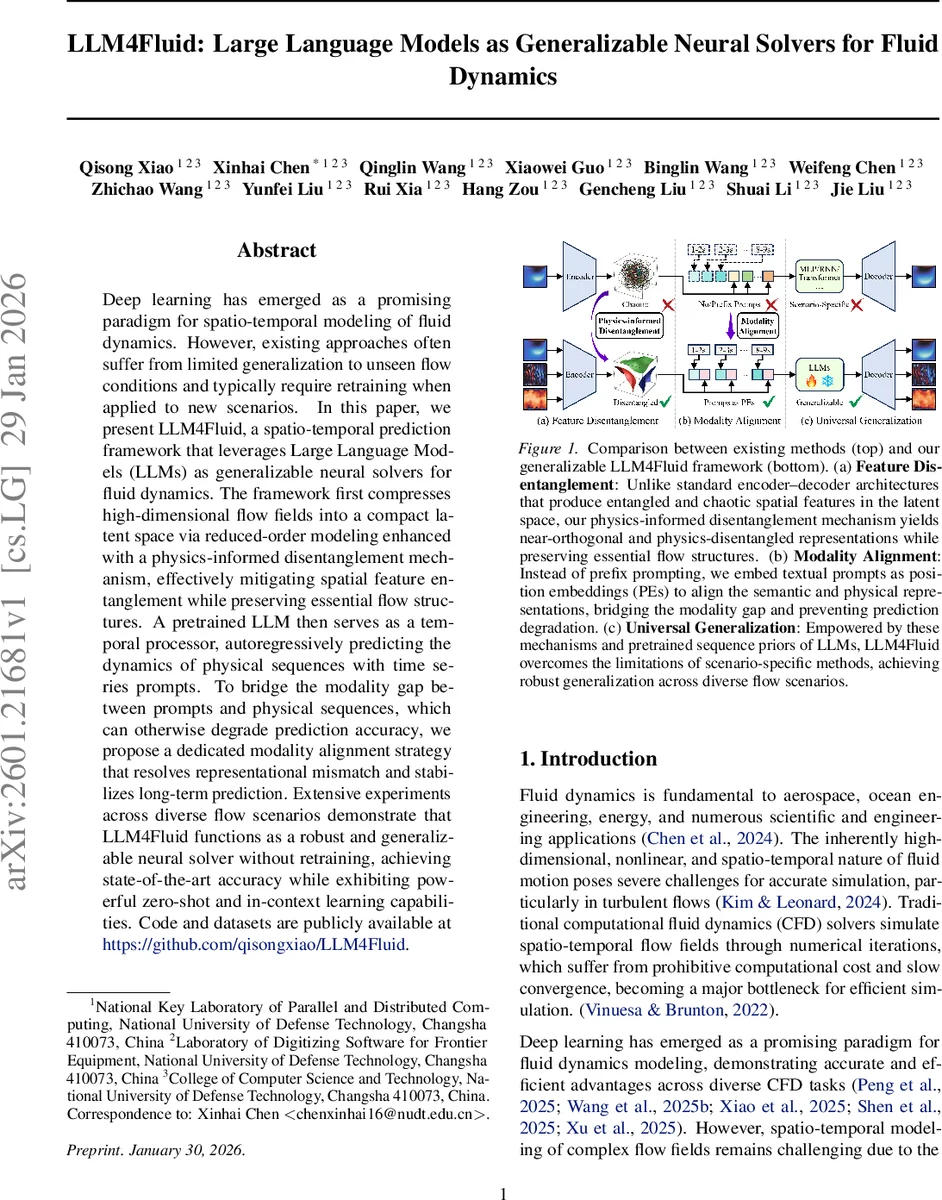

Deep learning has emerged as a promising paradigm for spatio-temporal modeling of fluid dynamics. However, existing approaches often suffer from limited generalization to unseen flow conditions and typically require retraining when applied to new scenarios. In this paper, we present LLM4Fluid, a spatio-temporal prediction framework that leverages Large Language Models (LLMs) as generalizable neural solvers for fluid dynamics. The framework first compresses high-dimensional flow fields into a compact latent space via reduced-order modeling enhanced with a physics-informed disentanglement mechanism, effectively mitigating spatial feature entanglement while preserving essential flow structures. A pretrained LLM then serves as a temporal processor, autoregressively predicting the dynamics of physical sequences with time series prompts. To bridge the modality gap between prompts and physical sequences, which can otherwise degrade prediction accuracy, we propose a dedicated modality alignment strategy that resolves representational mismatch and stabilizes long-term prediction. Extensive experiments across diverse flow scenarios demonstrate that LLM4Fluid functions as a robust and generalizable neural solver without retraining, achieving state-of-the-art accuracy while exhibiting powerful zero-shot and in-context learning capabilities. Code and datasets are publicly available at https://github.com/qisongxiao/LLM4Fluid.

💡 Research Summary

LLM4Fluid introduces a novel two‑stage framework for spatio‑temporal prediction of fluid dynamics that leverages the generalization power of large language models (LLMs) while addressing the longstanding issues of spatial feature entanglement and scenario‑specific temporal modeling.

In the first stage, high‑dimensional flow fields are compressed using an encoder‑decoder architecture enhanced with a physics‑informed disentanglement mechanism. The encoder produces a latent vector r, which is split into mean μ and standard deviation σ via two linear heads. A variational re‑parameterization (z = μ + σ⊙ε) generates stochastic latent samples, and a combined loss consisting of reconstruction error and a KL‑based disentanglement term (weighted by λ) penalizes correlations across latent dimensions. This yields a near‑orthogonal, physics‑disentangled latent space where each dimension corresponds to an independent flow mode, thereby stabilizing downstream dynamics.

The second stage treats a pretrained LLM as a temporal processor. Latent sequences are divided into non‑overlapping patches of length Mₚ, each projected through an MLP to obtain physical embeddings s. Textual prompts describing temporal context (e.g., time range, sampling interval) are tokenized by the same LLM, and the final

Modality alignment mitigates the representation mismatch that plagues prefix‑prompt approaches, reducing cumulative errors and improving long‑term stability. A sliding‑window tokenization keeps attention costs constant even for very long sequences.

Experiments are conducted on a comprehensive benchmark covering eight fluid scenarios, including 2‑D Kolmogorov flow, 3‑D turbulent channel, rotating cylinder, and varying Reynolds numbers, viscosities, and boundary conditions. LLM4Fluid is trained only on a source scenario (e.g., Re = 500) and evaluated zero‑shot on unseen target scenarios (e.g., Re = 2000). Results show:

- Average L2 error reductions of 15‑30 % compared with POD‑CNN, ConvLSTM, Vision‑Transformer, and state‑space models.

- Superior preservation of energy spectra, especially in high‑frequency components of turbulent flows (≈25 % improvement).

- In‑context learning with a few textual prompts further lowers error by ~7 %.

- Parameter efficiency: only the encoder‑decoder and input projection layers (≈12 M trainable parameters) are fine‑tuned; the LLM (≈1.3 B parameters) remains frozen, yielding a total model size well below that of comparable deep‑learning ROMs.

- Long‑term predictions (>2000 steps) remain stable, with negligible drift in conserved quantities (mass, momentum) and no energy blow‑up.

The paper discusses limitations such as reliance on regular grids, sensitivity to the λ hyper‑parameter, and the need for better automatic prompt generation. Future work includes extending to unstructured meshes, adaptive disentanglement weighting, and exploring fine‑tuning strategies for the LLM.

Overall, LLM4Fluid demonstrates that a physics‑aware latent space combined with a frozen LLM can serve as a universal neural solver for fluid dynamics, achieving high accuracy, robust generalization, and zero‑shot capability without retraining, thereby offering a practical tool for rapid CFD prototyping and real‑time prediction.

Comments & Academic Discussion

Loading comments...

Leave a Comment