Rethinking Fusion: Disentangled Learning of Shared and Modality-Specific Information for Stance Detection

Multi-modal stance detection (MSD) aims to determine an author’s stance toward a given target using both textual and visual content. While recent methods leverage multi-modal fusion and prompt-based learning, most fail to distinguish between modality-specific signals and cross-modal evidence, leading to suboptimal performance. We propose DiME (Disentangled Multi-modal Experts), a novel architecture that explicitly separates stance information into textual-dominant, visual-dominant, and cross-modal shared components. DiME first uses a target-aware Chain-of-Thought prompt to generate reasoning-guided textual input. Then, dual encoders extract modality features, which are processed by three expert modules with specialized loss functions: contrastive learning for modality-specific experts and cosine alignment for shared representation learning. A gating network adaptively fuses expert outputs for final prediction. Experiments on four benchmark datasets show that DiME consistently outperforms strong unimodal and multi-modal baselines under both in-target and zero-shot settings.

💡 Research Summary

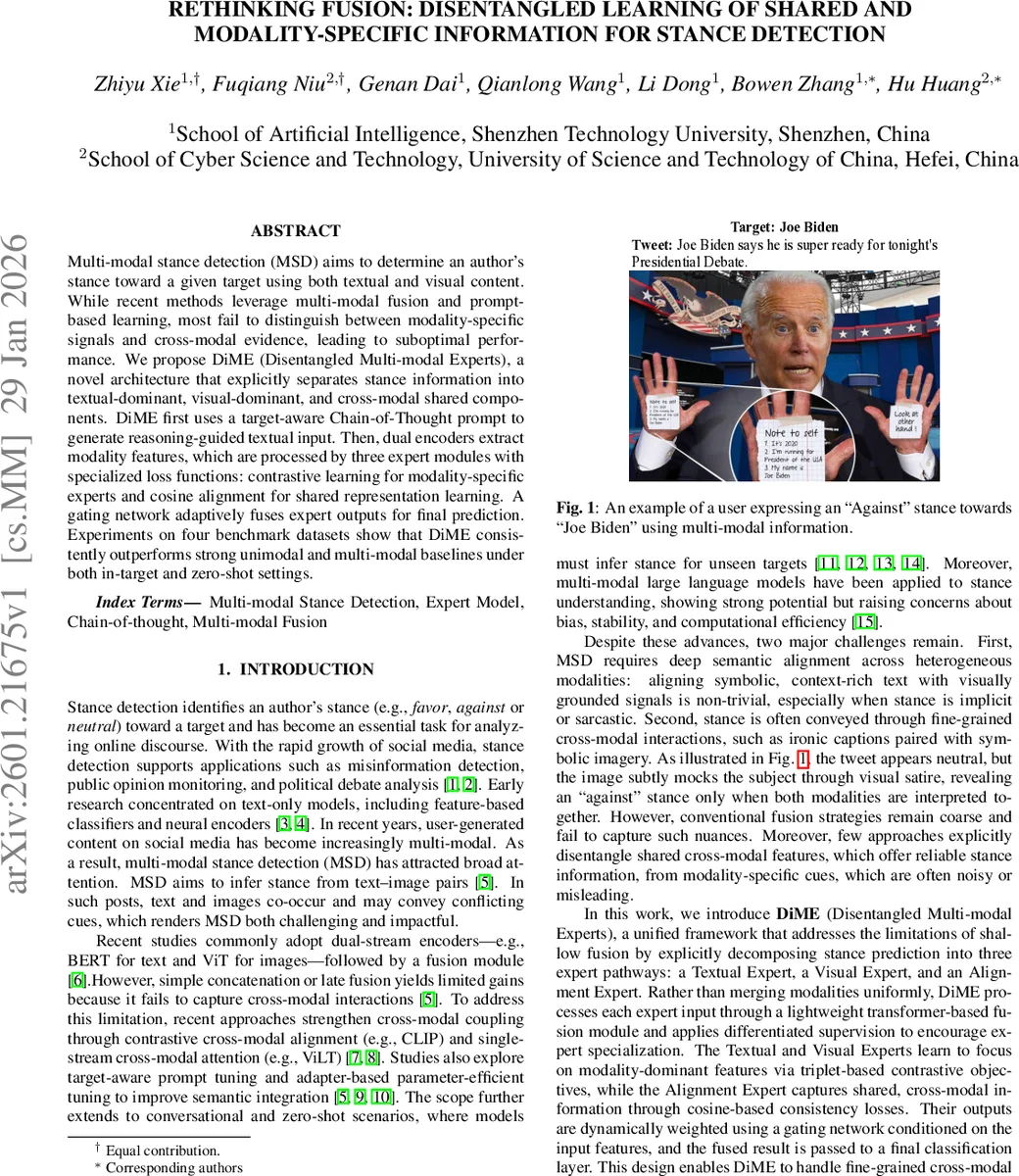

The paper addresses the challenging task of multi‑modal stance detection (MSD), where the goal is to infer an author’s stance (favor, against, or neutral) toward a given target from a combination of textual and visual content. While recent approaches have leveraged dual encoders (e.g., BERT for text and ViT for images) and various fusion strategies, they typically treat the two modalities as a single homogeneous source of evidence. This “shallow fusion” often fails to capture nuanced cross‑modal interactions, especially when stance cues are implicit, sarcastic, or conveyed through visual symbolism.

To overcome these limitations, the authors propose DiME (Disentangled Multi‑modal Experts), a novel architecture that explicitly separates stance information into three streams: a textual‑dominant expert, a visual‑dominant expert, and a shared cross‑modal alignment expert. The system consists of four main components:

-

Target‑aware Chain‑of‑Thought (CoT) Prompt – A structured prompt is sent to a large language model (LLM) together with the target, the tweet text, and the image. The LLM generates a reasoning chain that explicitly describes how the text and image together express a stance. This chain is appended to the textual input, providing richer, rationale‑driven context that helps the model capture subtle multimodal cues.

-

Dual Encoders – A BERT‑base encoder processes the concatenated prompt, tweet, and CoT reasoning, producing a prompt embedding (e_p) and a content embedding (e_t). A ViT‑B/32 encoder processes the image, yielding a visual embedding (e_v). Both embeddings are projected into a common d‑dimensional space. Additionally, a random vector e_r is introduced as a lightweight stochastic visual prompt to regularize visual features and reduce co‑adaptation.

-

Multi‑Expert Module – Three expert pathways are built on top of a lightweight transformer‑based fusion block (Fuse). The three fused representations are:

- E_t = Fuse(e_p, e_t) (textual expert input)

- E_v = Fuse(e_r, e_v) (visual expert input)

- E_t,v = Fuse(e_t, e_v) (cross‑modal input)

Each expert is trained with a dedicated loss:

- Textual Expert uses a triplet margin loss where the anchor is the cross‑modal fusion (E_t,v), the positive is the textual fusion (E_t), and the negative is the visual fusion (E_v). This encourages the textual expert to pull E_t closer to the shared representation while pushing away visual‑only noise.

- Visual Expert mirrors the textual loss, swapping the roles of E_t and E_v.

- Alignment Expert employs a cosine‑consistency loss that aligns the cross‑modal fusion with both unimodal fusions, encouraging modality‑invariant information.

-

Adaptive Gating Fusion – A two‑layer MLP takes the concatenated encoder outputs (e_t, e_v) and produces three gating weights (π_t, π_v, π_t,v) via a temperature‑scaled softmax. The final representation h is a weighted sum of the three expert outputs (h_t, h_v, h_t,v). This dynamic weighting allows the model to emphasize the most informative expert for each instance, whether the stance is text‑driven, image‑driven, or truly multimodal.

The overall training objective combines the three expert losses with a standard cross‑entropy loss for stance classification: L = L_T + L_V + L_S + L_CE.

Experimental Evaluation

The authors evaluate DiME on four benchmark datasets from the MMSD suite (MTSE, MCCQ, MWTWT, MRUC), using macro‑F1 as the primary metric. Baselines include strong unimodal models (BERT, RoBERTa, KEBERT, GPT‑4, ResNet, ViT, Swin‑Transformer) and multimodal baselines (BERT+ViT, ViLT, CLIP, Qwen‑VL, TMPT, TMPT+CoT, GPT‑4‑o).

Key results:

- DiME achieves an average macro‑F1 of 68.95, outperforming the previous best TMPT+CoT (67.12) by 1.83 points.

- Notable gains are observed on datasets where visual cues are crucial (e.g., DF: 88.04, UKR: 65.76, JB: 70.85).

- In zero‑shot settings (unseen targets), DiME leads on MTSE (60.29) and MRUC (53.83) and remains competitive on MWTWT (64.31).

- Ablation studies show that removing the CoT reasoning chain or the Alignment Expert each reduces performance by 2–3 macro‑F1 points, confirming their importance.

Insights and Contributions

- Explicit Disentanglement: By separating modality‑specific and shared information, DiME reduces interference between noisy visual signals and strong textual cues.

- Specialized Losses: Tailored triplet margins for each modality encourage experts to focus on their dominant signals, while cosine alignment consolidates cross‑modal consensus.

- Dynamic Gating: Instance‑wise weighting adapts to the varying dominance of modalities across posts, a flexibility lacking in static fusion schemes.

- Chain‑of‑Thought Augmentation: The LLM‑generated reasoning chain enriches textual input with explicit multimodal semantics, proving especially valuable for sarcastic or symbolic content.

Limitations and Future Work

- The reliance on an external LLM for CoT generation adds computational overhead and may introduce bias from the LLM’s pretraining data.

- The alignment expert may over‑regularize in highly textual‑dominant scenarios, slightly harming performance on datasets like CE and AH.

- Extending the expert framework to more than three experts (e.g., sentiment, sarcasm, factuality) or to conversational multimodal settings could further enhance robustness.

Conclusion

DiME presents a principled, modular approach to multi‑modal stance detection that outperforms existing methods across diverse benchmarks and zero‑shot scenarios. By disentangling modality‑specific and shared representations, applying expert‑specific supervision, and fusing them adaptively, the model captures fine‑grained cross‑modal cues such as visual satire and implicit textual sarcasm. This work sets a new direction for multimodal NLP tasks, emphasizing the value of expert specialization and dynamic fusion over monolithic, shallow integration.

Comments & Academic Discussion

Loading comments...

Leave a Comment