Multimodal Visual Surrogate Compression for Alzheimer's Disease Classification

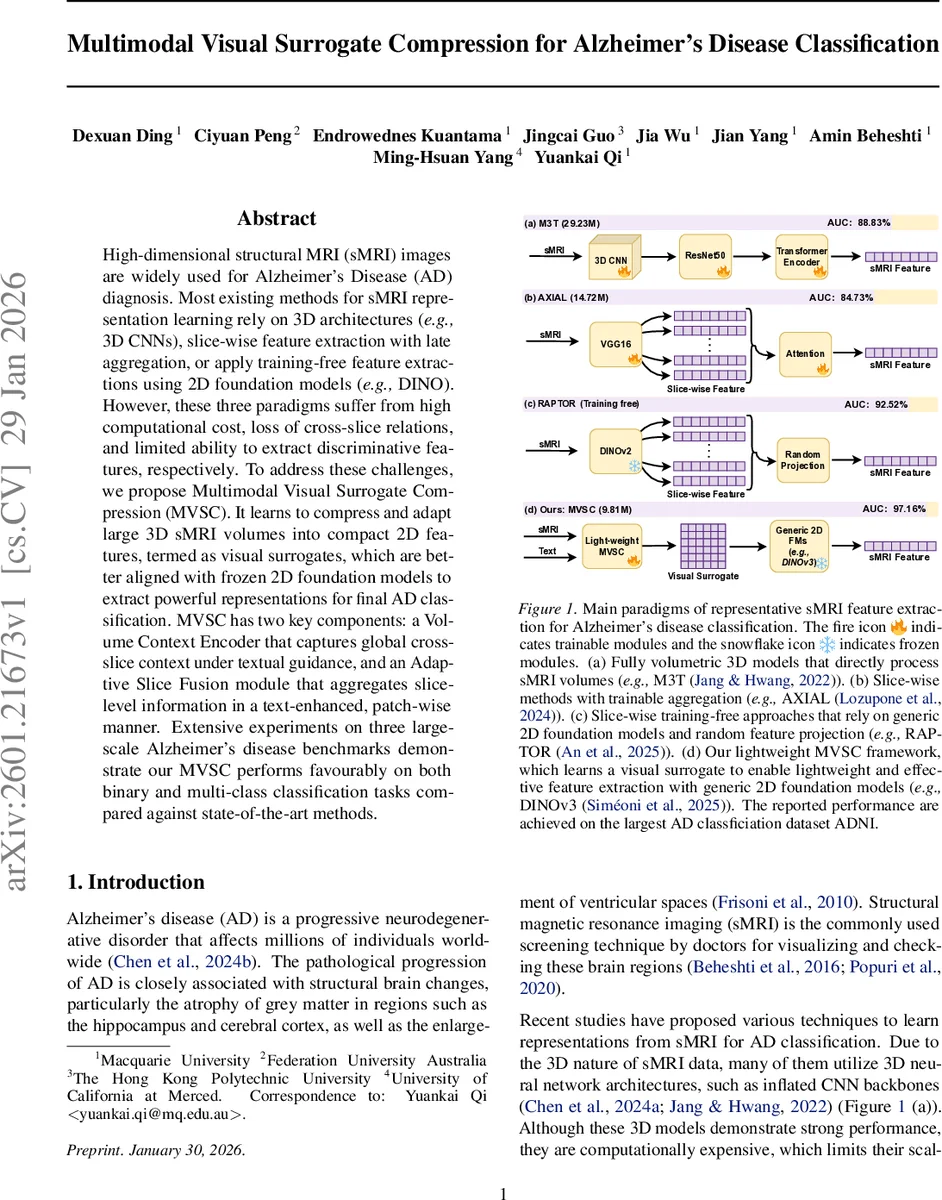

High-dimensional structural MRI (sMRI) images are widely used for Alzheimer’s Disease (AD) diagnosis. Most existing methods for sMRI representation learning rely on 3D architectures (e.g., 3D CNNs), slice-wise feature extraction with late aggregation, or apply training-free feature extractions using 2D foundation models (e.g., DINO). However, these three paradigms suffer from high computational cost, loss of cross-slice relations, and limited ability to extract discriminative features, respectively. To address these challenges, we propose Multimodal Visual Surrogate Compression (MVSC). It learns to compress and adapt large 3D sMRI volumes into compact 2D features, termed as visual surrogates, which are better aligned with frozen 2D foundation models to extract powerful representations for final AD classification. MVSC has two key components: a Volume Context Encoder that captures global cross-slice context under textual guidance, and an Adaptive Slice Fusion module that aggregates slice-level information in a text-enhanced, patch-wise manner. Extensive experiments on three large-scale Alzheimer’s disease benchmarks demonstrate our MVSC performs favourably on both binary and multi-class classification tasks compared against state-of-the-art methods.

💡 Research Summary

The paper introduces Multimodal Visual Surrogate Compression (MVSC), a lightweight framework that bridges high‑dimensional 3‑D structural MRI (sMRI) volumes with frozen 2‑D vision foundation models for Alzheimer’s disease (AD) classification. Existing approaches fall into three categories: (1) fully volumetric 3‑D CNNs that achieve strong performance but are computationally expensive; (2) slice‑wise pipelines that process each 2‑D slice independently and aggregate later, thereby losing long‑range cross‑slice dependencies; and (3) training‑free methods that directly feed slices into generic 2‑D foundation models (e.g., DINO) but suffer from domain mismatch and limited discriminative power. MVSC addresses all three shortcomings by compressing a 3‑D sMRI volume into a compact three‑channel 2‑D “visual surrogate” that can be consumed by any off‑the‑shelf 2‑D foundation model.

The pipeline consists of two novel modules. First, the Volume Context Encoder (VoCE) embeds all slices and patches using a 2‑D CNN‑based patch encoder, then injects global textual guidance generated by LLaVA‑Med. A set of learnable global query tokens is combined with the global text embedding and attends over the entire set of visual tokens via multi‑head cross‑attention, producing a global volumetric representation C. This step captures long‑range inter‑slice relationships in a single, text‑conditioned summary.

Second, the Adaptive Slice Fusion (ASF) module refines slice‑level features. Within each slice, self‑attention models intra‑slice patch interactions, after which slice‑level text embeddings are projected and added to every patch of that slice, providing semantic grounding. The global representation C is concatenated with patch‑aligned features across all slices, and a patch‑aligned cross‑slice fusion is performed: a reference token for each spatial location is computed by averaging across slices, then modulated by the global text via FiLM. The fused patch features are reshaped and decoded by a transposed 2‑D CNN into a three‑channel visual surrogate (intensity‑normalized slice, tissue segmentation, brain mask).

The visual surrogate is fed to a frozen 2‑D vision foundation model such as DINOv3, which extracts high‑level representations. A lightweight MLP classifier then predicts AD status (cognitively normal, mild cognitive impairment, or AD). MVSC contains only ~9.8 M parameters, far fewer than typical 3‑D CNNs (e.g., M3T with 29.2 M), and requires substantially less GPU memory and compute.

Extensive experiments on three large AD benchmarks (ADNI, OASIS‑3, AIBL) show that MVSC outperforms state‑of‑the‑art 3‑D CNNs, slice‑wise aggregation models, and training‑free pipelines. On ADNI, MVSC achieves an AUC of 97.16 % for binary AD vs. CN classification, surpassing M3T (88.83 %), AXIAL (84.73 %), and RAPTOR (92.52 %). Ablation studies confirm that both VoCE and ASF are essential; removing textual guidance or the global query dramatically degrades performance.

The authors argue that MVSC establishes a new “3‑D → 2‑D compression + text‑guidance” paradigm, enabling the reuse of powerful 2‑D foundation models for volumetric medical imaging without costly 3‑D training. Limitations include reliance on the quality of LLaVA‑Med generated text and potential information loss during compression. Future work may explore tighter integration with multimodal foundation models, broader disease applications, and more sophisticated text‑image pre‑training to further close the gap between 3‑D fidelity and 2‑D efficiency.

Comments & Academic Discussion

Loading comments...

Leave a Comment