On the Adversarial Robustness of Large Vision-Language Models under Visual Token Compression

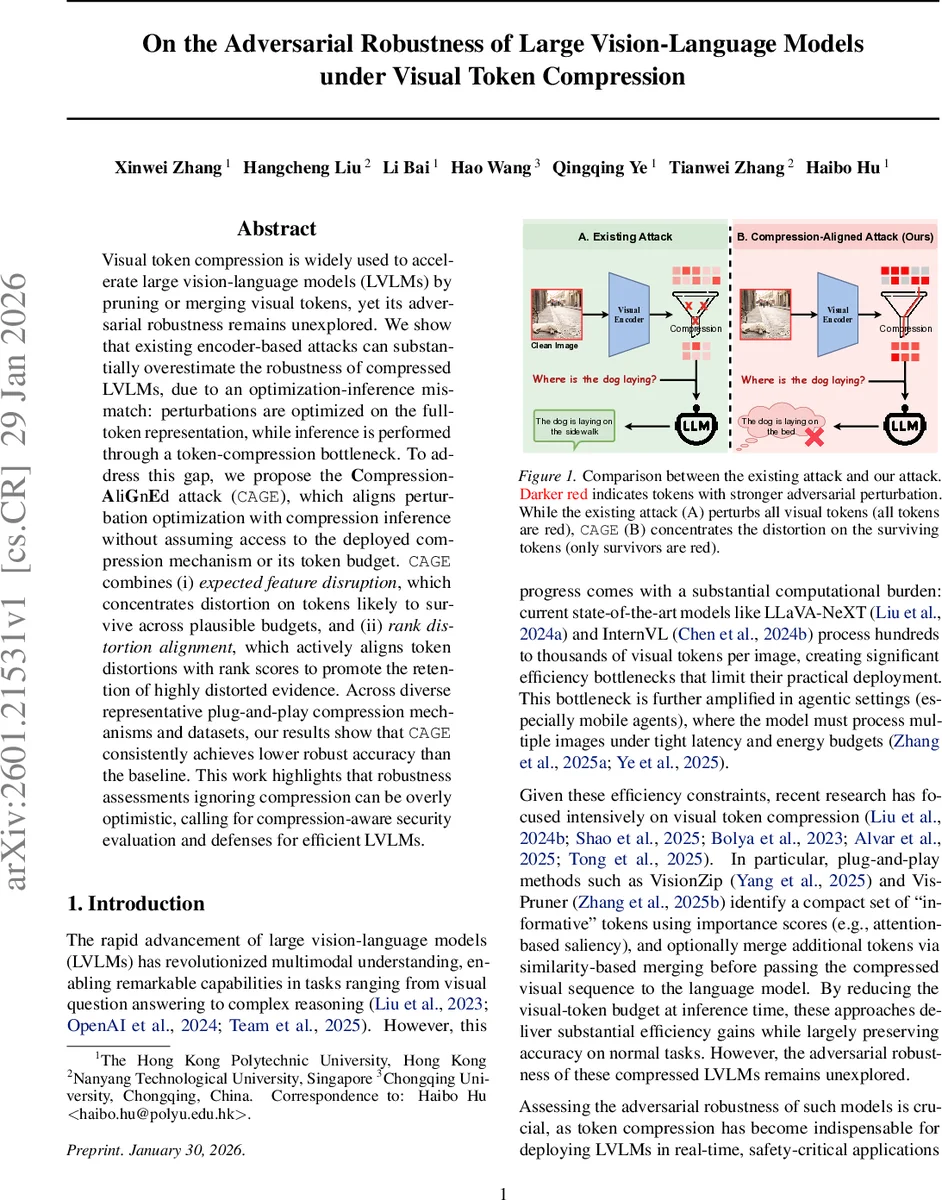

Visual token compression is widely used to accelerate large vision-language models (LVLMs) by pruning or merging visual tokens, yet its adversarial robustness remains unexplored. We show that existing encoder-based attacks can substantially overestimate the robustness of compressed LVLMs, due to an optimization-inference mismatch: perturbations are optimized on the full-token representation, while inference is performed through a token-compression bottleneck. To address this gap, we propose the Compression-AliGnEd attack (CAGE), which aligns perturbation optimization with compression inference without assuming access to the deployed compression mechanism or its token budget. CAGE combines (i) expected feature disruption, which concentrates distortion on tokens likely to survive across plausible budgets, and (ii) rank distortion alignment, which actively aligns token distortions with rank scores to promote the retention of highly distorted evidence. Across diverse representative plug-and-play compression mechanisms and datasets, our results show that CAGE consistently achieves lower robust accuracy than the baseline. This work highlights that robustness assessments ignoring compression can be overly optimistic, calling for compression-aware security evaluation and defenses for efficient LVLMs.

💡 Research Summary

The paper investigates a previously overlooked aspect of large vision‑language models (LVLMs): how visual token compression, a common technique for reducing inference latency and memory usage, affects adversarial robustness. Modern LVLMs such as LLaVA‑NeXT and InternVL generate hundreds of visual tokens per image, which are then fed to a large language model (LLM). To make deployment feasible, plug‑and‑play compression modules (e.g., VisionZip, VisionPruner) prune or merge tokens based on importance scores, reducing the token count from N to a budget K < N. While these methods preserve clean‑task accuracy, the authors show that they dramatically change the attack surface.

Existing adversarial attacks on LVLMs are typically encoder‑based (e.g., VEAttack). They assume full‑token access: a perturbation δ is optimized to maximize the cosine distance between clean and adversarial visual features, without considering any downstream compression. The authors demonstrate two key insights: (1) Compression acts as a “distortion concentrator.” High‑importance tokens—those most likely to survive pruning—also receive the strongest adversarial distortion, so the compressed model ends up relying on heavily corrupted evidence. (2) There is an optimization‑inference mismatch: attacks that optimize over the full token set allocate gradient signal to tokens that will later be discarded, leading to overly optimistic robustness estimates. Empirically, when the attack’s token budget Kₐₜₜₐcₖ matches the deployment budget Kₘₒdₑₗ, robust accuracy drops significantly compared with the full‑token baseline.

To close this gap, the authors propose the Compression‑Aligned Attack (CAGE). CAGE does not require knowledge of the exact compression mechanism or its token budget. Instead, it models the deployment budget Kₘₒdₑₗ as a random variable drawn from a prior distribution (e.g., uniform over a plausible range). CAGE consists of two complementary objectives:

-

Expected Feature Disruption (EFD). For each token i, the survival probability πᵢ = P(rankᵢ < Kₘₒdₑₗ) is computed based on its importance rank. The attack loss weights the cosine distance between clean and adversarial token features by πᵢ, thereby focusing distortion on tokens that are likely to survive compression, regardless of the exact Kₘₒdₑₗ.

-

Rank Distortion Alignment (RDA). This term aligns the magnitude of token distortion with the selection scores (e.g., attention). By minimizing a distribution‑matching loss (such as KL divergence) between the ranked distortion vector and the ranked importance scores, CAGE encourages highly distorted tokens to receive high selection scores, increasing their chance of being kept after compression.

Both terms are differentiable and can be optimized using standard gradient‑based methods, preserving the computational efficiency of encoder‑only attacks. The approach is agnostic to whether the compression method performs pure selection, selection + merging, or other variations, because it targets the common first‑stage token selection.

The authors evaluate CAGE on five representative compression mechanisms (including VisionZip, VisionPruner, Top‑K selection, token merging, and a learned pruning network) across three multimodal benchmarks: VQA‑v2, GQA, and COCO Captions. Results consistently show that CAGE achieves lower robust accuracy than the baseline VEAttack, with average drops of 5–12 % and up to 15 % in low‑budget regimes (K = 16). Importantly, CAGE remains effective even when the exact Kₘₒdₑₗ is unknown, confirming its robustness to deployment‑time uncertainty.

The paper also explores simple defenses: (a) attention score regularization to flatten importance distributions, and (b) token‑level random resampling before compression. Both provide modest improvements but fail to fully close the robustness gap, indicating that more sophisticated compression‑aware defenses (e.g., adversarial training that incorporates token pruning, or robust token selection criteria) are needed.

In summary, this work makes four major contributions: (1) it is the first to study adversarial robustness of LVLMs under visual token compression; (2) it identifies a critical optimization‑inference mismatch that leads to overly optimistic robustness estimates; (3) it introduces CAGE, a novel attack that aligns perturbation optimization with the compressed token space without requiring knowledge of the compression budget; and (4) it demonstrates the attack’s effectiveness across diverse compression strategies and datasets while highlighting the insufficiency of current defenses. The findings underscore that efficiency‑driven model modifications must be evaluated jointly with security considerations, and they open a new research direction toward compression‑aware adversarial defenses for multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment