ScaleSim: Serving Large-Scale Multi-Agent Simulation with Invocation Distance-Based Memory Management

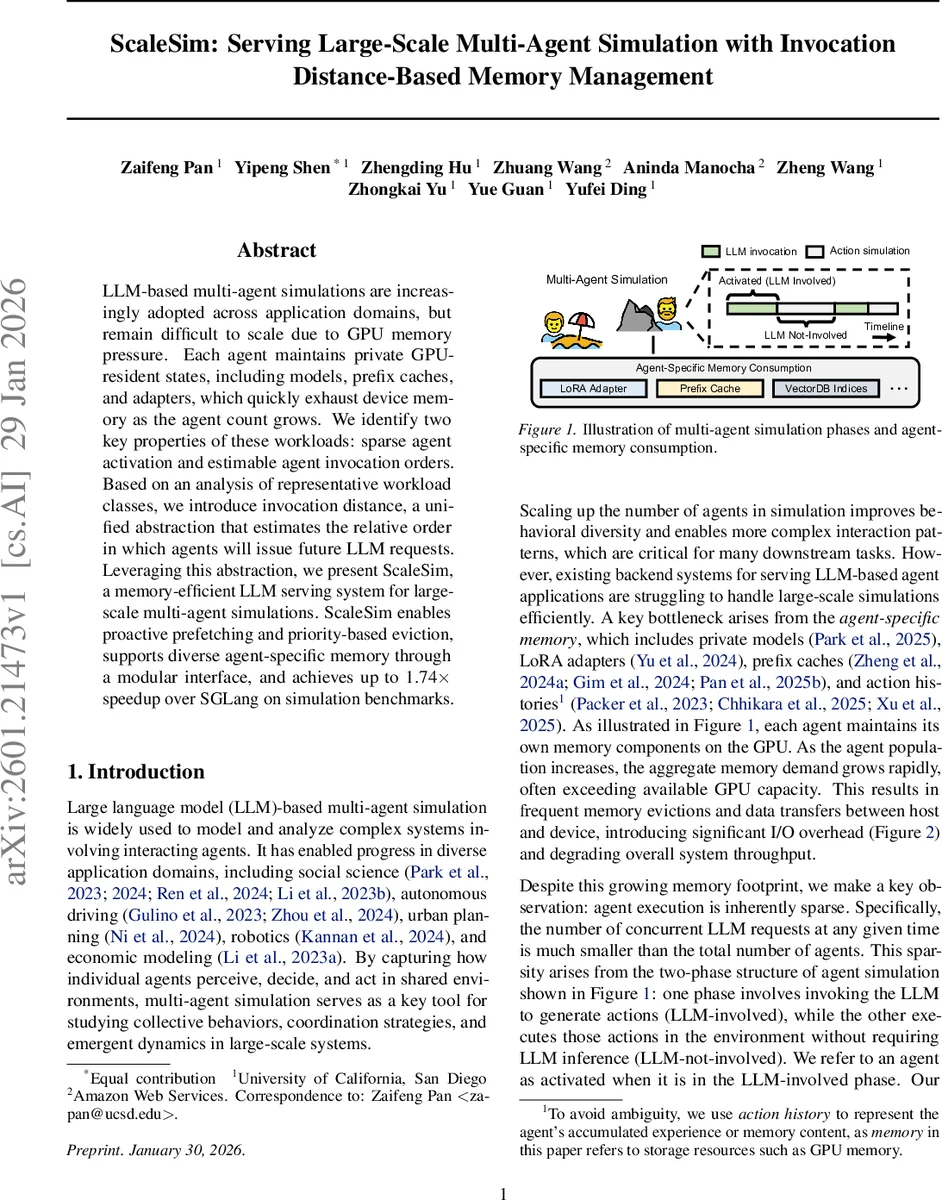

LLM-based multi-agent simulations are increasingly adopted across application domains, but remain difficult to scale due to GPU memory pressure. Each agent maintains private GPU-resident states, including models, prefix caches, and adapters, which quickly exhaust device memory as the agent count grows. We identify two key properties of these workloads: sparse agent activation and an estimable agent invocation order. Based on an analysis of representative workload classes, we introduce invocation distance, a unified abstraction that estimates the relative order in which agents will issue future LLM requests. Leveraging this abstraction, we present ScaleSim, a memory-efficient LLM serving system for large-scale multi-agent simulations. ScaleSim enables proactive prefetching and priority-based eviction, supports diverse agent-specific memory through a modular interface, and achieves up to 1.74x speedup over SGLang on simulation benchmarks.

💡 Research Summary

**

The paper tackles the severe GPU memory pressure that arises when scaling large‑scale multi‑agent simulations powered by large language models (LLMs). In such simulations each agent keeps private GPU‑resident state—model weights, LoRA adapters, prefix caches, action histories, etc.—which quickly exceeds device capacity as the number of agents grows. The authors observe two fundamental workload properties: (1) Sparse agent activation – at any simulation step only a small fraction of agents are in the “LLM‑involved” phase, while the majority are merely executing previously generated actions; (2) Estimable agent invocation order – the relative order in which agents will next request LLM inference can be predicted from the simulation semantics.

To exploit these properties they introduce a unified abstraction called invocation distance. An invocation distance is a relative measure of how far in the future an agent’s next LLM call lies (e.g., in seconds, steps, or hop counts). It is not an absolute timestamp but a comparable scalar that allows the system to rank agents by urgency. The authors categorize representative workloads into three classes—independent simulation, interaction‑involved simulation, and predefined activation paths—and show how each can produce invocation distances via simple heuristics (fixed action‑phase duration, environment‑driven cues, or graph hop counts).

Building on this abstraction, the authors design ScaleSim, a memory‑efficient LLM serving system implemented on top of SGLang. ScaleSim adds three key mechanisms:

- Distance‑aware prefetching – agents with the smallest invocation distances are proactively loaded onto the GPU before they actually issue a request, eliminating cold‑start latency.

- Priority‑based eviction – instead of generic LRU or usage‑based policies, ScaleSim evicts agents whose next invocation is farthest away, keeping near‑future agents resident even if they have not been accessed recently.

- Execution preemption – when a newly computed invocation distance drops sharply, the system can preempt lower‑priority agents and reallocate GPU memory instantly.

ScaleSim also defines a modular memory interface that lets applications register heterogeneous per‑agent memory modules (LoRA adapters, prefix caches, action histories, vector‑DB indices, etc.) as plug‑ins. The memory manager treats all these modules uniformly, enabling coordinated loading, eviction, and prefetching across heterogeneous data.

The evaluation uses three representative simulation benchmarks: AgentSociety (large social‑agent societies), Generative Agents (autonomous characters with rich internal states), and Information Diffusion (graph‑based rumor spreading). Experiments scale the number of agents from a few hundred up to several thousand. Compared with a vanilla SGLang deployment, ScaleSim reduces GPU‑CPU memory swaps by more than 45 % on average, cuts per‑step I/O overhead from several seconds down to sub‑second levels, and achieves up to 1.74× higher throughput. Memory efficiency (agents supported per GB of GPU memory) improves by roughly a factor of two.

The paper’s contribution is twofold: (i) it demonstrates that incorporating high‑level simulation semantics—specifically, a forecast of when each agent will need LLM inference—into the memory manager yields substantial performance gains; (ii) it provides a practical, extensible system (ScaleSim) that can be adopted by existing LLM serving stacks with modest engineering effort. The invocation‑distance abstraction is lightweight, requires only coarse‑grained hints from the application, and could be generalized to other domains where workloads exhibit predictable “next‑use” patterns, such as event‑driven streaming, graph processing, or hierarchical reinforcement‑learning pipelines.

Overall, ScaleSim shows that intelligent, prediction‑driven memory management is a viable path to scaling LLM‑based multi‑agent simulations far beyond current limits, without requiring larger GPUs or more hardware resources.

Comments & Academic Discussion

Loading comments...

Leave a Comment