Mitigating Overthinking in Large Reasoning Models via Difficulty-aware Reinforcement Learning

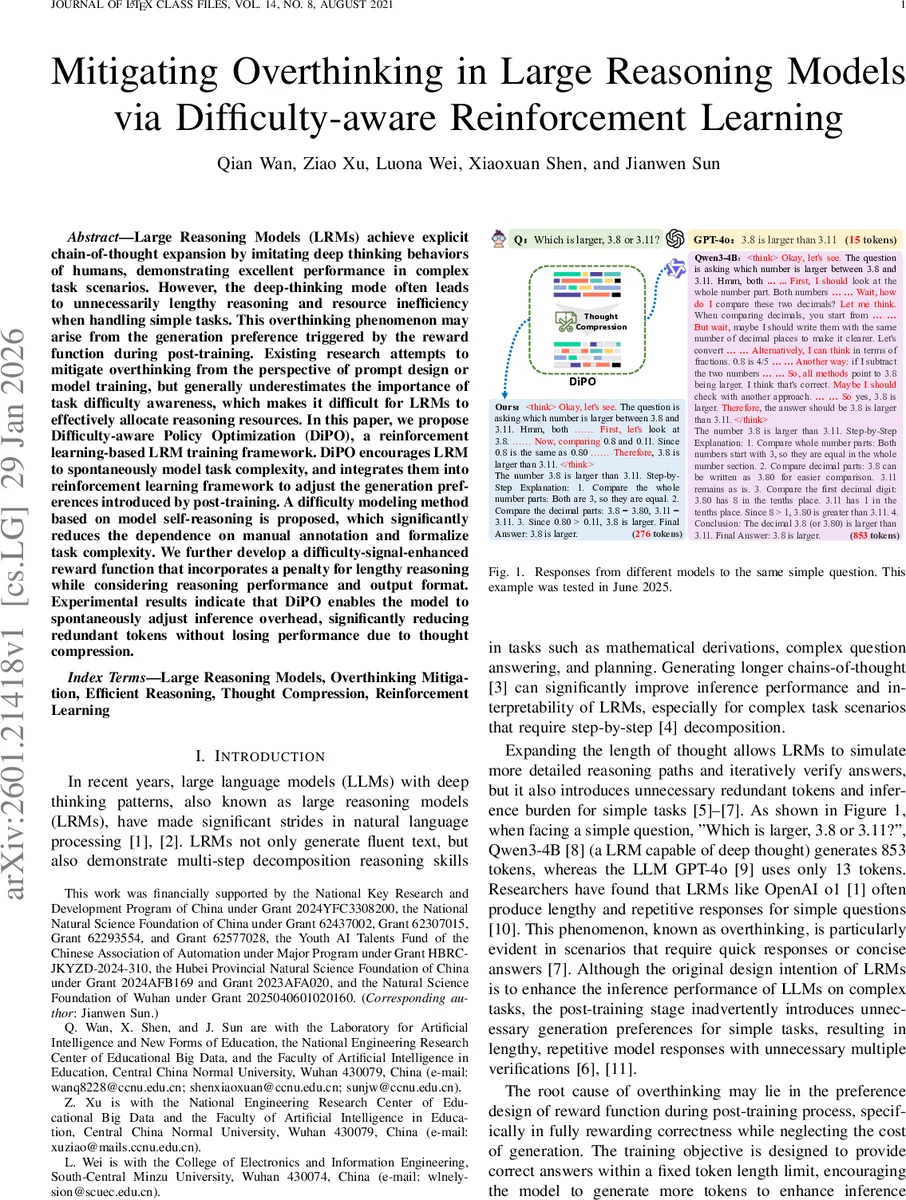

Large Reasoning Models (LRMs) achieve explicit chain-of-thought expansion by imitating deep thinking behaviors of humans, demonstrating excellent performance in complex task scenarios. However, the deep-thinking mode often leads to unnecessarily lengthy reasoning and resource inefficiency when handling simple tasks. This overthinking phenomenon may arise from the generation preference triggered by the reward function during post-training. Existing research attempts to mitigate overthinking from the perspective of prompt design or model training, but generally underestimates the importance of task difficulty awareness, which makes it difficult for LRMs to effectively allocate reasoning resources. In this paper, we propose Difficulty-aware Policy Optimization (DiPO), a reinforcement learning-based LRM training framework. DiPO encourages LRM to spontaneously model task complexity, and integrates them into reinforcement learning framework to adjust the generation preferences introduced by post-training. A difficulty modeling method based on model self-reasoning is proposed, which significantly reduces the dependence on manual annotation and formalize task complexity. We further develop a difficulty-signal-enhanced reward function that incorporates a penalty for lengthy reasoning while considering reasoning performance and output format. Experimental results indicate that DiPO enables the model to spontaneously adjust inference overhead, significantly reducing redundant tokens without losing performance due to thought compression.

💡 Research Summary

The paper addresses the “overthinking” problem that plagues Large Reasoning Models (LRMs). While chain‑of‑thought prompting enables these models to excel on complex tasks, it also causes them to generate unnecessarily long reasoning sequences for simple queries, leading to wasted computation and latency. The authors argue that this behavior stems from the reward design used during post‑training, which heavily rewards correctness but neglects generation cost. Existing solutions—prompt engineering, length‑penalizing rewards, or supervised pruning—either rely on manual heuristics or fail to give the model an intrinsic sense of task difficulty, so they cannot dynamically allocate reasoning resources.

To solve this, the authors propose Difficulty‑aware Policy Optimization (DiPO), a reinforcement‑learning (RL) framework that endows LRMs with an automatic perception of task difficulty and uses this perception to modulate the generation policy. DiPO consists of four main components:

-

Difficulty Signal Construction – Instead of hand‑labeling difficulty, the method leverages the model’s own output length as a proxy. Each training instance is prompted with “Let’s think step by step” and the LRM’s generated answer is collected. The raw token count L(x) is first smoothed (˜L(x)=p·L(x)) to mitigate long‑tail spikes, then standardized using the dataset mean µ and standard deviation σ, yielding Z(x) = (˜L(x)−µ)/σ + α·δ, where δ indicates correctness and α balances the impact of errors. Finally, a clipping function produces a bounded difficulty score Diff(x) = clip(Z(x), 1−ξ, 1+ξ). This pipeline yields a continuous, stable difficulty signal without any human annotation.

-

Difficulty‑aware Reward Design – The reward function combines three base scores: p for formatting errors, f for incorrect answers, and s for correct answers. A length‑and‑difficulty penalty λ_i = min(ε, len(o_i)/c)·(Diff(q_i)+φ) is subtracted from the base score. Consequently, for low‑difficulty (simple) tasks the penalty for extra tokens is larger, encouraging the model to compress its reasoning, while for high‑difficulty tasks the penalty is milder, allowing more extensive chains of thought. The formulation also includes a length‑cropping safeguard to prevent extreme outputs from destabilizing training.

-

RL Optimization – DiPO adopts GRPO (Generalized Reward‑based Policy Optimization), a recent policy‑gradient algorithm that improves upon PPO by better handling reward variance. For each question, K candidate solutions are sampled from a reference policy; each candidate receives the difficulty‑aware reward, and advantage estimates guide the policy update. The inclusion of the Diff(q) term makes the policy explicitly sensitive to task difficulty, a novelty compared to prior length‑penalty methods.

-

Empirical Evaluation – Experiments are conducted on four in‑domain datasets (mathematical derivations, complex QA, planning, etc.) and three out‑of‑domain datasets (general conversation, code generation, scientific queries). DiPO consistently reduces token usage by 30‑70 % while preserving accuracy within 0.5 % of the baseline. In a simple numeric comparison (“Which is larger, 3.8 or 3.11?”) a 3‑B LRM that originally emitted 853 tokens is compressed to under 20 tokens, matching the brevity of GPT‑4o. Out‑of‑domain results demonstrate that the learned difficulty signal transfers well, yielding stable performance gains across unseen tasks.

Key insights:

- Model‑generated output length is an effective, low‑cost proxy for task difficulty.

- Smoothing, standardization, and clipping produce a robust continuous difficulty signal suitable for RL rewards.

- Difficulty‑weighted penalties enable the model to autonomously decide how much reasoning depth is necessary, eliminating the need for handcrafted prompt templates or static token budgets.

- Integrating difficulty into GRPO yields a more stable training dynamic than plain PPO with length penalties.

The authors conclude that DiPO offers a principled way to endow LRMs with self‑regulated reasoning depth, bridging the gap between the desire for deep, interpretable chains of thought on hard problems and the need for concise, efficient responses on easy ones. Future work may explore richer difficulty cues (e.g., problem type, time constraints), multimodal extensions, or hierarchical policies that switch between “think‑fast” and “think‑deep” modes based on the learned difficulty estimate.

Comments & Academic Discussion

Loading comments...

Leave a Comment