User-Centric Evidence Ranking for Attribution and Fact Verification

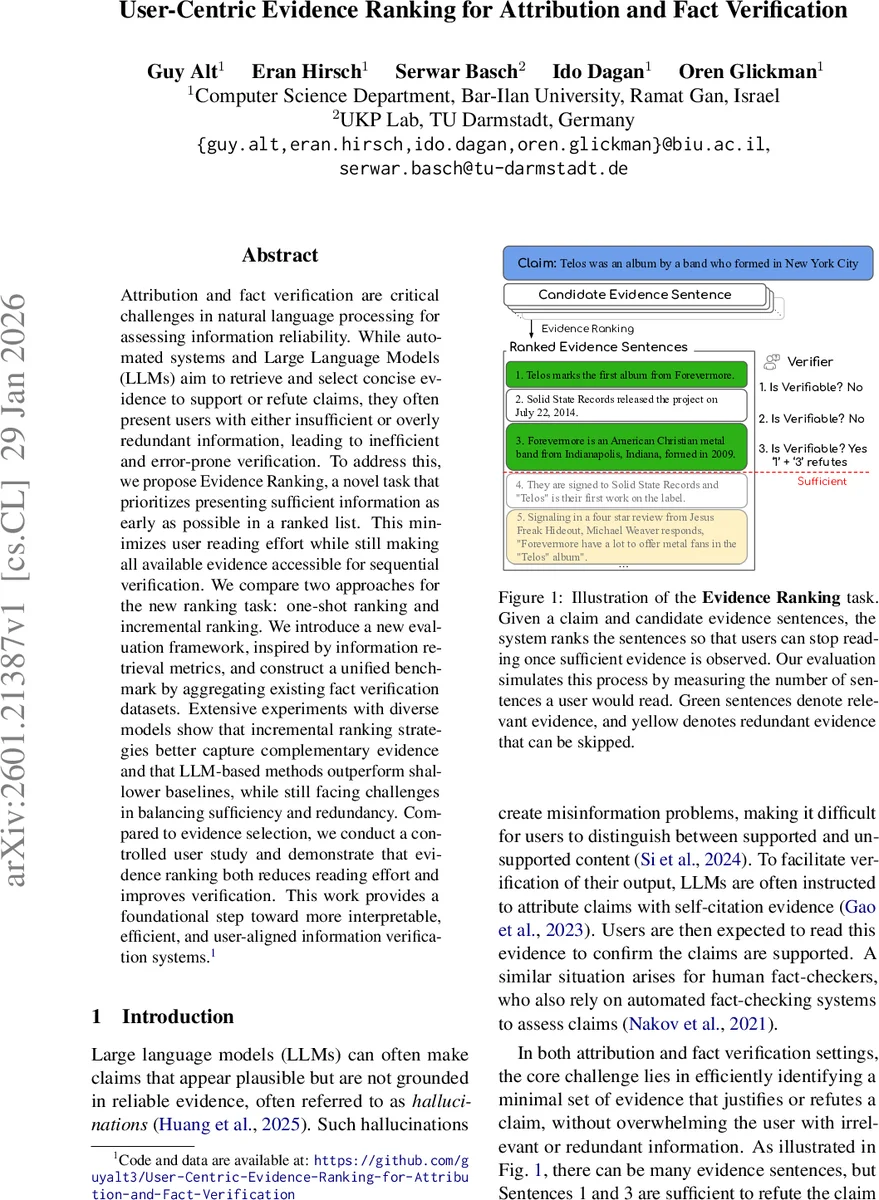

Attribution and fact verification are critical challenges in natural language processing for assessing information reliability. While automated systems and Large Language Models (LLMs) aim to retrieve and select concise evidence to support or refute claims, they often present users with either insufficient or overly redundant information, leading to inefficient and error-prone verification. To address this, we propose Evidence Ranking, a novel task that prioritizes presenting sufficient information as early as possible in a ranked list. This minimizes user reading effort while still making all available evidence accessible for sequential verification. We compare two approaches for the new ranking task: one-shot ranking and incremental ranking. We introduce a new evaluation framework, inspired by information retrieval metrics, and construct a unified benchmark by aggregating existing fact verification datasets. Extensive experiments with diverse models show that incremental ranking strategies better capture complementary evidence and that LLM-based methods outperform shallower baselines, while still facing challenges in balancing sufficiency and redundancy. Compared to evidence selection, we conduct a controlled user study and demonstrate that evidence ranking both reduces reading effort and improves verification. This work provides a foundational step toward more interpretable, efficient, and user-aligned information verification systems.

💡 Research Summary

The paper tackles a core inefficiency in current fact‑checking pipelines: users are often forced to read either too little or too much evidence before they can decide whether a claim is true or false. Existing evidence‑selection methods treat the problem as a binary decision—select a fixed set of sentences based on precision/recall—ignoring the sequential nature of human verification. To bridge this gap, the authors introduce Evidence Ranking, a novel task that orders all candidate evidence sentences so that a minimal sufficient set appears as early as possible in the list. The objective is to minimize the Minimal Sufficient Rank (MSR), i.e., the position of the smallest prefix that already contains enough information to support or refute the claim.

To evaluate how well a system achieves this, three adapted information‑retrieval metrics are proposed: a normalized Mean Reciprocal Rank (MRR) that measures how many extra sentences a user must read beyond the ideal rank, Success Rate (SR) that checks whether sufficiency is achieved exactly at the optimal position, and a variant of NDCG for overall ranking quality. The authors also construct a unified benchmark by merging three well‑known fact‑verification datasets—FEVER, HoVer, and WICE—resulting in 1,000 instances with diverse characteristics (single‑hop vs. multi‑hop reasoning, varying numbers of gold evidence sets, and realistic web‑derived candidate sentences).

Four families of models are evaluated: (i) embedding‑based similarity, (ii) fine‑tuned Natural Language Inference (NLI) classifiers, (iii) reasoning‑oriented rerankers, and (iv) large language models (LLMs) prompted with chain‑of‑thought or self‑ask strategies. For each family, two ranking strategies are compared: a one‑shot approach that scores all candidates in a single pass, and an incremental approach that iteratively selects the next sentence while conditioning on the already chosen evidence. The incremental method explicitly encourages non‑redundant, complementary evidence.

Experimental results show that LLM‑based methods achieve the highest overall performance (MRR ≈ 0.75), and that incremental ranking consistently outperforms one‑shot ranking, especially on the multi‑hop HoVer and the heterogeneous WICE data. Nonetheless, even the best systems still require users to read 30‑40 % more sentences than the theoretical minimum, indicating room for improvement in balancing sufficiency and redundancy.

A controlled user study with 60 participants further validates the practical impact. When using evidence ranking, participants read on average 2.1 sentences per claim (≈ 25 % fewer than the evidence‑selection baseline) and attained an accuracy of 87 % versus 78 % for the baseline. Subjective feedback highlighted the ease of skipping irrelevant information and the perceived trustworthiness of the ranking output.

The paper concludes that evidence ranking offers a user‑aligned formulation for fact verification, providing a clear metric‑driven target (MSR) and a benchmark for future work. Limitations include reliance on gold‑standard evidence sets for sufficiency, scalability to thousands of candidate sentences, and the opacity of LLM decision processes. Future research directions suggested are (1) explicit modeling of logical dependencies between evidence sentences (e.g., graph‑based rerankers), (2) extending the framework to document‑level and multi‑document retrieval, and (3) developing methods to extract rationales from LLMs to improve interpretability. Overall, the work lays a solid foundation for more efficient, interpretable, and user‑centric fact‑checking systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment