RerouteGuard: Understanding and Mitigating Adversarial Risks for LLM Routing

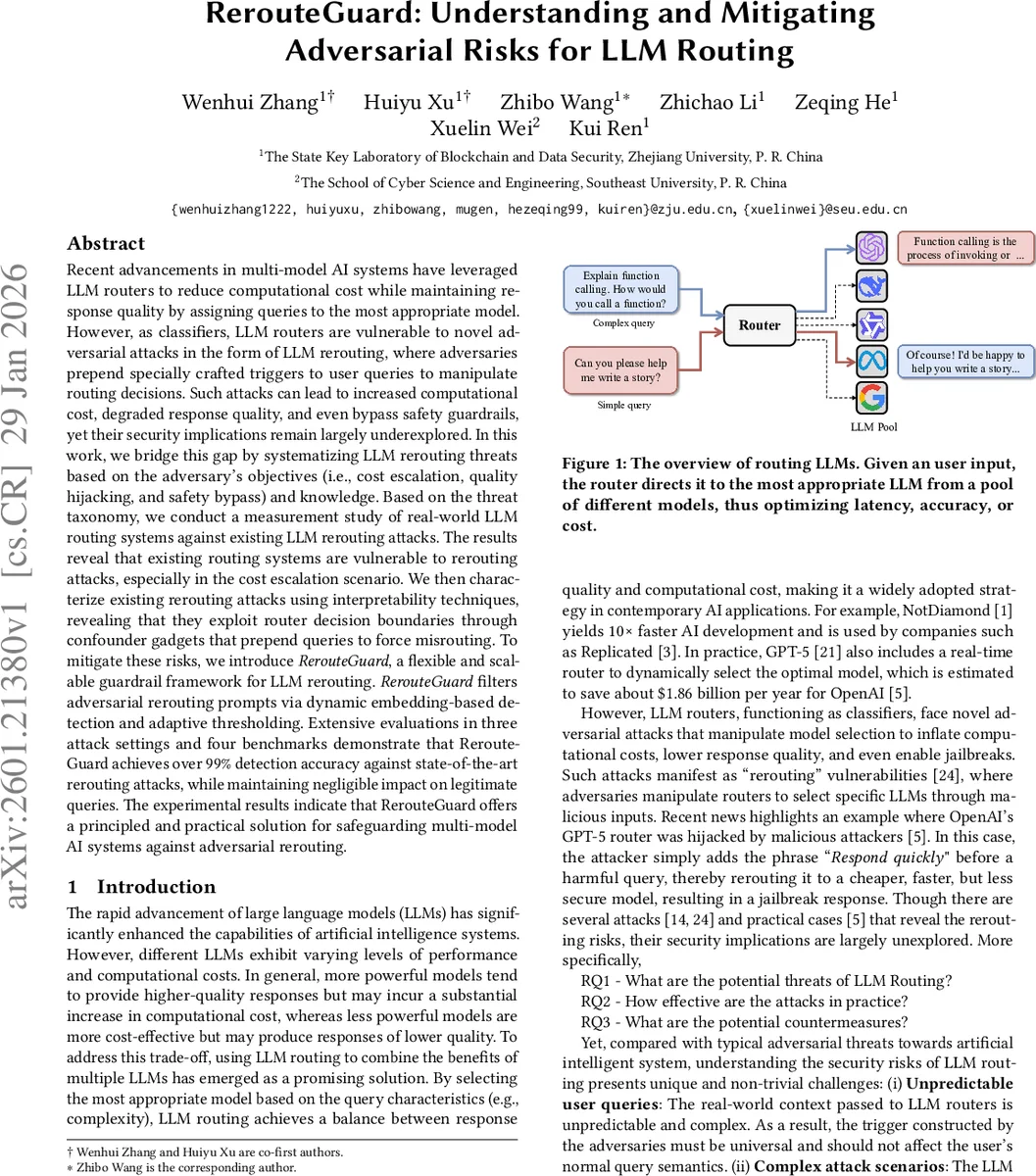

Recent advancements in multi-model AI systems have leveraged LLM routers to reduce computational cost while maintaining response quality by assigning queries to the most appropriate model. However, as classifiers, LLM routers are vulnerable to novel adversarial attacks in the form of LLM rerouting, where adversaries prepend specially crafted triggers to user queries to manipulate routing decisions. Such attacks can lead to increased computational cost, degraded response quality, and even bypass safety guardrails, yet their security implications remain largely underexplored. In this work, we bridge this gap by systematizing LLM rerouting threats based on the adversary’s objectives (i.e., cost escalation, quality hijacking, and safety bypass) and knowledge. Based on the threat taxonomy, we conduct a measurement study of real-world LLM routing systems against existing LLM rerouting attacks. The results reveal that existing routing systems are vulnerable to rerouting attacks, especially in the cost escalation scenario. We then characterize existing rerouting attacks using interpretability techniques, revealing that they exploit router decision boundaries through confounder gadgets that prepend queries to force misrouting. To mitigate these risks, we introduce RerouteGuard, a flexible and scalable guardrail framework for LLM rerouting. RerouteGuard filters adversarial rerouting prompts via dynamic embedding-based detection and adaptive thresholding. Extensive evaluations in three attack settings and four benchmarks demonstrate that RerouteGuard achieves over 99% detection accuracy against state-of-the-art rerouting attacks, while maintaining negligible impact on legitimate queries. The experimental results indicate that RerouteGuard offers a principled and practical solution for safeguarding multi-model AI systems against adversarial rerouting.

💡 Research Summary

The paper “RerouteGuard: Understanding and Mitigating Adversarial Risks for LLM Routing” investigates a previously under‑explored security problem in multi‑model AI systems that use large language model (LLM) routers to dynamically select the most appropriate model for each user query. Because a router is essentially a classifier that decides, based on the perceived difficulty or expected performance of a query, whether to forward it to a cheap, lower‑capacity model or an expensive, high‑capacity model, it becomes a target for adversarial manipulation. The authors define “LLM rerouting” attacks, where an adversary prepends a crafted trigger to a benign query in order to force the router to choose a specific target model.

Three distinct attacker goals are identified: (1) Cost escalation, where simple queries are rerouted to the expensive model, inflating compute costs while preserving answer quality; (2) Quality hijacking, where complex queries are sent to a weaker model, degrading response quality; and (3) Safety bypass, where harmful queries are directed to a less‑guarded model, enabling jailbreaks. The threat model further distinguishes three knowledge settings: white‑box (full access to router weights and gradients), gray‑box (access only to the router’s win‑rate scores), and box‑free (no internal knowledge, relying on external LLMs to generate triggers).

The authors conduct a systematic measurement study on four widely used routing mechanisms (similarity‑based, classification‑based, scoring‑based, and causal LLM classifiers) across three public benchmarks (math, code, reasoning, and open‑domain dialogue). In all three attack scenarios, especially cost escalation, the routers exhibit alarmingly high attack success rates—often approaching 100 %—demonstrating that current routing heuristics are highly vulnerable. Interpretability analyses (SHAP, attention visualizations) reveal that the adversarial triggers occupy a semantic region close to genuine “complex” queries, allowing them to cross the router’s decision boundary with minimal perturbation.

To mitigate these risks, the paper introduces RerouteGuard, a plug‑and‑play detection framework that operates before the router. RerouteGuard is trained via contrastive learning on a large corpus of paired normal and adversarial prompts. Positive pairs consist of two samples from the same class (both benign or both malicious), while negative pairs mix classes. This training forces the embedding space to separate benign from malicious inputs. At inference time, a new query is combined with a handful of stored benign exemplars to form test pairs; the similarity scores of these pairs are aggregated via majority voting to decide whether the query is suspicious. An adaptive thresholding mechanism adjusts the decision boundary based on real‑time traffic characteristics, ensuring low latency.

Extensive experiments show that RerouteGuard achieves an average detection accuracy of 99.3 % across white‑box, gray‑box, and box‑free settings, with a false‑positive rate below 2.5 % on legitimate queries. In the cost‑escalation scenario it blocks 99.8 % of attacks, while quality hijacking and safety‑bypass attacks are blocked at >98 % rates. The detection overhead is negligible (<0.5 % additional latency), making the solution practical for production systems.

In summary, the work makes three key contributions: (1) a comprehensive taxonomy of LLM routing threats; (2) the first large‑scale empirical evaluation demonstrating severe vulnerabilities in existing routers; and (3) a novel, lightweight, and highly effective guardrail—RerouteGuard—that can be seamlessly integrated into existing multi‑model pipelines to protect against adversarial rerouting. This research highlights the need for security‑aware design of routing components as AI services continue to scale and diversify.

Comments & Academic Discussion

Loading comments...

Leave a Comment