BEAP-Agent: Backtrackable Execution and Adaptive Planning for GUI Agents

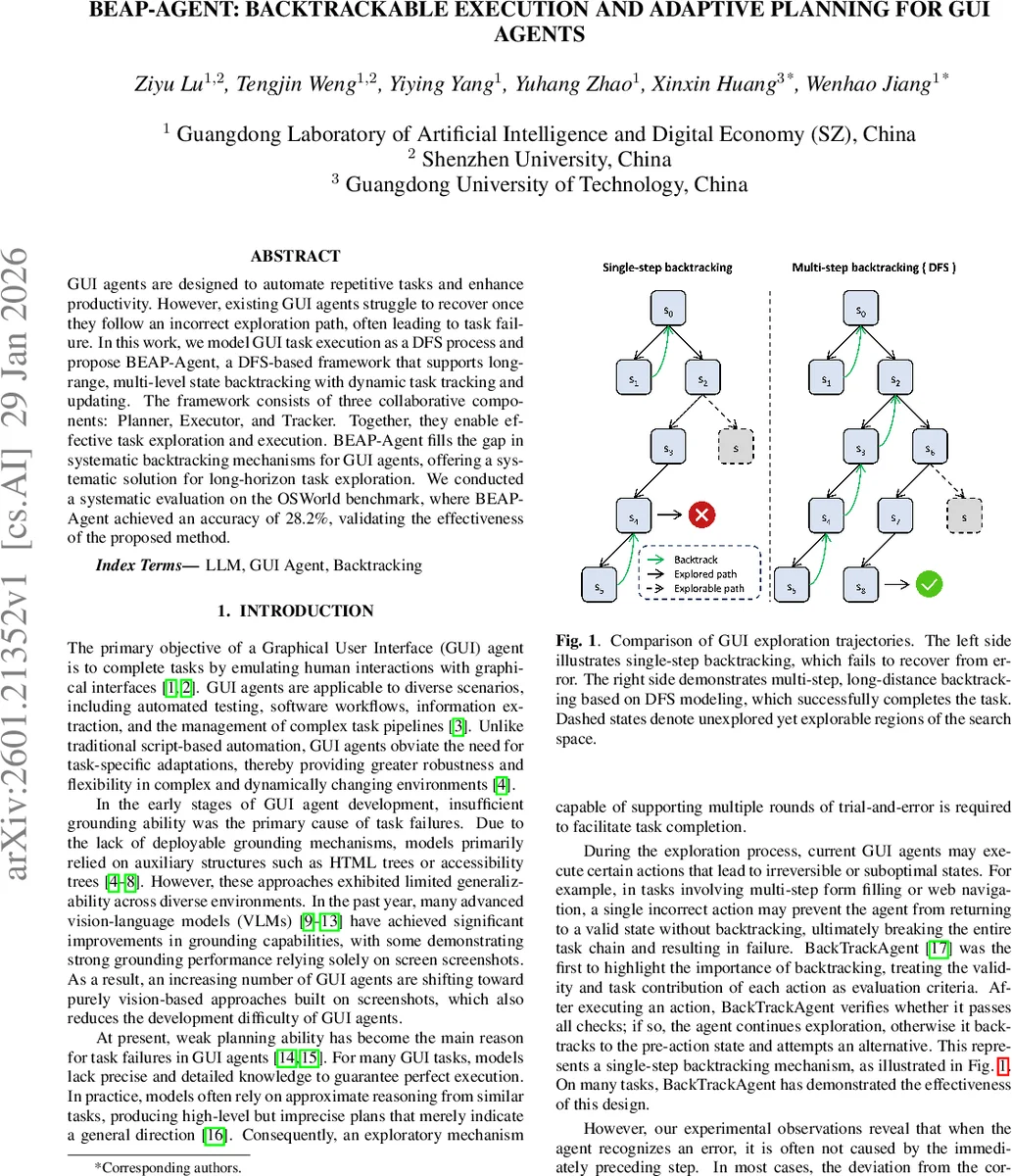

GUI agents are designed to automate repetitive tasks and enhance productivity. However, existing GUI agents struggle to recover once they follow an incorrect exploration path, which often leads to task failure. In this work, we model GUI task execution as a depth-first search (DFS) process and propose BEAP-Agent, a DFS-based framework that supports long-range, multi-level state backtracking together with dynamic task tracking and updating. The framework consists of three collaborative components, a Planner, an Executor, and a Tracker, which together enable effective task exploration and execution. BEAP-Agent fills the gap left by the lack of systematic backtracking mechanisms in GUI agents and offers a principled approach to long-horizon task exploration. In an evaluation on the OSWorld benchmark, BEAP-Agent achieved a task success rate of 28.2%, validating the effectiveness of the proposed method.

💡 Research Summary

The paper addresses a critical limitation of current graphical user interface (GUI) agents: their inability to recover after following an incorrect exploration path, which often leads to task failure. The authors propose to model GUI task execution as a depth‑first search (DFS) over a state‑action space, enabling both local exploration and long‑range, multi‑level backtracking. Building on this formulation, they introduce BEAP‑Agent, a framework that integrates three collaborative components—Planner, Executor, and Tracker—to provide systematic backtracking and dynamic task tracking.

Problem formulation

The GUI environment is defined as a set of states S, each corresponding to a distinct screen configuration, and a set of admissible actions A(s) (mouse click, drag, scroll, keyboard input). A transition function T(s, a) maps a state‑action pair to a successor state. The execution of a task is represented as a tree where nodes are states and edges are actions. The agent follows a DFS policy: if the current state has unexplored actions, it selects one and proceeds; if all actions are exhausted, it backtracks to the nearest ancestor with remaining unexplored actions. Failed paths are recorded to avoid redundant exploration.
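The DFS policy above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the toy state graph, the action names, and the helper `dfs_execute` are hypothetical, states are plain strings, and failed paths are recorded as individual (state, action) edges rather than full trajectories.

```python
from typing import Callable, List, Optional, Set, Tuple

State = str
Action = str


def dfs_execute(
    start: State,
    actions: Callable[[State], List[Action]],      # A(s): admissible actions in state s
    transition: Callable[[State, Action], State],  # T(s, a): successor state
    is_goal: Callable[[State], bool],
    max_steps: int = 50,
) -> Tuple[Optional[List[Action]], Set[Tuple[State, Action]]]:
    """Depth-first execution with backtracking (hypothetical sketch).

    Returns the action path that reached a goal state (or None) and the set of
    (state, action) edges recorded as failed, so they are never re-explored.
    """
    failed: Set[Tuple[State, Action]] = set()
    # Each frame holds a state and its still-unexplored actions.
    stack: List[Tuple[State, List[Action]]] = [(start, list(actions(start)))]
    path: List[Action] = []
    visited = {start}
    steps = 0  # counts forward transitions only
    while stack:
        state, untried = stack[-1]
        if is_goal(state):
            return path, failed
        if steps >= max_steps:  # step budget exhausted
            break
        if untried:
            a = untried.pop(0)
            nxt = transition(state, a)
            steps += 1
            if nxt in visited:  # cycle: treat the edge as a dead end
                failed.add((state, a))
                continue
            visited.add(nxt)
            stack.append((nxt, list(actions(nxt))))
            path.append(a)
        else:
            # All actions exhausted: pop back toward the nearest ancestor
            # that still has unexplored actions, recording the failed edge.
            stack.pop()
            if stack:
                failed.add((stack[-1][0], path.pop()))
    return None, failed


# Toy GUI: the first menu is a dead end; the goal lies behind "open_settings".
GRAPH = {
    "desktop": ["open_menu", "open_settings"],
    "menu": ["click_help"],
    "help": [],
    "settings": ["toggle_dark_mode"],
    "dark_mode_on": [],
}
TRANS = {
    ("desktop", "open_menu"): "menu",
    ("menu", "click_help"): "help",
    ("desktop", "open_settings"): "settings",
    ("settings", "toggle_dark_mode"): "dark_mode_on",
}

path, failed = dfs_execute(
    "desktop",
    lambda s: GRAPH[s],
    lambda s, a: TRANS[(s, a)],
    lambda s: s == "dark_mode_on",
)
print(path)  # ['open_settings', 'toggle_dark_mode']
```

In a real GUI, popping a frame is not free: restoring an earlier screen requires executing actions, which is the job of the Executor's backtrack mode.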

BEAP‑Agent architecture

- Planner receives the current screen s, the natural‑language task description X, and the set of previously failed paths F. It outputs a plan P consisting of ordered subtasks, each labeled PENDING or COMPLETED. When re‑planning after a backtrack, completed subtasks retain their status while the remaining ones are regenerated to avoid the failed trajectories.

- Executor grounds the plan into concrete UI actions. It takes the current state, the task description, the plan, and the historical trajectory H, and produces an action a; executing a in the environment yields the successor state s′ = T(s, a). In backtrack mode, the Executor uses the stored trajectory to generate actions that revert the environment to a prior snapshot.

- Tracker observes the outcome of each action, updates the status of subtasks, and determines a global execution status E. E can be CONTINUE (proceed with the current plan), BACKTRACK (the current path is blocked), FAIL (task deemed impossible), or DONE (task completed). In backtrack mode, Tracker reports RECOVERED or NOT RECOVERED depending on whether the environment has been successfully restored.
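The plan bookkeeping and status vocabulary described above can be sketched as plain data types. This is a hypothetical sketch: in BEAP‑Agent the Planner and Tracker are powered by GPT‑4o, whereas here `replan`, `tracker_status`, and `recovery_status` are simple rule-based stand-ins, and the precedence among statuses (DONE, then FAIL, then BACKTRACK, then CONTINUE) is an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class SubtaskStatus(Enum):
    PENDING = "PENDING"
    COMPLETED = "COMPLETED"


class ExecStatus(Enum):
    CONTINUE = "CONTINUE"    # proceed with the current plan
    BACKTRACK = "BACKTRACK"  # current path is blocked
    FAIL = "FAIL"            # task deemed impossible
    DONE = "DONE"            # task completed


class RecoveryStatus(Enum):
    RECOVERED = "RECOVERED"
    NOT_RECOVERED = "NOT RECOVERED"


@dataclass
class Subtask:
    description: str
    status: SubtaskStatus = SubtaskStatus.PENDING


def replan(old_plan: List[Subtask], regenerated: List[str]) -> List[Subtask]:
    """Keep COMPLETED subtasks and replace every PENDING one with newly generated subtasks."""
    kept = [s for s in old_plan if s.status is SubtaskStatus.COMPLETED]
    return kept + [Subtask(d) for d in regenerated]


def tracker_status(plan: List[Subtask], impossible: bool, blocked: bool) -> ExecStatus:
    """Rule-based stand-in for the Tracker's global status E."""
    if all(s.status is SubtaskStatus.COMPLETED for s in plan):
        return ExecStatus.DONE
    if impossible:
        return ExecStatus.FAIL
    if blocked:
        return ExecStatus.BACKTRACK
    return ExecStatus.CONTINUE


def recovery_status(current_screen: str, target_snapshot: str) -> RecoveryStatus:
    """Backtrack-mode check: has the environment returned to the target snapshot?"""
    if current_screen == target_snapshot:
        return RecoveryStatus.RECOVERED
    return RecoveryStatus.NOT_RECOVERED


plan = [
    Subtask("open Settings", SubtaskStatus.COMPLETED),
    Subtask("open the Display page"),
    Subtask("toggle dark mode"),
]
print(tracker_status(plan, impossible=False, blocked=True).value)  # BACKTRACK
plan = replan(plan, ["search settings for 'dark mode'", "toggle dark mode"])
print([s.description for s in plan])
```

Keeping completed subtasks verbatim means re-planning never repeats work that has already succeeded; only the unfinished tail of the plan is regenerated.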

Experimental setup

The authors evaluate BEAP‑Agent on the OSWorld benchmark, which contains 369 real‑world desktop tasks spanning OS operations, office productivity, web browsing, professional software, and multi‑application workflows. To isolate the effect of backtracking, the agents receive only screenshots as input and are limited to the four basic interaction primitives (mouse click, drag, scroll, and keyboard input). Each episode is capped at 50 steps. GPT‑4o powers both the Planner and the Tracker, while UI‑TARS‑1.5‑7B serves as the Executor.
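The evaluation settings above can be collected into a single configuration object. The field names and the `is_allowed_action` helper are hypothetical; only the values (369 tasks, screenshot-only observations, four primitives, a 50-step budget, and the model assignments) come from the setup described here.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class EvalConfig:
    benchmark: str = "OSWorld"
    num_tasks: int = 369
    observation: str = "screenshot"  # screenshots are the only input
    action_primitives: Tuple[str, ...] = ("click", "drag", "scroll", "keyboard_input")
    max_steps: int = 50              # per-episode step budget
    planner_model: str = "GPT-4o"
    tracker_model: str = "GPT-4o"
    executor_model: str = "UI-TARS-1.5-7B"


def is_allowed_action(cfg: EvalConfig, action_type: str) -> bool:
    """Reject any action type outside the four basic interaction primitives."""
    return action_type in cfg.action_primitives


cfg = EvalConfig()
print(is_allowed_action(cfg, "scroll"))  # True
print(is_allowed_action(cfg, "hotkey"))  # False
```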

Results

- BEAP‑Agent achieves a task success rate of 28.2%, outperforming the strongest baseline (Agent S2, 26.6%) and improving over the UI‑TARS‑1.5‑7B baseline (24.0%). Over the best prior method this is an absolute gain of 1.6 percentage points, or roughly 6% in relative terms.

- Backtracking is triggered in 35.8 % of the tasks; among those, the agent successfully recovers in 65.5 % of attempts, with an average of 2.72 steps required per successful backtrack.

- An ablation study shows that removing the backtrack mechanism lowers accuracy to 26.3%, while removing the Tracker causes a larger drop, to 23.6%, indicating that both long‑range backtracking and dynamic task tracking contribute substantially to performance.

- Domain‑wise analysis reveals that tasks with large UI state changes (e.g., Chrome browsing, workflow automation) benefit most, with backtrack success rates exceeding 80 % in the Chrome domain.
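The headline comparisons reduce to simple arithmetic. The snippet below recomputes them from the reported success rates so that absolute (percentage-point) and relative gains are not conflated; the dictionary keys and helper name are illustrative.

```python
# Reported OSWorld success rates (%), as quoted in this summary.
RATES = {
    "BEAP-Agent": 28.2,
    "Agent S2": 26.6,
    "UI-TARS-1.5-7B": 24.0,
    "w/o backtrack": 26.3,
    "w/o Tracker": 23.6,
}


def relative_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, as a fraction."""
    return (new - old) / old


beap = RATES["BEAP-Agent"]
for name in ("Agent S2", "UI-TARS-1.5-7B"):
    other = RATES[name]
    print(f"vs {name}: +{beap - other:.1f} pp, {relative_gain(beap, other):.1%} relative")
# vs Agent S2: +1.6 pp, 6.0% relative
# vs UI-TARS-1.5-7B: +4.2 pp, 17.5% relative

for name in ("w/o backtrack", "w/o Tracker"):
    print(f"{name}: -{beap - RATES[name]:.1f} pp")
# w/o backtrack: -1.9 pp
# w/o Tracker: -4.6 pp
```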

Implementation details

Backtrack actions reuse the same Executor interface as normal actions, so no separate execution mechanism is needed, although each backtrack still consumes ordinary steps (2.72 on average per successful backtrack). State snapshots are stored in a stack with a sliding‑window or checkpoint policy, which keeps memory consumption bounded.
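A bounded snapshot stack of this kind can be sketched with `collections.deque`, whose `maxlen` argument evicts the oldest entry once the window is full. This is a hypothetical sketch of the sliding-window variant only; the `Snapshot` fields and the `SnapshotStack` API are assumptions, and a checkpoint policy would replace the eviction rule with a different retention rule.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Optional


@dataclass(frozen=True)
class Snapshot:
    step: int            # episode step at which the snapshot was taken
    screen_id: str       # hypothetical handle to the stored screenshot
    subtask_index: int   # plan position at that moment


class SnapshotStack:
    """LIFO stack of snapshots bounded to the `window` most recent entries."""

    def __init__(self, window: int) -> None:
        self._buf: Deque[Snapshot] = deque(maxlen=window)

    def __len__(self) -> int:
        return len(self._buf)

    def push(self, snap: Snapshot) -> None:
        self._buf.append(snap)  # when full, deque drops the oldest (leftmost) entry

    def peek(self, depth: int = 0) -> Optional[Snapshot]:
        """Return the snapshot `depth` levels below the top, or None if absent or evicted."""
        if 0 <= depth < len(self._buf):
            return self._buf[-1 - depth]
        return None

    def backtrack(self, levels: int) -> Optional[Snapshot]:
        """Discard `levels` snapshots and return the new top: the state to restore."""
        for _ in range(min(levels, len(self._buf))):
            self._buf.pop()
        return self.peek()


stack = SnapshotStack(window=3)
for i in range(5):
    stack.push(Snapshot(step=i, screen_id=f"screen_{i}", subtask_index=i // 2))
print(len(stack))               # 3 (steps 0 and 1 were evicted)
print(stack.backtrack(2).step)  # 2
```

Bounding the window also bounds how far back the agent can go: a target that has been evicted can no longer be restored from the stack.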

Conclusions and future work

By framing GUI execution as a DFS‑based state‑space search and coupling it with a Planner‑Executor‑Tracker loop, BEAP‑Agent provides a principled way to recover from deep errors and to re‑plan dynamically. The empirical evaluation shows that systematic multi‑step backtracking improves success rates on realistic desktop tasks (28.2% versus 26.6% for the strongest baseline). The authors note that even powerful closed‑source models like GPT‑4o still struggle with fine‑grained visual details, suggesting future research directions such as hybrid model ensembles, richer visual grounding, and more sophisticated long‑term memory mechanisms to further boost GUI agent reliability.
