Towards Robust Dysarthric Speech Recognition: LLM-Agent Post-ASR Correction Beyond WER

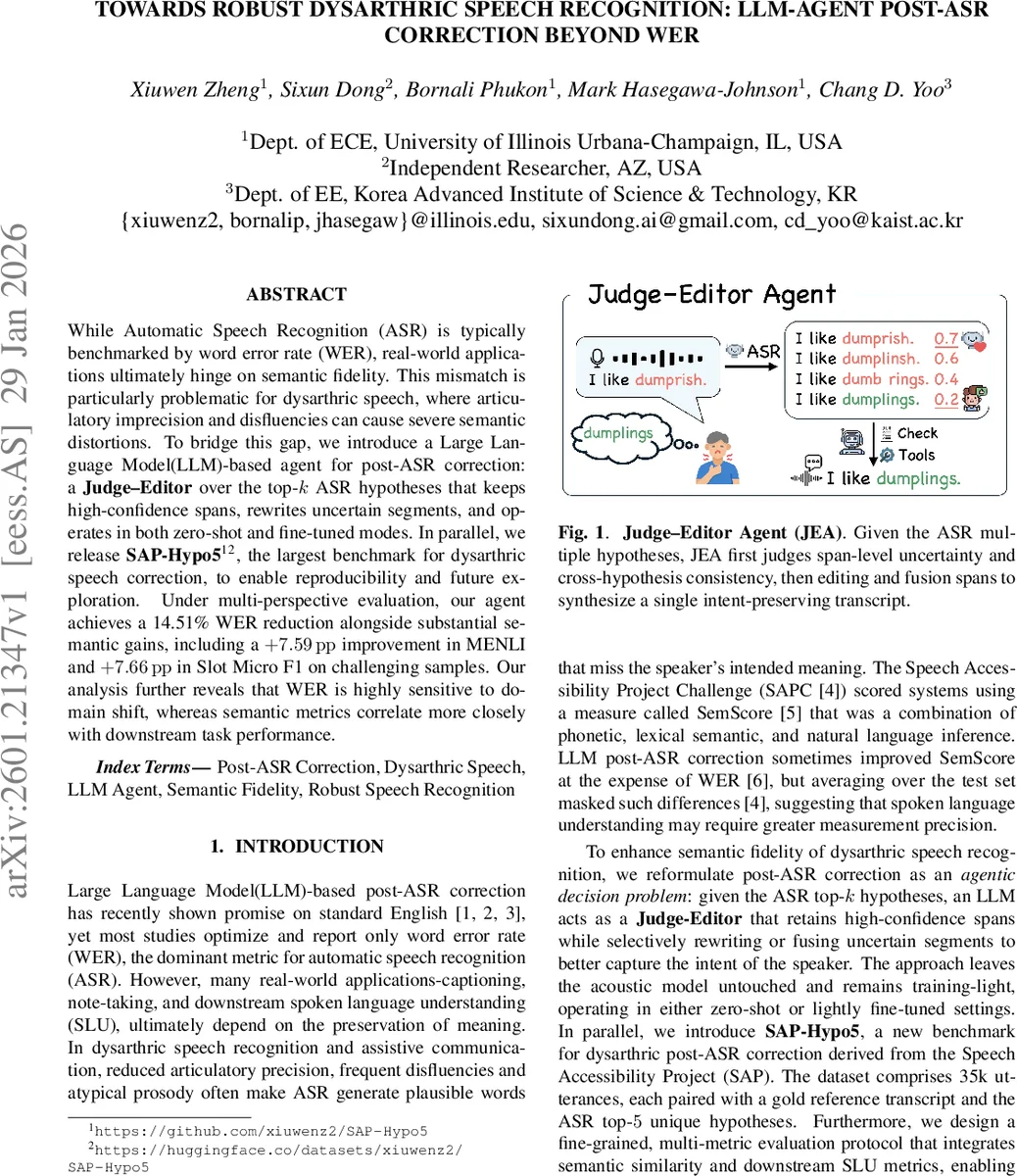

While Automatic Speech Recognition (ASR) is typically benchmarked by word error rate (WER), real-world applications ultimately hinge on semantic fidelity. This mismatch is particularly problematic for dysarthric speech, where articulatory imprecision and disfluencies can cause severe semantic distortions. To bridge this gap, we introduce a Large Language Model (LLM)-based agent for post-ASR correction: a Judge-Editor over the top-k ASR hypotheses that keeps high-confidence spans, rewrites uncertain segments, and operates in both zero-shot and fine-tuned modes. In parallel, we release SAP-Hypo5, the largest benchmark for dysarthric speech correction, to enable reproducibility and future exploration. Under multi-perspective evaluation, our agent achieves a 14.51% WER reduction alongside substantial semantic gains, including a +7.59 pp improvement in MENLI and +7.66 pp in Slot Micro F1 on challenging samples. Our analysis further reveals that WER is highly sensitive to domain shift, whereas semantic metrics correlate more closely with downstream task performance.

💡 Research Summary

The paper tackles the problem of semantic degradation in automatic speech recognition (ASR) for dysarthric speakers, whose speech is characterized by imprecise articulation, frequent disfluencies, and atypical prosody. Conventional ASR evaluation relies heavily on word error rate (WER), which measures surface-level transcription accuracy but does not capture whether the intended meaning is preserved. This mismatch is especially pronounced for dysarthric speech, where a low WER does not guarantee that the user’s intent is correctly understood.

To address this gap, the authors propose a “Judge‑Editor Agent” (JEA), an LLM‑based post‑ASR correction system that operates on the top‑k ASR hypotheses (k = 5 in their experiments). The agent works in two stages. First, a “Judge” component analyses cross‑hypothesis agreement at the span level, assigning a confidence score to each token or phrase. High‑confidence spans (those that appear consistently across multiple hypotheses) are retained unchanged. Low‑confidence spans are flagged for revision. Second, an “Editor” component uses a large language model to rewrite or fuse the flagged spans, guided by a carefully crafted instruction template that emphasizes preserving named entities, numbers, and avoiding hallucinations. The final corrected transcript is assembled by merging retained spans with edited ones.

The system is evaluated in two deployment modes. In the zero‑shot mode, the LLM (e.g., Qwen2‑7B‑Instruct) receives only the prompt and the hypotheses, with no task‑specific training. In the fine‑tuned mode, the authors apply lightweight LoRA adaptation (≤ 0.25 % of parameters) on the SAP‑Hypo5 dataset, a new benchmark they release. SAP‑Hypo5 contains 35 k dysarthric utterances, each paired with a gold reference transcript and the top‑5 unique ASR hypotheses generated by a Whisper‑large‑v2 model fine‑tuned on the Speech Accessibility Project (SAP) data. The dataset follows the same speaker‑independent split strategy as SAP, providing 31 123 training, 845 development, and 2 647 test utterances.

Evaluation goes beyond WER. The authors report three semantic similarity metrics: Q‑Emb (sentence‑level embedding similarity using Qwen3‑Embedding‑8B), BERTScore‑F1 (token‑level contextual similarity), and MENLI (natural language inference entailment score). They also assess downstream spoken language understanding (SLU) performance using Intent Accuracy and Slot Micro‑F1, where intents and slots are derived from XLM‑RoBERTa models fine‑tuned on the MASSIVE dataset.

Results show that zero‑shot JEA yields modest WER improvements only on the “Err” subset (where the top‑1 hypothesis contains errors). In contrast, fine‑tuned agents achieve consistent gains across all metrics. The best fine‑tuned model (Qwen2‑7B) reduces overall WER from 13.63 % to 11.78 % (a 14.51 % relative reduction) and improves semantic scores (e.g., Q‑Emb from 88.18 % to 89.84 %). More strikingly, MENLI rises by 7.59 percentage points and Slot Micro‑F1 by 7.66 points, indicating that the corrected transcripts better preserve logical entailment and entity extraction quality.

Ablation studies dissect the contributions of the Judge and Editor components. When only the Judge is active (selecting the best hypothesis without editing), WER drops modestly; when only the Editor is active (editing the top‑1 hypothesis), performance is similar. The combination of both yields the largest gains, confirming their complementary roles.

The authors also analyze robustness to domain shift. WER is highly sensitive to changes in speaker or recording conditions, often inflating due to superficial mismatches such as contraction expansions. Semantic metrics, especially MENLI and BERTScore‑F1, remain more stable and correlate better with downstream SLU performance. This suggests that future research should prioritize meaning‑preserving metrics over pure WER when developing assistive speech technologies.

In conclusion, the paper introduces a lightweight, model‑agnostic framework that leverages LLMs as decision‑making agents to post‑process ASR outputs for dysarthric speech. By preserving high‑confidence spans and selectively rewriting uncertain ones, the Judge‑Editor Agent achieves state‑of‑the‑art performance on the newly released SAP‑Hypo5 benchmark across a suite of lexical, semantic, and task‑oriented metrics. The work highlights the limitations of WER as the sole evaluation criterion, advocates for richer semantic assessments, and opens avenues for further improvements through in‑context learning, self‑checking mechanisms, and integration with downstream SLU models tailored to accessibility applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment