Grounding and Enhancing Informativeness and Utility in Dataset Distillation

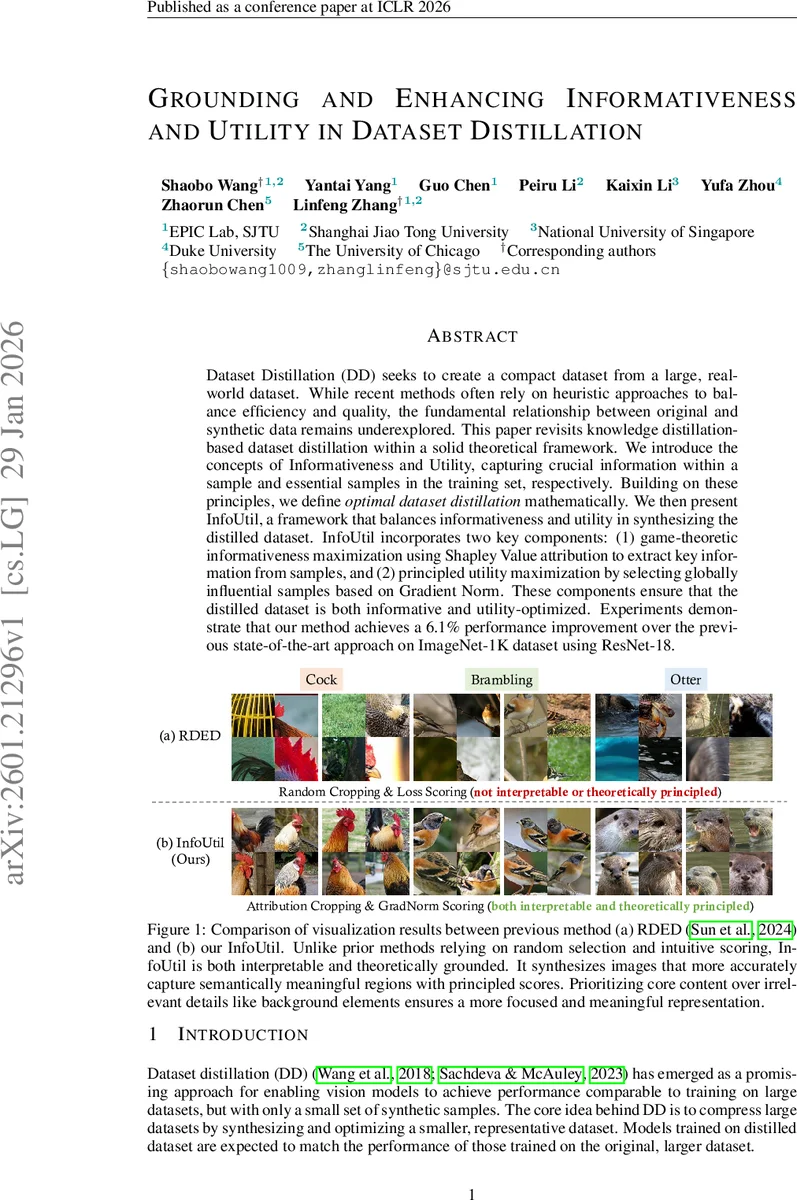

Dataset Distillation (DD) seeks to create a compact dataset from a large, real-world dataset. While recent methods often rely on heuristic approaches to balance efficiency and quality, the fundamental relationship between original and synthetic data remains underexplored. This paper revisits knowledge distillation-based dataset distillation within a solid theoretical framework. We introduce the concepts of Informativeness and Utility, capturing crucial information within a sample and essential samples in the training set, respectively. Building on these principles, we define optimal dataset distillation mathematically. We then present InfoUtil, a framework that balances informativeness and utility in synthesizing the distilled dataset. InfoUtil incorporates two key components: (1) game-theoretic informativeness maximization using Shapley Value attribution to extract key information from samples, and (2) principled utility maximization by selecting globally influential samples based on Gradient Norm. These components ensure that the distilled dataset is both informative and utility-optimized. Experiments demonstrate that our method achieves a 6.1% performance improvement over the previous state-of-the-art approach on ImageNet-1K dataset using ResNet-18.

💡 Research Summary

The paper tackles the fundamental problem of dataset distillation (DD), which aims to compress a large training set into a tiny synthetic set that can train models to achieve comparable performance. While recent DD methods have achieved impressive empirical results, they largely rely on heuristic criteria for selecting and compressing samples, leading to a trade‑off between efficiency and quality and a lack of interpretability. To address these gaps, the authors introduce two theoretically grounded concepts—Informativeness and Utility—and formulate Optimal Dataset Distillation as a joint maximization problem over these quantities.

Informativeness is defined for a sample x and a binary mask s (with a fixed compression budget d′) as the negative L2 distance between the model’s output on the original sample and on the masked sample:

I(x; fθ) = −‖fθ(s ∘ x) − fθ(x)‖₂.

Maximizing I corresponds to finding the mask that preserves the most predictive information of the original image.

Utility quantifies how crucial a training example is for the learning dynamics. The authors first define Gradient Flow, a continuous‑time analogue of the loss change for a specific example during training. Utility of a point (xi, yi) is then the worst‑case change in Gradient Flow when that point is removed from a mini‑batch. Because directly computing this is prohibitive, they prove (Theorem 1) that Utility is upper‑bounded by a constant times the Gradient Norm of the example, i.e., the norm of the gradient of the loss with respect to model parameters. This bound enables a cheap yet principled proxy for utility.

Building on these definitions, the paper proposes InfoUtil, a two‑stage pipeline:

-

Game‑theoretic Informativeness Maximization – Each image is treated as a cooperative game where pixels (or patches) are players. The Shapley Value, a unique attribution satisfying linearity, dummy, symmetry, and efficiency axioms, is used to estimate each pixel’s contribution to the model’s output. Because exact Shapley computation is exponential, the authors adopt the KernelShap approximation, yielding an efficient estimate. The resulting attribution map is average‑pooled, optionally perturbed with Gaussian noise to encourage diversity, and the top d′ pixels form a compressed version of the image.

-

Principled Utility Maximization – The compressed candidates from stage 1 are scored by their Gradient Norms. According to the proven bound, samples with larger norms have higher utility. The top m samples are retained as the final distilled dataset.

The authors evaluate InfoUtil on several benchmarks: ImageNet‑1K, ImageNet‑100, and Tiny‑ImageNet, using backbones such as ResNet‑18, ResNet‑50, and Vision Transformers. In the most challenging 1‑image‑per‑class (1 IPC) setting on ImageNet‑1K with ResNet‑18, InfoUtil outperforms the previous state‑of‑the‑art RDED method by 6.1 % absolute top‑1 accuracy. Similar gains (≈16 % on ImageNet‑100) are reported for other settings. Ablation studies demonstrate that each component contributes meaningfully: Shapley‑based informativeness alone yields a 3.2 % boost, Gradient‑Norm utility alone yields 2.8 %, and their combination achieves the full 6.1 % improvement. Visualizations show that InfoUtil’s patches focus on semantically salient regions, whereas RDED’s random crops often include irrelevant background, highlighting the interpretability advantage.

Strengths of the work include a solid theoretical foundation linking data importance to well‑studied concepts from cooperative game theory and continuous optimization, a clear derivation of a computationally tractable utility proxy, and extensive empirical validation across datasets and models. The method also improves interpretability by explicitly showing which image regions are deemed informative.

Limitations are noted: Shapley value estimation, even with KernelShap, remains costly for high‑resolution images, potentially limiting scalability. The utility bound is proved under stochastic gradient descent; its validity for adaptive optimizers (e.g., Adam) is not established. Moreover, the current formulation focuses on vision data; extending the notions of informativeness and utility to text or multimodal data is left for future work.

Future directions suggested by the authors include developing faster Shapley approximation schemes (e.g., Monte‑Carlo sampling or hardware‑accelerated implementations), analyzing utility bounds for a broader class of optimizers, and generalizing the framework to other modalities and to privacy‑aware or fairness‑constrained settings.

In summary, the paper presents a principled, two‑pronged approach—InfoUtil—that jointly maximizes information preservation and training impact, thereby delivering a more efficient, higher‑performing, and interpretable dataset distillation method. The combination of game‑theoretic attribution and gradient‑norm utility constitutes a notable advance in the DD literature.

Comments & Academic Discussion

Loading comments...

Leave a Comment