Thinker: A vision-language foundation model for embodied intelligence

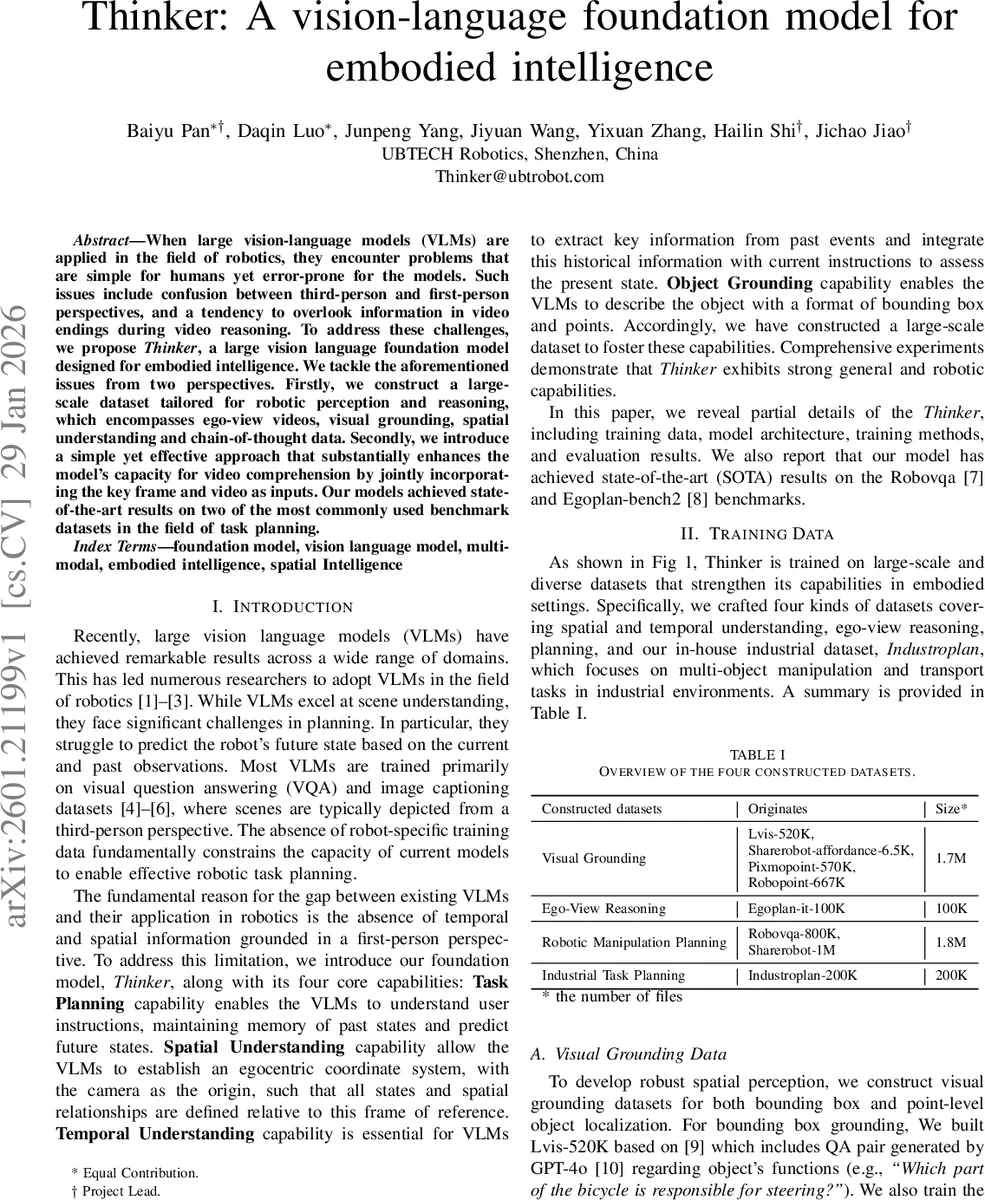

When large vision-language models are applied to robotics, they encounter problems that are simple for humans yet error-prone for models. These include confusion between third-person and first-person perspectives and a tendency to overlook information at the end of a video during temporal reasoning. To address these challenges, we propose Thinker, a large vision-language foundation model designed for embodied intelligence. We tackle these issues in two ways. First, we construct a large-scale dataset tailored for robotic perception and reasoning, encompassing ego-view videos, visual grounding, spatial understanding, and chain-of-thought data. Second, we introduce a simple yet effective approach that substantially enhances the model’s capacity for video comprehension by jointly incorporating key frames and full video sequences as inputs. Our model achieves state-of-the-art results on two of the most commonly used benchmark datasets in the field of task planning.

💡 Research Summary

The paper introduces Thinker, a vision‑language foundation model specifically designed for embodied intelligence in robotics. The authors identify two critical shortcomings of existing large vision‑language models (VLMs) when applied to robotics: (1) confusion between third‑person and first‑person (ego‑view) perspectives, and (2) a tendency to neglect information at the end of video sequences during temporal reasoning. To overcome these issues, they construct a comprehensive training corpus composed of four distinct data streams: visual grounding (bounding‑box and point‑level object localization), ego‑view reasoning, robotic manipulation planning, and an industrial task‑planning dataset (Industroplan‑200K). Each stream is built from public resources (e.g., LVIS, Pixmopoint, Robopoint) and extensive in‑house filtering, yielding datasets ranging from 100 K to 1.8 M examples.

Thinker’s architecture consists of a text tokenizer, a visual encoder, a multi‑layer perceptron that aligns visual and linguistic embeddings, and a large language model (LLM) backbone, totaling roughly ten billion parameters. The key technical novelty is the joint ingestion of a “key frame” (the final frame of a video) together with the full video sequence. The visual encoder processes the temporal stream into a series of visual tokens, while the key frame passes through a dedicated adapter to preserve high‑resolution cues. All visual tokens are concatenated with textual tokens and fed into the decoder, enabling simultaneous reasoning over the entire temporal context and the most informative snapshot.
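The joint key-frame and video input can be pictured with a minimal sketch. This is an illustration under stated assumptions, not the authors' implementation: the function names `select_key_frame` and `build_input_sequence`, the placeholder string tokens, and the ordering (video tokens, then key-frame tokens, then text tokens) are all hypothetical. The only detail taken from the summary is that the key frame is the final frame and that all visual tokens are concatenated with the text tokens.

```python
from typing import List, Sequence


def select_key_frame(frames: Sequence[str]) -> str:
    """Return the final frame, which the summary describes as the key frame."""
    if not frames:
        raise ValueError("video must contain at least one frame")
    return frames[-1]


def build_input_sequence(
    video_tokens: Sequence[Sequence[str]],
    key_frame_tokens: Sequence[str],
    text_tokens: Sequence[str],
) -> List[str]:
    """Flatten per-frame video tokens, then append key-frame and text tokens.

    The ordering (video, key frame, text) is an assumption for illustration.
    """
    sequence: List[str] = []
    for frame_tokens in video_tokens:
        sequence.extend(frame_tokens)
    sequence.extend(key_frame_tokens)
    sequence.extend(text_tokens)
    return sequence


frames = ["frame0", "frame1", "frame2"]
key_frame = select_key_frame(frames)

# Stand-in "encoders": one placeholder token per video frame, and two
# placeholder tokens for the key frame to mimic a higher-resolution path.
video_tokens = [[f"<vid:{name}>"] for name in frames]
key_frame_tokens = [f"<key:{key_frame}:0>", f"<key:{key_frame}:1>"]
text_tokens = ["What", "happens", "next?"]

sequence = build_input_sequence(video_tokens, key_frame_tokens, text_tokens)
```

In this sketch the last frame appears twice, once in the temporal stream and once through the separate key-frame path, which is the mechanism the summary credits with preserving end-of-video information.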

Training proceeds in two stages. Stage 1 mixes the four data streams to endow Thinker with foundational spatial perception, object grounding, and temporal understanding. A dynamic sampler balances task contributions based on validation loss, and the inclusion of the last frame as an auxiliary input mitigates temporal information loss. Stage 2 fine‑tunes the model on the industrial Industroplan‑200K dataset, which provides video demonstrations, explicit task goals, and chain‑of‑thought annotations. This stage aligns the model’s reasoning with long‑horizon planning requirements and teaches it to generate step‑by‑step explanations.
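The summary does not say how the dynamic sampler turns validation loss into sampling weights. One plausible scheme, shown here purely as an assumption, is a softmax over per-task validation losses so that tasks with higher loss are drawn more often. The task names, the `temperature` parameter, and both function names are hypothetical.

```python
import math
import random
from typing import Dict


def loss_based_weights(val_losses: Dict[str, float], temperature: float = 1.0) -> Dict[str, float]:
    """Map per-task validation losses to sampling probabilities via a softmax.

    Assumption: higher validation loss means the task should be sampled more.
    """
    if not val_losses:
        raise ValueError("val_losses must not be empty")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    max_loss = max(val_losses.values())  # subtract the max for numerical stability
    exps = {task: math.exp((loss - max_loss) / temperature) for task, loss in val_losses.items()}
    total = sum(exps.values())
    return {task: value / total for task, value in exps.items()}


def sample_task(weights: Dict[str, float], rng: random.Random) -> str:
    """Draw one task name according to the given probabilities."""
    tasks = list(weights)
    return rng.choices(tasks, weights=[weights[t] for t in tasks], k=1)[0]


# Hypothetical validation losses for three of the data streams.
val_losses = {"grounding": 0.8, "ego_view": 1.2, "planning": 1.6}
weights = loss_based_weights(val_losses)
rng = random.Random(0)
next_task = sample_task(weights, rng)
```

Recomputing the weights after each validation pass would let the mixture shift toward whichever stream is currently lagging; a larger `temperature` flattens the distribution toward uniform sampling.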

On two widely used robotic benchmarks, Thinker reports the best results among the compared models. On RoboVQA, Thinker‑7B achieves the highest BLEU‑1 to BLEU‑4 scores (72.7, 65.7, 59.5, 56.0) and an average BLEU of 63.5, surpassing the previous best model by 0.8 points. On Egoplan‑bench2, it attains a Top‑1 accuracy of 58.2%, outperforming all open‑source and proprietary baselines, including GPT‑4V. The authors attribute these gains to (a) the combined key‑frame/video input strategy that preserves end‑of‑video information, (b) the extensive ego‑view and spatial grounding data that teach the model an egocentric coordinate system, and (c) the two‑stage training pipeline that first builds general embodied capabilities and then specializes them for complex industrial tasks.
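As a quick consistency check on the RoboVQA numbers, the reported average can be reproduced as the arithmetic mean of the four BLEU scores quoted above. Treating the average as a plain mean is an assumption; the summary does not state how it was computed.

```python
# BLEU-1 through BLEU-4 as quoted in the summary.
bleu_scores = [72.7, 65.7, 59.5, 56.0]

# Assumption: the "average BLEU" is the unweighted mean of the four scores.
average_bleu = sum(bleu_scores) / len(bleu_scores)
rounded_average = round(average_bleu, 1)
```

The mean is 63.475, which rounds to the reported 63.5.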

From an engineering perspective, the paper details a large‑scale multi‑task training infrastructure that normalizes heterogeneous examples into a unified task‑aware schema, employs shared loading and selective freezing to reduce memory pressure, and implements automated monitoring, fault tolerance, and checkpointing for robust long‑duration runs.
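A unified task-aware schema of the kind described here might look like the following sketch. The summary gives no field names or record layouts, so everything below is hypothetical: the `UnifiedExample` fields, the raw record keys, the prompt wording, and the two task names are assumptions chosen only to show how heterogeneous examples can be mapped to one shape.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class UnifiedExample:
    """One training example in a shared, task-tagged format (hypothetical)."""
    task: str
    media: List[str]
    prompt: str
    target: str
    meta: Dict[str, Any] = field(default_factory=dict)


def normalize_grounding(raw: Dict[str, Any]) -> UnifiedExample:
    """Convert an image-plus-bounding-box record into the shared schema."""
    return UnifiedExample(
        task="grounding",
        media=[raw["image"]],
        prompt=f"Locate the {raw['category']}.",
        target=json.dumps(raw["bbox"]),
    )


def normalize_planning(raw: Dict[str, Any]) -> UnifiedExample:
    """Convert a video-plus-steps record into the shared schema."""
    steps = "\n".join(f"{i}. {step}" for i, step in enumerate(raw["steps"], start=1))
    return UnifiedExample(
        task="planning",
        media=[raw["video"]],
        prompt=f"Goal: {raw['goal']}. List the steps.",
        target=steps,
    )


NORMALIZERS: Dict[str, Callable[[Dict[str, Any]], UnifiedExample]] = {
    "grounding": normalize_grounding,
    "planning": normalize_planning,
}


def normalize(task: str, raw: Dict[str, Any]) -> UnifiedExample:
    """Dispatch a raw record to the normalizer registered for its task."""
    if task not in NORMALIZERS:
        raise ValueError(f"unknown task: {task}")
    return NORMALIZERS[task](raw)


grounding_example = normalize(
    "grounding", {"image": "a.jpg", "category": "cup", "bbox": [10, 20, 110, 220]}
)
planning_example = normalize(
    "planning", {"video": "v.mp4", "goal": "pack the box", "steps": ["pick item", "place item"]}
)
```

Keeping the task tag on every example is what would let a single data loader serve all streams while a sampler or loss function still distinguishes between them.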

In conclusion, Thinker represents a significant step toward a unified vision‑language model that can perceive, reason, and plan from a robot’s own viewpoint. The authors plan to release the model weights and code, and to explore extensions such as world models and video‑language‑action integration, positioning Thinker as a foundational platform for future embodied AI research.
