OMP: One-step Meanflow Policy with Directional Alignment

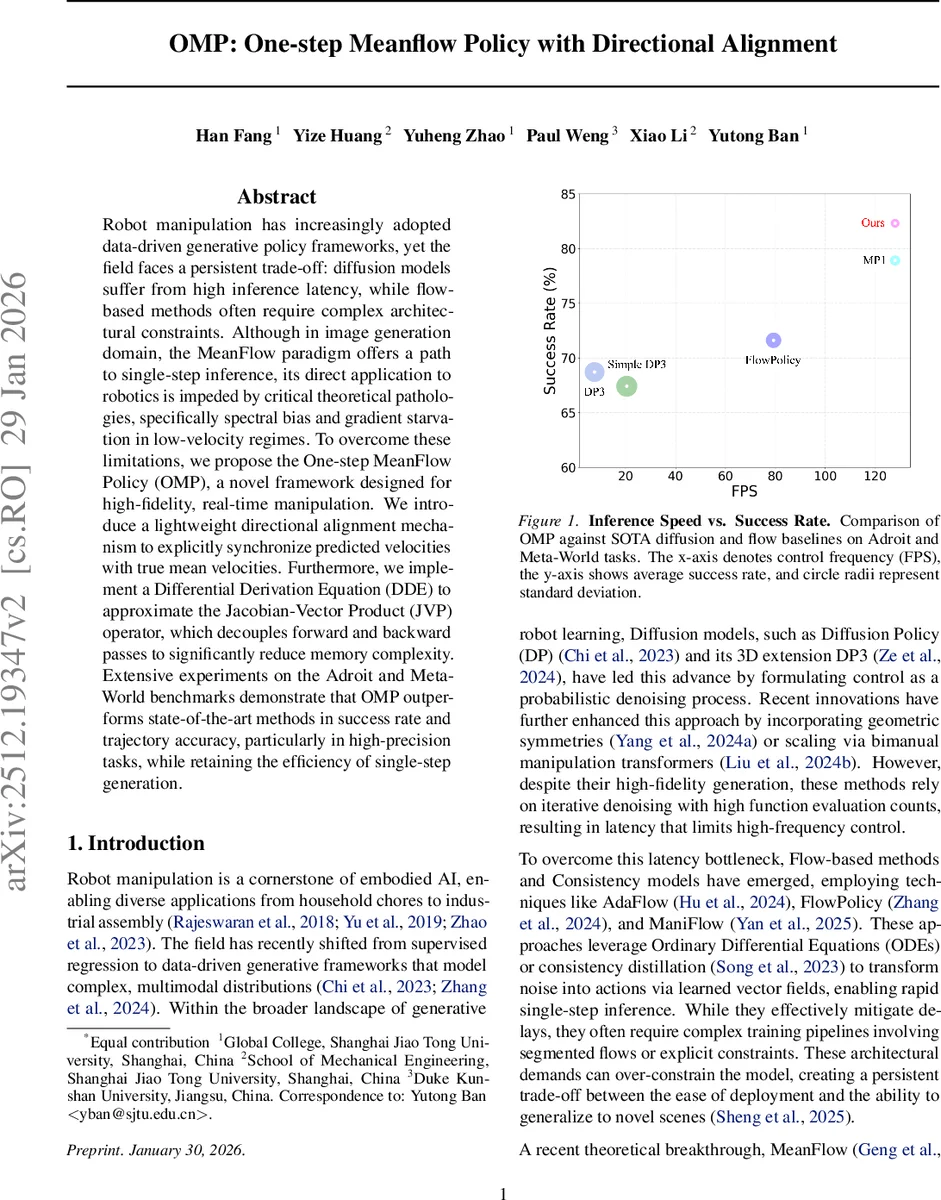

Robot manipulation has increasingly adopted data-driven generative policy frameworks, yet the field faces a persistent trade-off: diffusion models suffer from high inference latency, while flow-based methods often require complex architectural constraints. Although the MeanFlow paradigm offers a path to single-step inference in the image generation domain, its direct application to robotics is impeded by critical theoretical pathologies, specifically spectral bias and gradient starvation in low-velocity regimes. To overcome these limitations, we propose the One-step MeanFlow Policy (OMP), a novel framework designed for high-fidelity, real-time manipulation. We introduce a lightweight directional alignment mechanism to explicitly synchronize predicted velocities with true mean velocities. Furthermore, we implement a Differential Derivation Equation (DDE) to approximate the Jacobian-Vector Product (JVP) operator, which decouples forward and backward passes to significantly reduce memory complexity. Extensive experiments on the Adroit and Meta-World benchmarks demonstrate that OMP outperforms state-of-the-art methods in success rate and trajectory accuracy, particularly in high-precision tasks, while retaining the efficiency of single-step generation.

💡 Research Summary

The paper introduces OMP (One-step MeanFlow Policy), a generative policy framework that brings the main theoretical advantage of MeanFlow, single-step inference via interval-averaged velocity prediction, to real-time robot manipulation, while addressing three shortcomings that have limited its prior use.

First, the authors identify a spectral bias inherent to the MeanFlow objective: because the target average velocity is obtained by integrating instantaneous dynamics over a time interval, the operation acts as a low-pass filter (division by iω in the frequency domain), attenuating the power of high-frequency components by a factor of 1/ω². As a result, the fine-grained, rapid adjustments required for high-precision tasks receive little training signal. Second, in low-velocity regimes the magnitude of the target average velocity becomes very small, causing the loss gradient to vanish (gradient starvation). Learning therefore stalls or proceeds extremely slowly when the robot must execute slow, delicate motions. Third, computing the exact Jacobian-Vector Product (JVP) needed for the MeanFlow identity traditionally relies on nested automatic differentiation, which scales memory linearly with the tangent dimension and quickly exhausts GPU resources for modern large backbones.

To overcome these issues, OMP incorporates two key innovations.

(1) Directional Alignment: a cosine-similarity term is added to the loss to explicitly align the direction of the predicted average velocity (\hat{u}) with the true mean velocity (\hat{v}). This term supplies a strong directional gradient even when the speed is near zero, mitigating gradient starvation and compensating for the low-frequency bias by forcing the network to learn correct orientation across the full spectrum.

(2) Differential Derivation Equation (DDE): the JVP is approximated via a finite-difference scheme (\partial f/\partial x \cdot v \approx
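The two ideas described above can be illustrated with a minimal sketch in plain Python. It is not the authors' implementation. The function names (`cosine_alignment_loss`, `mse_loss`, `finite_difference_jvp`) are hypothetical, and because the summary's formula is cut off, the central-difference form and the step size `eps` are assumptions made here for illustration. The sketch shows that a cosine-based term stays informative when velocities are tiny, and that a JVP can be estimated from forward evaluations alone, without nested automatic differentiation.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def l2_norm(x: Vector) -> float:
    """Euclidean norm of a vector."""
    return math.sqrt(sum(xi * xi for xi in x))


def cosine_alignment_loss(u_pred: Vector, u_target: Vector, eps: float = 1e-12) -> float:
    """Directional term: 1 - cos(u_pred, u_target).

    Returns 0 when the vectors point the same way and 2 when they are opposite.
    The value does not depend on the vectors' magnitudes (hypothetical helper).
    """
    dot = sum(p * t for p, t in zip(u_pred, u_target))
    # Clamp each norm so a zero vector does not cause a division by zero.
    denom = max(l2_norm(u_pred), eps) * max(l2_norm(u_target), eps)
    return 1.0 - dot / denom


def mse_loss(u_pred: Vector, u_target: Vector) -> float:
    """Plain mean-squared error, for comparison with the directional term."""
    return sum((p - t) ** 2 for p, t in zip(u_pred, u_target)) / len(u_pred)


def finite_difference_jvp(
    f: Callable[[Vector], Sequence[float]],
    x: Vector,
    v: Vector,
    eps: float = 1e-4,
) -> List[float]:
    """Estimate (df/dx) @ v with a central difference (assumed scheme).

    Only two forward evaluations of f are needed; no autodiff graph is built.
    """
    x_plus = [xi + eps * vi for xi, vi in zip(x, v)]
    x_minus = [xi - eps * vi for xi, vi in zip(x, v)]
    f_plus, f_minus = f(x_plus), f(x_minus)
    return [(a - b) / (2.0 * eps) for a, b in zip(f_plus, f_minus)]


if __name__ == "__main__":
    # Low-velocity regime: the prediction points the wrong way, but both vectors are tiny.
    pred, target = [1e-4, 0.0], [-1e-4, 0.0]
    print("MSE:", mse_loss(pred, target))
    print("directional loss:", cosine_alignment_loss(pred, target))

    # Toy function f(x) = [x0^2, x0 * x1]; its exact JVP at (1, 2) along (1, 1) is [2, 3].
    toy = lambda x: [x[0] ** 2, x[0] * x[1]]
    print("finite-difference JVP:", finite_difference_jvp(toy, [1.0, 2.0], [1.0, 1.0]))
```

In the low-velocity example the squared error is on the order of the squared magnitudes and therefore close to zero, while the directional term takes its maximum value because the two vectors are opposite. The finite-difference estimate trades exactness for memory: its accuracy depends on `eps`, which would need tuning for a real network.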
