Corrective Diffusion Language Models

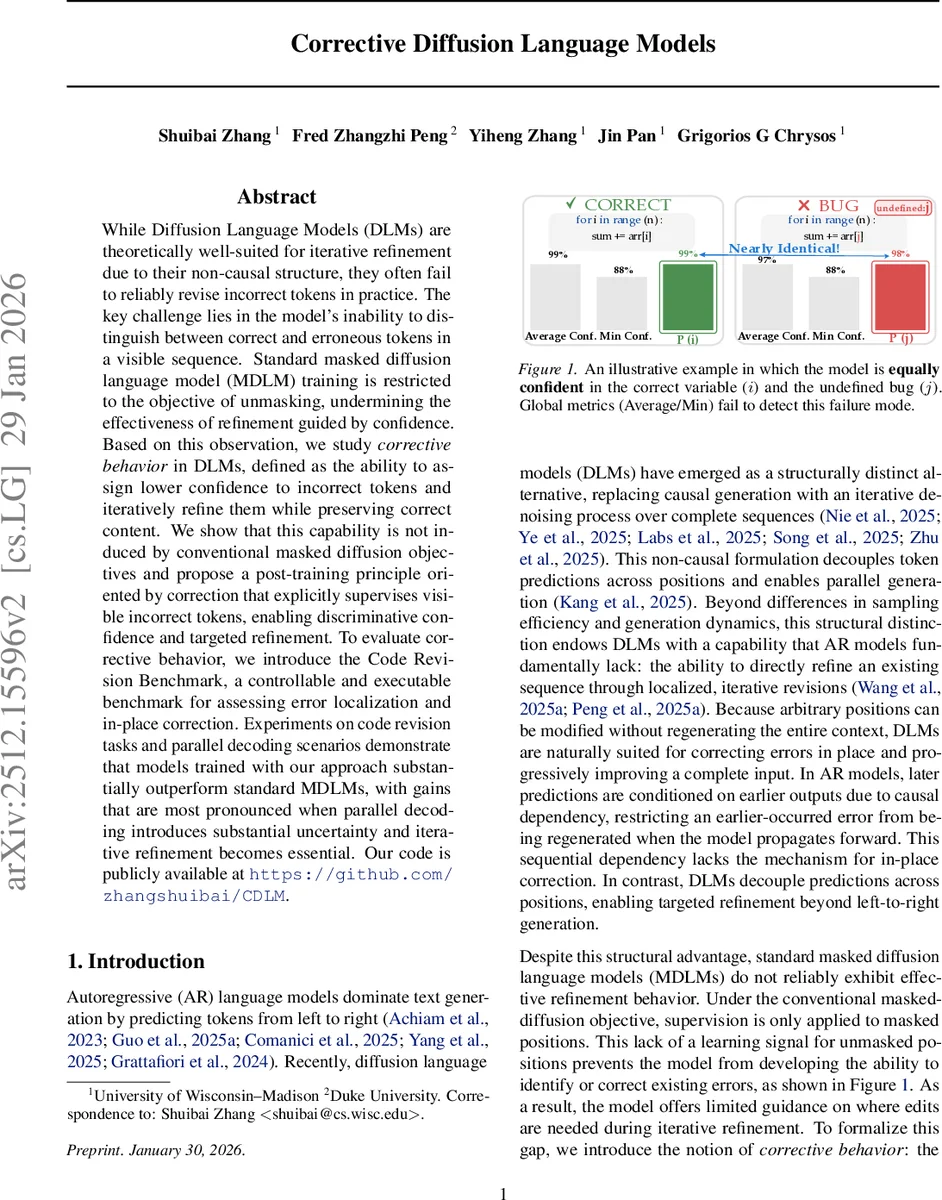

While Diffusion Language Models (DLMs) are theoretically well-suited for iterative refinement due to their non-causal structure, they often fail to reliably revise incorrect tokens in practice. The key challenge lies in the model’s inability to distinguish between correct and erroneous tokens in a visible sequence. Standard masked diffusion language model (MDLM) training is restricted to the objective of unmasking, undermining the effectiveness of refinement guided by confidence. Based on this observation, we study corrective behavior in DLMs, defined as the ability to assign lower confidence to incorrect tokens and iteratively refine them while preserving correct content. We show that this capability is not induced by conventional masked diffusion objectives and propose a post-training principle oriented by correction that explicitly supervises visible incorrect tokens, enabling discriminative confidence and targeted refinement. To evaluate corrective behavior, we introduce the Code Revision Benchmark, a controllable and executable benchmark for assessing error localization and in-place correction. Experiments on code revision tasks and parallel decoding scenarios demonstrate that models trained with our approach substantially outperform standard MDLMs, with gains that are most pronounced when parallel decoding introduces substantial uncertainty and iterative refinement becomes essential. Our code is publicly available at https://github.com/zhangshuibai/CDLM.

💡 Research Summary

The paper investigates why current Masked Diffusion Language Models (MDLMs) struggle to reliably correct erroneous tokens despite the theoretical suitability of diffusion language models (DLMs) for iterative refinement. The core issue is that MDLM training only supervises masked positions, leaving visible tokens—whether correct or incorrect—without any learning signal. Consequently, the model does not develop discriminative token‑level confidence that can separate correct from incorrect tokens, limiting its ability to locate and fix errors during iterative refinement.

To address this gap, the authors define “corrective behavior” as the ability of a model to assign lower confidence to erroneous tokens in a full sequence, then iteratively remask and regenerate those low‑confidence positions while preserving correct content. They demonstrate that conventional masked‑diffusion objectives do not induce this behavior.

The paper proposes a post‑training corrective principle that augments the standard absorbing‑uniform mixture objective with an explicit loss on visible corrupted tokens. By supervising both masked and visible‑error tokens, the model learns error‑aware confidence scores and can prioritize edits on unreliable content. This approach is lightweight, requiring only a short fine‑tuning phase after the original large‑scale pre‑training.

To evaluate corrective behavior, the authors introduce the Code Revision Benchmark (CRB). CRB builds on established code generation datasets (HumanEval, MBPP) and injects type‑preserving corruptions (operator, identifier, literal substitutions) with controllable difficulty (single‑error to multi‑error settings). Each corrupted program is executed against a deterministic grader to ensure that it truly fails, guaranteeing that every benchmark instance contains a genuine syntactic or semantic fault. This design enables precise measurement of error localization (confidence gap, Top‑K hit rates) and iterative in‑place correction (Pass@1 after a fixed number of refinement steps).

Experiments compare several publicly available DLMs—Dream‑7B‑Base, LLaD‑A‑8B‑Base, and Open‑dCoder‑0.5B—under both the standard MDLM training and the proposed CDLM (Corrective Diffusion Language Model) fine‑tuning. Results show that CDLMs achieve a substantially larger confidence gap between clean and erroneous tokens and higher Top‑1/Top‑5 hit rates, indicating better error localization. In iterative refinement, CDLMs consistently outperform MDLMs across 1, 2, and 4 refinement steps, with the advantage growing as the number of corrupted tokens increases. The gains are especially pronounced in parallel decoding scenarios where uncertainty is high, demonstrating that correction‑aware training not only improves targeted revision but also boosts overall generation quality.

In summary, the work makes three main contributions: (1) a controllable, executable benchmark (CRB) for systematic assessment of error localization and in‑place correction; (2) a simple yet effective post‑training corrective principle that endows diffusion language models with discriminative confidence and targeted refinement capabilities; and (3) empirical evidence that Corrective Diffusion Language Models substantially outperform standard MDLMs on code revision tasks and high‑uncertainty decoding settings. The findings suggest that diffusion models can be transformed from purely generative engines into error‑aware, self‑correcting systems, opening avenues for applying the corrective principle to other domains such as natural language text or multimodal generation, and for integrating real‑time human‑machine collaborative editing workflows.

Comments & Academic Discussion

Loading comments...

Leave a Comment