Calibrating Decision Robustness via Inverse Conformal Risk Control

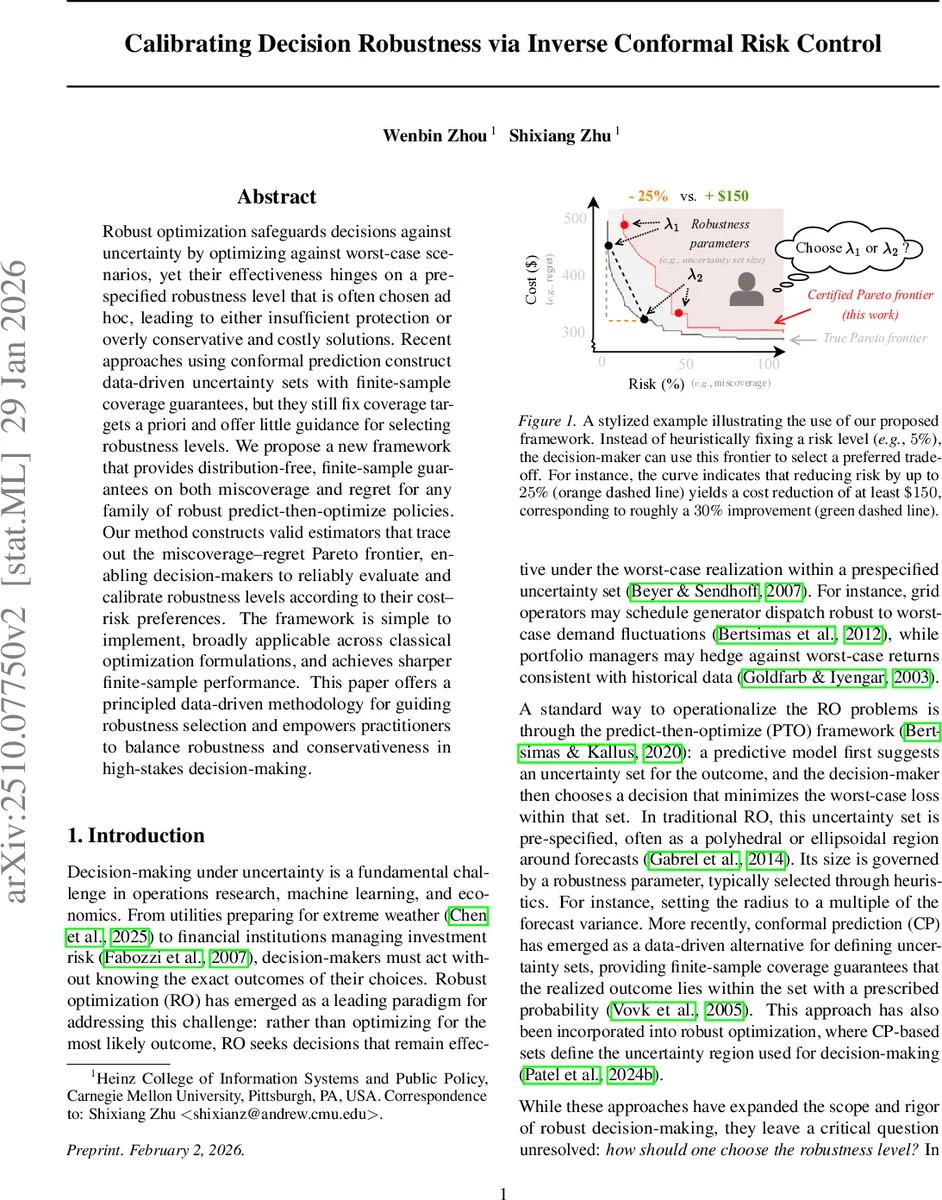

Robust optimization safeguards decisions against uncertainty by optimizing against worst-case scenarios, yet its effectiveness hinges on a prespecified robustness level that is often chosen ad hoc, leading to either insufficient protection or overly conservative and costly solutions. Recent approaches using conformal prediction construct data-driven uncertainty sets with finite-sample coverage guarantees, but they still fix coverage targets a priori and offer little guidance for selecting robustness levels. We propose a new framework that provides distribution-free, finite-sample guarantees on both miscoverage and regret for any family of robust predict-then-optimize policies. Our method constructs valid estimators that trace out the miscoverage–regret Pareto frontier, enabling decision-makers to reliably evaluate and calibrate robustness levels according to their cost–risk preferences. The framework is simple to implement, broadly applicable across classical optimization formulations, and achieves sharper finite-sample performance. This paper offers a principled data-driven methodology for guiding robustness selection and empowers practitioners to balance robustness and conservativeness in high-stakes decision-making.

💡 Research Summary

The paper tackles a fundamental practical problem in robust optimization (RO): how to choose the robustness parameter λ that determines the size of the uncertainty set \(U_\lambda(X)\). Traditional RO fixes λ heuristically, which often leads either to insufficient protection (high miscoverage) or to overly conservative decisions (high regret). Recent work has introduced conformal prediction (CP) to build data‑driven uncertainty sets with finite‑sample coverage guarantees, yet CP still requires the practitioner to pre‑specify a target coverage level and offers no principled guidance for selecting λ.

To fill this gap, the authors propose an “inverse conformal risk control” (inverse CRC) framework. Instead of prescribing a risk level and then constructing a set that satisfies it (the usual CRC direction), they fix an uncertainty set (i.e., a specific λ) and ask: what is the actual risk incurred? Two performance metrics are defined for any (X,Y) pair:

- Miscoverage indicator \(I_\lambda(X,Y)=\mathbf{1}\{Y\notin U_\lambda(X)\}\), which records whether the realized outcome falls outside the set.

- Regret \(R_\lambda(X,Y)=f(Y,z^\lambda(X))-\min_{z\in Z}f(Y,z)\), the excess cost of the robust decision \(z^\lambda(X)=\arg\min_{z}\max_{y\in U_\lambda(X)}f(y,z)\) relative to the oracle that knows Y.
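The two metrics above can be illustrated with a minimal Python sketch. Everything concrete in it is an assumption made for illustration and does not come from the paper: the newsvendor-style cost `cost`, the interval uncertainty set \([c-\lambda,\,c+\lambda]\) around a hypothetical point prediction `center`, the finite decision grid `z_grid`, and all function names. The averages printed at the end are plain sample means over synthetic data, not the finite-sample-valid estimators the paper constructs.

```python
import numpy as np

def cost(y, z, c_over=1.0, c_under=3.0):
    """Assumed newsvendor-style cost f(y, z): overage plus underage penalty."""
    return c_over * np.maximum(z - y, 0.0) + c_under * np.maximum(y - z, 0.0)

def robust_decision(center, lam, z_grid):
    """argmin over z_grid of max_{y in [center - lam, center + lam]} f(y, z).

    f is convex in y, so the inner maximum is attained at an interval endpoint.
    """
    lo, hi = center - lam, center + lam
    worst_case = np.maximum(cost(lo, z_grid), cost(hi, z_grid))
    return z_grid[np.argmin(worst_case)]

def miscoverage_and_regret(center, y, lam, z_grid):
    """Return (I_lambda, R_lambda) for one realized outcome y."""
    miss = float(abs(y - center) > lam)             # 1 if y falls outside the set
    z_rob = robust_decision(center, lam, z_grid)    # robust decision z^lambda
    regret = float(cost(y, z_rob) - cost(y, z_grid).min())  # gap to the oracle
    return miss, regret

# Synthetic data: stand-in point predictions plus Gaussian noise (assumption).
z_grid = np.linspace(0.0, 20.0, 201)
rng = np.random.default_rng(0)
centers = rng.uniform(5.0, 15.0, size=500)
outcomes = centers + rng.normal(0.0, 1.0, size=500)

for lam in (0.5, 1.0, 2.0, 3.0):
    pairs = np.array([miscoverage_and_regret(c, y, lam, z_grid)
                      for c, y in zip(centers, outcomes)])
    print(f"lambda={lam}: mean miscoverage={pairs[:, 0].mean():.3f}, "
          f"mean regret={pairs[:, 1].mean():.3f}")
```

Because the intervals are nested in λ, the miscoverage indicator can only decrease as λ grows, while regret reflects the cost of hedging against a wider set. Sweeping λ in this way gives a rough empirical picture of the trade-off that the paper's estimators are meant to bound.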

The goal is to construct, for every λ in a candidate set Λ, upper‑confidence estimates \(\hat\alpha_I(\lambda)\) and \(\hat\alpha_R(\lambda)\) that satisfy

(\hat\alpha_I(\lambda)\ge \mathbb{E}
