A Formal Comparison Between Chain of Thought and Latent Thought

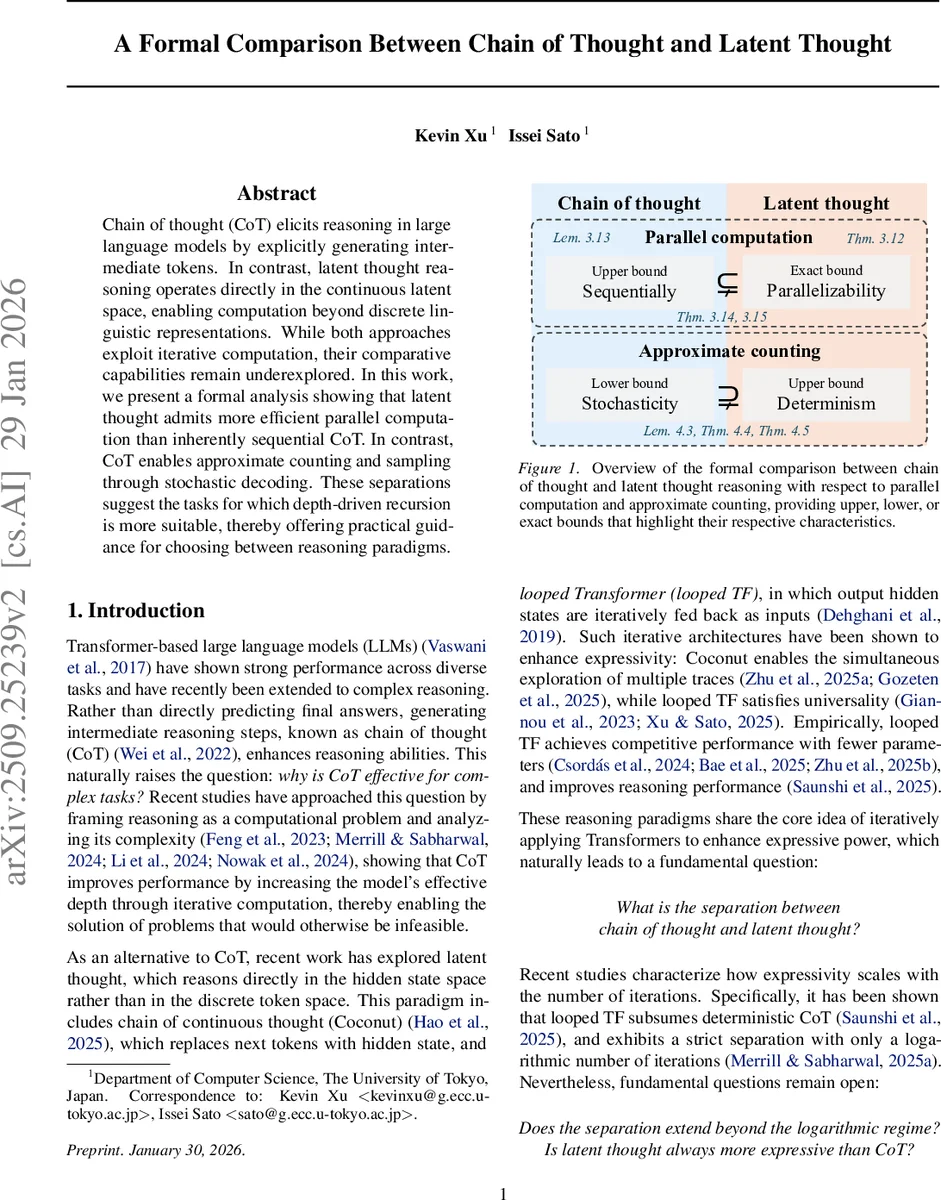

Chain of thought (CoT) elicits reasoning in large language models by explicitly generating intermediate tokens. In contrast, latent thought reasoning operates directly in the continuous latent space, enabling computation beyond discrete linguistic representations. While both approaches exploit iterative computation, their comparative capabilities remain underexplored. In this work, we present a formal analysis showing that latent thought admits more efficient parallel computation than inherently sequential CoT. In contrast, CoT enables approximate counting and sampling through stochastic decoding. These separations suggest the tasks for which depth-driven recursion is more suitable, thereby offering practical guidance for choosing between reasoning paradigms.

💡 Research Summary

The paper presents a formal, complexity‑theoretic comparison between two major reasoning paradigms for large language models (LLMs): Chain of Thought (CoT), which generates explicit intermediate tokens, and Latent Thought, which operates directly in the continuous hidden‑state space (embodied by Coconut and looped Transformer architectures). Both paradigms increase the effective depth of a transformer by applying it iteratively, but they differ fundamentally in how computation is organized and what computational resources they exploit.

Key Contributions

- Formal Definitions – The authors rigorously define CoT as a decoder‑only transformer that concatenates newly generated tokens at each step, and define Coconut and looped TF as models that update hidden vectors without producing explicit tokens.

- Problem Formalization – Reasoning tasks are modeled as the evaluation of directed acyclic graphs (DAGs). The size of the graph (number of nodes) and its depth (longest path) become the natural metrics for the number of steps required by CoT and Latent Thought, respectively.

- Parallelism vs. Sequentiality – Theorem 3.5 shows that a CoT model can simulate any poly‑size DAG in O(size(G)) steps by using tokens as a scratchpad. Theorem 3.6 proves that a latent‑thought model can evaluate the same DAG in O(depth(G)) iterations, because hidden states can encode the outputs of all nodes at a given depth simultaneously. This yields a strict separation in the polylogarithmic regime: with log^k n iterations, latent thought exactly captures the power of the circuit class TC^k, whereas CoT cannot realize the full class (Theorems 3.12–3.15).

- Approximate Counting – While deterministic latent thought is limited to exact computation, CoT’s stochastic decoding enables fully polynomial‑time randomized approximation schemes (FPRAS) for #P‑complete counting problems (Theorem 4.3). By leveraging classic results linking approximate counting to sampling, the authors further demonstrate that there exist target distributions that CoT can approximate and sample from, but latent thought cannot (Theorem 4.4). This is the first formal separation favoring CoT.

- Practical Trade‑offs – The paper discusses hardware implications: CoT benefits from KV‑caching, making each step cheap but memory‑bound; latent thought recomputes the full sequence each iteration, increasing arithmetic intensity and better utilizing modern parallel accelerators. Consequently, latency can be comparable despite differing computational profiles.

Implications and Guidance

- Depth‑driven (parallel) tasks such as evaluating wide, shallow circuits, large‑scale logical simulations, or any problem where the DAG depth is small relative to its size are best served by latent‑thought approaches, which achieve the required computation in far fewer iterations.

- Probabilistic or counting‑heavy tasks where an approximate answer suffices (e.g., partition function estimation, combinatorial counting, sampling from complex distributions) benefit from CoT’s stochastic decoding and its ability to implement FPRAS.

Conclusion

The work bridges the gap between theoretical computer science and modern LLM reasoning, showing that CoT and latent thought are not merely stylistic variants but possess distinct computational powers. Latent thought excels in parallel efficiency, matching the expressive power of uniform TC^k circuits with minimal iterations, while CoT uniquely supports randomized approximation schemes. The authors suggest future directions such as hybrid models that combine token‑level scratchpad memory with latent parallelism, and empirical validation of the theoretical bounds on real‑world LLM hardware.

Comments & Academic Discussion

Loading comments...

Leave a Comment