Learning What To Hear: Boosting Sound-Source Association For Robust Audiovisual Instance Segmentation

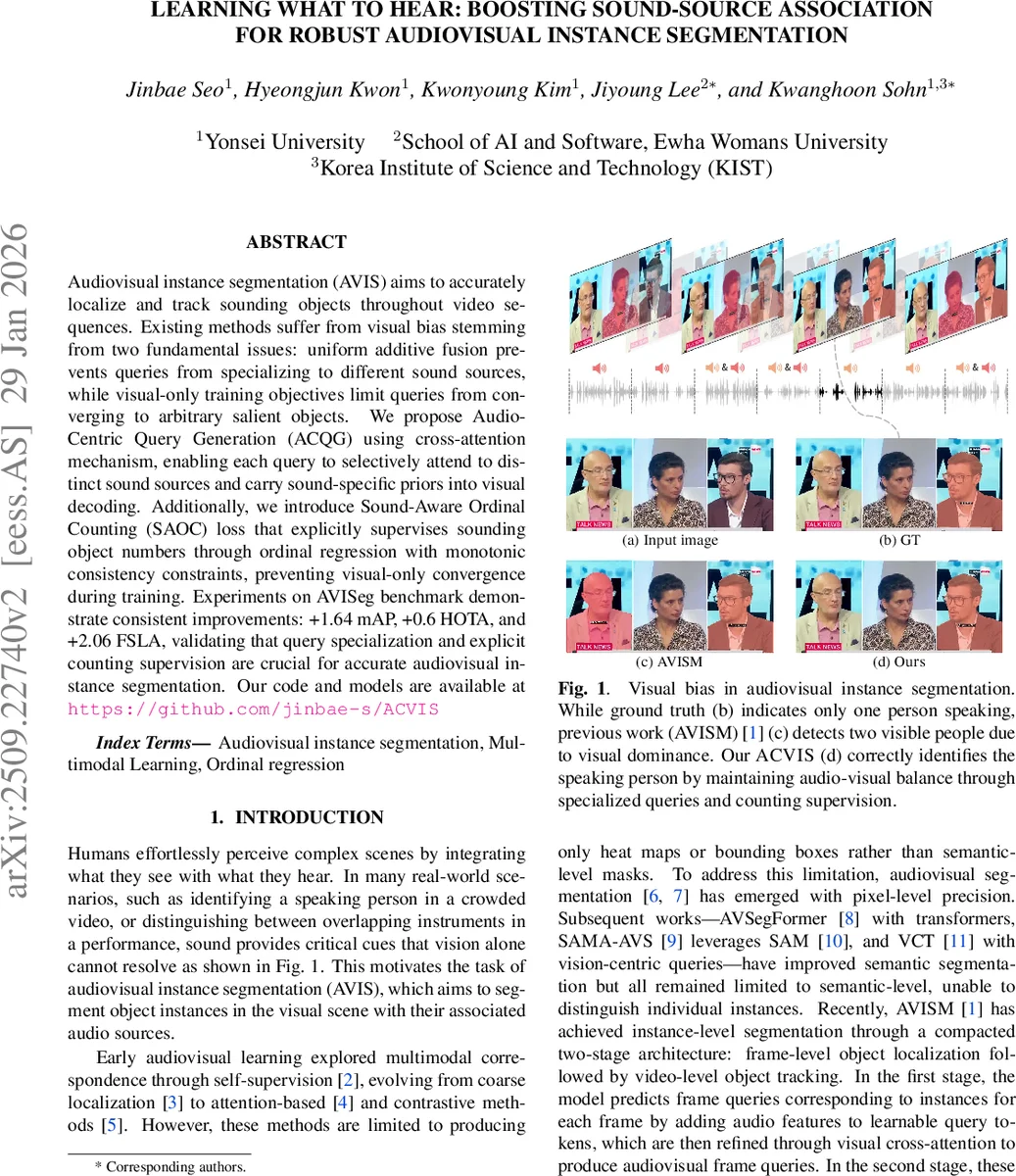

Audiovisual instance segmentation (AVIS) requires accurately localizing and tracking sounding objects throughout video sequences. Existing methods suffer from visual bias stemming from two fundamental issues: uniform additive fusion prevents queries from specializing to different sound sources, while visual-only training objectives allow queries to converge to arbitrary salient objects. We propose Audio-Centric Query Generation using cross-attention, enabling each query to selectively attend to distinct sound sources and carry sound-specific priors into visual decoding. Additionally, we introduce Sound-Aware Ordinal Counting (SAOC) loss that explicitly supervises sounding object numbers through ordinal regression with monotonic consistency constraints, preventing visual-only convergence during training. Experiments on AVISeg benchmark demonstrate consistent improvements: +1.64 mAP, +0.6 HOTA, and +2.06 FSLA, validating that query specialization and explicit counting supervision are crucial for accurate audiovisual instance segmentation.

💡 Research Summary

The paper tackles a fundamental limitation of current audiovisual instance segmentation (AVIS) systems: a strong visual bias that causes models to focus on visually salient objects rather than the true sound‑producing entities. Existing approaches, exemplified by AVISM, fuse audio features into all queries via a simple additive operation and rely solely on visual losses (mask and classification), which together prevent queries from specializing to distinct sound sources and lead to over‑segmentation of non‑audible objects.

To overcome these issues, the authors introduce two complementary components. First, Audio‑Centric Query Generation (ACQG) replaces additive fusion with a cross‑attention module. For each video frame, a set of learnable frame queries is combined with the audio embedding through three cross‑attention layers, where the audio feature serves as both key and value. This mechanism enables each query to attend selectively to different temporal‑frequency patterns in the audio signal, producing “audio‑centric” queries that carry source‑specific priors into the visual decoder. Consequently, queries become capable of representing distinct sound sources, even when multiple instances of the same semantic class are simultaneously audible.

Second, the Sound‑Aware Ordinal Counting (SAOC) loss explicitly supervises the number of sounding objects in each frame. A learnable count token is concatenated with the frame queries and passed through a linear head that predicts a series of conditional probabilities p_k (k = 0…K_max‑1). These probabilities correspond to “there are more than k objects” and are trained against binary ordinal targets derived from the ground‑truth count N_obj using a binary cross‑entropy formulation. Because the loss is built on conditional probabilities, it enforces monotonic consistency (P(>k) ≥ P(>k+1)), providing stable gradients and ensuring that the model’s predicted counts are logically coherent. By penalizing deviations from the true number of audible objects, SAOC forces the decoder to activate only those queries that correspond to real sound sources, thereby suppressing visual‑only activations.

The overall architecture retains the two‑stage design of AVISM: a frame‑level object localizer followed by a video‑level tracker. The localizer now incorporates ACQG and the SAOC token, while the tracker aggregates frame‑level queries across a temporal window and performs Hungarian matching to produce final instance trajectories. Training optimizes a weighted sum of the original AVIS losses (frame‑level mask, video‑level mask, and query alignment) together with the SAOC loss (λ_SAOC = 1.0).

Extensive experiments on the AVISeg benchmark (926 videos, 26 categories) demonstrate consistent improvements. ACVIs achieves mAP = 46.68 (+1.64), HOTA = 65.12 (+0.60), and FSLA = 46.48 (+2.06) over the baseline AVISM (mAP = 45.04, HOTA = 64.52, FSLA = 44.42). The gains are especially pronounced in multi‑source frames, where FSLA for multi‑source scenarios improves by 3.82 points, indicating that the model can correctly separate overlapping sound sources. Ablation studies show that ACQG alone yields +1.13 mAP, SAOC alone yields +1.51 mAP, and their combination yields the full +1.64 mAP, confirming that the two components provide complementary benefits. The ordinal counting loss is most effective with K_max = 2, matching the typical number of concurrent sound sources in the dataset; larger K_max values degrade performance. Pre‑training the visual backbone on ImageNet + COCO improves results further, and using a stronger Swin‑L backbone is predicted to yield additional gains.

In conclusion, the paper presents a clear and effective strategy for mitigating visual dominance in AVIS. By introducing audio‑centric query specialization via cross‑attention and supervising the global count of audible objects through an ordinal regression loss, the method achieves a more balanced multimodal fusion, leading to better instance segmentation and tracking in complex auditory scenes. The proposed modules are lightweight, easily integrated into existing transformer‑based AVIS pipelines, and open avenues for future work on richer multimodal reasoning, real‑time deployment, and extension to other tasks such as audio‑guided video editing or robotics perception.

Comments & Academic Discussion

Loading comments...

Leave a Comment