Large Vision Models Can Solve Mental Rotation Problems

Mental rotation is a key test of spatial reasoning in humans and has been central to understanding how perception supports cognition. Despite the success of modern vision transformers, it is still unclear how well these models develop similar abilities. In this work, we present a systematic evaluation of ViT, CLIP, DINOv2, and DINOv3 across a range of mental-rotation tasks, from simple block structures similar to those used by Shepard and Metzler to study human cognition, to more complex block figures, three types of text, and photo-realistic objects. By probing model representations layer by layer, we examine where and how these networks succeed. We find that i) self-supervised ViTs capture geometric structure better than supervised ViTs; ii) intermediate layers perform better than final layers; iii) task difficulty increases with rotation complexity and occlusion, mirroring human reaction times and suggesting similar constraints in embedding space representations.

💡 Research Summary

The paper investigates whether modern large‑scale Vision Transformers (ViT, CLIP, DINOv2, DINOv3) possess the kind of spatial reasoning that underlies the classic mental‑rotation task used in cognitive psychology. The authors construct a comprehensive benchmark that spans three families of data: (1) synthetic Shepard‑Metzler block objects, (2) textual strings (common English words, random character sequences, and artificial symbols rendered in a pseudo‑Sloan font), and (3) photo‑realistic tabletop scenes of fruit captured from multiple viewpoints. For each family, 20 000 balanced image pairs are generated; a positive pair shows the same object under a 3‑D rotation, while a negative pair shows a mirrored version. Difficulty is systematically varied: the block objects include a “±0°” condition (identical elevation, differing azimuth) and a “Free” condition (random elevation and azimuth), the text strings are randomly rotated and flipped, and the fruit scenes are rendered at camera elevations of 30°, 70°, and 90° with optional occlusion.

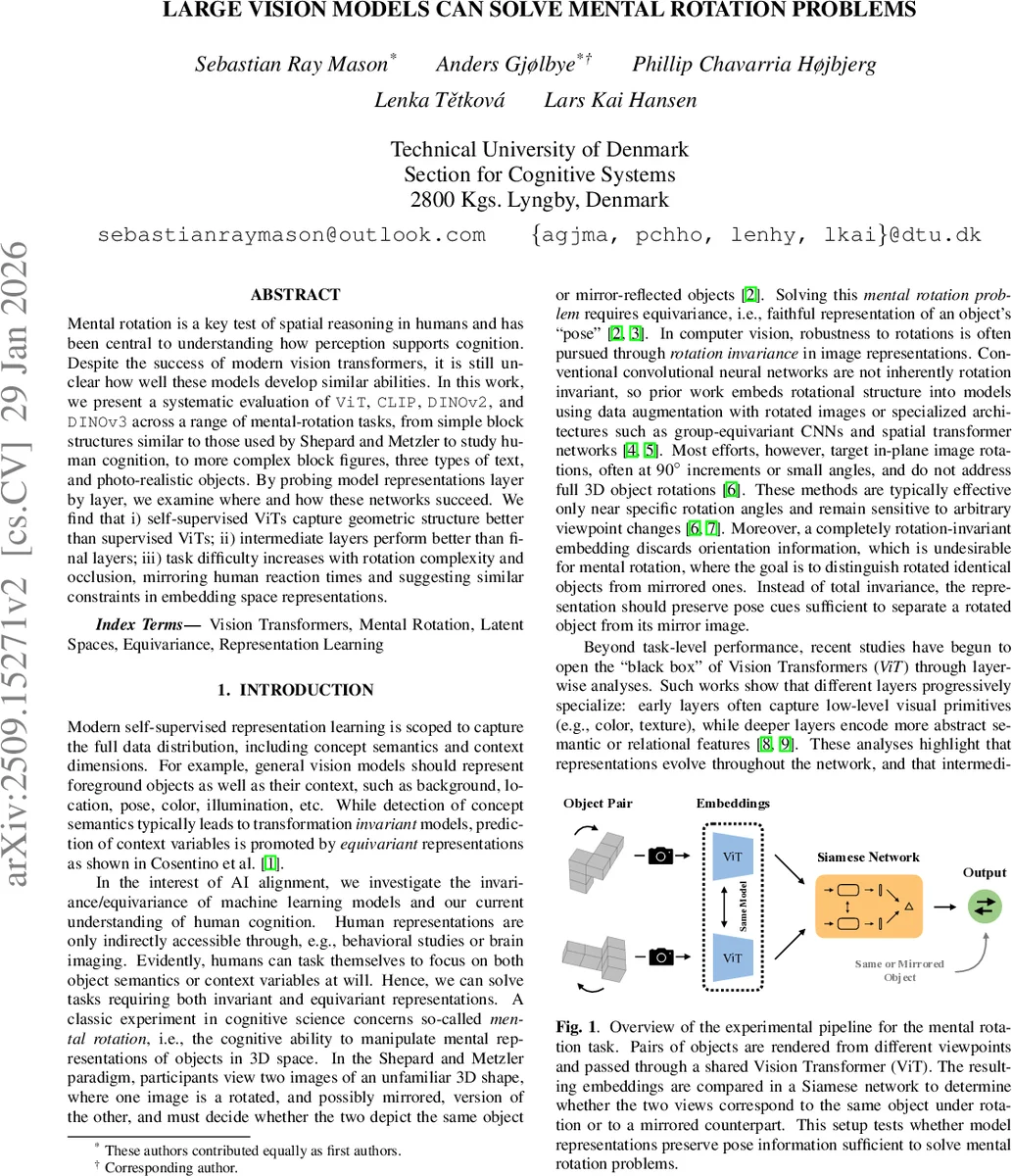

Four pre‑trained Vision Transformer families are evaluated: (i) supervised ViT (ImageNet‑21K), (ii) CLIP (image‑text contrastive learning on LAION‑2B), (iii) DINOv2 (self‑supervised teacher‑student on 142 M images), and (iv) DINOv3 (scaled self‑supervised on 1.7 B images with an added Gram‑anchoring loss). Each family is examined at three model sizes (Base, Large, Huge). For every transformer layer, the authors average the patch tokens to obtain a single embedding vector, then train a lightweight Siamese MLP classifier on the embedding pairs. The classifier computes an ℓ2‑normalized representation for each image, takes the absolute difference, and feeds it to a logistic head to predict whether the pair is a rotated match or a mirror. Training uses binary cross‑entropy, AdamW, cosine annealing, and early stopping; performance is reported as mean accuracy ± standard error over three repetitions of stratified 10‑fold cross‑validation.

Results reveal several consistent patterns. First, self‑supervised models (CLIP, DINOv2, DINOv3) outperform the supervised ViT on all tasks, indicating that categorical supervision tends to discard geometric cues in favor of class invariance. Second, the highest accuracies are typically achieved in intermediate layers rather than the final semantic layer, suggesting that pose information is encoded early to mid‑network and then abstracted away. For example, ViT‑Large peaks around layers 4‑6 on the easy “±0°” block task, while DINOv3‑Huge retains discriminative signal only in layers 18‑19 for the hardest “Free” condition. Third, task difficulty scales with rotation magnitude and occlusion: accuracy drops gradually from ±0° to ±10°, ±20°, ±30°, and finally “Free”, mirroring the linear increase in human reaction times observed in classic mental‑rotation experiments. Similarly, photo‑realistic accuracy declines as camera elevation rises from 30° to 90°, reflecting greater viewpoint change and self‑occlusion.

Textual experiments highlight CLIP’s advantage in handling language‑related inputs, owing to its joint image‑text training. Remarkably, DINOv3 also achieves strong performance on text tasks despite lacking any textual supervision, implying that its dense visual features capture enough structural regularities to support OCR‑like discrimination. In contrast, supervised ViT performs poorly across the board, likely because its training objective enforces invariance to pose variations.

The authors discuss the implications of these findings. Although current Vision Transformers are not explicitly designed for full 3‑D rotation invariance, self‑supervised training endows them with a degree of equivariance that can be exploited for mental‑rotation‑type reasoning. However, to reach human‑level performance on unconstrained 3‑D rotations, future architectures may need to (a) preserve pose information deeper into the network, (b) incorporate group‑equivariant or spatial‑transformer modules, and (c) include rotation/mirroring objectives during pre‑training. The paper thus provides both a rigorous benchmark for probing spatial reasoning in vision models and concrete evidence that intermediate representations are the key locus of geometric knowledge in modern large‑scale Transformers.

Comments & Academic Discussion

Loading comments...

Leave a Comment