Deep Residual Echo State Networks: exploring residual orthogonal connections in untrained Recurrent Neural Networks

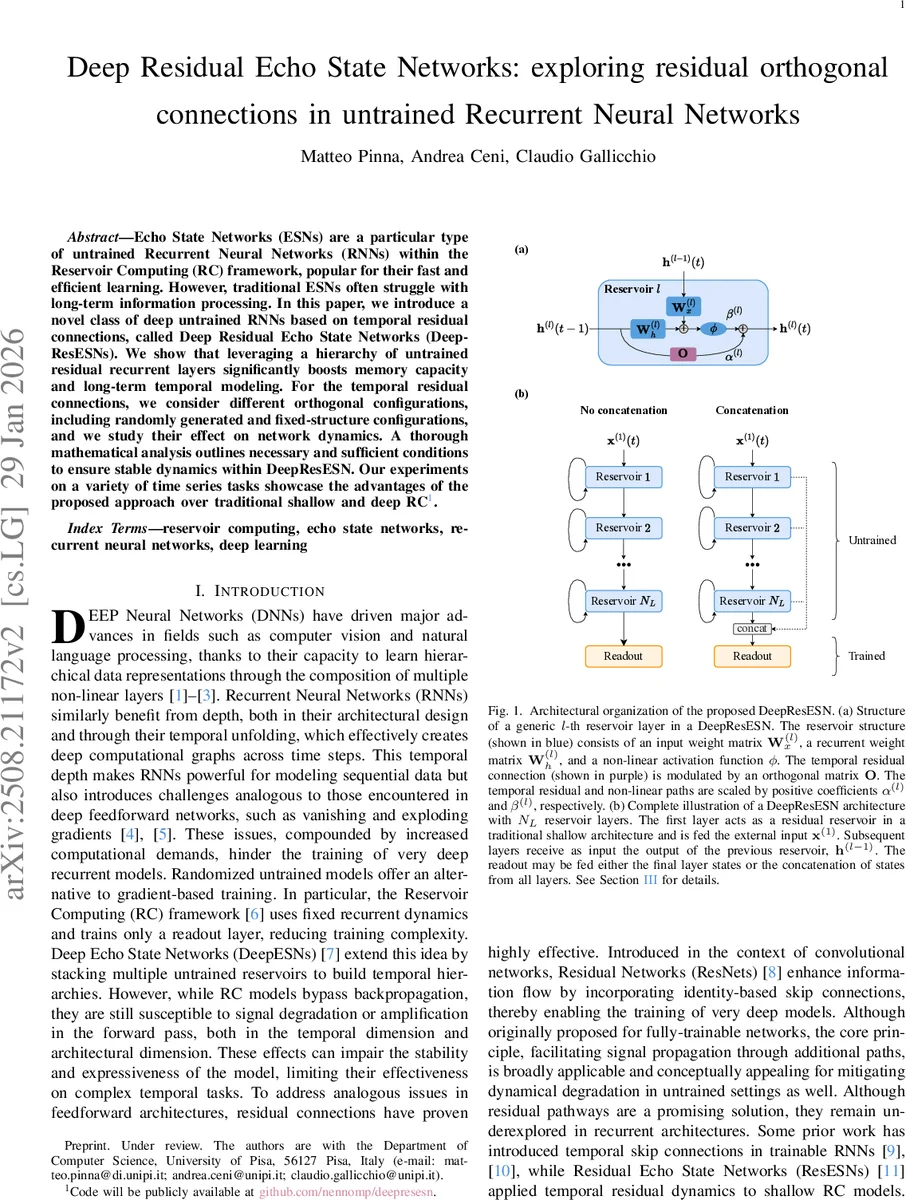

Echo State Networks (ESNs) are a particular type of untrained Recurrent Neural Networks (RNNs) within the Reservoir Computing (RC) framework, popular for their fast and efficient learning. However, traditional ESNs often struggle with long-term information processing. In this paper, we introduce a novel class of deep untrained RNNs based on temporal residual connections, called Deep Residual Echo State Networks (DeepResESNs). We show that leveraging a hierarchy of untrained residual recurrent layers significantly boosts memory capacity and long-term temporal modeling. For the temporal residual connections, we consider different orthogonal configurations, including randomly generated and fixed-structure configurations, and we study their effect on network dynamics. A thorough mathematical analysis outlines necessary and sufficient conditions to ensure stable dynamics within DeepResESN. Our experiments on a variety of time series tasks showcase the advantages of the proposed approach over traditional shallow and deep RC.

💡 Research Summary

The paper addresses a well‑known limitation of conventional Echo State Networks (ESNs): their difficulty in retaining information over long time horizons. While shallow ESNs rely on a single, randomly initialized reservoir whose dynamics are governed by the Echo State Property (ESP), recent extensions such as DeepESNs stack multiple reservoirs to obtain hierarchical representations. However, even deep reservoirs suffer from signal attenuation or explosion across both the temporal axis and the architectural depth, which hampers their ability to model complex, long‑range dependencies.

To overcome these issues, the authors propose Deep Residual Echo State Networks (DeepResESNs), a novel class of deep, untrained recurrent neural networks that incorporate temporal residual (skip) connections into every reservoir layer. Each layer l computes its new state h⁽ˡ⁾(t) as a weighted sum of two parallel paths:

- Residual path – the previous state h⁽ˡ⁾(t‑1) is multiplied by an orthogonal matrix O and scaled by a coefficient α⁽ˡ⁾.

- Non‑linear path – the standard ESN update (input‑to‑reservoir and recurrent weights, tanh non‑linearity) is scaled by β⁽ˡ⁾.

The update rule (Equation 3) reads:

h⁽ˡ⁾(t) = α⁽ˡ⁾ O h⁽ˡ⁾(t‑1) + β⁽ˡ⁾ ϕ( W⁽ˡ⁾_h h⁽ˡ⁾(t‑1) + W⁽ˡ⁾_x x⁽ˡ⁾(t) + b⁽ˡ⁾ ),

where x⁽¹⁾(t) = external input and x⁽ˡ⁾(t) = h⁽ˡ⁻¹⁾(t) for l > 1. The orthogonal matrix O can be instantiated in three ways:

- Random orthogonal (R) – obtained via QR decomposition of a random matrix, providing a stochastic, spectrally balanced transformation.

- Cyclic orthogonal (C) – a deterministic permutation matrix that shifts each component one position forward (with wrap‑around).

- Identity (I) – reduces the model to a conventional leaky ESN, where the residual path is simply an identity mapping.

These three configurations span a spectrum of dynamical behaviours: R tends to suppress low‑frequency components, C preserves the full frequency content across layers, and I acts as a low‑pass filter that increasingly attenuates high frequencies as depth grows. The authors illustrate these effects with a Fourier analysis (Fig. 3) on a synthetic multi‑frequency signal.

A central theoretical contribution is the extension of the ESP to the deep residual setting. By defining a global state transition function F that aggregates the dynamics of all layers, the authors prove that a sufficient condition for contractivity (and thus the ESP) is

‖α⁽ˡ⁾ O‖₂ + β⁽ˡ⁾ ρ(W⁽ˡ⁾_h) < 1 for every layer l,

where ρ(·) denotes the spectral radius. Because orthogonal matrices have unit 2‑norm, the condition simplifies to α⁽ˡ⁾ + β⁽ˡ⁾ ρ(W⁽ˡ⁾_h) < 1. This inequality guides the selection of the scaling coefficients α and β and the reservoir spectral radius ρ, guaranteeing that the network’s internal states converge to a unique trajectory that depends only on the input sequence, irrespective of initial conditions.

Training remains lightweight: all reservoir weights are fixed after random initialization, and only the readout matrix W_out is learned via ridge regression (closed‑form solution). The readout can ingest either the final layer’s state or the concatenation of all layer states, providing flexibility in how hierarchical features are exploited.

Empirical evaluation covers seven benchmark tasks that probe memory, non‑linear dynamics, forecasting, and classification:

- Memory Capacity (MC) – using a 12‑tone sinusoidal mixture, DeepResESNs with R or C matrices achieve MC values close to 0.85 × N_h (with N_h = 100) at depths N_L = 5–8, substantially outperforming shallow ESNs and DeepESNs.

- NARMA10 – a classic non‑linear regression task; DeepResESN(R) reduces the normalized mean‑square error by roughly 20‑30 % compared to a shallow ESN.

- Mackey‑Glass & Sunspot forecasting – long‑term prediction errors drop from 0.12 (shallow) to 0.08 (DeepResESN(C)), demonstrating improved capture of long‑range temporal patterns.

- Time‑series classification (ECG, Speech Commands) – accuracy improves from ~78 % (shallow) to ~85 % (DeepResESN(C)), confirming that hierarchical residual connections yield more discriminative representations.

Across all experiments, DeepResESNs consistently outperform both shallow ESNs (15 %–30 % relative gain) and DeepESNs (8 %–12 % gain) while incurring only a modest increase in computational cost (the additional matrix‑vector multiplication by O per layer).

In conclusion, the paper introduces a principled way to embed residual connections into untrained recurrent reservoirs, showing that orthogonal skip pathways can preserve or reshape spectral information, enhance memory, and stabilize dynamics. Theoretical analysis provides clear design rules for ensuring the ESP, and extensive experiments validate the practical benefits. Future directions suggested include learning the orthogonal transformations, exploring alternative nonlinearities, and implementing the architecture on neuromorphic hardware for real‑time streaming applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment