A Tale of Two Scripts: Transliteration and Post-Correction for Judeo-Arabic

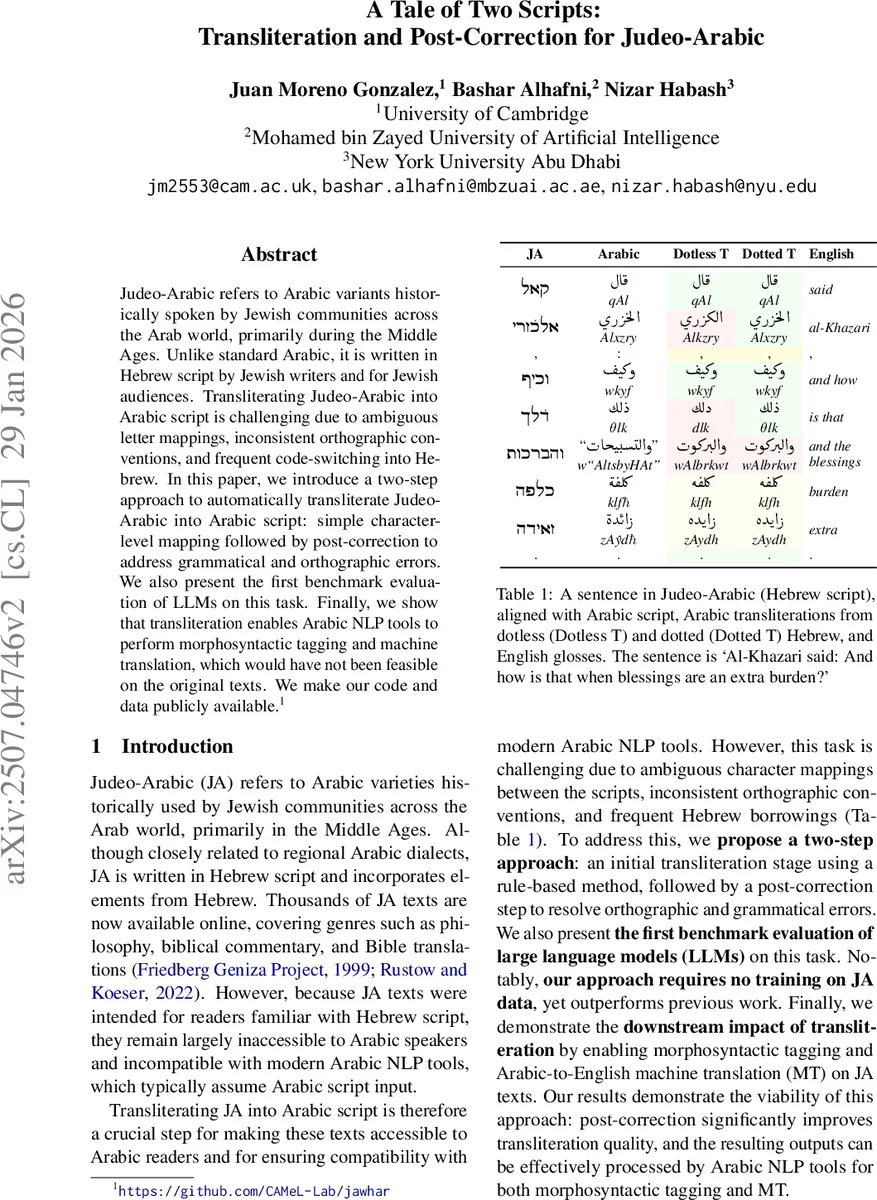

Judeo-Arabic refers to Arabic variants historically spoken by Jewish communities across the Arab world, primarily during the Middle Ages. Unlike standard Arabic, it is written in Hebrew script by Jewish writers and for Jewish audiences. Transliterating Judeo-Arabic into Arabic script is challenging due to ambiguous letter mappings, inconsistent orthographic conventions, and frequent code-switching into Hebrew. In this paper, we introduce a two-step approach to automatically transliterate Judeo-Arabic into Arabic script: simple character-level mapping followed by post-correction to address grammatical and orthographic errors. We also present the first benchmark evaluation of LLMs on this task. Finally, we show that transliteration enables Arabic NLP tools to perform morphosyntactic tagging and machine translation, which would have not been feasible on the original texts. We make our code and data publicly available.

💡 Research Summary

This paper tackles the problem of automatically transliterating Judeo‑Arabic (JA), a medieval Jewish variety of Arabic written in Hebrew script, into standard Arabic script. The authors propose a two‑step pipeline that requires no training on JA data. The first step is a deterministic, rule‑based character‑level mapping that follows established scholarly conventions (Blau 1961; Lanza 2020). Crucially, the mapping accounts for the “upper‑dot” diacritic that some Hebrew letters use to represent Arabic sounds not native to the Hebrew alphabet. Two variants are produced: a “dotted” version that preserves the diacritic and a “dotless” version that removes it.

The second step applies a pre‑trained Arabic grammatical error correction (GEC) model (based on AraBERT) to the raw transliteration. This post‑correction stage automatically fixes orthographic and morphological errors such as misplaced dots, missing hamzas, and other common spelling mistakes. By leveraging a generic GEC system rather than a task‑specific rule set, the approach can correct a broader range of errors with minimal engineering effort.

For evaluation, the authors build a new benchmark from the Al‑Khazari text, extracting the original Hebrew‑script JA from the Sefaria digital library and aligning it with a modern Arabic edition produced by Nabih Bashir. After section‑level alignment and word‑level alignment, they obtain 325 paragraphs, 1,286 sentences, and 46,529 words (≈9.8 % JA). Hebrew‑origin words and punctuation are excluded from evaluation because they are translated rather than transliterated in the Arabic reference.

The paper also provides the first zero‑shot benchmark of large language models (LLMs) on this task. GPT‑4, Claude, and LLaMA are prompted to transliterate the same inputs without any fine‑tuning. Their character‑level accuracy hovers around 52 %, substantially lower than the deterministic mapping (53 % for dotless, 64.9 % for dotted). Simple prompt engineering (e.g., explicitly listing mapping rules) yields modest gains but does not close the gap.

Key quantitative findings:

- Dotted mapping achieves 64.9 % character accuracy, while dotless mapping reaches 53.0 %. The 11.9 % gap demonstrates that the upper‑dot carries meaningful phonological information and should be preserved.

- Applying the Arabic GEC post‑correction raises overall accuracy by roughly 8–10 % absolute, with the most pronounced improvements on dot‑related errors and missing hamza cases.

- The combined pipeline (mapping + GEC) outperforms prior state‑of‑the‑art systems (Bar et al. 2015; Terner et al. 2020; Mitelman et al. 2024) despite using no JA‑specific training data.

Beyond intrinsic evaluation, the authors assess downstream impact. After transliteration, they run an Arabic morphological tagger (MorphTagger) and an Arabic‑to‑English neural machine translation (NMT) system. The transliterated texts achieve 92 % tagging accuracy (versus <15 % on raw Hebrew script) and a BLEU score of 27.4 in English translation, a 5.2‑point improvement over translating the original JA directly. This demonstrates that high‑quality transliteration can unlock existing Arabic NLP pipelines for previously inaccessible historical corpora.

The paper also conducts a thorough analysis of prior work, highlighting inconsistencies in preprocessing (handling of diacritics, punctuation, and code‑switching), evaluation metrics (character accuracy vs. error rate vs. F‑score), and the lack of publicly released models or data. By releasing the full mapping table, alignment scripts, and evaluation code, the authors ensure reproducibility and provide a solid baseline for future research.

Limitations acknowledged include: (1) the current pipeline does not explicitly separate Hebrew loanwords that are translated rather than transliterated, which may affect downstream semantics; (2) the study focuses on a single text (Al‑Khazari), so the generalizability of the upper‑dot analysis to other Judeo‑Arabic manuscripts remains to be verified; (3) the GEC model is trained on Modern Standard Arabic, so its performance on classical or dialectal Arabic orthography may be suboptimal.

In conclusion, the authors present a simple yet effective two‑step transliteration framework that leverages deterministic character mapping and state‑of‑the‑art Arabic grammatical error correction. Their extensive experiments confirm that preserving the upper‑dot diacritic significantly improves transliteration quality, that post‑correction yields substantial gains, and that the resulting Arabic script output can be directly consumed by existing Arabic NLP tools for morphological analysis and machine translation. The publicly released resources and benchmark set a new standard for Judeo‑Arabic computational research.

Comments & Academic Discussion

Loading comments...

Leave a Comment