Beyond Retraining: Training-Free Unknown Class Filtering for Source-Free Open Set Domain Adaptation of Vision-Language Models

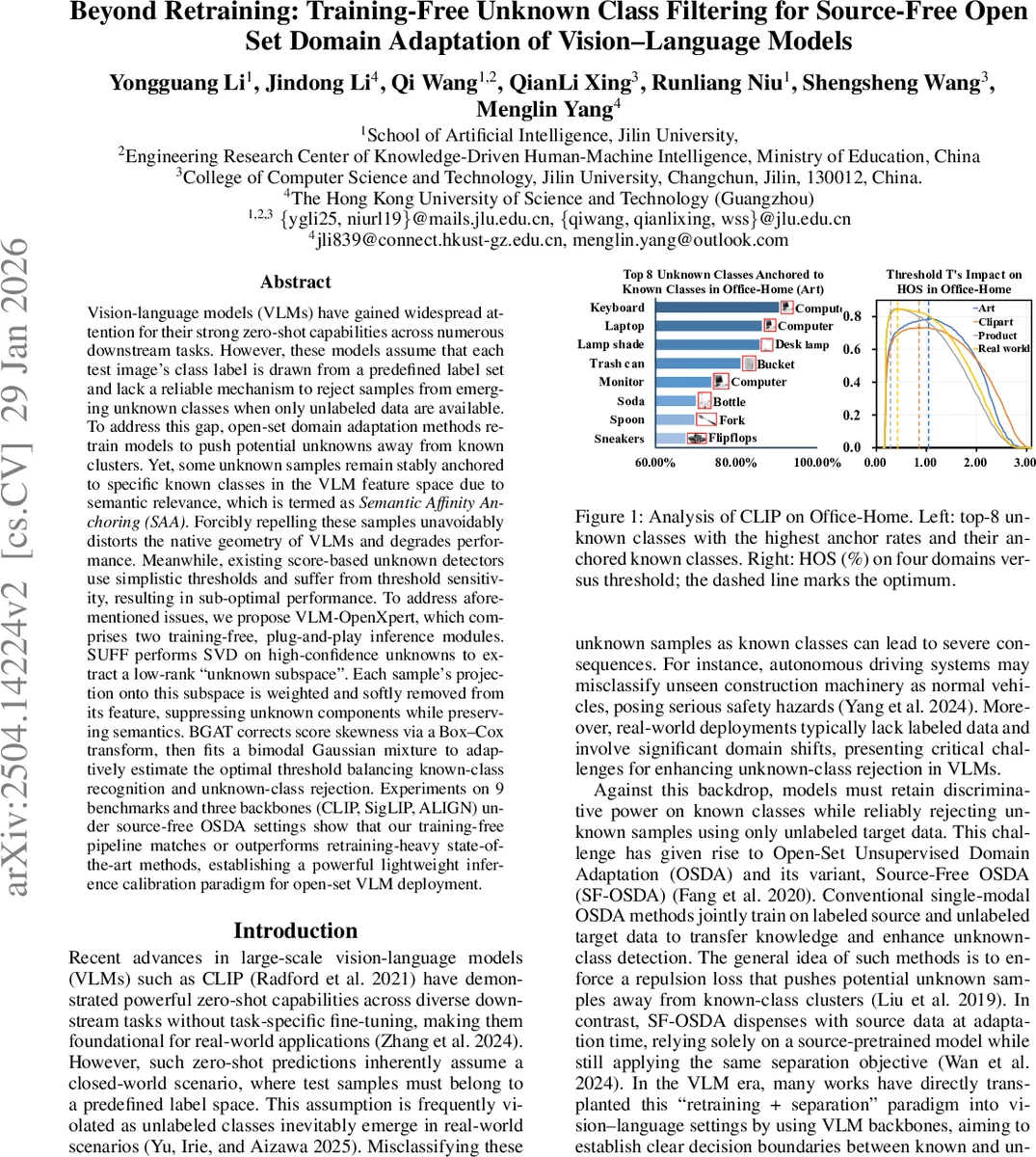

Vision-language models (VLMs) have gained widespread attention for their strong zero-shot capabilities across numerous downstream tasks. However, these models assume that each test image’s class label is drawn from a predefined label set and lack a reliable mechanism to reject samples from emerging unknown classes when only unlabeled data are available. To address this gap, open-set domain adaptation methods retrain models to push potential unknowns away from known clusters. Yet, some unknown samples remain stably anchored to specific known classes in the VLM feature space due to semantic relevance, which is termed as Semantic Affinity Anchoring (SAA). Forcibly repelling these samples unavoidably distorts the native geometry of VLMs and degrades performance. Meanwhile, existing score-based unknown detectors use simplistic thresholds and suffer from threshold sensitivity, resulting in sub-optimal performance. To address aforementioned issues, we propose VLM-OpenXpert, which comprises two training-free, plug-and-play inference modules. SUFF performs SVD on high-confidence unknowns to extract a low-rank “unknown subspace”. Each sample’s projection onto this subspace is weighted and softly removed from its feature, suppressing unknown components while preserving semantics. BGAT corrects score skewness via a Box-Cox transform, then fits a bimodal Gaussian mixture to adaptively estimate the optimal threshold balancing known-class recognition and unknown-class rejection. Experiments on 9 benchmarks and three backbones (CLIP, SigLIP, ALIGN) under source-free OSDA settings show that our training-free pipeline matches or outperforms retraining-heavy state-of-the-art methods, establishing a powerful lightweight inference calibration paradigm for open-set VLM deployment.

💡 Research Summary

The paper tackles a critical limitation of large‑scale vision‑language models (VLMs) such as CLIP, SigLIP, and ALIGN: while they excel at zero‑shot classification, they assume a closed‑world label space and cannot reliably reject samples from unknown classes when only unlabeled target data are available. Existing open‑set unsupervised domain adaptation (OSDA) methods address this by retraining the model with a repulsion loss that pushes potential unknown samples away from known‑class clusters. However, the authors identify a pervasive phenomenon they call Semantic Affinity Anchoring (SAA), where many unknown classes are semantically close to certain known classes and become “anchored” to those clusters in the VLM feature space. Forcibly repelling these anchored samples distorts the intrinsic geometry learned during massive image‑text pre‑training, leading to a drop in zero‑shot performance.

In addition, current unknown‑detector scores rely on fixed thresholds, simple means, or heuristic clustering, which are highly sensitive to dataset‑specific score distributions and often fail to capture the true separation between known and unknown samples.

To overcome both issues without any additional training or labeled data, the authors propose VLM‑OpenXpert, a fully training‑free, plug‑and‑play inference framework composed of two modules:

-

SUFF (SVD‑Based Unknown‑Class Feature Filtering)

- First selects high‑confidence unknown samples using an uncertainty criterion (entropy of the softmax over known‑class logits).

- Performs singular value decomposition (SVD) on the centralized feature matrix of these samples, extracting a low‑rank “unknown subspace” where unknown samples have higher projection energy than known samples.

- For every image, its feature is softly attenuated according to its projection onto this subspace, effectively removing the unknown component while preserving the overall semantic structure of the VLM. This directional filtering mitigates SAA without globally repelling samples.

-

BGAT (Box‑Cox GMM‑Based Adaptive Thresholding)

- Uses entropy scores (or any other confidence measure) and first adds a tiny ε to ensure positivity.

- Applies a Box‑Cox transformation, whose parameter λ is estimated by maximum‑likelihood, to correct skewness and stabilize scale.

- Fits a two‑component Gaussian mixture model (GMM) to the transformed scores via EM. Because variance estimates can be noisy, the method takes the midpoint of the two component means (μ_known, μ_unknown) as a robust estimate of the optimal decision threshold.

- The threshold is then inverse‑Box‑Cox transformed back to the original score space.

The overall pipeline works as follows: (i) run the frozen VLM on the unlabeled target set to obtain image and text embeddings; (ii) compute entropy scores and apply BGAT to obtain an initial threshold; (iii) use this threshold to collect high‑confidence known and unknown feature sets; (iv) run SUFF to filter the features; (v) recompute scores on the filtered features; (vi) apply BGAT again to obtain the final decision threshold and classify each sample as known (assign the arg‑max class) or unknown (C + 1).

Experimental validation spans nine benchmark datasets (Office‑Home, DomainNet, VisDA‑2017, etc.) and three VLM backbones. Under the source‑free OSDA setting, VLM‑OpenXpert matches or surpasses state‑of‑the‑art retraining‑heavy methods such as OSBP, DANCE, and recent CLIP‑based OSDA approaches. Notably, on domains with high SAA rates (e.g., Office‑Home Art), SUFF dramatically reduces the “anchor rate” and improves HOS (harmonic mean of known‑class accuracy and unknown‑class detection) by several percentage points. Computationally, the added SVD and Box‑Cox steps incur only a few milliseconds per batch, making the approach suitable for real‑time deployment.

Key contributions are: (1) identification and quantitative analysis of the Semantic Affinity Anchoring phenomenon; (2) a training‑free, geometry‑preserving feature filtering method (SUFF) that directly addresses SAA; (3) a statistically grounded adaptive thresholding technique (BGAT) that eliminates brittle heuristic thresholds; and (4) extensive empirical evidence that a lightweight inference‑time calibration can replace costly retraining for open‑set VLM deployment.

In summary, VLM‑OpenXpert provides a practical, privacy‑preserving solution for deploying large vision‑language models in open‑world scenarios, delivering robust unknown‑class rejection without any additional training or labeled data.

Comments & Academic Discussion

Loading comments...

Leave a Comment