Compound-QA: A Benchmark for Evaluating LLMs on Compound Questions

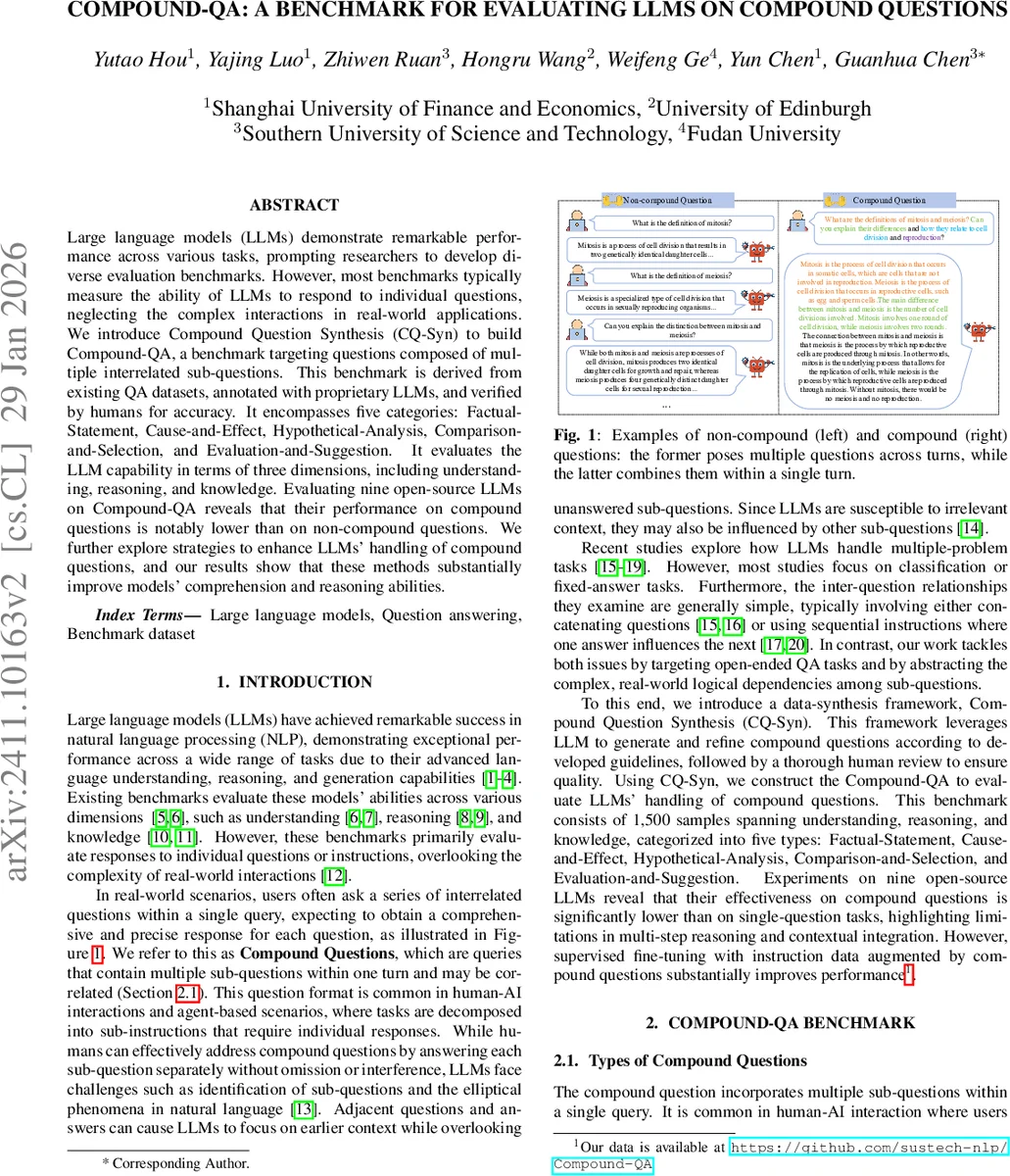

Large language models (LLMs) demonstrate remarkable performance across various tasks, prompting researchers to develop diverse evaluation benchmarks. However, most benchmarks typically measure the ability of LLMs to respond to individual questions, neglecting the complex interactions in real-world applications. We introduce Compound Question Synthesis (CQ-Syn) to build Compound-QA, a benchmark targeting questions composed of multiple interrelated sub-questions. This benchmark is derived from existing QA datasets, annotated with proprietary LLMs, and verified by humans for accuracy. It encompasses five categories: Factual-Statement, Cause-and-Effect, Hypothetical-Analysis, Comparison-and-Selection, and Evaluation-and-Suggestion. It evaluates the LLM capability in terms of three dimensions, including understanding, reasoning, and knowledge. Evaluating nine open-source LLMs on Compound-QA reveals that their performance on compound questions is notably lower than on non-compound questions. We further explore strategies to enhance LLMs’ handling of compound questions, and our results show that these methods substantially improve models’ comprehension and reasoning abilities.

💡 Research Summary

The paper addresses a critical gap in the evaluation of large language models (LLMs): existing benchmarks largely focus on single‑question answering, while real‑world interactions often involve “compound questions” that embed multiple interrelated sub‑questions within a single user turn. To systematically study this phenomenon, the authors propose a new benchmark called Compound‑QA and a data‑synthesis pipeline named Compound Question Synthesis (CQ‑Syn).

Definition and Taxonomy

A compound question is defined as a single utterance that contains two or more sub‑questions whose answers are required to be provided together. The authors identify five logical relationship categories that capture the main ways sub‑questions can interact:

- Factual‑Statement (FS) – a set of largely independent factual queries.

- Cause‑and‑Effect (CE) – first asks for causes, then for the resulting effects.

- Hypothetical‑Analysis (HA) – presents a hypothetical scenario and asks for analysis of possible outcomes.

- Comparison‑and‑Selection (CS) – requires a comparative analysis of multiple items and a final selection based on criteria.

- Evaluation‑and‑Suggestion (ES) – asks for a critical evaluation followed by actionable improvement suggestions.

These categories are motivated by real‑world user intents and span a spectrum from simple information retrieval (FS) to multi‑step reasoning and synthesis (ES).

CQ‑Syn Pipeline

The data generation process consists of three stages:

- Question Design – For each type, a detailed prompt is crafted that includes task description, role description, generation guidelines, and manually curated examples. The prompt also supplies the original single‑question context, encouraging the LLM to produce a compound version that mirrors the original distribution.

- Question Verification – Generated questions undergo keyword‑based filtering (type‑specific handcrafted rules) and an LLM‑based sanity check to discard malformed or off‑topic items.

- Reference Generation – A proprietary LLM (GPT‑4o) produces reference answers for each verified compound question. Human reviewers (three master’s‑level annotators) then validate the QA pairs; only items unanimously judged correct and complete are retained.

Using CQ‑Syn, the authors construct 1,500 compound QA instances (100 per type per ability subset) covering three ability dimensions: Understanding, Reasoning, and Knowledge. The underlying source datasets are Adversarial QA, AGI‑Eval, and PubMedQA.

Experimental Setup

Nine open‑source LLMs of varying scale are evaluated: DeepSeek‑7B‑chat, Mistral‑7B‑Instruct‑v0.3, LLaMA‑8B‑Instruct, Gemma‑2‑9B‑it, GLM‑4‑9B‑chat, InternLM‑7B‑chat, Qwen2.5‑7B‑Instruct, Gemma‑3‑27B‑it, and Qwen‑3‑32B.

The authors propose a multi‑dimensional evaluation framework:

- Comprehensiveness – does the model address every sub‑question without omission?

- Correctness – factual and logical accuracy of each answer component.

- Diversity – variety of solution strategies across sub‑questions.

Automatic scoring is performed with GPT‑4o‑mini, which compares model outputs to reference answers and computes a win‑rate (percentage of instances where the model is judged equal or superior). To reduce positional bias, the order of model and reference answers is swapped and averaged. Human evaluation on a subset shows 84 % agreement with the automatic scores, confirming reliability.

Key Findings

- Overall Performance – The largest model, Qwen‑3‑32B, dominates across all three dimensions, achieving win‑rates above 80 % for most types. Gemma‑3‑27B is competitive, especially in Reasoning, but lags behind Qwen‑3 in Knowledge and Understanding. Smaller models (e.g., DeepSeek, Mistral) show markedly lower scores, especially on complex types.

- Type‑Specific Trends – All models perform best on FS questions, reflecting their strength in factual retrieval. Performance drops sharply for CE, HA, CS, and especially ES, where multi‑step reasoning and synthesis are required. The gap narrows for the largest models, indicating that scale brings emergent reasoning abilities.

- Compound vs. Non‑Compound – When the same underlying questions are presented as a multi‑turn dialogue (i.e., decomposed into separate single‑question turns), both LLaMA and InternLM achieve substantially higher win‑rates. This demonstrates that the compound format itself introduces a non‑trivial difficulty, likely due to the need to maintain and integrate context across sub‑questions.

- Positional Effects – Experiments reordering sub‑questions in FS items reveal a “first‑and‑last advantage”: sub‑questions placed at the beginning or end of the sequence are answered more accurately than those in the middle. This aligns with known Transformer biases toward the ends of the input sequence.

- Fine‑Tuning with Compound Data – Adding compound‑question instruction data to the fine‑tuning corpus improves all models by roughly 10–15 percentage points across the three dimensions, confirming that exposure to this format during training is beneficial.

Implications and Future Directions

The study establishes Compound‑QA as the first publicly available benchmark that explicitly evaluates LLMs on multi‑question, interdependent queries. The findings highlight a clear weakness of current open‑source LLMs: while they excel at isolated factual recall, they struggle with maintaining logical coherence across multiple sub‑questions, handling ellipsis, and generating comprehensive, diverse solutions.

Future work could explore:

- Scaling up model size and training data to further close the gap on complex types.

- Developing dedicated question decomposition or chain‑of‑thought prompting strategies that explicitly break down compound queries before answering.

- Integrating external tools (retrievers, calculators, knowledge bases) to support multi‑step reasoning.

- Extending the taxonomy to cover more nuanced relational patterns (temporal ordering, conditional dependencies).

- Investigating multimodal extensions where compound queries involve images, tables, or code snippets.

Conclusion

Compound‑QA, built via the CQ‑Syn pipeline, provides a rigorous, human‑validated dataset of 1,500 compound questions spanning five logical types and three ability dimensions. Empirical evaluation of nine open‑source LLMs reveals a substantial performance gap between compound and single‑question tasks, with larger models and targeted fine‑tuning offering the most improvement. This benchmark opens a new avenue for assessing and advancing LLMs toward more realistic, multi‑turn, multi‑question human‑AI interactions.

Comments & Academic Discussion

Loading comments...

Leave a Comment