A Foundation Model for Virtual Sensors

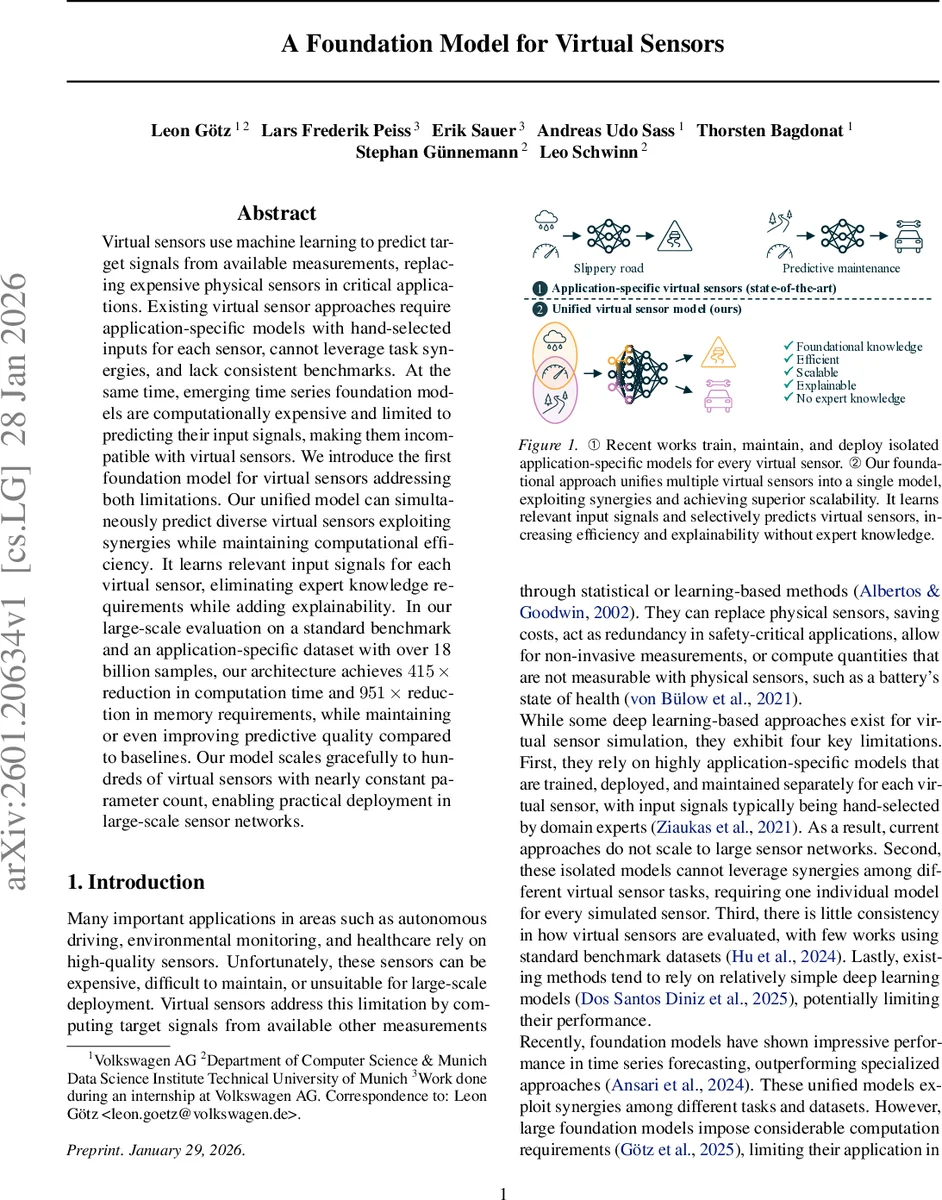

Virtual sensors use machine learning to predict target signals from available measurements, replacing expensive physical sensors in critical applications. Existing virtual sensor approaches require application-specific models with hand-selected inputs for each sensor, cannot leverage task synergies, and lack consistent benchmarks. At the same time, emerging time series foundation models are computationally expensive and limited to predicting their input signals, making them incompatible with virtual sensors. We introduce the first foundation model for virtual sensors addressing both limitations. Our unified model can simultaneously predict diverse virtual sensors exploiting synergies while maintaining computational efficiency. It learns relevant input signals for each virtual sensor, eliminating expert knowledge requirements while adding explainability. In our large-scale evaluation on a standard benchmark and an application-specific dataset with over 18 billion samples, our architecture achieves 415x reduction in computation time and 951x reduction in memory requirements, while maintaining or even improving predictive quality compared to baselines. Our model scales gracefully to hundreds of virtual sensors with nearly constant parameter count, enabling practical deployment in large-scale sensor networks.

💡 Research Summary

The paper “A Foundation Model for Virtual Sensors” tackles two longstanding challenges in the virtual‑sensor domain: (1) the need for a separate, expert‑designed model for each virtual sensor, and (2) the inability of existing time‑series foundation models to predict new signals beyond the inputs they are trained on. Traditional virtual‑sensor approaches rely on hand‑crafted input selections and isolated models, which do not scale to large sensor networks and cannot exploit cross‑sensor synergies. Conversely, recent large‑scale foundation models achieve impressive forecasting performance but are computationally heavy and limited to forecasting the very series they ingest, making them unsuitable for virtual‑sensor tasks.

The authors propose a unified foundation model that can simultaneously learn and predict hundreds of virtual sensors while remaining computationally efficient. The core architecture is a causal decoder‑only transformer. Input time‑series are first normalized, split into non‑overlapping patches of length p, and each patch is embedded via a small MLP into a token of dimension d. Tokens are concatenated in temporal order, and both sinusoidal time‑position embeddings and learned variate embeddings are added. Crucially, for each virtual sensor a “prototype token” initialized to zero is inserted into the token sequence together with a sensor‑specific variate embedding. The model then autoregressively predicts the next p steps of that virtual sensor, using its own previous predictions as inputs while always feeding ground‑truth measurements for the physical inputs. Multiple prototype tokens can be added to predict several virtual sensors in parallel, supporting heterogeneous update rates.

A novel contribution is the set of learnable signal‑relevance vectors R′. For each virtual sensor j, a vector r′_j ∈ ℝ^{M+N} encodes the importance of every available signal (both raw inputs Z and other virtual sensors Z′). The outer product r′_j r′_j^T produces a (M+N)×(M+N) cross‑relevance matrix that is duplicated across the temporal dimension and added as a static bias B to the attention scores in every transformer layer:

Attention(Q,K,V) = softmax( (QK^T / √d_k) + B ) V .

During early training the relevance vectors are initialized to ones to preserve gradient flow; later a threshold r_thres is applied, setting low‑importance entries to –∞, which effectively prunes those signals from the attention computation. This structured sparsification yields dramatic reductions in both memory footprint and compute cost because entire rows and columns of the attention matrix become zero, a pattern that modern hardware can exploit.

The model is evaluated on two fronts: (i) a public multivariate time‑series benchmark covering diverse domains, and (ii) a proprietary automotive dataset collected by Volkswagen, comprising 17,500 km of driving and over 18 billion samples. Training required roughly 43,500 GPU‑hours on NVIDIA H100 accelerators (≈ 5 years of single‑GPU compute). Compared with baseline approaches—individual LSTM/GRU models per sensor and large generic time‑series foundation models—the proposed method achieves up to a 415× speed‑up in inference and a 951× reduction in memory usage while maintaining or improving predictive quality (MAE, RMSE, MAPE). Notably, performance gains are most pronounced for sensors with complex, nonlinear dependencies (e.g., battery state‑of‑health, side‑slip angle). The parameter count grows only marginally with the number of virtual sensors, demonstrating graceful scalability.

The authors discuss several practical implications. First, the automatic relevance learning eliminates the need for domain experts to hand‑pick input signals, simplifying deployment in large‑scale networks such as smart factories, autonomous vehicle fleets, or environmental monitoring grids. Second, the prototype‑token mechanism allows selective, on‑demand prediction of a subset of sensors at varying frequencies, aligning with real‑time control requirements. Third, the structured sparsity introduced by relevance pruning makes the model amenable to edge‑device deployment, opening possibilities for on‑board fault detection and predictive maintenance.

Limitations are acknowledged: the current variate embedding dimension is fixed, which may restrict integration of high‑dimensional modalities like images or LiDAR point clouds; the choice of relevance‑threshold r_thres remains a hyper‑parameter that trades off accuracy against efficiency; and hardware‑specific acceleration (e.g., FPGA, ASIC) for the sparse attention pattern is left for future work.

In summary, this paper delivers the first foundation model tailored for virtual‑sensor applications. By unifying multiple sensor prediction tasks, learning input relevance end‑to‑end, and introducing a sparsity‑aware transformer architecture, it achieves unprecedented scalability and efficiency. The work paves the way for cost‑effective, high‑performance sensor virtualization across a broad spectrum of industries.

Comments & Academic Discussion

Loading comments...

Leave a Comment