IoT Device Identification with Machine Learning: Common Pitfalls and Best Practices

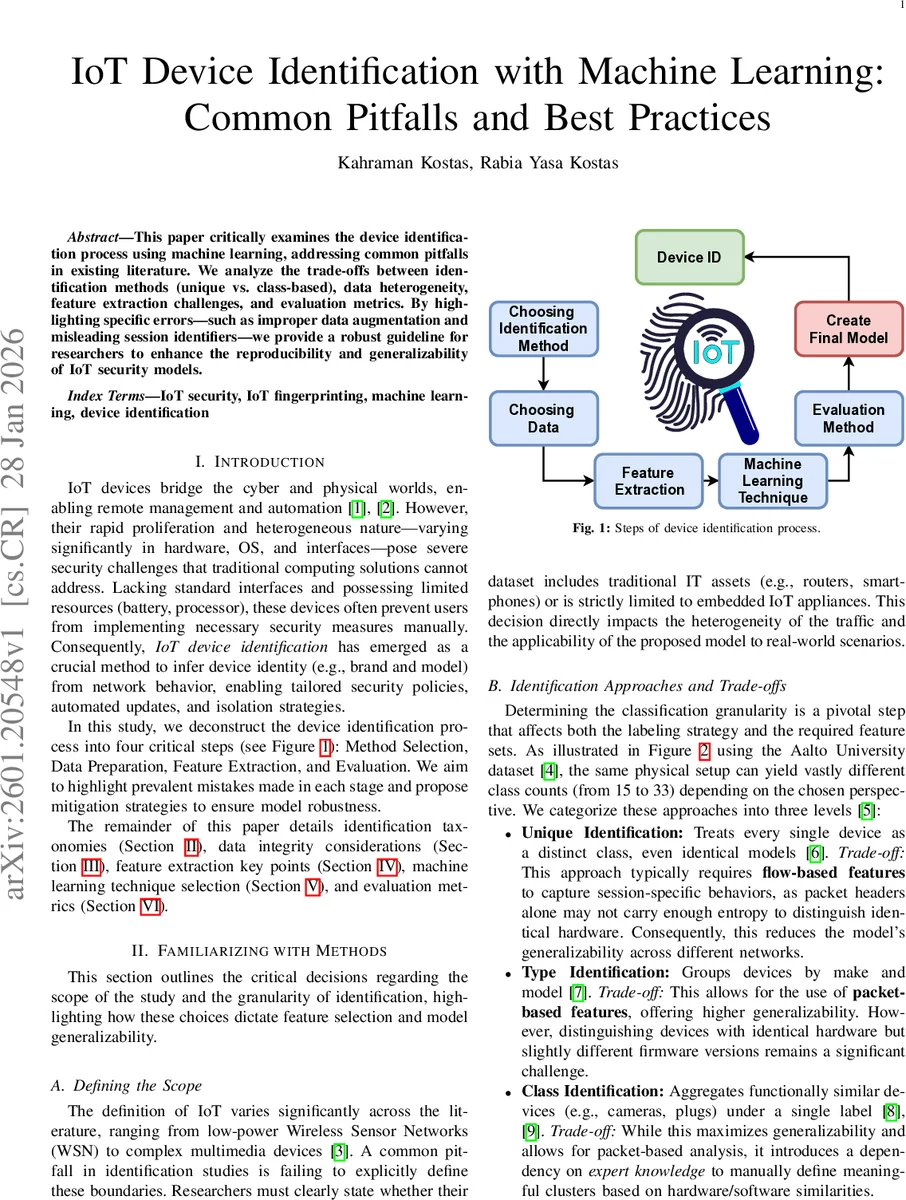

This paper critically examines the device identification process using machine learning, addressing common pitfalls in existing literature. We analyze the trade-offs between identification methods (unique vs. class based), data heterogeneity, feature extraction challenges, and evaluation metrics. By highlighting specific errors, such as improper data augmentation and misleading session identifiers, we provide a robust guideline for researchers to enhance the reproducibility and generalizability of IoT security models.

💡 Research Summary

The paper presents a systematic critique of the entire machine‑learning pipeline used for IoT device identification, focusing on recurring methodological mistakes and offering concrete best‑practice recommendations. It begins by motivating the problem: the explosive growth of heterogeneous IoT devices creates a large attack surface, and identifying devices from their network behavior is essential for enforcing fine‑grained security policies, automated updates, and isolation. The authors decompose the identification workflow into five stages—method selection, data preparation, feature extraction, model selection, and evaluation—and examine each stage in depth.

In the method‑selection stage, three granularity levels are defined: Unique (each physical device is a separate class), Type (devices are grouped by make and model), and Class (devices are grouped by functional category such as cameras or smart plugs). The paper explains the trade‑offs: Unique identification requires flow‑level features (inter‑packet timing, packet length distributions) because static packet headers lack enough entropy, but such models are fragile across networks. Type identification can rely on packet‑header features, offering better generalization, yet struggles with firmware‑level variations. Class identification maximizes generalizability and permits packet‑based analysis, but demands expert knowledge to define meaningful clusters.

The data‑preparation section surveys three common sources: simulated traffic, controlled test‑beds, and public datasets (e.g., UNSW IoT traces, Aalto captures). Simulations are cheap but fail to capture real‑world noise; test‑beds provide realistic data but raise privacy concerns and require careful anonymization. Public datasets enable reproducibility but often contain only benign traffic, limiting insight into attack scenarios. A critical pitfall highlighted is the “Transfer Problem,” where devices that communicate via non‑IP protocols (e.g., ZigBee) appear under the MAC address of a gateway, causing distinct devices to be mislabeled as one. The authors stress that ground‑truth labels must be derived from logical device identities rather than raw MAC/IP fields. They also discuss extreme class imbalance (e.g., cameras generating gigabytes of packets while sensors emit only a few bytes) and warn against data leakage caused by augmenting the entire dataset before splitting into training and test sets. Proper practice is to split first, then augment only the training portion, keeping the test set pristine.

Feature extraction is treated as the bridge between raw PCAP files and the learning algorithm. The authors compare Python libraries (dpkt, Scapy) with command‑line tools like tshark, recommending the latter for large‑scale preprocessing due to speed. The central recommendation is the removal of any identifier that could enable “shortcut learning.” Explicit identifiers (MAC, IP), session identifiers (source ports, TCP sequence numbers, IP IDs), and implicit identifiers (header checksums, absolute timestamps) must be stripped or masked. When raw packet bytes are fed to deep models, the same masking step must be applied to Ethernet/IP headers to prevent the network from learning static addresses.

Model selection is discussed with scalability and interpretability in mind. Because device identification is a multi‑class problem, a monolithic model scales poorly when new devices appear. The authors advocate a One‑vs‑Rest (OvR) architecture, where each device gets its own binary classifier, allowing incremental addition without retraining the whole system. They argue that for tabular flow features, classical algorithms (decision trees, random forests, gradient boosting) often outperform deep neural networks in both accuracy and training speed. Real‑time constraints are emphasized: k‑NN and kernel‑SVM, while accurate, have inference costs that grow with dataset size and are unsuitable for micro‑second traffic processing. Transparent models such as decision trees or logistic regression are preferred because they provide explanations useful for security audits.

The evaluation section critiques the over‑reliance on overall accuracy, especially in imbalanced IoT datasets where a majority‑class‑only predictor can achieve high accuracy while completely missing minority devices. The paper recommends per‑class recall, precision, and especially macro‑averaged F1‑score as primary metrics. Macro averaging treats each class equally, exposing poor performance on low‑traffic devices, whereas micro averaging re‑introduces the bias of accuracy. Visualizing confusion matrices is urged to diagnose systematic misclassifications (e.g., devices from the same manufacturer being confused due to shared firmware).

In the conclusion, the authors synthesize the recommendations: align identification granularity with feature type, ensure ethical and accurate labeling, rigorously prune static identifiers, adopt scalable OvR or modular classifiers, prioritize interpretable models for operational use, and evaluate with metrics that reflect class imbalance. By following this checklist, researchers can produce reproducible, generalizable, and practically deployable IoT security models that are robust to the heterogeneity and scale of real‑world deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment