Advancing Open-source World Models

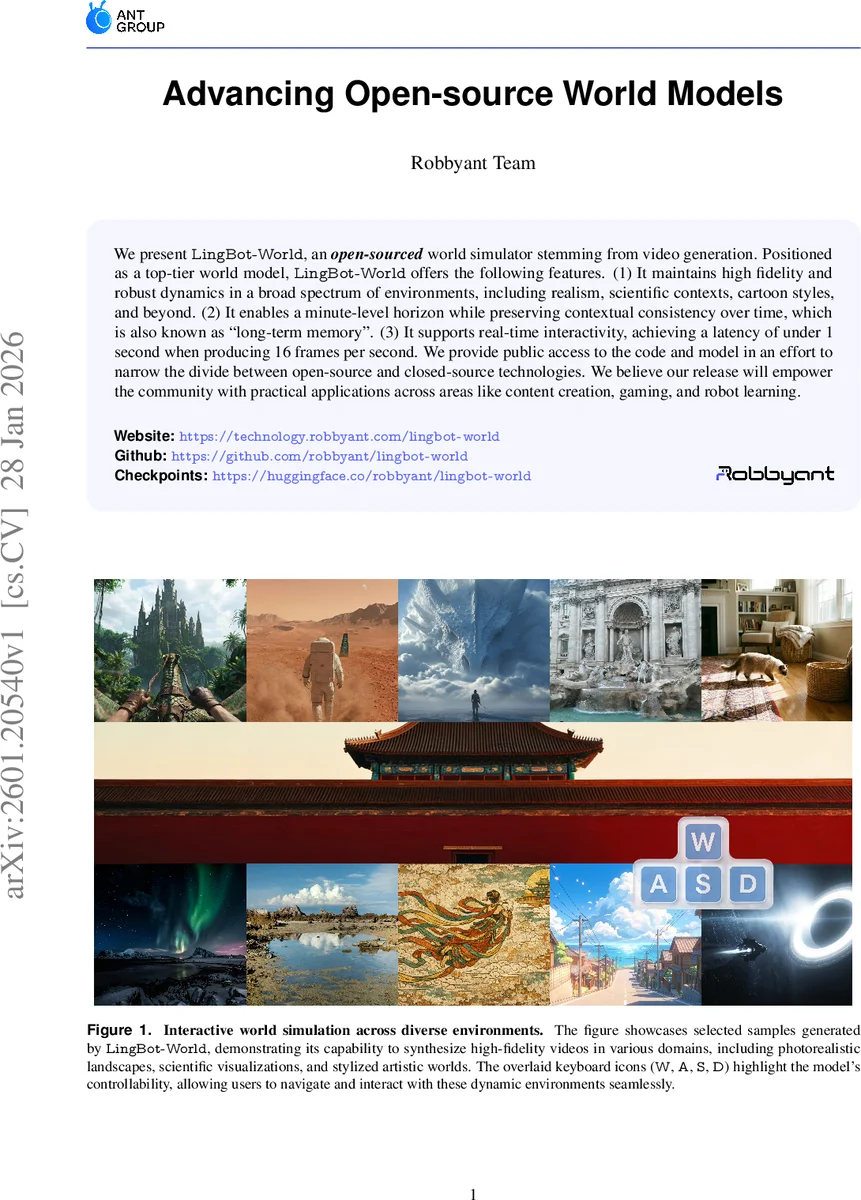

We present LingBot-World, an open-source world simulator built on video generation. Positioned as a top-tier world model, LingBot-World offers the following features. (1) It maintains high fidelity and robust dynamics across a broad spectrum of environments, including realistic scenes, scientific contexts, and cartoon styles. (2) It supports minute-level generation horizons while preserving contextual consistency over time, a property also known as “long-term memory”. (3) It supports real-time interactivity, achieving a latency of under 1 second when producing 16 frames per second. We release the code and model publicly in an effort to narrow the divide between open-source and closed-source technologies. We believe our release will empower the community with practical applications in areas such as content creation, gaming, and robot learning.

💡 Research Summary

LingBot‑World is presented as an open‑source, high‑fidelity world simulator that builds on recent advances in video generation. The authors identify three major shortcomings of current text‑to‑video models: (1) they generate short, visually impressive clips but lack a grounded understanding of physics, causality, and object permanence; (2) they cannot maintain narrative or structural consistency over long horizons; and (3) real‑time interactive control is infeasible due to the heavy computational cost of diffusion sampling. To address these gaps, LingBot‑World introduces a three‑pillar architecture.

1. Scalable Data Engine with Hierarchical Semantics. The system aggregates three data sources—real‑world footage, game engine recordings, and synthetic Unreal Engine renders. Real videos are enriched with pseudo‑camera intrinsics/extrinsics using state‑of‑the‑art pose estimators; game recordings provide synchronized RGB frames, user control signals (W, A, S, D), and precise camera parameters; synthetic pipelines generate collision‑free, randomized camera trajectories with perfect ground‑truth poses. After acquisition, a profiling stage filters low‑resolution or short clips, extracts semantic attributes (visual quality, motion magnitude, scene perspective) via a vision‑language model, and annotates missing geometry with MegaSAM. This multi‑modal pipeline yields a clean, richly annotated corpus ready for world‑model training.
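The profiling filter described above can be illustrated with a minimal sketch. The `ClipMeta` fields, the `keep_clip` helper, and both thresholds (480 px short side, 5 seconds) are hypothetical values chosen for illustration; the summary does not state the criteria actually used.

```python
from dataclasses import dataclass


@dataclass
class ClipMeta:
    """Metadata for one candidate clip (all fields are hypothetical)."""
    path: str
    width: int
    height: int
    num_frames: int
    fps: float

    @property
    def duration_s(self) -> float:
        # Clip length in seconds, derived from frame count and frame rate.
        return self.num_frames / self.fps


def keep_clip(clip: ClipMeta,
              min_short_side: int = 480,      # assumed threshold, not from the paper
              min_duration_s: float = 5.0) -> bool:  # assumed threshold
    """Return True if the clip is both large enough and long enough."""
    large_enough = min(clip.width, clip.height) >= min_short_side
    long_enough = clip.duration_s >= min_duration_s
    return large_enough and long_enough


clips = [
    ClipMeta("a.mp4", 1280, 720, 480, 24.0),   # 20 s at 720p: kept
    ClipMeta("b.mp4", 320, 240, 480, 24.0),    # short side 240 px: dropped
    ClipMeta("c.mp4", 1920, 1080, 48, 24.0),   # 2 s long: dropped
]
kept = [c.path for c in clips if keep_clip(c)]
print(kept)
```

In a fuller pipeline, a cheap metadata filter like this would plausibly run before the more expensive vision-language and geometry annotation steps, so that those models only process clips that survive.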

2. Multi-Stage Evolutionary Training Pipeline. Training proceeds in three phases. Phase I (pre-training) builds a general video prior on the filtered corpus, focusing on texture, lighting, and high-resolution detail. Phase II (middle-training) introduces a Mixture-of-Experts (MoE) backbone and a “long-term memory” loss that forces the model to retain state across extended sequences, so that it learns action-conditioned dynamics and preserves environmental consistency over minute-scale horizons. Phase III (post-training) converts the bidirectional diffusion model into an efficient autoregressive sampler using causal attention adaptation and few-step distillation. The resulting system generates video at 16 fps and responds to user inputs with under one second of delay, enabling real-time navigation via keyboard controls.
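To make the move from bidirectional to causal attention in Phase III more concrete, the sketch below builds a frame-level (block) causal mask, a pattern commonly used in autoregressive video models. It assumes that every token may attend to all tokens in its own frame and in earlier frames, but never to later frames. The summary does not specify the exact masking scheme, so the function name `block_causal_mask` and the frame-sized blocks are illustrative assumptions.

```python
from typing import List


def block_causal_mask(num_frames: int, tokens_per_frame: int) -> List[List[bool]]:
    """Build a boolean attention mask where mask[q][k] is True when query
    token q may attend to key token k.

    Assumption: attention is bidirectional within a frame and causal
    across frames (a token never sees a later frame).
    """
    n = num_frames * tokens_per_frame
    return [
        [(k // tokens_per_frame) <= (q // tokens_per_frame) for k in range(n)]
        for q in range(n)
    ]


# 3 frames with 2 tokens each: rows are queries, columns are keys.
mask = block_causal_mask(num_frames=3, tokens_per_frame=2)
for row in mask:
    print("".join("1" if allowed else "0" for allowed in row))
```

A bidirectional model corresponds to an all-ones mask. Because each frame here depends only on the past, previously generated frames can be cached and new frames emitted as a stream, which is what makes interactive control feasible.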

3. Versatile Embodied‑AI Applications. LingBot‑World supports promptable world events, allowing users to steer global conditions or local dynamics through textual prompts. It also serves as a testbed for training reinforcement‑learning agents, and its generated videos can be fed into 3D reconstruction pipelines to verify geometric integrity. The authors provide a comparative table showing that, unlike proprietary systems such as Genie 3 or Mirage 2, LingBot‑World offers a general‑domain capability, long generation horizons, high dynamic degree, real‑time performance, and full open‑source availability (code, weights, checkpoints).

The paper emphasizes democratization: by releasing the entire stack, the authors aim to narrow the gap between closed‑source commercial world simulators and the research community. While the technical contributions—hierarchical captioning, MoE‑augmented diffusion, and few‑step distillation for real‑time inference—are compelling, the manuscript lacks extensive quantitative evaluation (e.g., PSNR/SSIM over long sequences, action success rates, user studies) and detailed analysis of memory drift or error accumulation across minute‑long trajectories. Moreover, the computational resources required for training (model size, GPU days) are not disclosed, which may pose a barrier for reproducibility.
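As an illustration of the long-sequence evaluation mentioned above, the following sketch computes a per-frame PSNR curve, where PSNR = 10 · log10(MAX² / MSE). A downward trend over time would indicate error accumulation. It assumes ground-truth reference frames are available for the same action sequence (for example, from held-out game recordings); the flattened pixel lists and the toy data are hypothetical and are not results from the paper.

```python
import math
from typing import List, Sequence


def psnr(ref: Sequence[float], gen: Sequence[float], max_val: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two flattened frames."""
    if len(ref) != len(gen) or len(ref) == 0:
        raise ValueError("frames must be non-empty and have the same size")
    mse = sum((r - g) ** 2 for r, g in zip(ref, gen)) / len(ref)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_val ** 2 / mse)


def psnr_curve(ref_frames: Sequence[Sequence[float]],
               gen_frames: Sequence[Sequence[float]]) -> List[float]:
    """PSNR for each frame pair, in temporal order."""
    return [psnr(r, g) for r, g in zip(ref_frames, gen_frames)]


# Toy data: the generated video drifts further from the reference over time.
reference = [[100.0] * 4, [100.0] * 4, [100.0] * 4]
generated = [[100.0] * 4, [101.0] * 4, [110.0] * 4]
curve = psnr_curve(reference, generated)
print([round(v, 2) for v in curve])
```

PSNR is only meaningful when a pixel-aligned reference exists. For open-ended generation without ground truth, other measures would be needed.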

In summary, LingBot‑World represents a comprehensive, open‑source attempt to transform video generation models into interactive, long‑horizon world simulators. Its integrated data pipeline, hierarchical semantic annotations, and staged training strategy push the state of the art toward practical, real‑time applications in content creation, gaming, and robot learning. Future work that adds rigorous benchmarks, ablation studies, and resource‑efficiency analyses will further solidify its position as a foundational platform for the next generation of virtual worlds.
