SemBind: Binding Diffusion Watermarks to Semantics Against Black-Box Forgery Attacks

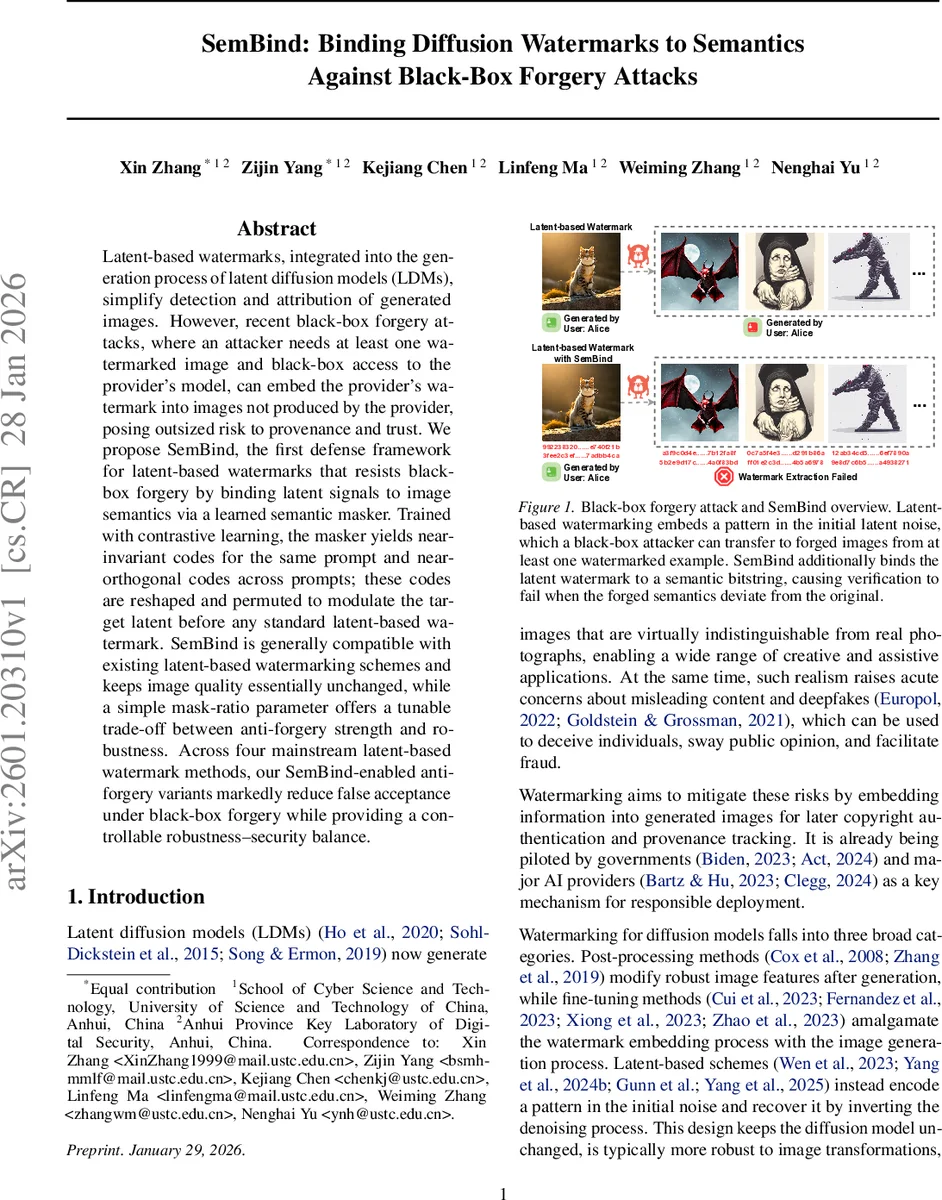

Latent-based watermarks, integrated into the generation process of latent diffusion models (LDMs), simplify detection and attribution of generated images. However, recent black-box forgery attacks, where an attacker needs at least one watermarked image and black-box access to the provider’s model, can embed the provider’s watermark into images not produced by the provider, posing outsized risk to provenance and trust. We propose SemBind, the first defense framework for latent-based watermarks that resists black-box forgery by binding latent signals to image semantics via a learned semantic masker. Trained with contrastive learning, the masker yields near-invariant codes for the same prompt and near-orthogonal codes across prompts; these codes are reshaped and permuted to modulate the target latent before any standard latent-based watermark. SemBind is generally compatible with existing latent-based watermarking schemes and keeps image quality essentially unchanged, while a simple mask-ratio parameter offers a tunable trade-off between anti-forgery strength and robustness. Across four mainstream latent-based watermark methods, our SemBind-enabled anti-forgery variants markedly reduce false acceptance under black-box forgery while providing a controllable robustness-security balance.

💡 Research Summary

The paper introduces SemBind, a novel defense framework for latent‑based watermarks in diffusion models that mitigates black‑box forgery attacks. Existing latent‑based schemes embed a pattern directly into the initial latent noise (z_T) and recover it via an inverse DDIM process. While this design leaves the diffusion backbone untouched and offers robustness to image transformations, it also makes the watermark vulnerable: an attacker with only black‑box query access and a single watermarked sample can invert the generated image to estimate the hidden latent, then either (i) imprint the watermark onto a semantically unrelated cover image or (ii) reuse the latent with a different prompt (reprompting). Both strategies succeed because the watermark is purely a latent‑space signal, independent of the image semantics.

SemBind addresses this weakness by binding the watermark to the semantics of the generated image. The core component is a semantic masker f_θ, a private model that maps any image to a compact binary code m ∈ {0,1}^B. The masker is built from a frozen vision encoder (e.g., DINOv2‑Giant) followed by three lightweight MLP modules: an encoder, a projection head for contrastive learning, and a hash head that outputs logits subsequently binarized. Training proceeds in two stages. First, the encoder and projection head are optimized with a supervised contrastive loss to cluster embeddings of images that share the same textual prompt while spreading different‑prompt embeddings across the hypersphere. Second, the hash head quantizes these clustered features into binary codes, encouraging (i) near‑zero Hamming distance for images generated from the same prompt, and (ii) an expected Hamming distance of B/2 for images from different prompts (i.e., near‑orthogonal codes).

During watermark embedding, the provider first generates a standard latent‑based watermark z_T^{wm} using any existing scheme (Tree‑Ring, Gaussian Shading, PRC, or Gaussian Shading++). Simultaneously, the semantic masker processes a clean image generated from the same prompt to produce the code m. This code is tiled, reshaped, and permuted under a secret key to form a mask M of the same spatial dimensions as the latent. A mask‑ratio parameter r controls how strongly the mask modulates the latent:

z_T^{*} = (1‑r)·(z_T^{wm} ⊙ M) + r·z_T^{wm}

Thus, when r = 0 the method reduces to the original watermark; when r approaches 1 the mask dominates. The modulated latent z_T^{*} is then fed to the diffusion denoiser to produce the final image.

At verification time, the same semantic masker is applied to the possibly forged image to recompute a code \hat{m} and reconstruct \hat{M}. If the image’s semantics match the original prompt, M and \hat{M} align, causing only a minor perturbation to the latent‑based watermark and allowing successful extraction. If an attacker has altered the semantics (as in imprinting or reprompting), the masks become essentially uncorrelated, introducing a large error that makes the watermark extraction fail.

The mask‑ratio r offers a tunable trade‑off: larger r yields stronger anti‑forgery protection but reduces robustness to benign perturbations (compression, noise, etc.). Experiments across the four representative latent‑based schemes show that setting r ≈ 0.2–0.3 dramatically lowers the false‑acceptance rate (FAR) under both imprinting and reprompting attacks—often by 70–90%—while preserving image quality (FID and CLIP scores differ by <0.01) and maintaining the original watermark’s detection robustness. Importantly, for schemes that already have provable undetectability (Gaussian Shading, PRC, Gaussian Shading++), the authors prove that SemBind’s linear mask multiplication does not alter the statistical distribution of the latent, thereby preserving the undetectability guarantee.

The paper also discusses limitations and future directions. The semantic masker relies on a fixed vision encoder; updates would require re‑training. Selecting the optimal r may depend on the dataset, prompt distribution, and underlying watermark, suggesting a need for adaptive tuning. Moreover, while SemBind thwarts current black‑box forgery strategies, an attacker might attempt to reverse‑engineer the masker or infer the binary code; integrating cryptographic protection for the code could further harden the system.

In summary, SemBind is the first framework that couples latent‑based diffusion watermarks with prompt‑conditioned semantic codes, delivering strong resistance to black‑box forgery without sacrificing the core benefits of latent‑based watermarking. Its modular design makes it compatible with existing watermark schemes, and its empirical and theoretical results demonstrate a practical path toward more trustworthy generative AI deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment