Endogenous Reprompting: Self-Evolving Cognitive Alignment for Unified Multimodal Models

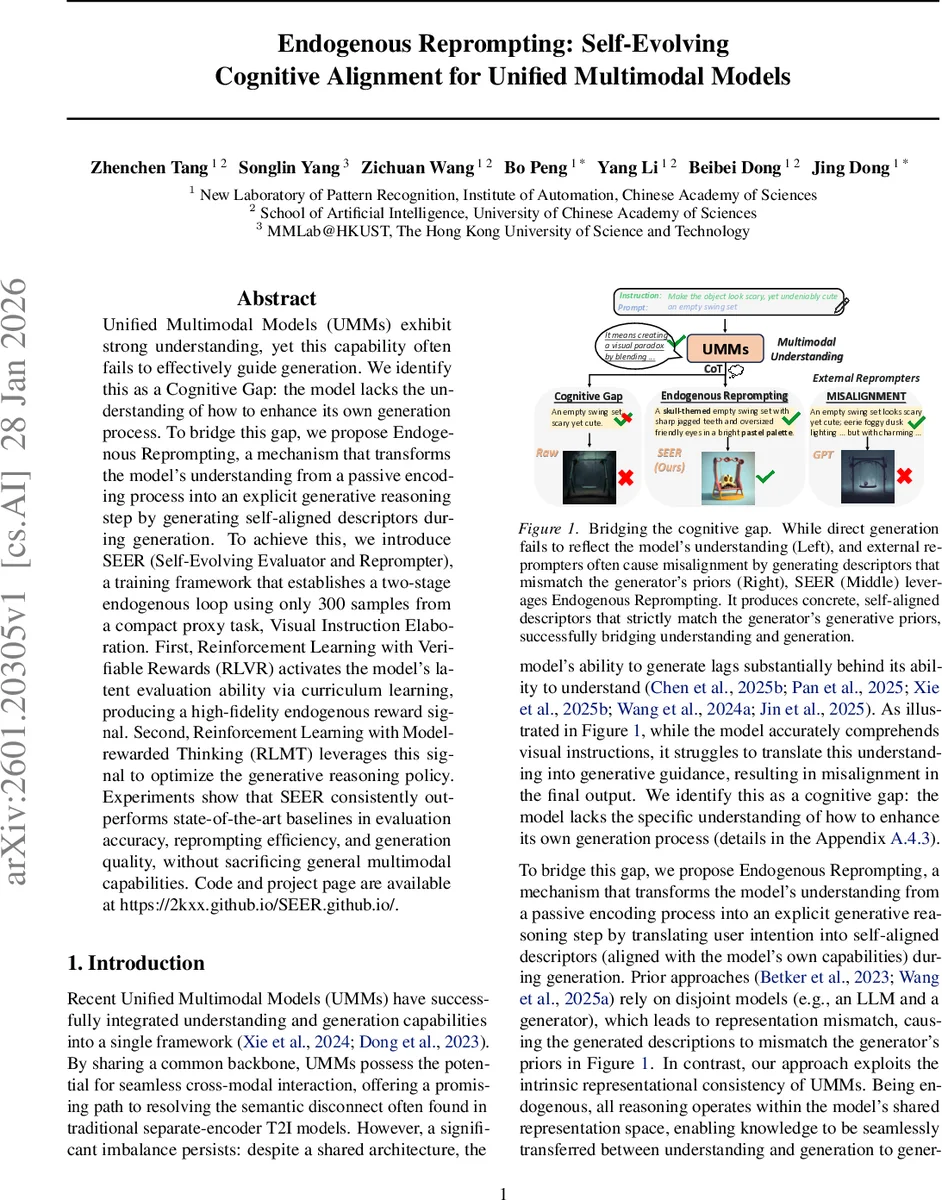

Unified Multimodal Models (UMMs) exhibit strong understanding, yet this capability often fails to effectively guide generation. We identify this as a Cognitive Gap: the model lacks the understanding of how to enhance its own generation process. To bridge this gap, we propose Endogenous Reprompting, a mechanism that transforms the model’s understanding from a passive encoding process into an explicit generative reasoning step by generating self-aligned descriptors during generation. To achieve this, we introduce SEER (Self-Evolving Evaluator and Reprompter), a training framework that establishes a two-stage endogenous loop using only 300 samples from a compact proxy task, Visual Instruction Elaboration. First, Reinforcement Learning with Verifiable Rewards (RLVR) activates the model’s latent evaluation ability via curriculum learning, producing a high-fidelity endogenous reward signal. Second, Reinforcement Learning with Model-rewarded Thinking (RLMT) leverages this signal to optimize the generative reasoning policy. Experiments show that SEER consistently outperforms state-of-the-art baselines in evaluation accuracy, reprompting efficiency, and generation quality, without sacrificing general multimodal capabilities.

💡 Research Summary

The paper identifies a “cognitive gap” in Unified Multimodal Models (UMMs): while these models excel at multimodal understanding, they fail to translate that understanding into effective generation. To close this gap, the authors introduce Endogenous Reprompting, a mechanism that turns the model’s internal comprehension into explicit generative reasoning by producing self‑aligned descriptors (reprompts) during the generation process. Unlike prior approaches that rely on external re‑prompting modules or separate language models—leading to representation mismatches—this method operates entirely within the model’s shared representation space, ensuring model‑specific alignment.

The core contribution is SEER (Self‑Evolving Evaluator and Reprompter), a two‑stage training framework that leverages a tiny proxy dataset of 300 Visual Instruction Elaboration samples. In Stage 1, Reinforcement Learning with Verifiable Rewards (RLVR) uses curriculum learning on pairwise image comparisons to activate an internal evaluator. This evaluator learns to assess three dimensions—instruction compliance, consistency with the original prompt, and overall image quality—producing a high‑fidelity, verifiable reward signal. In Stage 2, Reinforcement Learning with Model‑rewarded Thinking (RLMT) employs this endogenous reward to optimize a reprompting policy. The model receives an initial minimal prompt and a user instruction, generates a reasoning trace, and then produces a reprompt that is evaluated against a naive baseline. The policy is trained to maximize the expected relative preference while regularizing with a KL‑divergence term to preserve language fluency.

Experiments demonstrate that SEER consistently outperforms state‑of‑the‑art baselines in evaluation accuracy, reprompting efficiency, and generated image quality, all while keeping the generation parameters frozen and preserving general multimodal capabilities. The results suggest that optimizing the “think‑generate‑evaluate” loop—rather than directly fine‑tuning pixel‑level diffusion processes—offers a more fundamental pathway to unlock the generative potential of UMMs. In summary, the work provides a novel, data‑efficient paradigm for bridging the understanding‑generation divide in multimodal AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment