Spark: Strategic Policy-Aware Exploration via Dynamic Branching for Long-Horizon Agentic Learning

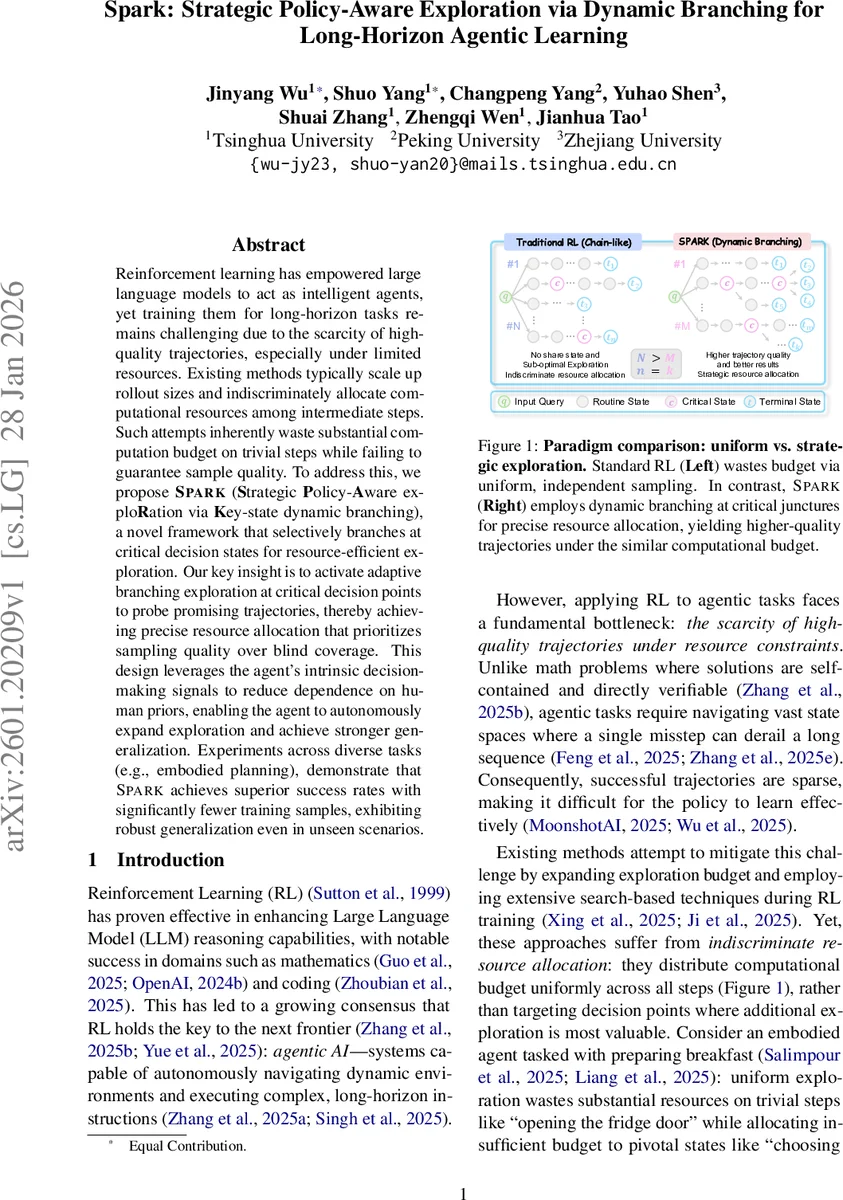

Reinforcement learning has empowered large language models to act as intelligent agents, yet training them for long-horizon tasks remains challenging due to the scarcity of high-quality trajectories, especially under limited resources. Existing methods typically scale up rollout sizes and indiscriminately allocate computational resources among intermediate steps. Such attempts inherently waste substantial computation budget on trivial steps while failing to guarantee sample quality. To address this, we propose \textbf{Spark} (\textbf{S}trategic \textbf{P}olicy-\textbf{A}ware explo\textbf{R}ation via \textbf{K}ey-state dynamic branching), a novel framework that selectively branches at critical decision states for resource-efficient exploration. Our key insight is to activate adaptive branching exploration at critical decision points to probe promising trajectories, thereby achieving precise resource allocation that prioritizes sampling quality over blind coverage. This design leverages the agent’s intrinsic decision-making signals to reduce dependence on human priors, enabling the agent to autonomously expand exploration and achieve stronger generalization. Experiments across diverse tasks (e.g., embodied planning), demonstrate that \textsc{Spark} achieves superior success rates with significantly fewer training samples, exhibiting robust generalization even in unseen scenarios.

💡 Research Summary

Paper Overview

The authors introduce Spark (Strategic Policy‑Aware exploration via Key‑state dynamic branching), a reinforcement‑learning framework designed to improve sample efficiency and success rates for large‑language‑model (LLM) agents tackling long‑horizon tasks. Traditional approaches either increase rollout size uniformly or apply exhaustive search, which wastes computation on trivial steps and fails to guarantee high‑quality trajectories under limited budgets. Spark addresses this by activating exploration only at critical decision points—states where the agent’s internal reasoning indicates high epistemic uncertainty or semantic ambiguity.

Key Mechanisms

- Root Initialization – At the start of each episode, Spark creates a small set (M) of diverse root trajectories, ensuring exploration variety without exhausting the budget.

- Autonomous Branching – During rollout, the policy generates a reasoning trace

z_t. If the trace contains a special<explore>token (learned during a brief supervised‑fine‑tuning phase), Spark treats the current state as a Spark point and spawnsBchild continuations. The branching factor is dynamically capped by the remaining global budgetN(b_eff = min(b, N‑N_current+1)). - Budget Enforcement – Guarantees that the total number of leaf nodes never exceeds

N, preventing runaway computation. - Tree‑Based Policy Update – Completed leaf trajectories are grouped by task, assigned binary success/failure rewards, and used to compute group‑normalized advantages. The policy is updated with a standard clipped objective and KL regularization, making Spark compatible with existing GRPO‑style pipelines.

Theoretical Insight

Let C be the set of pivotal steps (|C| = m ≪ K). For a single sample, the probability of taking a desirable action at step t∈C is q_t. With branching factor B, the probability that at least one child selects a desirable action becomes q_branch_t = 1 – (1 – q_t)^B, which strictly dominates q_t for any B ≥ 2. Because long‑horizon success typically requires correct decisions at multiple pivotal steps, the multiplicative effect across C dramatically raises the chance of discovering successful trajectories, while non‑pivotal steps remain sampled linearly, keeping token usage modest.

Experimental Evaluation

The authors evaluate Spark on three challenging domains:

- ALFWorld (embodied household tasks)

- ScienceWorld (complex scientific reasoning with >30 steps)

- WebShop (large‑scale product navigation).

Base models are Qwen2.5‑1.5B and 7B. Settings: total rollout budget N=8, initial roots M=4, branching factor B=2. Training runs for 120 RL steps with batch size 16.

Results

- Spark consistently outperforms strong baselines (GPT‑4o, GPT‑5, Gemini‑2.5‑Pro, ReAct, GRPO, GiGPO, RL‑VMR) across all model sizes and tasks. Notably, 1.5B Spark reaches 49.2 % success on the hardest ScienceWorld L2, beating GPT‑5 (33.6 %) and Gemini‑2.5‑Pro (30.5 %).

- Compared to uniform sampling (GRPO), Spark yields +23.3 % on ALFWorld “Look” and +39.4 % on “Pick2”, confirming that allocating extra rollouts to pivotal decisions yields larger gains on more complex coordination tasks.

- Generalization tests on out‑of‑domain L2 scenarios show Spark’s advantage persists (e.g., 80.5 % vs. 29.7 % on ALFWorld).

Ablations

- Removing the

<explore>trigger or settingB=1collapses performance to baseline levels, demonstrating the necessity of both the learned signal and the branching mechanism. - Varying

MandBshows diminishing returns beyondB=2, suggesting a sweet spot between exploration depth and budget consumption.

Limitations & Future Work

Spark’s effectiveness hinges on the quality of the <explore> token, which is learned in a modest SFT stage; insufficient exposure to diverse uncertainty patterns could cause missed Spark points. The current implementation uses a fixed branching factor; future work could incorporate meta‑learning to adapt B per state, or integrate multi‑policy ensembles for richer exploration. Extending the approach to continuous action spaces, multimodal observations, or hierarchical RL settings are promising directions.

Conclusion

Spark introduces a principled, resource‑aware exploration strategy that dynamically branches only at agent‑identified key states. By concentrating computational effort where it matters most, Spark achieves superior sample efficiency, higher success rates, and robust out‑of‑domain generalization, even with relatively small LLM backbones. The framework offers a practical pathway toward scalable, long‑horizon agentic learning without the prohibitive costs of exhaustive uniform rollout strategies.

Comments & Academic Discussion

Loading comments...

Leave a Comment