CLIP-Guided Unsupervised Semantic-Aware Exposure Correction

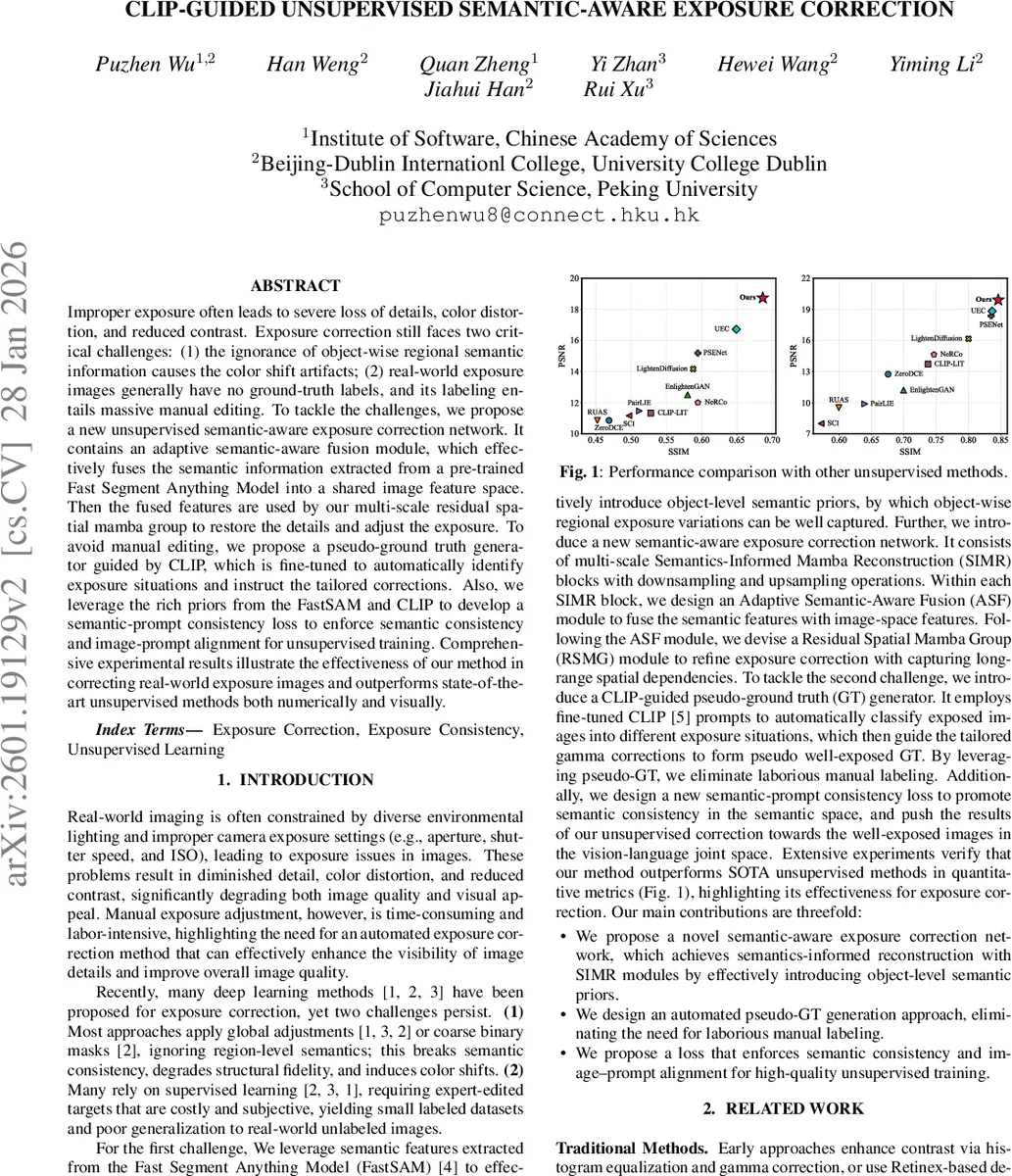

Improper exposure often leads to severe loss of details, color distortion, and reduced contrast. Exposure correction still faces two critical challenges: (1) the ignorance of object-wise regional semantic information causes the color shift artifacts; (2) real-world exposure images generally have no ground-truth labels, and its labeling entails massive manual editing. To tackle the challenges, we propose a new unsupervised semantic-aware exposure correction network. It contains an adaptive semantic-aware fusion module, which effectively fuses the semantic information extracted from a pre-trained Fast Segment Anything Model into a shared image feature space. Then the fused features are used by our multi-scale residual spatial mamba group to restore the details and adjust the exposure. To avoid manual editing, we propose a pseudo-ground truth generator guided by CLIP, which is fine-tuned to automatically identify exposure situations and instruct the tailored corrections. Also, we leverage the rich priors from the FastSAM and CLIP to develop a semantic-prompt consistency loss to enforce semantic consistency and image-prompt alignment for unsupervised training. Comprehensive experimental results illustrate the effectiveness of our method in correcting real-world exposure images and outperforms state-of-the-art unsupervised methods both numerically and visually.

💡 Research Summary

The paper tackles the long‑standing problem of correcting improperly exposed photographs without relying on manually annotated ground‑truth images. Two major shortcomings of prior work are identified: (1) most exposure‑correction methods ignore object‑level semantics, leading to color‑shift artifacts and loss of structural fidelity, and (2) real‑world datasets rarely provide well‑exposed references, making supervised training impractical. To address these issues, the authors propose a fully unsupervised, semantic‑aware exposure‑correction framework that integrates visual‑language priors from CLIP and segmentation priors from Fast Segment Anything (FastSAM).

Network Architecture

The core network consists of multiple Semantics‑Informed Mamba Reconstruction (SIMR) blocks arranged in a classic encoder‑decoder fashion. Each SIMR block contains:

-

Adaptive Semantic‑Aware Fusion (ASF) module – Takes image‑space features (F_mi) and semantic features (F_ms) extracted from FastSAM. A cross‑attention layer projects both modalities into a shared latent space, followed by a spatial‑frequency feed‑forward block. The frequency branch applies FFT, refines amplitude and phase with 1×1 convolutions, and reconstructs the signal via IFFT, capturing global exposure trends. The spatial branch processes the same tensor with three standard convolutions to retain local detail. The two branches are summed and layer‑normalized, producing a fused feature E_ma.

-

Residual Spatial Mamba Group (RSMG) – Built on the VMamba architecture, the group replaces the original S4 component with a 2‑D Selective Scan Module (2D‑SSM), enabling linear‑complexity modeling of long‑range spatial dependencies. Each Spatial Mamba Block (SMB) within the group contains a Vision Mamba Module (VMM) for global context and a separate spatial‑attention branch for fine‑grained correction. Residual connections ensure stable training and preserve low‑level details.

The multi‑scale design allows the network to handle mixed lighting conditions, where some objects may be under‑exposed while others are over‑exposed within the same frame.

CLIP‑Guided Pseudo‑Ground‑Truth Generation

To obtain supervision without manual labels, the authors fine‑tune three textual prompts (T_w, T_u, T_o) representing well‑exposed, under‑exposed, and over‑exposed states using an unpaired image collection. Images are encoded with the CLIP image encoder Φ_image, prompts with the CLIP text encoder Φ_text, and a triplet cross‑entropy loss aligns each prompt with its corresponding image group while pushing apart mismatched pairs.

For a new input image I, cosine similarities with the under‑ and over‑exposed prompts are computed. The higher similarity determines whether the image needs brightening or darkening. A gamma transformation I_pgt = 1 − (1 − I)^γ is applied, where γ is initially set heuristically. γ is then refined by gradient ascent on the similarity between the transformed image and the well‑exposed prompt T_w, iterating until convergence. This process yields a pseudo‑ground‑truth (pseudo‑GT) that mimics a properly exposed version of the input, entirely without human annotation.

Loss Functions

Training combines three components:

- Pixel‑level loss: L_MSE enforces intensity fidelity; L_COS encourages color consistency in sRGB space.

- Semantic‑Prompt Consistency (SPC) loss:

- Semantic Feature Consistency (SFC) – Uses FastSAM semantic maps of the output, pseudo‑GT, and input. It minimizes L1 distance and Gram‑matrix differences across semantic channels, ensuring that each object region receives a coherent exposure adjustment.

- Image‑Prompt Alignment (IPA) – Maximizes cosine similarity between the corrected output and the well‑exposed prompt while minimizing similarity to the under‑ and over‑exposed prompts, effectively pulling the output toward the “well‑exposed” region of CLIP’s joint vision‑language space.

The total loss is L_total = λ₁ L_MSE + λ₂ L_COS + λ₃ L_SPC, with λ’s tuned empirically.

Experiments

The method is trained on the under‑ and over‑exposed subsets of MSEC (2,830 train / 193 test) and SICE (512 train / 30 test). Evaluation uses PSNR, SSIM, LPIPS, BRISQUE, and NIMA. Compared against a broad set of unsupervised baselines (ZeroDCE, RUAS, EnlightenGAN, SCI, PairLIE, CLIP‑LIT, NeRCo, PSENet, LightenDiffusion, UEC), the proposed model achieves the highest PSNR/SSIM on both datasets and consistently lower LPIPS and BRISQUE scores, indicating superior perceptual quality. Ablation studies show that removing the ASF module or the SPC loss leads to noticeable color shifts and structural artifacts, confirming the necessity of both semantic fusion and vision‑language alignment.

Contributions and Impact

- Introduces a novel semantic‑aware exposure correction network that injects object‑level priors from FastSAM into a Mamba‑based reconstruction pipeline.

- Proposes a fully automatic CLIP‑guided pseudo‑GT generator, eliminating the need for costly manual labeling.

- Designs a dual‑component loss that enforces semantic consistency and aligns corrected images with well‑exposed textual prompts, bridging visual and linguistic domains.

Overall, the paper presents a compelling unsupervised solution that leverages state‑of‑the‑art segmentation and vision‑language models to achieve high‑quality exposure correction, opening avenues for label‑free enhancement in related tasks such as low‑light restoration, HDR synthesis, and domain adaptation.

Comments & Academic Discussion

Loading comments...

Leave a Comment