Accelerated Sinkhorn Algorithms for Partial Optimal Transport

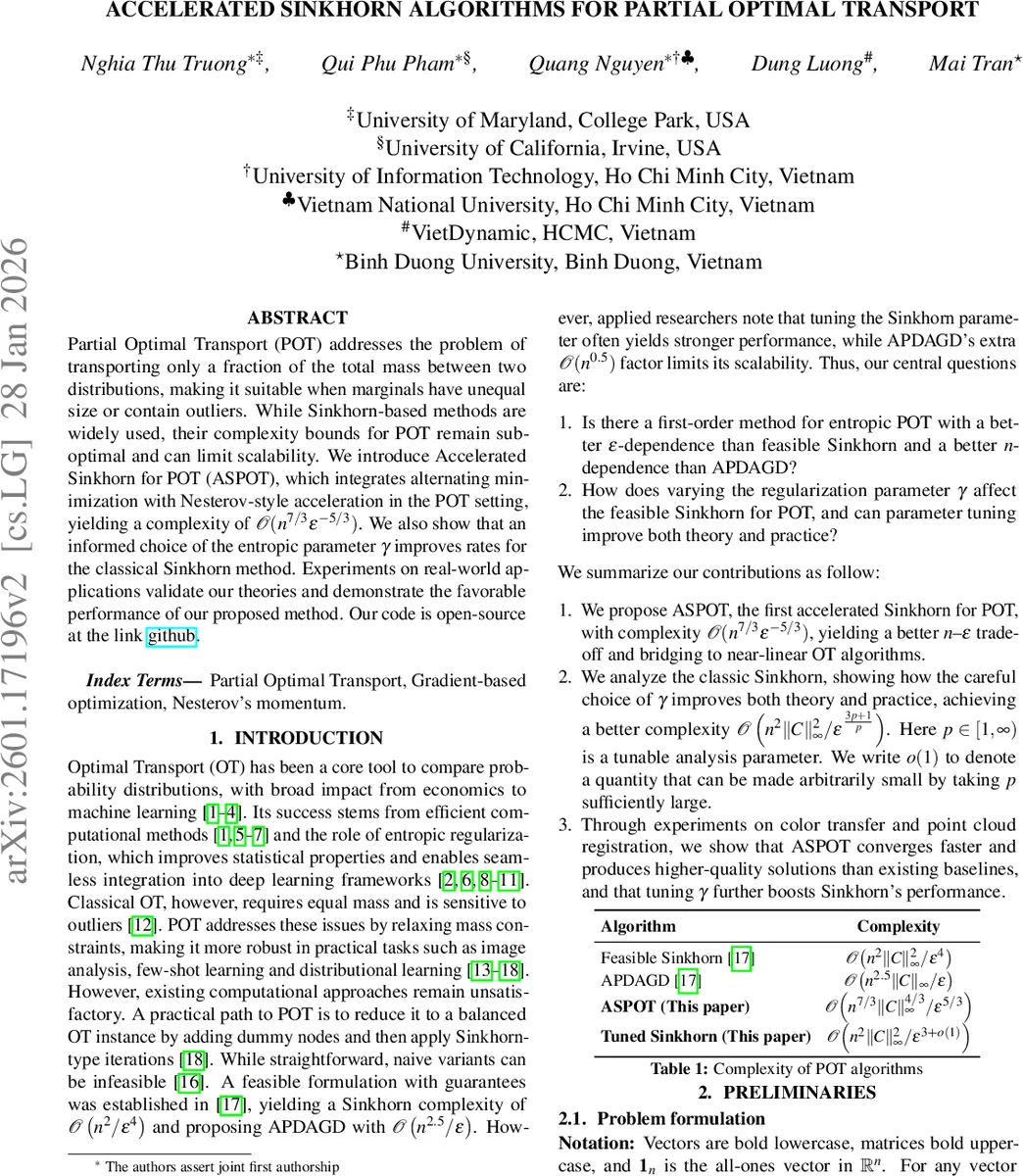

Partial Optimal Transport (POT) addresses the problem of transporting only a fraction of the total mass between two distributions, making it suitable when marginals have unequal size or contain outliers. While Sinkhorn-based methods are widely used, their complexity bounds for POT remain suboptimal and can limit scalability. We introduce Accelerated Sinkhorn for POT (ASPOT), which integrates alternating minimization with Nesterov-style acceleration in the POT setting, yielding a complexity of $\mathcal{O}(n^{7/3}\varepsilon^{-5/3})$. We also show that an informed choice of the entropic parameter $\gamma$ improves rates for the classical Sinkhorn method. Experiments on real-world applications validate our theories and demonstrate the favorable performance of our proposed methods.

💡 Research Summary

The paper tackles the computational challenges of Partial Optimal Transport (POT), a variant of optimal transport where only a fraction of the total mass needs to be moved, making it suitable for scenarios with unequal marginals or outliers. While entropic regularization and Sinkhorn iterations have become the de‑facto standard for balanced optimal transport, their direct application to POT yields suboptimal complexity bounds (typically $\mathcal{O}(n^{2}\varepsilon^{-4})$), limiting scalability. Existing accelerated methods such as APD‑AGD improve the ε‑dependence but suffer from an unfavorable n‑dependence ($\mathcal{O}(n^{2.5}\varepsilon^{-1})$).
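To make the setting concrete, here is a minimal sketch of entropic POT solved with plain Sinkhorn iterations. It uses the well-known dummy-point reformulation (one extra row and column that absorb the untransported mass), which is a swapped-in illustration and not necessarily the feasible Sinkhorn variant or the ASPOT method analyzed in the paper. The function name `entropic_pot_sinkhorn` and the symbols `C` (cost matrix), `r`, `c` (marginals), and `s` (mass to transport) are assumptions introduced for this example.

```python
import numpy as np

def entropic_pot_sinkhorn(C, r, c, s, gamma=0.1, n_iter=2000):
    """Illustrative entropic POT via a dummy row/column and plain Sinkhorn.

    Assumes 0 < s <= min(r.sum(), c.sum()). Not the paper's algorithm.
    """
    n, m = C.shape
    # Extended Gibbs kernel: real block exp(-C/gamma); moving mass to or from
    # the dummy point costs 0 (kernel 1); the dummy-dummy cell is forbidden.
    K = np.ones((n + 1, m + 1))
    K[:n, :m] = np.exp(-C / gamma)
    K[n, m] = 0.0
    # Extended marginals: the dummy points absorb the mass left in place.
    r_ext = np.append(r, c.sum() - s)
    c_ext = np.append(c, r.sum() - s)
    u = np.ones(n + 1)
    v = np.ones(m + 1)
    for _ in range(n_iter):
        u = r_ext / (K @ v)    # match the extended row marginals
        v = c_ext / (K.T @ u)  # match the extended column marginals
    X_ext = u[:, None] * K * v[None, :]
    return X_ext[:n, :m]       # drop the dummy row and column

# Usage: unequal total masses (1.0 vs 0.8), transport only s = 0.5.
rng = np.random.default_rng(0)
C = rng.random((4, 5))
r = rng.random(4); r /= r.sum()
c = rng.random(5); c *= 0.8 / c.sum()
X = entropic_pot_sinkhorn(C, r, c, s=0.5, gamma=0.2, n_iter=5000)
print(X.sum())  # total transported mass, close to 0.5 after convergence
```

The returned plan satisfies the marginal inequalities and the mass constraint only up to the convergence error of the iterations, which is why accuracy guarantees for Sinkhorn-type POT solvers need a careful analysis.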

The authors address two central questions: (1) Can a first‑order method for entropic POT achieve a better ε‑dependence than feasible Sinkhorn while also improving the n‑dependence compared to APD‑AGD? (2) How does the choice of the entropic regularization parameter γ affect both theory and practice for POT‑Sinkhorn?
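For orientation, the role of γ can be read off the usual entropic POT objective. The symbols $C$ (cost matrix), $r$ and $c$ (source and target marginals), $s$ (mass to transport), and $H$ (entropy) are not defined in this summary; they are assumptions taken from the standard POT formulation, and the paper's exact notation may differ.

$$
\min_{X \in \mathbb{R}_{+}^{n \times n}} \ \langle C, X \rangle - \gamma H(X)
\quad \text{s.t.} \quad X\mathbf{1} \le r,\quad X^{\top}\mathbf{1} \le c,\quad \mathbf{1}^{\top} X \mathbf{1} = s,
\qquad H(X) = -\sum_{i,j} X_{ij}\,(\log X_{ij} - 1).
$$

A smaller γ keeps the regularized solution closer to the unregularized POT plan but typically slows Sinkhorn-type iterations, which is the trade-off behind question (2).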

Algorithmic contribution – ASPOT

The core contribution is Accelerated Sinkhorn for POT (ASPOT), which blends Nesterov‑style momentum with the Greenkhorn coordinate‑wise update. Each outer iteration performs:

- An extrapolation step (Nesterov) to obtain a provisional dual vector $\bar{z}_t$.

- A gradient step scaled by γ and the sum of the marginal deficits.

- A Greenkhorn correction that adjusts the coordinate with the largest residual (either a source marginal, a target marginal, or the total mass constraint).
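The third step can be illustrated with the classical Greenkhorn update for balanced entropic OT. This is a minimal sketch under stated assumptions, not ASPOT itself: it omits the Nesterov extrapolation, the gradient step, and the POT total-mass constraint, and the names `greenkhorn`, `violation`, `C`, `r`, and `c` are introduced here for illustration only.

```python
import numpy as np

def violation(a, b):
    # Greenkhorn's measure of mismatch between a target marginal a and the
    # current marginal b: b - a + a*log(a/b), zero exactly when a == b.
    return b - a + a * np.log(a / b)

def greenkhorn(C, r, c, gamma=0.1, n_iter=3000):
    """Greedy coordinate-wise Sinkhorn for balanced OT (illustrative)."""
    K = np.exp(-C / gamma)
    u = np.ones(len(r))
    v = np.ones(len(c))
    for _ in range(n_iter):
        # Recomputing the full plan costs O(n^2) per step; efficient
        # implementations update the marginals incrementally in O(n).
        X = u[:, None] * K * v[None, :]
        row, col = X.sum(axis=1), X.sum(axis=0)
        viol_r, viol_c = violation(r, row), violation(c, col)
        i, j = viol_r.argmax(), viol_c.argmax()
        if viol_r[i] >= viol_c[j]:
            u[i] *= r[i] / row[i]  # rescale only the worst row
        else:
            v[j] *= c[j] / col[j]  # rescale only the worst column
    return u[:, None] * K * v[None, :]

# Usage on a small balanced problem (both marginals sum to 1).
rng = np.random.default_rng(0)
C = rng.random((4, 4))
r = np.array([0.1, 0.2, 0.3, 0.4])
c = np.array([0.25, 0.25, 0.25, 0.25])
X = greenkhorn(C, r, c, gamma=0.2)
print(np.abs(X.sum(axis=1) - r).max(), np.abs(X.sum(axis=0) - c).max())
```

Each iteration touches a single scaling coordinate, chosen greedily by the largest violation. In the POT setting described above, the candidate set would also include the total-mass constraint.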

This design avoids the $\mathcal{O}(n^{0.5})$ penalty that naïve combinations (e.g., the Alternating Accelerated Method) would incur. The theoretical analysis shows that the dual suboptimality $\delta_t$ decays as $\mathcal{O}(1/t^2)$ (Lemma 2) and that the feasibility error $E(t)$ is tightly linked to the dual decrease. Together, these results bound the number of iterations required to achieve an ε‑approximation and lead to the overall complexity of $\mathcal{O}(n^{7/3}\varepsilon^{-5/3})$ stated in the abstract.
