GCL-OT: Graph Contrastive Learning with Optimal Transport for Heterophilic Text-Attributed Graphs

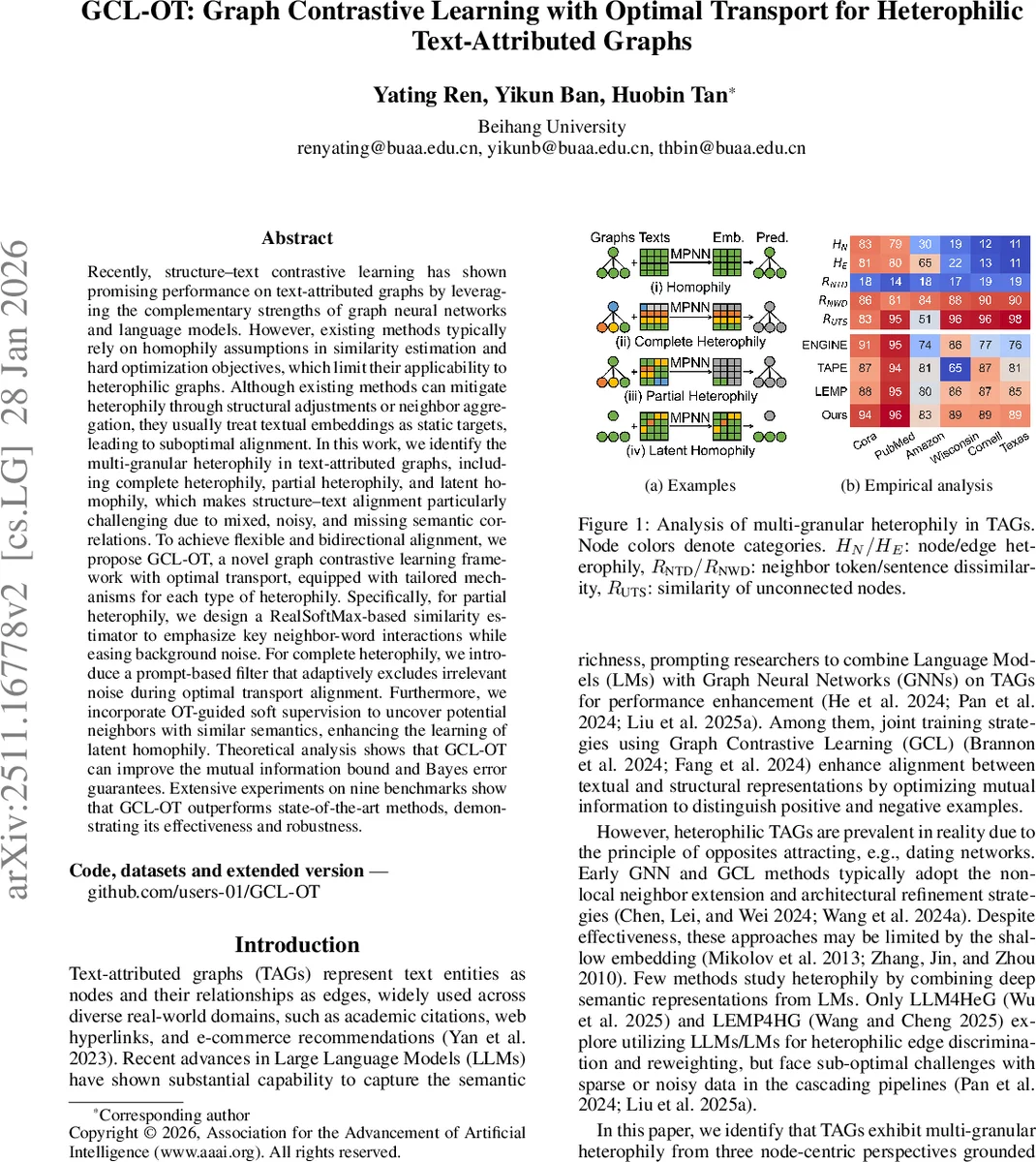

Recently, structure-text contrastive learning has shown promising performance on text-attributed graphs by leveraging the complementary strengths of graph neural networks and language models. However, existing methods typically rely on homophily assumptions in similarity estimation and hard optimization objectives, which limit their applicability to heterophilic graphs. Although existing methods can mitigate heterophily through structural adjustments or neighbor aggregation, they usually treat textual embeddings as static targets, leading to suboptimal alignment. In this work, we identify multi-granular heterophily in text-attributed graphs, including complete heterophily, partial heterophily, and latent homophily, which makes structure-text alignment particularly challenging due to mixed, noisy, and missing semantic correlations. To achieve flexible and bidirectional alignment, we propose GCL-OT, a novel graph contrastive learning framework with optimal transport, equipped with tailored mechanisms for each type of heterophily. Specifically, for partial heterophily, we design a RealSoftMax-based similarity estimator to emphasize key neighbor-word interactions while easing background noise. For complete heterophily, we introduce a prompt-based filter that adaptively excludes irrelevant noise during optimal transport alignment. Furthermore, we incorporate OT-guided soft supervision to uncover potential neighbors with similar semantics, enhancing the learning of latent homophily. Theoretical analysis shows that GCL-OT can improve the mutual information bound and Bayes error guarantees. Extensive experiments on nine benchmarks show that GCL-OT outperforms state-of-the-art methods, demonstrating its effectiveness and robustness.

💡 Research Summary

The paper introduces GCL‑OT, a novel graph contrastive learning framework that explicitly tackles the challenges posed by heterophilic text‑attributed graphs (TAGs). Traditional graph contrastive learning (GCL) methods rely on the homophily assumption, pairing each node’s structural view with its textual view in a one‑to‑one fashion using InfoNCE. This works well when neighboring nodes share similar labels and textual content, but fails dramatically on heterophilic graphs where (i) only a subset of a node’s text aligns with its neighbors (partial heterophily), (ii) the text is completely unrelated to the structural neighborhood (complete heterophily), or (iii) semantically similar nodes are not connected (latent homophily). The authors formalize these three patterns as “multi‑granular heterophily” and argue that a hard 1:1 alignment is insufficient because it cannot capture many‑to‑many (N:N) relationships and is vulnerable to noisy or missing semantic signals.
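For reference, the hard 1:1 objective described above can be sketched as a symmetric InfoNCE loss in which node i's structural embedding is paired only with node i's own text embedding. This is a minimal NumPy illustration, not the authors' code; the function name `info_nce_1to1`, the cosine normalization, and the default temperature `tau` are assumptions made for the example.

```python
import numpy as np

def info_nce_1to1(struct_emb, text_emb, tau=0.1):
    """Symmetric InfoNCE with a hard one-to-one pairing (illustrative only)."""
    # L2-normalise so that dot products are cosine similarities (assumption).
    s = struct_emb / np.linalg.norm(struct_emb, axis=1, keepdims=True)
    t = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = s @ t.T / tau  # (N, N): entry (i, j) compares structure i with text j

    def diagonal_cross_entropy(l):
        # Row-wise log-softmax, then keep only the diagonal (the sole positive pair).
        l = l - l.max(axis=1, keepdims=True)
        log_prob = l - np.log(np.exp(l).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_prob))

    # Average the structure-to-text and text-to-structure directions.
    return 0.5 * (diagonal_cross_entropy(logits) + diagonal_cross_entropy(logits.T))
```

Because only the diagonal counts as positive, every other node is treated as a negative, including nodes that are semantically close but unconnected. That is the limitation the many-to-many formulation is meant to address.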

Key Contributions

- RealSoftMax‑based similarity estimator – For partial heterophily, the authors replace a simple dot‑product or max‑pooling with a RealSoftMax function, a smooth interpolation between mean and max. Given neighbor embeddings and word embeddings, RealSoftMax emphasizes the most relevant neighbor‑word pairs while still retaining background information, controlled by a temperature‑like parameter β. This bidirectional estimator computes the similarity s_ij as the average of two RealSoftMax terms, one focusing on words for each neighbor and the other on neighbors for each word.

- Prompt‑based filter for complete heterophily – To prevent completely unrelated text from contaminating the alignment, a learnable “prompt vector” z is appended to the global similarity matrix, creating an augmented matrix \(\bar{S}\) of size (N+1)×(N+1). If the maximum similarity of a row/column falls below the value of z, the corresponding node is forced to align with the prompt rather than any other node, effectively discarding noisy pairs.

- Optimal transport (OT) as a soft alignment backbone – The augmented similarity matrix \(\bar{S}\) is fed into an entropy‑regularized OT problem solved with the Sinkhorn algorithm (implemented as LRSinkhorn). The resulting transport plan Q* provides fractional assignments between structural and textual embeddings, enabling many‑to‑many matching. The OT distance is then turned into a contrastive affinity \(d_{ij} = \exp(q^*_{ij}\,\bar{s}_{ij}/\tau)\). A bidirectional contrastive loss L_MHA (a variant of InfoNCE) is applied on these affinities, encouraging high scores for matched pairs while penalizing mismatches.

- OT‑guided latent homophily mining – Random negative sampling can mistakenly treat semantically similar but unconnected nodes as negatives. To alleviate this, the authors reuse the OT assignment matrix Q* as a soft target distribution P = I + Q* (I is the identity for self‑positives). A latent homophily loss L_LHM is defined as the cross‑entropy between P and the softmaxed similarity scores, encouraging the model to pull together nodes that OT deems similar even if they lack an explicit edge.

- Theoretical analysis – The paper shows that using OT‑based soft matching tightens the mutual‑information (MI) lower bound of contrastive learning compared with standard InfoNCE, because the set of positive pairs is effectively enlarged. Moreover, the OT‑derived soft targets reduce the Bayes error bound for downstream node classification, providing a formal guarantee of improved generalization.

- Extensive experiments – The authors evaluate GCL‑OT on nine public TAG benchmarks covering citation networks, e‑commerce co‑purchase graphs, and social media datasets. They compare against strong baselines: PolyGCL, HeterGCL, GraphGPT, Congrat, as well as heterophily‑specific methods LLM4HeG and LEMP4HG. Across all datasets, GCL‑OT achieves the highest accuracy and F1 scores, with especially large gains (up to 10% absolute improvement) on graphs exhibiting strong complete heterophily. Ablation studies confirm that each component (RealSoftMax, prompt filter, OT‑guided loss) contributes positively. Computationally, the Sinkhorn step adds modest overhead (≈1.2× training time, 1.3× memory) but remains feasible for graphs up to several hundred thousand nodes.
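To make the RealSoftMax estimator from the first contribution more concrete, the sketch below assumes RealSoftMax is the scaled log-mean-exp, (1/β)·log(mean(exp(β·x))), which tends to the mean as β approaches 0 and to the max as β grows. This exact form is an assumption chosen to match the "interpolation between mean and max" description, and the paper's definition may differ. The outer averaging over neighbors and words, and the names `real_softmax` and `bidirectional_similarity`, are likewise illustrative.

```python
import numpy as np

def real_softmax(x, beta, axis=-1):
    """Scaled log-mean-exp (assumed form); requires beta > 0."""
    x = np.asarray(x, dtype=float)
    m = x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    log_mean_exp = np.log(np.mean(np.exp(beta * (x - m)), axis=axis, keepdims=True))
    return np.squeeze(m + log_mean_exp / beta, axis=axis)

def bidirectional_similarity(neigh_emb, word_emb, beta=5.0):
    """Similarity between one node's K neighbor embeddings and another node's M word embeddings."""
    c = neigh_emb @ word_emb.T                     # (K, M) neighbor-word scores
    per_neighbor = real_softmax(c, beta, axis=1)   # each neighbor pools over words
    per_word = real_softmax(c, beta, axis=0)       # each word pools over neighbors
    # Average the two directions; the outer mean is an assumption of this sketch.
    return 0.5 * (per_neighbor.mean() + per_word.mean())
```

A small β behaves like mean pooling and keeps background context, while a large β approaches max pooling and keeps only the strongest neighbor-word interaction.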

Implications and Future Work

GCL‑OT demonstrates that optimal transport can serve as a principled soft alignment mechanism for heterophilic text‑attributed graphs, moving beyond the restrictive 1:1 pairing of traditional contrastive methods. By integrating RealSoftMax and prompt‑based filtering, the framework adapts to varying degrees of semantic mismatch, while the OT‑guided latent homophily loss leverages hidden semantic neighborhoods. The authors acknowledge remaining challenges: automatic tuning of β and the prompt vector, scaling Sinkhorn to very large graphs, and extending the approach to multimodal graphs (e.g., image‑text). Nonetheless, the work provides a solid foundation for future research on robust graph‑text integration in heterophilic settings.
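The soft-alignment idea can be illustrated with a plain Sinkhorn iteration on a prompt-augmented similarity matrix. This is a hedged sketch rather than the paper's implementation: it uses standard Sinkhorn instead of LRSinkhorn, treats the prompt z as a scalar threshold, and assumes uniform marginals. It also row-normalizes P = I + Q* so each row is a valid target distribution, which the summary does not specify. All function names are hypothetical.

```python
import numpy as np

def augment_with_prompt(sim, z):
    """Append one prompt row and column filled with z, giving an (N+1) x (N+1) matrix."""
    n = sim.shape[0]
    aug = np.full((n + 1, n + 1), float(z))
    aug[:n, :n] = sim
    return aug

def sinkhorn_plan(sim_aug, eps=0.1, n_iters=200):
    """Entropy-regularised OT plan for a similarity matrix (uniform marginals assumed)."""
    m = sim_aug.shape[0]
    a = np.full(m, 1.0 / m)  # row marginal
    b = np.full(m, 1.0 / m)  # column marginal
    # Gibbs kernel; shifting by the max only rescales the kernel and avoids overflow.
    kernel = np.exp((sim_aug - sim_aug.max()) / eps)
    u = np.ones(m)
    v = np.ones(m)
    for _ in range(n_iters):
        u = a / (kernel @ v)      # rescale rows towards marginal a
        v = b / (kernel.T @ u)    # rescale columns towards marginal b
    return u[:, None] * kernel * v[None, :]

def soft_targets(plan):
    """Build P = I + Q* on the node block, then row-normalise (normalisation is an assumption)."""
    q = plan[:-1, :-1]            # drop the prompt row and column
    p = np.eye(q.shape[0]) + q
    return p / p.sum(axis=1, keepdims=True)
```

In this sketch, the mass a node sends to the prompt column is simply dropped when the node block is extracted, so it contributes no cross-node soft targets.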

In summary, GCL‑OT offers a comprehensive solution that (1) refines similarity estimation for partial heterophily, (2) filters out irrelevant text for complete heterophily, (3) employs optimal transport to enable many‑to‑many soft alignment, and (4) exploits the transport plan to discover latent homophily. Theoretical guarantees and strong empirical results together make it a significant advancement in the field of graph contrastive learning for text‑attributed graphs.
