DoubleAgents: Interactive Simulations for Alignment in Agentic AI

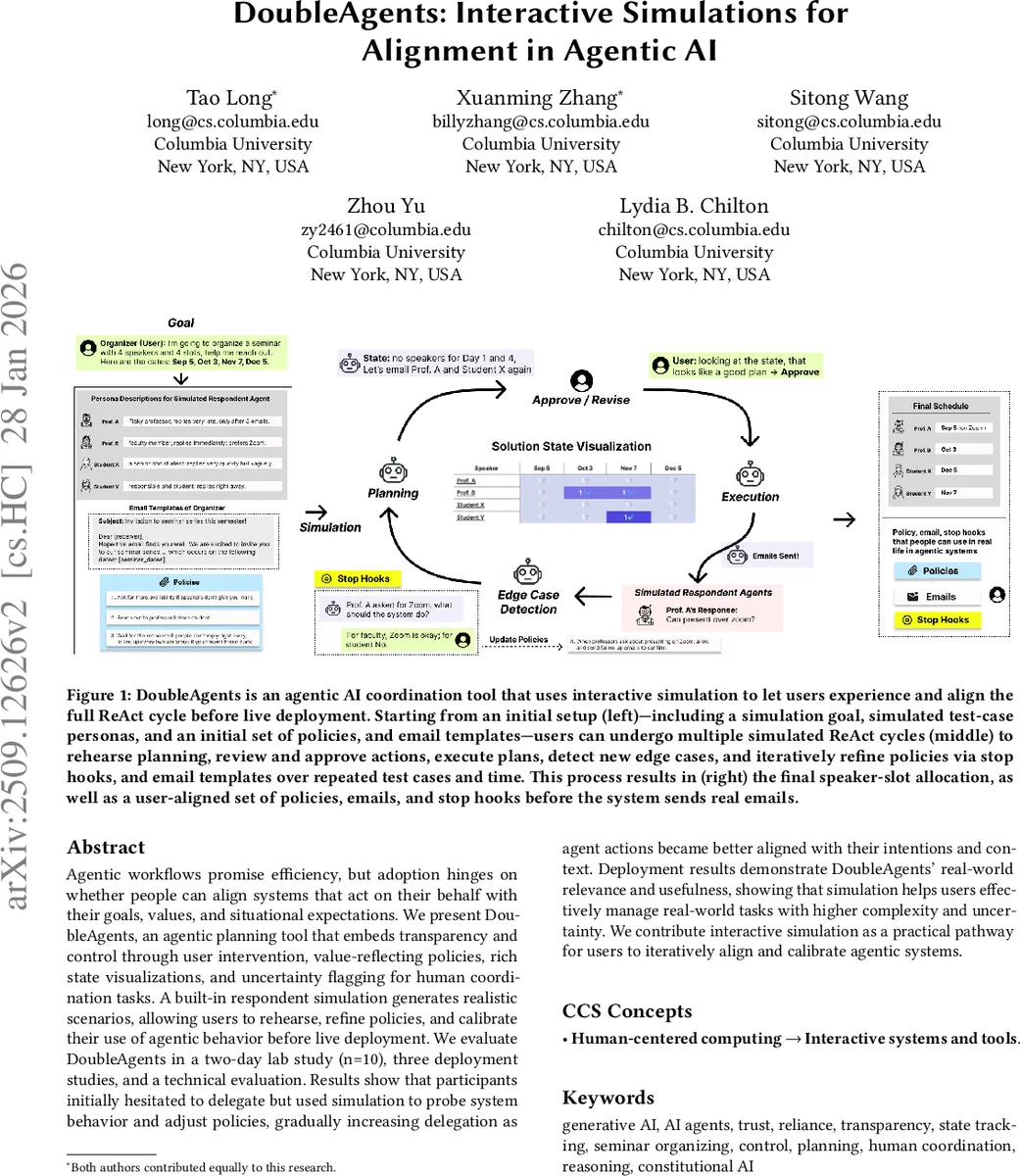

Agentic workflows promise efficiency, but adoption hinges on whether people can align systems that act on their behalf with their goals, values, and situational expectations. We present DoubleAgents, an agentic planning tool that embeds transparency and control through user intervention, value-reflecting policies, rich state visualizations, and uncertainty flagging for human coordination tasks. A built-in respondent simulation generates realistic scenarios, allowing users to rehearse and refine policies and calibrate their use of agentic behavior before live deployment. We evaluate DoubleAgents in a two-day lab study (n = 10), three deployment studies, and a technical evaluation. Results show that participants initially hesitated to delegate but used simulation to probe system behavior and adjust policies, gradually increasing delegation as agent actions became better aligned with their intentions and context. Deployment results demonstrate DoubleAgents’ real-world relevance and usefulness, showing that simulation helps users effectively manage real-world tasks with higher complexity and uncertainty. We contribute interactive simulation as a practical pathway for users to iteratively align and calibrate agentic systems.

💡 Research Summary

The paper tackles the problem of aligning agentic AI systems—software that acts on a user’s behalf—with the user’s goals, values, and situational expectations. Building on the “Bidirectional Human‑AI Alignment” framework, the authors argue that alignment must be an ongoing co‑adaptation process: the system continuously incorporates user feedback and context, while the user develops mental models and literacy to supervise and calibrate delegation. To operationalize this, they introduce DoubleAgents, an interactive planning tool that embeds transparency, control, and alignment mechanisms into a ReAct‑style reasoning‑and‑acting loop.

System Architecture

DoubleAgents consists of three functional layers:

- Core ReAct Agents – a Coordination Agent that reasons over the current task state using a set of user‑defined policies, proposes plans, and invokes tools (e.g., email drafting, calendar queries).

- Simulated Respondents – LLM‑driven agents that emulate realistic human replies based on persona descriptions (slow‑responding professor, budget‑constrained researcher, etc.). This simulation provides a safe sandbox for users to observe how the coordination agent behaves under diverse, edge‑case scenarios.

- Context Management – a state tracker that records time stamps, email logs, policy edits, and persona data, continuously feeding this information back into the reasoning loop.
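The Context Management layer can be pictured as a small record that the reasoning loop reads back on every turn. The following is a minimal sketch, not the authors' implementation: the class names `Persona` and `ContextState`, their fields, and the `summary` format are all hypothetical, chosen only to mirror the items the summary lists (time stamps, email logs, policy edits, persona data).

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Persona:
    """Hypothetical persona description for a simulated respondent."""
    name: str
    description: str  # e.g. "slow-responding professor"


@dataclass
class ContextState:
    """Hypothetical state tracker holding what the reasoning loop reads back."""
    now: datetime
    email_log: list = field(default_factory=list)
    policy_edits: list = field(default_factory=list)
    personas: dict = field(default_factory=dict)

    def log_email(self, sender: str, recipient: str, body: str) -> None:
        # Record one message together with the current (simulated) time.
        self.email_log.append(
            {"time": self.now, "from": sender, "to": recipient, "body": body}
        )

    def summary(self) -> str:
        """Compact text summary that could be placed in the agent's prompt."""
        return (
            f"time={self.now.isoformat()} "
            f"emails={len(self.email_log)} "
            f"policy_edits={len(self.policy_edits)} "
            f"personas={sorted(self.personas)}"
        )


state = ContextState(now=datetime(2025, 1, 6, 9, 0))
state.personas["prof_a"] = Persona("prof_a", "slow-responding professor")
state.log_email("coordinator", "prof_a", "Can you speak on Jan 20?")
print(state.summary())
```

Keeping the state in one plain object makes it easy to show the same data in the UI views and to feed it to the agent, which is one plausible reading of "continuously feeding this information back into the reasoning loop".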

The UI presents a Policy Panel, an Interactive Chat Interface, a Plan‑and‑Action pop‑up, an Assignment Tracker, a Communication History Viewer, and a Calendar View. Users can add, edit, or delete policies (e.g., “Prefer senior faculty when slots conflict”), craft email templates that reflect their voice, and define stop hooks that automatically flag situations where the agent’s action falls outside policy coverage, prompting human confirmation.
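To make the relationship between policies and stop hooks concrete, here is a minimal sketch under stated assumptions. The summary does not say how policies are represented or how coverage is decided, so the `Policy` class, the `covers` predicate, the `check_stop_hook` function, and the action kinds are hypothetical. In the real system the coverage judgment is presumably made by the agent rather than by a hand-written predicate.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Policy:
    """Hypothetical policy: a natural-language rule plus a coverage test."""
    text: str
    covers: Callable[[dict], bool]  # True if the policy applies to the action


def check_stop_hook(action: dict, policies: list) -> Optional[str]:
    """Return a flag message when no policy covers the action, else None."""
    if any(p.covers(action) for p in policies):
        return None
    return f"No policy covers action '{action['kind']}'; ask the user to confirm."


policies = [
    Policy(
        text="Prefer senior faculty when slots conflict",
        covers=lambda a: a["kind"] == "resolve_slot_conflict",
    ),
]

# An action the example policy covers: no flag is raised.
covered = check_stop_hook({"kind": "resolve_slot_conflict"}, policies)
# An action outside policy coverage: the stop hook asks for confirmation.
uncovered = check_stop_hook({"kind": "offer_honorarium"}, policies)
print(covered)
print(uncovered)
```

The design point this illustrates is that the stop hook fires on the absence of an applicable policy, so adding a policy during simulation directly reduces how often the user is interrupted later.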

Interactive Simulation Workflow

The workflow follows a “ReAct” cycle: the coordination agent summarizes the state, selects applicable policies, proposes a plan/action, and either executes it (drafts/sends an email) or waits for a simulated reply. When a simulated respondent’s reply is ambiguous or violates a policy, an issue flag is raised, and the user can intervene, regenerate, or approve the next step. By iterating through multiple simulated days, users refine policies, templates, and stop hooks, ultimately producing a final speaker‑slot allocation and a reusable alignment artifact set.
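The cycle described above can be sketched as a small loop. This is an illustrative toy, not the DoubleAgents implementation: `SimState`, `simulated_reply`, and `step` are hypothetical names, the "prompt professor" persona is invented for the example, and the canned replies stand in for what would be LLM-generated respondent messages.

```python
from dataclasses import dataclass, field


@dataclass
class SimState:
    day: int = 0
    assignments: dict = field(default_factory=dict)  # slot -> speaker persona
    flags: list = field(default_factory=list)        # issues needing the user


def simulated_reply(persona: str, slot: str) -> str:
    """Stand-in for an LLM-driven respondent; replies are hard-coded here."""
    canned = {
        "prompt professor": f"Yes, {slot} works.",
        "slow-responding professor": "",  # no reply yet
        "budget-constrained researcher": "Maybe, depends on travel funding.",
    }
    return canned.get(persona, "")


def step(state: SimState, persona: str, slot: str) -> SimState:
    """One act-and-observe iteration for a single invitation."""
    reply = simulated_reply(persona, slot)  # send invitation, observe reply
    if reply.startswith("Yes"):
        state.assignments[slot] = persona   # clear acceptance: record it
    elif reply == "":
        pass                                # no reply: wait for a later day
    else:
        # Ambiguous reply: raise an issue flag so the user can intervene.
        state.flags.append((state.day, persona, reply))
    return state


state = SimState()
invites = [
    ("prompt professor", "Jan 20"),
    ("slow-responding professor", "Jan 27"),
    ("budget-constrained researcher", "Feb 3"),
]
for persona, slot in invites:
    step(state, persona, slot)

print(state.assignments)
print(state.flags)
```

In this toy run, one slot is assigned, one invitation stays pending, and one reply is flagged for the user, which corresponds to the three outcomes the workflow description distinguishes.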

Evaluation

The authors conduct three complementary evaluations:

Technical Evaluation – measures the fidelity of simulated replies (≈85 % agreement with real human responses), policy‑guided planning success (30 % higher than a baseline without policies), and the impact of stop‑hook interventions (average 12 % reduction in total task time).

Lab Study – ten participants use DoubleAgents over two days. Initially hesitant to delegate, they gradually increase delegation through repeated simulation rounds, report higher confidence in the agent’s decisions, and export a personalized policy bundle and email templates. Qualitative interviews highlight visible state tracking, policy‑grounded reasoning, and explicit intervention points as key to building trust.

Real‑World Deployments – three separate organizations integrate DoubleAgents into actual seminar‑scheduling workflows. Participants note that pre‑deployment simulation helped them craft strong initial policies, reduced miscommunication with real speakers, and lowered the cognitive load during live operation.

Contributions

- A design perspective on alignment for agentic AI that couples policy‑driven planning with human‑in‑the‑loop oversight.

- An interactive simulation mechanism that leverages LLM‑based human proxies to safely explore agent behavior, surface edge cases, and iteratively refine alignment artifacts.

- Empirical evidence from a controlled lab study and three field deployments showing that such simulation‑driven alignment improves trust, reduces errors, and enables more autonomous agent operation over time.

Implications

The work suggests that for tasks requiring nuanced social judgment, such as email‑based coordination, purely autonomous agents are insufficient. Embedding a simulation layer that mirrors realistic human responses allows users to “test‑drive” the agent, discover failure modes, and codify their values into machine‑readable policies before any real‑world impact. The approach may generalize to other domains (e.g., medical decision support, customer service, project management) where alignment, transparency, and controllability are paramount.

In summary, DoubleAgents offers a practical pathway for users to iteratively align and calibrate agentic AI systems through interactive simulation, thereby bridging the gap between powerful generative models and trustworthy, value‑consistent autonomous action.
