Guided Perturbation Sensitivity (GPS): Detecting Adversarial Text via Embedding Stability and Word Importance

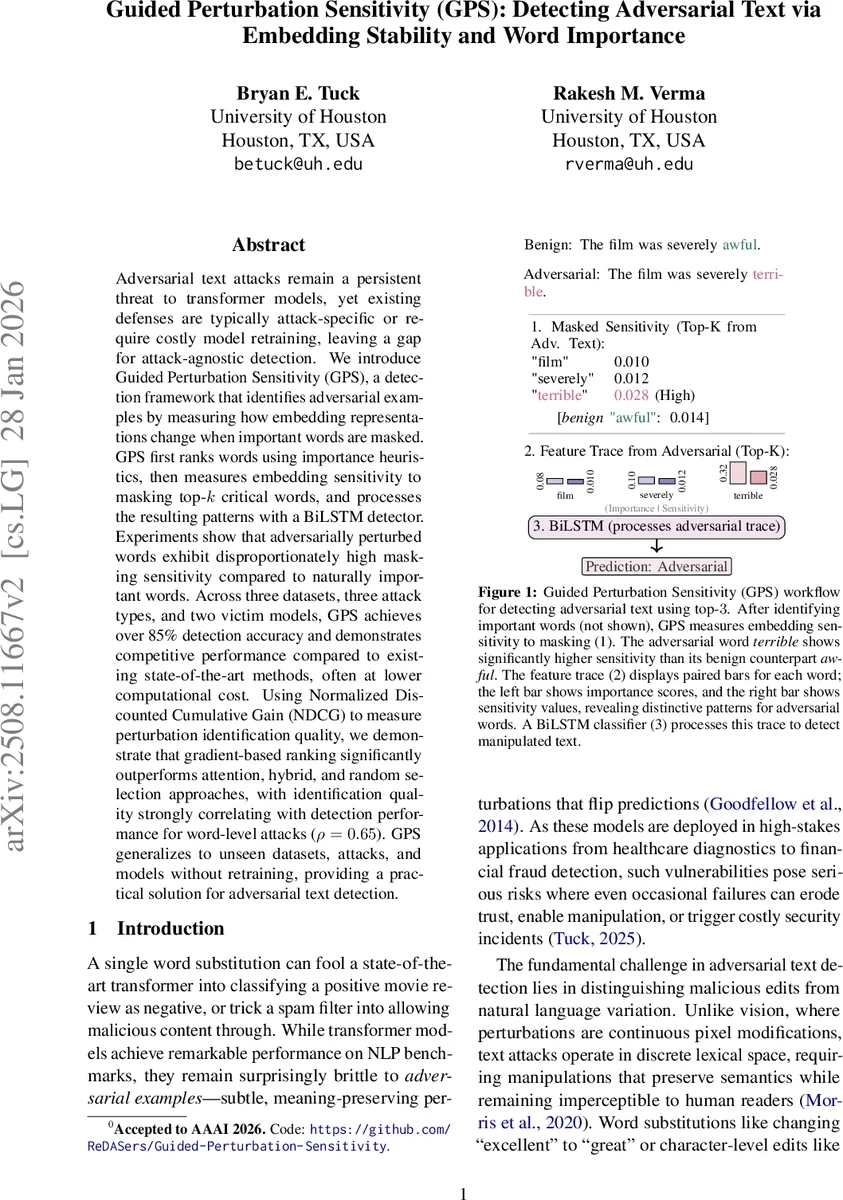

Adversarial text attacks remain a persistent threat to transformer models, yet existing defenses are typically attack-specific or require costly model retraining, leaving a gap for attack-agnostic detection. We introduce Guided Perturbation Sensitivity (GPS), a detection framework that identifies adversarial examples by measuring how embedding representations change when important words are masked. GPS first ranks words using importance heuristics, then measures embedding sensitivity to masking the top-k critical words, and finally processes the resulting patterns with a BiLSTM detector. Experiments show that adversarially perturbed words exhibit disproportionately high masking sensitivity compared to naturally important words. Across three datasets, three attack types, and two victim models, GPS achieves over 85% detection accuracy and performs competitively with existing state-of-the-art methods, often at lower computational cost. Using Normalized Discounted Cumulative Gain (NDCG) to measure perturbation identification quality, we demonstrate that gradient-based ranking significantly outperforms attention, hybrid, and random selection approaches, and that identification quality correlates strongly with detection performance for word-level attacks ($\rho = 0.65$). GPS generalizes to unseen datasets, attacks, and models without retraining, providing a practical solution for adversarial text detection.
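NDCG is used here to score how well a word ranking surfaces the words an attack actually changed. A minimal sketch in Python, assuming binary relevance (a word counts as relevant if and only if the attack perturbed it) and the standard log2 rank discount; the paper's exact gain and cutoff choices are not stated in this section, and `ndcg_at_k` is a hypothetical helper name.

```python
import math


def ndcg_at_k(ranking, perturbed, k):
    """NDCG@k of a word ranking against the set of truly perturbed positions.

    Assumption: binary relevance, i.e. a word is relevant iff it was perturbed.
    ranking   -- word indices ordered from most to least important
    perturbed -- set of indices the attack actually changed
    """
    # DCG: a relevant word at 0-based rank r contributes 1 / log2(r + 2)
    dcg = sum(
        1.0 / math.log2(rank + 2)
        for rank, idx in enumerate(ranking[:k])
        if idx in perturbed
    )
    # Ideal DCG: every perturbed word placed at the top of the ranking
    ideal_hits = min(len(perturbed), k)
    idcg = sum(1.0 / math.log2(rank + 2) for rank in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


# Words 3 and 5 were perturbed; the ranking finds 3 first but 5 only at rank 5.
print(round(ndcg_at_k([3, 7, 1, 0, 5], {3, 5}, k=5), 3))  # → 0.85
```

A perfect ranking (all perturbed words first) scores 1.0, so higher NDCG means the heuristic points the masking step at the words the attack actually touched.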

💡 Research Summary

The paper tackles the persistent problem of adversarial text attacks on transformer‑based NLP models, noting that most existing defenses are either attack‑specific or require costly model retraining. To fill this gap, the authors propose Guided Perturbation Sensitivity (GPS), a detection framework that operates without modifying the target model and without prior knowledge of the attack method.

GPS works in four stages. First, it computes an importance score for every word in the input using a post‑hoc heuristic. Four heuristics are evaluated: Gradient Attribution (the Simonyan‑style norm of the gradient of the loss with respect to the input embeddings), Attention Rollout, Grad‑SAM (a combination of gradient and attention), and a random baseline. The authors find that Gradient Attribution consistently yields the highest Normalized Discounted Cumulative Gain (NDCG) when identifying perturbed words, and that this identification quality correlates strongly with detection performance for word‑level attacks (ρ ≈ 0.65).
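To make the Gradient Attribution heuristic concrete, the sketch below scores each word by the L2 norm of the loss gradient with respect to its embedding and keeps the top k. It is a sketch under stated assumptions: instead of a transformer, it uses a hypothetical toy classifier (`logits = W @ sum_i tanh(e_i)`) whose gradient can be written by hand in NumPy, and the function names are illustrative, not the authors' code. A real implementation would obtain the same per-token gradients from an autodiff framework.

```python
import numpy as np


def toy_loss_and_token_grads(E, W, label):
    """Cross-entropy loss and its gradient w.r.t. each token embedding.

    Hypothetical toy classifier (not a transformer): logits = W @ sum_i tanh(e_i).
    E: (n_tokens, d) embeddings, W: (n_classes, d), label: int class index.
    """
    H = np.tanh(E)                          # (n, d) per-token features
    logits = W @ H.sum(axis=0)              # (c,)
    logits = logits - logits.max()          # shift for numerical stability
    probs = np.exp(logits) / np.exp(logits).sum()
    loss = -np.log(probs[label])
    # dL/dlogits = softmax(logits) - onehot(label)
    dlogits = probs.copy()
    dlogits[label] -= 1.0
    # dL/dh_i = W^T dlogits for every token, then chain rule through tanh
    dh = W.T @ dlogits                      # (d,)
    grads = (1.0 - H ** 2) * dh             # (n, d), broadcast over tokens
    return loss, grads


def gradient_importance(E, W, label):
    """Simonyan-style saliency: L2 norm of dL/de_i for each token i."""
    _, grads = toy_loss_and_token_grads(E, W, label)
    return np.linalg.norm(grads, axis=1)


def top_k_words(scores, k):
    """Indices of the k highest-scoring words, most important first."""
    return [int(i) for i in np.argsort(-scores)[:k]]


rng = np.random.default_rng(0)
E = rng.normal(size=(6, 4))                 # 6 words, 4-dim embeddings
W = rng.normal(size=(2, 4))                 # 2 classes
scores = gradient_importance(E, W, label=1)
print(top_k_words(scores, k=3))
```

Using the gradient norm rather than its signed components makes the score direction-agnostic: a word ranks high if nudging its embedding in any direction would change the loss sharply.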

Second, the top‑K most important words (K is set to 20 in the main experiments, with stable performance across 5–50) are individually masked with the model’s
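The summary breaks off before naming what each masked word is replaced with, so the sketch below treats the replacement as a generic mask embedding `mask_vec`. It masks each of the top-K ranked words one at a time and records the cosine distance between the original and masked sentence embeddings, producing an importance-ordered sequence of the kind the BiLSTM detector consumes. The mean-pooled `tanh(E @ A)` encoder, the cosine-distance metric, and all function names are assumptions for illustration, not the paper's exact choices.

```python
import numpy as np


def encode(E, A):
    """Hypothetical stand-in encoder: mean-pooled tanh(E @ A) sentence embedding."""
    return np.tanh(E @ A).mean(axis=0)


def cosine_distance(u, v):
    """1 - cosine similarity between two embedding vectors."""
    return 1.0 - float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


def masking_sensitivity(E, A, ranked_idx, mask_vec, k=20):
    """Embedding shift when each of the top-k ranked words is masked alone.

    Returns min(k, len(ranked_idx)) values, ordered by word importance;
    a sequence detector (the paper uses a BiLSTM) would take this as input.
    """
    base = encode(E, A)
    feats = []
    for i in ranked_idx[:k]:
        E_masked = E.copy()
        E_masked[i] = mask_vec              # mask exactly one word at a time
        feats.append(cosine_distance(base, encode(E_masked, A)))
    return np.array(feats)


rng = np.random.default_rng(1)
E = rng.normal(size=(8, 4))                 # 8 words, 4-dim embeddings
A = rng.normal(size=(4, 4))                 # toy encoder weights
feats = masking_sensitivity(E, A, [5, 2, 7, 0], np.zeros(4), k=3)
print(feats.shape)  # → (3,)
```

Masking one word at a time keeps each feature attributable to a single position, which fits the observation that adversarially substituted words show disproportionately high masking sensitivity.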
