Progressive-Resolution Policy Distillation: Leveraging Coarse-Resolution Simulations for Time-Efficient Fine-Resolution Policy Learning

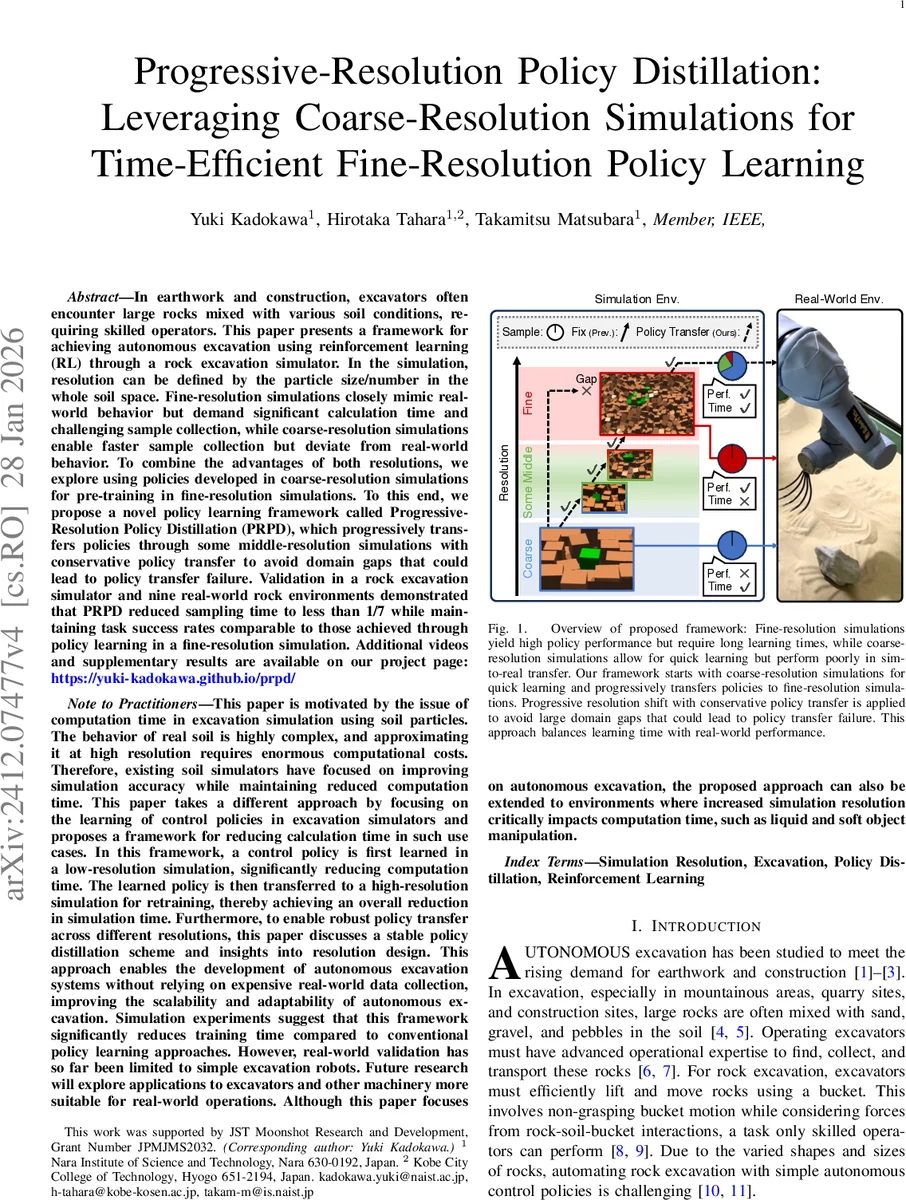

In earthwork and construction, excavators often encounter large rocks mixed with various soil conditions, requiring skilled operators. This paper presents a framework for achieving autonomous excavation using reinforcement learning (RL) through a rock excavation simulator. In the simulation, resolution can be defined by the particle size/number in the whole soil space. Fine-resolution simulations closely mimic real-world behavior but demand significant calculation time and challenging sample collection, while coarse-resolution simulations enable faster sample collection but deviate from real-world behavior. To combine the advantages of both resolutions, we explore using policies developed in coarse-resolution simulations for pre-training in fine-resolution simulations. To this end, we propose a novel policy learning framework called Progressive-Resolution Policy Distillation (PRPD), which progressively transfers policies through some middle-resolution simulations with conservative policy transfer to avoid domain gaps that could lead to policy transfer failure. Validation in a rock excavation simulator and nine real-world rock environments demonstrated that PRPD reduced sampling time to less than 1/7 while maintaining task success rates comparable to those achieved through policy learning in a fine-resolution simulation. Additional videos and supplementary results are available on our project page: https://yuki-kadokawa.github.io/prpd/

💡 Research Summary

The paper tackles a fundamental bottleneck in applying reinforcement learning (RL) to particle‑based excavation simulators: high‑resolution simulations faithfully reproduce soil‑rock interactions but are computationally prohibitive, whereas low‑resolution simulations run quickly but generate policies that do not transfer to the real world. To bridge this gap, the authors propose Progressive‑Resolution Policy Distillation (PRPD), a framework that progressively raises the simulation resolution while transferring policies in a conservative, distillation‑based manner.

PRPD begins by training a policy in a coarse‑resolution environment (large particles, few interactions) using Soft Actor‑Critic (SAC). The learned policy is then copied to a slightly finer “middle” resolution. At each transition, a KL‑divergence regularization term penalizes large deviations from the previous policy, effectively “soft‑distilling” the old behavior into the new environment. The total loss combines this KL term with the standard SAC objective, weighted by hyper‑parameters α (regularization strength) and β (RL loss weight). After a brief fine‑tuning phase at the new resolution, the policy is evaluated; if its average return remains within a predefined tolerance of the previous policy, the framework proceeds to the next higher resolution. Otherwise, additional training is performed at the current level. This stability check prevents catastrophic performance drops caused by abrupt domain shifts.

The authors implement a variable‑resolution excavator simulator on NVIDIA’s Isaac Gym platform. Four resolution levels are defined by particle size: coarse (≈10⁴ particles, 30 mm), middle‑1 (≈4× particles, 15 mm), middle‑2 (≈16× particles, 7.5 mm), and fine (≈100× particles, 3 mm). Training times for each level are roughly 30 min, 80 min, 150 min, and 600 min respectively when trained from scratch. Using PRPD, the total wall‑clock time to obtain a fine‑resolution‑level policy is only about 90 minutes—a seven‑fold reduction compared with training solely in the fine environment.

Empirical evaluation includes both simulation benchmarks and real‑world tests. In simulation, success rates (defined as correctly lifting and depositing a rock) increase monotonically with resolution (45 % → 92 %). In physical experiments, the PRPD‑derived policy is deployed on a real excavator handling nine distinct rock shapes and soil mixtures. The policy succeeds in roughly 90 % of trials, statistically indistinguishable from a policy trained directly in the fine simulation. The authors also analyze the effect of the KL regularization weight: larger α values yield more stable transfers but slightly slower convergence, illustrating a trade‑off between robustness and speed.

Key contributions are: (1) a novel progressive‑resolution distillation scheme that integrates existing RL and policy‑distillation techniques into a unified pipeline; (2) a demonstration that such a pipeline can dramatically cut total training time while preserving high‑fidelity performance in a complex, particle‑based domain; (3) validation on real hardware, showing that policies learned largely in coarse simulations can be safely refined to achieve real‑world competence.

Limitations are acknowledged. The design of intermediate resolutions relies on domain expertise; an automated method for selecting optimal intermediate steps is not provided. The current work focuses on 2‑D excavation scenarios; extending to full 3‑D environments with varying soil properties, moisture, or multi‑robot coordination will require additional investigation. Moreover, only SAC and KL‑based distillation are explored; integrating model‑based RL, meta‑learning, or curriculum learning could further improve efficiency.

In conclusion, PRPD offers a practical solution to the “resolution‑vs‑time” dilemma in high‑fidelity simulators, enabling rapid policy acquisition for tasks that would otherwise be infeasible due to computational constraints. Future research directions include automated resolution scheduling, broader robotic manipulation domains (e.g., soft‑object handling), and combining PRPD with other sample‑efficient RL paradigms to push the boundaries of simulation‑to‑real transfer.

Comments & Academic Discussion

Loading comments...

Leave a Comment