Up to 36x Speedup: Mask-based Parallel Inference Paradigm for Key Information Extraction in MLLMs

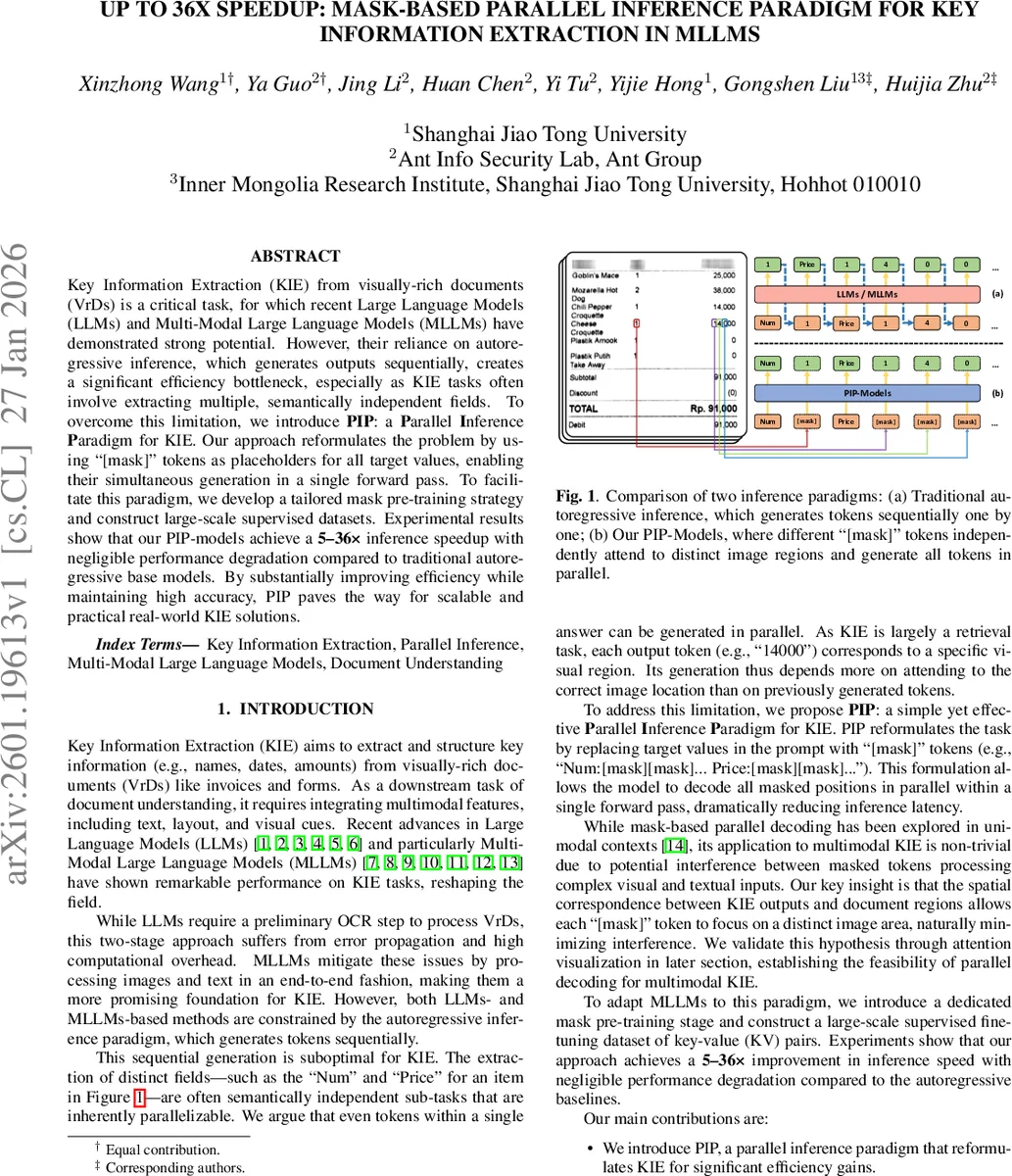

Key Information Extraction (KIE) from visually-rich documents (VrDs) is a critical task, for which recent Large Language Models (LLMs) and Multi-Modal Large Language Models (MLLMs) have demonstrated strong potential. However, their reliance on autoregressive inference, which generates outputs sequentially, creates a significant efficiency bottleneck, especially as KIE tasks often involve extracting multiple, semantically independent fields. To overcome this limitation, we introduce PIP: a Parallel Inference Paradigm for KIE. Our approach reformulates the problem by using “[mask]” tokens as placeholders for all target values, enabling their simultaneous generation in a single forward pass. To facilitate this paradigm, we develop a tailored mask pre-training strategy and construct large-scale supervised datasets. Experimental results show that our PIP-models achieve a 5-36x inference speedup with negligible performance degradation compared to traditional autoregressive base models. By substantially improving efficiency while maintaining high accuracy, PIP paves the way for scalable and practical real-world KIE solutions.
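The placeholder idea in the abstract can be illustrated with a small toy sketch. It is not the authors' implementation: the helper names (`build_masked_prompt`, `fill_masks`, `autoregressive_steps`, `parallel_steps`) and the fixed number of mask slots per field are assumptions made for illustration only, and no real model is called. It only shows how a prompt with "[mask]" placeholders for every field might be laid out, and why filling all placeholders at once needs a single decoding step where token-by-token generation needs one step per output token.

```python
from typing import Dict, List

MASK = "[mask]"  # placeholder string, as quoted in the abstract


def build_masked_prompt(fields: List[str], slots_per_field: int) -> str:
    """Lay out one line per field, with mask placeholders where the value goes.

    A fixed slot count per field is an assumption of this sketch; the source
    does not say how value lengths are handled.
    """
    lines = []
    for name in fields:
        placeholders = " ".join([MASK] * slots_per_field)
        lines.append(f"{name}: {placeholders}")
    return "\n".join(lines)


def fill_masks(prompt: str, predictions: List[str]) -> str:
    """Substitute predictions into the mask positions, left to right.

    `predictions` stands in for the tokens a model would emit for all mask
    positions in one forward pass (hypothetical; no model is called here).
    """
    parts = prompt.split(MASK)
    if len(parts) - 1 != len(predictions):
        raise ValueError("need exactly one prediction per mask position")
    return parts[0] + "".join(p + rest for p, rest in zip(predictions, parts[1:]))


def autoregressive_steps(value_lengths: Dict[str, int]) -> int:
    """Sequential decoding: one forward pass per generated token."""
    return sum(value_lengths.values())


def parallel_steps(value_lengths: Dict[str, int]) -> int:
    """Mask-based decoding: all positions are predicted in one forward pass."""
    return 1 if value_lengths else 0


prompt = build_masked_prompt(["date", "total"], slots_per_field=2)
filled = fill_masks(prompt, ["2024", "-01", "42", ".00"])
lengths = {"date": 2, "total": 2}
print(filled)
print(autoregressive_steps(lengths), "sequential steps vs", parallel_steps(lengths))
```

The step counts here are only a proxy for decoding passes. Wall-clock speedup also depends on prompt length, hardware, and batching, so this sketch does not reproduce the 5-36x figure reported in the abstract.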

💡 Research Summary

The paper addresses a critical efficiency bottleneck in key information extraction (KIE) from visually‑rich documents (VrDs) when using large language models (LLMs) or multimodal large language models (MLLMs). Traditional approaches rely on autoregressive decoding, generating each token sequentially, which becomes prohibitively slow as the number of fields to extract grows. To overcome this, the authors propose the Parallel Inference Paradigm (PIP). PIP reformulates the KIE task by replacing every target value in the prompt with a “
