AROMMA: Unifying Olfactory Embeddings for Single Molecules and Mixtures

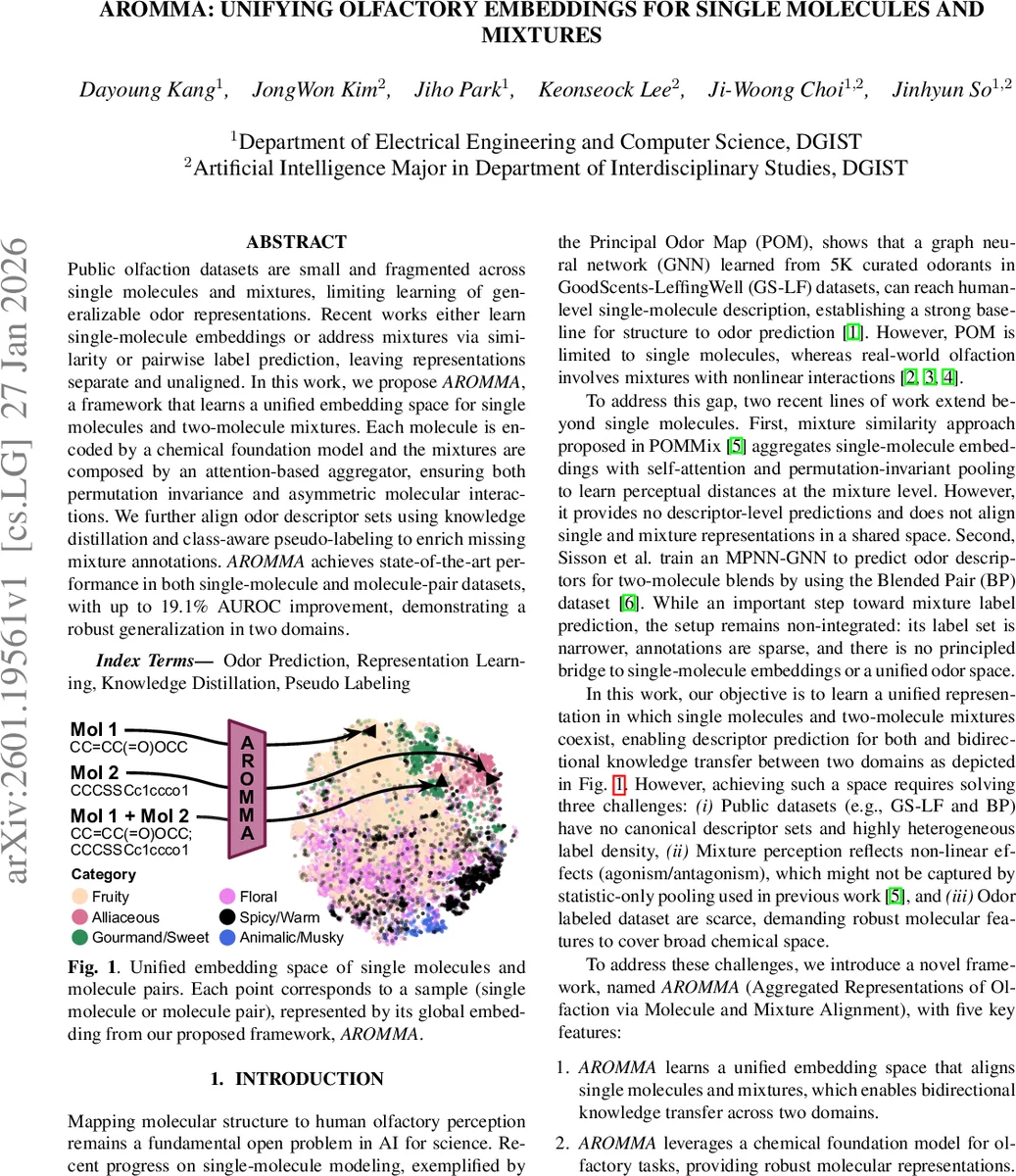

Public olfaction datasets are small and fragmented across single molecules and mixtures, limiting learning of generalizable odor representations. Recent works either learn single-molecule embeddings or address mixtures via similarity or pairwise label prediction, leaving representations separate and unaligned. In this work, we propose AROMMA, a framework that learns a unified embedding space for single molecules and two-molecule mixtures. Each molecule is encoded by a chemical foundation model and the mixtures are composed by an attention-based aggregator, ensuring both permutation invariance and asymmetric molecular interactions. We further align odor descriptor sets using knowledge distillation and class-aware pseudo-labeling to enrich missing mixture annotations. AROMMA achieves state-of-the-art performance in both single-molecule and molecule-pair datasets, with up to 19.1% AUROC improvement, demonstrating a robust generalization in two domains.

💡 Research Summary

The paper introduces AROMMA, a novel framework that learns a unified odor‑embedding space for both single molecules and two‑component mixtures. The authors identify three major challenges: (i) public olfactory datasets are fragmented and use heterogeneous descriptor sets, (ii) mixture perception involves nonlinear, often asymmetric interactions that simple statistical pooling cannot capture, and (iii) the overall scarcity of labeled data demands robust molecular features.

To address (i), they merge the single‑molecule GS‑LF dataset (≈5 K molecules, 138 binary descriptors) and the mixture BP dataset (≈60 K pairs, 74 descriptors) into a single label space of 152 descriptors. Missing BP labels are initially zero‑padded. For (ii), each molecule is encoded with a large‑scale chemical foundation model, SPMM, which has been pre‑trained on ~50 M molecules and aligns structure with 53 biochemical properties. SPMM’s SMILES encoder is fine‑tuned via LoRA (rank 4, scaling 8), keeping trainable parameters under 0.3 % of the model.

The mixture aggregator first applies masked multi‑head self‑attention (MSA) to the two molecule embeddings, allowing each component to attend to the other and capture asymmetric effects. A learnable global query token then performs cross‑attention over the MSA outputs, producing a permutation‑invariant yet interaction‑rich global mixture embedding. This design improves upon prior work (POMMix) that relied on simple statistics (mean, variance, min, max) or a fixed mean query.

For (iii), knowledge distillation is used: the original POM model serves as a teacher for single‑molecule predictions. A multi‑label logit distillation (MLD) loss aligns the soft probability distributions of SPMM and POM, combined with binary cross‑entropy (BCE) for the ground‑truth labels. Mixture samples are trained only with BCE.

Because many BP descriptors are missing, the authors employ class‑aware pseudo‑labeling. They compute the positive‑label proportion γ_c for each class from GS‑LF, then set class‑specific thresholds τ_c so that the fraction of predictions exceeding τ_c matches γ_c. Applying this to BP yields two enriched training sets, Pseudo‑78 and Pseudo‑152, which are used to re‑train the model (AROMMA‑P78, AROMMA‑P152). This process raises the average number of active labels per BP sample from 1.4 to 2.7 (Pseudo‑78) and 5.6 (Pseudo‑152), narrowing the label‑density gap with GS‑LF.

Experiments use 4 attention heads, project SPMM embeddings from 768 → 196 → 384 dimensions, and train with Adam (lr = 4e‑5) and early stopping. Evaluation follows prior protocols: macro‑averaged AUROC on GS‑LF and BP, with stratified 5‑fold splits synchronized across datasets. AROMMA achieves AUROC = 0.939 on GS‑LF and 0.900 on BP, outperforming the previous state‑of‑the‑art POM (0.874, 0.851) and MPNN‑GNN (0.851, 0.734) by +3.2 % and +19.1 % respectively.

Ablation studies confirm: (1) SPMM provides richer representations than POM; (2) the learnable query cross‑attention outperforms statistic‑based pooling; (3) knowledge distillation improves single‑molecule predictions and transfers benefits to mixtures; (4) fine‑tuning SPMM via LoRA yields further gains over a frozen encoder.

In conclusion, AROMMA successfully bridges the representation gap between single molecules and mixtures through a combination of a powerful foundation model, an interaction‑aware attention aggregator, and label‑alignment techniques (distillation and pseudo‑labeling). The authors note that extending the architecture to three‑or‑more component mixtures and incorporating 3‑D molecular representations are promising directions for future work.

Comments & Academic Discussion

Loading comments...

Leave a Comment