ClipGS-VR: Immersive and Interactive Cinematic Visualization of Volumetric Medical Data in Mobile Virtual Reality

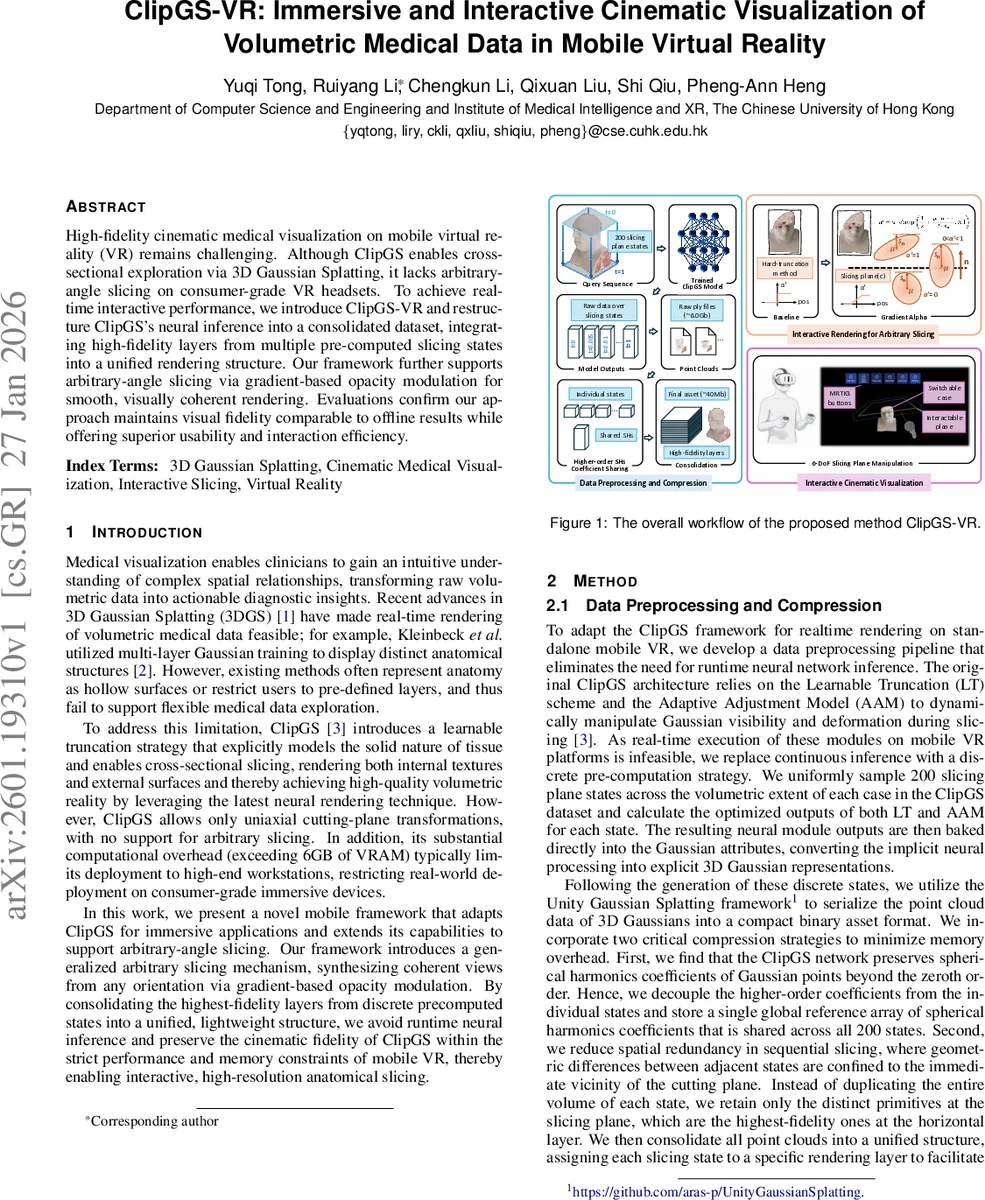

High-fidelity cinematic medical visualization on mobile virtual reality (VR) remains challenging. Although ClipGS enables cross-sectional exploration via 3D Gaussian Splatting, it lacks arbitrary-angle slicing on consumer-grade VR headsets. To achieve real-time interactive performance, we introduce ClipGS-VR and restructure ClipGS’s neural inference into a consolidated dataset, integrating high-fidelity layers from multiple pre-computed slicing states into a unified rendering structure. Our framework further supports arbitrary-angle slicing via gradient-based opacity modulation for smooth, visually coherent rendering. Evaluations confirm our approach maintains visual fidelity comparable to offline results while offering superior usability and interaction efficiency.

💡 Research Summary

ClipGS‑VR presents a mobile‑friendly adaptation of the original ClipGS framework, enabling high‑fidelity cinematic visualization of volumetric medical data on standalone VR headsets such as the Meta Quest 3. The authors first address the prohibitive memory and computational demands of ClipGS, which relies on runtime neural inference (Learnable Truncation and Adaptive Adjustment Model) and exceeds 6 GB of VRAM. Instead of performing these operations on‑device, they pre‑compute 200 uniformly sampled slicing states for each case, baking the neural outputs directly into the attributes of 3D Gaussian points. Two compression techniques dramatically shrink the data: (1) higher‑order spherical‑harmonic coefficients are extracted from individual states and stored once in a global array shared across all states; (2) only the primitives that differ near the cutting plane are retained for each state, eliminating redundant geometry. The resulting asset size drops from roughly 6 GB to about 40 MB per case, making it feasible for mobile VR.
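
The second compression idea, keeping only the primitives that change between slicing states, can be illustrated with a small sketch. This is not the authors' data layout: the choice of state 0 as the reference, the `tol` threshold, the assumption that every state lists the same primitive ids, and the names `bake_states` and `restore_state` are all hypothetical. The shared spherical‑harmonic array is left out for brevity.

```python
# Minimal sketch of per-state delta storage (assumed layout, not the paper's).
# Each state maps a primitive id to a tuple of baked attributes, e.g. (opacity,).
Attrs = tuple[float, ...]
State = dict[int, Attrs]


def differs(a: Attrs, b: Attrs, tol: float) -> bool:
    """True if two attribute tuples differ by more than tol in any component."""
    return len(a) != len(b) or any(abs(x - y) > tol for x, y in zip(a, b))


def bake_states(states: list[State], tol: float = 1e-6) -> tuple[State, list[State]]:
    """Store state 0 in full and, for every state, only the primitives that differ from it.

    Assumes every state contains the same primitive ids as state 0.
    """
    base = dict(states[0])
    deltas = [
        {pid: attrs for pid, attrs in state.items() if differs(attrs, base[pid], tol)}
        for state in states
    ]
    return base, deltas


def restore_state(base: State, deltas: list[State], k: int) -> State:
    """Rebuild slicing state k by overlaying its delta on the shared base."""
    restored = dict(base)
    restored.update(deltas[k])
    return restored
```

With two states that differ in a single primitive, only that primitive appears in the second delta, and `restore_state` reproduces the original state exactly. Because most primitives lie far from the cutting plane and are identical across states, the deltas stay small relative to storing each state in full.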

To overcome ClipGS’s restriction to uniaxial cutting planes, the paper introduces arbitrary‑angle slicing through gradient‑based opacity modulation. For a slicing plane with unit normal n and offset c, each Gaussian centered at μ has a signed distance d = μ·n − c to the plane and a projected radius sₙ along the plane normal. These give a modulation factor σ = clamp(½ + d/(2 sₙ), 0, 1), which is 0 when the splat lies a full radius behind the plane (d ≤ −sₙ), ½ when its center sits on the plane, and 1 when it lies a full radius in front of it (d ≥ sₙ). The original opacity α is then scaled to α′ = α·σ, so splats intersecting the plane fade out smoothly instead of being cut abruptly, which eliminates jagged artifacts. The approach incurs minimal GPU cost and preserves real‑time frame rates.
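
The opacity modulation above can be written out as a short CPU‑side sketch. It follows the stated formula directly, but the function names, the treatment of sₙ as a given input (the summary does not say how it is derived from the Gaussian's covariance), and the hard‑cut fallback for a non‑positive sₙ are assumptions made for illustration. In the actual system this logic would run in the rendering shader.

```python
# Sketch of gradient-based opacity modulation for one Gaussian splat.
Vec3 = tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return sum(x * y for x, y in zip(a, b))


def clamp(x: float, lo: float = 0.0, hi: float = 1.0) -> float:
    return max(lo, min(hi, x))


def modulation_factor(mu: Vec3, n: Vec3, c: float, s_n: float) -> float:
    """sigma = clamp(1/2 + (mu.n - c) / (2 * s_n), 0, 1) for a unit plane normal n."""
    d = dot(mu, n) - c  # signed distance from the Gaussian center to the plane
    if s_n <= 0.0:
        # Assumption: a splat with no extent along n degenerates to a hard cut.
        return 1.0 if d >= 0.0 else 0.0
    return clamp(0.5 + d / (2.0 * s_n))


def modulated_opacity(alpha: float, mu: Vec3, n: Vec3, c: float, s_n: float) -> float:
    """alpha' = alpha * sigma."""
    return alpha * modulation_factor(mu, n, c, s_n)
```

For a plane z = 0 with normal (0, 0, 1) and sₙ = 1, a splat centered on the plane keeps half its opacity, one centered at z = 0.5 keeps three quarters, and splats at z ≥ 1 or z ≤ −1 are fully kept or fully removed.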

Implementation leverages the Mixed Reality Toolkit 3 (MRTK3) to expose a 6‑DoF slicing plane that users manipulate directly with VR controllers. Plane transformations are streamed each frame to the rendering shader, enabling precise, on‑the‑fly arbitrary slicing. Visual quality is evaluated against the original ClipGS ground truth using PSNR and SSIM under uniaxial slicing; ClipGS‑VR achieves an average PSNR of 33.40 dB and SSIM of 0.9698, outperforming a hard‑truncation baseline (30.55 dB, 0.9542). Qualitative results for beveled (non‑axial) slicing demonstrate smooth, anti‑aliased cut surfaces.
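
For readers unfamiliar with the PSNR figures quoted above, the metric can be sketched in a few lines. This is the standard definition rather than the authors' evaluation script; representing an image as a flat list of intensities in [0, 1] and the `max_value` parameter are simplifications assumed here. SSIM is omitted because it needs windowed statistics that do not fit a short example.

```python
import math


def psnr(reference: list[float], test: list[float], max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized images.

    Images are given as flat lists of pixel intensities in [0, max_value].
    """
    if not reference or len(reference) != len(test):
        raise ValueError("images must be non-empty and the same size")
    # Mean squared error over all pixels.
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Since the scale is logarithmic, the reported gap of about 2.85 dB between ClipGS‑VR (33.40 dB) and the hard‑truncation baseline (30.55 dB) corresponds to a mean squared error roughly half as large.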

A user study with ten participants compared fixed uniaxial slicing to the proposed arbitrary slicing. System Usability Scale (SUS) scores rose from 71.80 ± 14.41 (uniaxial) to 88.20 ± 9.35 (arbitrary), and interaction efficiency (5‑point Likert) improved from 3.40 ± 0.70 to 4.70 ± 0.48. Statistical tests (paired t‑test, Wilcoxon signed‑rank) confirmed these differences as significant (p < 0.05). Participants reported reduced physical strain and better spatial understanding when using the 6‑DoF slicing mode.

In summary, ClipGS‑VR delivers real‑time, high‑quality volumetric medical visualization on consumer‑grade mobile VR by (1) eliminating runtime neural inference through extensive pre‑computation and compression, and (2) enabling smooth arbitrary‑angle slicing via gradient‑based opacity modulation. The system maintains visual fidelity comparable to offline results while markedly improving usability and interaction efficiency. Limitations include the upfront cost of pre‑computing slicing states and the current focus on static volumes. Future work will explore optimizing the pre‑computation pipeline, supporting dynamic deformation, and extending the framework to real‑time medical simulation scenarios.
