Contrastive Spectral Rectification: Test-Time Defense towards Zero-shot Adversarial Robustness of CLIP

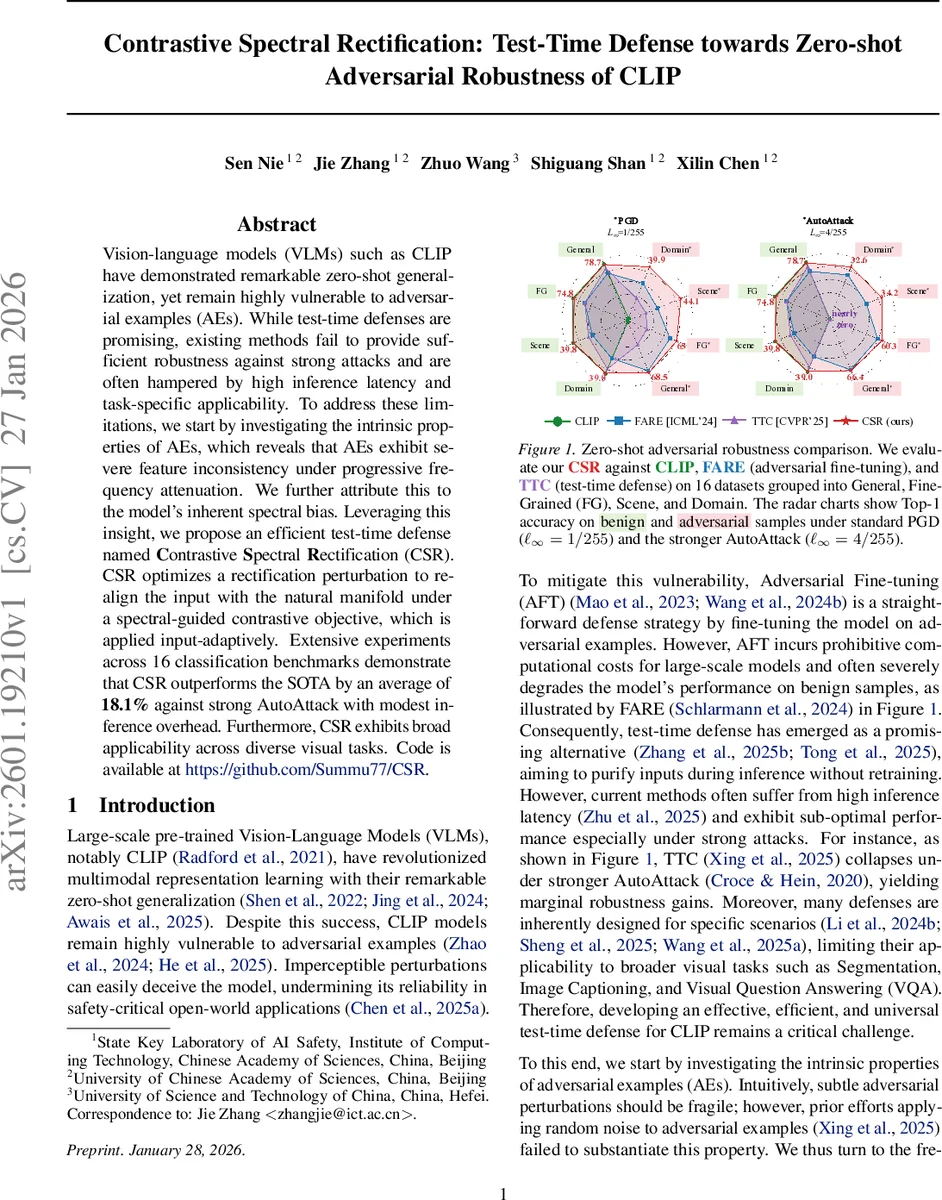

Vision-language models (VLMs) such as CLIP have demonstrated remarkable zero-shot generalization, yet remain highly vulnerable to adversarial examples (AEs). While test-time defenses are promising, existing methods fail to provide sufficient robustness against strong attacks and are often hampered by high inference latency and task-specific applicability. To address these limitations, we start by investigating the intrinsic properties of AEs, which reveals that AEs exhibit severe feature inconsistency under progressive frequency attenuation. We further attribute this to the model’s inherent spectral bias. Leveraging this insight, we propose an efficient test-time defense named Contrastive Spectral Rectification (CSR). CSR optimizes a rectification perturbation to realign the input with the natural manifold under a spectral-guided contrastive objective, which is applied input-adaptively. Extensive experiments across 16 classification benchmarks demonstrate that CSR outperforms the SOTA by an average of 18.1% against strong AutoAttack with modest inference overhead. Furthermore, CSR exhibits broad applicability across diverse visual tasks. Code is available at https://github.com/Summu77/CSR.

💡 Research Summary

This paper addresses the vulnerability of large‑scale vision‑language models (VLMs), particularly CLIP, to adversarial attacks despite their impressive zero‑shot capabilities. Existing defenses fall into two categories: adversarial fine‑tuning, which is computationally expensive and often degrades clean‑image performance, and test‑time defenses, which suffer from high latency, limited robustness against strong attacks, and narrow applicability.

The authors first investigate the intrinsic properties of adversarial examples (AEs) by progressively applying low‑pass filters in the frequency domain. They find that benign images retain high cosine similarity between original and filtered embeddings even when mid‑to‑high frequencies are removed, whereas AEs experience a rapid collapse of feature consistency under the same attenuation. This phenomenon is traced to a “spectral bias” in CLIP: the model’s loss gradients and feature shifts concentrate in mid‑to‑high frequency bands, making the model hypersensitive to perturbations in those regions while remaining relatively insensitive to low‑frequency changes.
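The attenuation probe described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the ideal circular mask, the function names (`low_pass`, `cosine_similarity`, `consistency_curve`) and the `radius` parameter are hypothetical choices, not the paper's implementation, and `encode` stands in for whatever image encoder (for example CLIP's) is being probed.

```python
import numpy as np

def low_pass(image: np.ndarray, radius: float) -> np.ndarray:
    """Keep only spatial frequencies within `radius` of the DC component.

    `image` is an (H, W) or (H, W, C) float array. The ideal circular mask
    is an illustrative assumption, not necessarily the paper's filter.
    """
    h, w = image.shape[:2]
    # Integer frequency indices in the same (unshifted) layout as fft2 output.
    fy = np.fft.fftfreq(h) * h
    fx = np.fft.fftfreq(w) * w
    mask = (fy[:, None] ** 2 + fx[None, :] ** 2) <= radius ** 2
    if image.ndim == 3:
        mask = mask[:, :, None]  # broadcast the mask over channels
    spectrum = np.fft.fft2(image, axes=(0, 1))
    return np.real(np.fft.ifft2(spectrum * mask, axes=(0, 1)))

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    a, b = np.ravel(a), np.ravel(b)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))

def consistency_curve(encode, image: np.ndarray, radii) -> list:
    """Similarity between the original embedding and each filtered embedding.

    `encode` is a hypothetical callable mapping an image to a feature vector.
    """
    reference = encode(image)
    return [cosine_similarity(reference, encode(low_pass(image, r)))
            for r in radii]
```

Calling `consistency_curve` with a decreasing list of radii gives one similarity value per attenuation level; according to the summary, this curve stays high for benign images and drops quickly for AEs.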

Motivated by this insight, the paper proposes Contrastive Spectral Rectification (CSR), an efficient test‑time defense. CSR optimizes a small rectification perturbation δ that is added to the input image x. The optimization is driven by a contrastive objective: the embedding of the low‑pass filtered input, f_low(x), serves as the positive, and the embedding of the original (potentially adversarial) input, f(x), serves as the negative. The loss encourages the rectified embedding f(x+δ) to become more similar to f_low(x) while moving away from f(x).
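The summary does not give the exact form of the loss, so the following is only one plausible instance: a two-way InfoNCE-style objective over cosine similarities. The function name `contrastive_rectification_loss` and the `temperature` parameter are assumptions introduced for illustration. Here `z_rect`, `z_low` and `z_orig` denote f(x+δ), f_low(x) and f(x) respectively.

```python
import numpy as np

def _cos(a: np.ndarray, b: np.ndarray) -> float:
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))

def contrastive_rectification_loss(z_rect, z_low, z_orig, temperature=0.1):
    """Assumed InfoNCE-style loss with one positive and one negative.

    Small when z_rect is close to z_low (positive) and far from
    z_orig (negative); `temperature` is a hypothetical hyperparameter.
    """
    pos = _cos(z_rect, z_low) / temperature
    neg = _cos(z_rect, z_orig) / temperature
    # -log(exp(pos) / (exp(pos) + exp(neg))) == log(1 + exp(neg - pos)),
    # evaluated stably with logaddexp.
    return float(np.logaddexp(0.0, neg - pos))
```

In an actual defense the gradient of this loss with respect to δ would come from an autodiff framework, and δ would be updated for a few steps; that loop is omitted here because the summary does not specify the optimizer or step size.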

To avoid unnecessary alterations of clean inputs, CSR incorporates an input‑adaptive gating mechanism. It measures the cosine similarity between f(x) and f_low(x); only when this similarity falls below a predefined threshold does the rectification process activate. Consequently, CSR applies its correction selectively, preserving clean‑image accuracy and limiting computational overhead. The rectification perturbation is optimized in only a few gradient steps (typically 3–5), resulting in an inference overhead of roughly 10 % compared with the vanilla CLIP forward pass.
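The gating logic can be sketched as a thin wrapper around the rectification step. Everything named here is hypothetical: `encode`, `low_pass_fn` and `rectify` are caller-supplied callables standing in for the encoder, the low-pass filter and the few-step optimization of δ, and the default `threshold` of 0.8 is a placeholder rather than a value reported in the summary.

```python
import numpy as np

def gated_defense(encode, low_pass_fn, rectify, image, threshold=0.8):
    """Rectify `image` only if its embedding is spectrally inconsistent.

    Returns (output_image, was_rectified). The threshold default is an
    illustrative assumption, not a value from the paper.
    """
    z = np.ravel(encode(image))
    z_low = np.ravel(encode(low_pass_fn(image)))
    similarity = float(z @ z_low / (np.linalg.norm(z) * np.linalg.norm(z_low) + 1e-12))
    if similarity >= threshold:
        # Consistent under low-pass filtering: treat as benign, leave untouched.
        return image, False
    # Inconsistent: likely adversarial, so run the (costlier) rectification.
    return rectify(image), True
```

Because benign inputs return early, the extra optimization cost is paid only for inputs that fail the consistency check, which is how the gate limits average overhead.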

Extensive experiments are conducted on 16 zero‑shot classification benchmarks spanning general, fine‑grained, scene, and domain shift settings, as well as on three additional vision tasks: semantic segmentation, image captioning, and visual question answering. Against the strong AutoAttack (ℓ∞, ε = 4/255), CSR achieves an average accuracy gain of 18.1 % over the prior state‑of‑the‑art defenses (FARE, TTC). Against standard PGD (ℓ∞, ε = 1/255), CSR improves accuracy by 6.9 % while maintaining near‑identical performance on benign samples. The method’s inference time increase is modest (≈1.08×–1.12×), making it suitable for real‑time deployment.

Key contributions are: (1) uncovering a spectral fragility of adversarial examples linked to CLIP’s mid‑to‑high‑frequency bias; (2) introducing CSR, which leverages a spectral‑guided contrastive objective and adaptive gating to detect and rectify adversarial inputs without retraining; (3) demonstrating CSR’s broad applicability and superior robustness across diverse benchmarks with minimal computational cost. The work suggests that exploiting intrinsic spectral properties offers a powerful, model‑agnostic avenue for defending large multimodal systems, and opens future directions such as integrating CSR with other VLMs or combining it with pre‑training strategies that mitigate spectral bias.
