MATA: A Trainable Hierarchical Automaton System for Multi-Agent Visual Reasoning

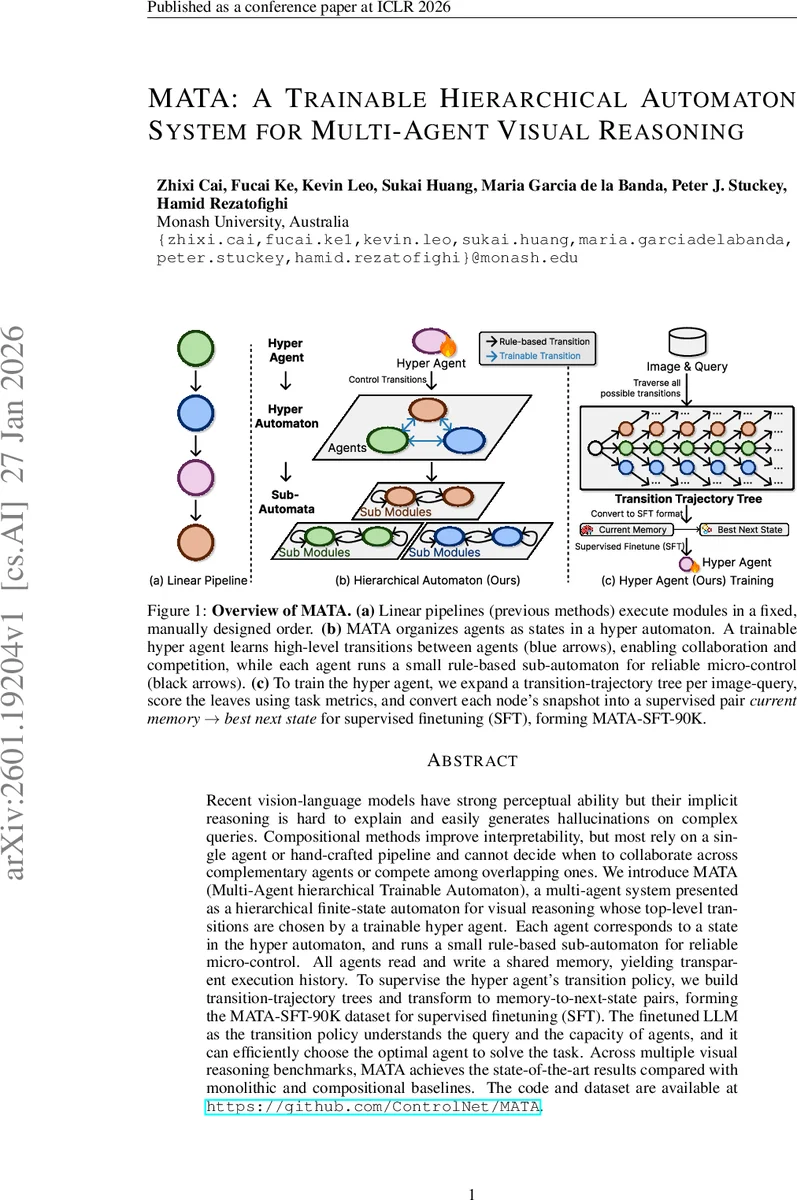

Recent vision-language models have strong perceptual ability but their implicit reasoning is hard to explain and easily generates hallucinations on complex queries. Compositional methods improve interpretability, but most rely on a single agent or hand-crafted pipeline and cannot decide when to collaborate across complementary agents or compete among overlapping ones. We introduce MATA (Multi-Agent hierarchical Trainable Automaton), a multi-agent system presented as a hierarchical finite-state automaton for visual reasoning whose top-level transitions are chosen by a trainable hyper agent. Each agent corresponds to a state in the hyper automaton, and runs a small rule-based sub-automaton for reliable micro-control. All agents read and write a shared memory, yielding transparent execution history. To supervise the hyper agent’s transition policy, we build transition-trajectory trees and transform to memory-to-next-state pairs, forming the MATA-SFT-90K dataset for supervised finetuning (SFT). The finetuned LLM as the transition policy understands the query and the capacity of agents, and it can efficiently choose the optimal agent to solve the task. Across multiple visual reasoning benchmarks, MATA achieves the state-of-the-art results compared with monolithic and compositional baselines. The code and dataset are available at https://github.com/ControlNet/MATA.

💡 Research Summary

The paper introduces MATA (Multi‑Agent hierarchical Trainable Automaton), a novel framework for visual reasoning that combines a hierarchical finite‑state automaton with a trainable high‑level transition policy. The top‑level automaton, called the hyper automaton, treats each specialized agent as a state and uses a learned transition function δθ to decide which agent to activate next. This transition function is implemented as a large language model (LLM) that is fine‑tuned on a newly constructed dataset, MATA‑SFT‑90K, which contains 90 K (memory snapshot, next‑state) pairs derived from exhaustive transition‑trajectory trees.

Each agent—Specialized Agent (fast perception), Oneshot Reasoner (single‑pass program generation), and Stepwise Reasoner (multi‑step Python reasoning)—operates a small rule‑based sub‑automaton. These sub‑automata handle low‑level actions such as invoking vision models, generating code, executing it in a sandbox, and applying verifiers. The rule‑based design ensures reliable micro‑control while keeping the internal logic transparent.

All agents share an append‑only structured memory that records image‑question inputs, intermediate tool outputs, verifier feedback, and task metadata. The hyper agent reads the current memory snapshot at each step, feeds it to δθ, and receives the next state. If the chosen state is an agent, that agent runs its sub‑automaton until it returns control to the hyper automaton, updating the shared memory along the way. The process repeats until the hyper automaton reaches the FINAL state, at which point the output function Γ produces the final answer.

To train the hyper agent, the authors generate transition‑trajectory trees for each image‑question pair by exhaustively exploring possible agent sequences. Each leaf node is scored using the appropriate task metric (e.g., VQA accuracy, grounding IoU). The best‑scoring child of every internal node defines the optimal next‑state label for that node’s memory snapshot. Collecting these labels across many examples yields the MATA‑SFT‑90K dataset, which is used for supervised fine‑tuning of the LLM transition policy.

Experiments span several visual reasoning benchmarks, including VQA‑2, GQA, RefCOCO, and visual grounding tasks. MATA consistently outperforms monolithic vision‑language models and prior compositional pipelines, achieving state‑of‑the‑art accuracy improvements of 2–4 percentage points. Notably, on complex multi‑step queries MATA dynamically switches to the Stepwise Reasoner, while on simpler queries it often selects the Oneshot Reasoner or the Specialized Agent, demonstrating effective collaboration and competition among agents. Ablation studies show that removing the learned hyper‑agent (using a fixed hand‑crafted transition rule) degrades performance substantially, and replacing rule‑based sub‑automata with learned controllers reduces interpretability and error‑recovery capability.

The paper’s contributions are threefold: (1) a hierarchical automaton architecture that unifies neuro‑symbolic reasoning with collaborative and competitive multi‑agent design; (2) a transition‑trajectory data generation pipeline and the MATA‑SFT‑90K dataset for supervised training of the hyper‑agent; and (3) extensive empirical validation showing that learned high‑level transitions enable more reliable, transparent, and performant visual reasoning. The authors also release code and data, facilitating future research on extending the framework to other domains such as robotics or multimodal dialogue.

In summary, MATA offers a principled solution to the opacity and hallucination problems of current vision‑language models by making the reasoning process explicit, modular, and data‑driven. Its hierarchical design mirrors the dual‑process theory of human cognition (fast System 1 perception versus slow System 2 reasoning) while providing a flexible, learnable mechanism for orchestrating multiple specialized agents. This work represents a significant step toward more trustworthy and adaptable multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment