SynthRM: A Synthetic Data Platform for Vision-Aided Mobile System Simulation

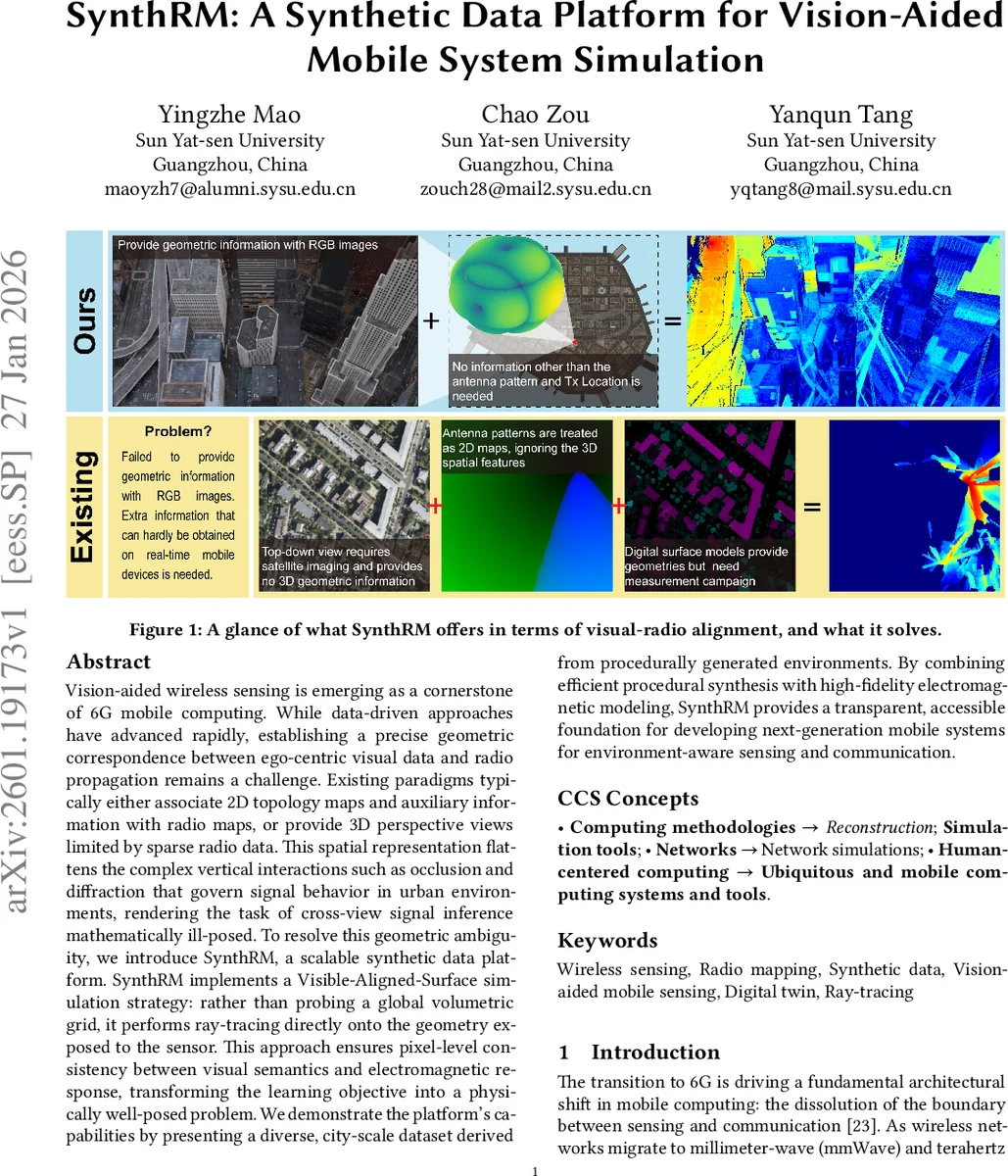

Vision-aided wireless sensing is emerging as a cornerstone of 6G mobile computing. While data-driven approaches have advanced rapidly, establishing a precise geometric correspondence between ego-centric visual data and radio propagation remains a challenge. Existing paradigms typically either associate 2D topology maps and auxiliary information with radio maps, or provide 3D perspective views limited by sparse radio data. These spatial representations flatten the complex vertical interactions, such as occlusion and diffraction, that govern signal behavior in urban environments, which makes cross-view signal inference mathematically ill-posed. To resolve this geometric ambiguity, we introduce SynthRM, a scalable synthetic data platform. SynthRM implements a Visible-Aligned-Surface (VAS) simulation strategy: rather than probing a global volumetric grid, it performs ray-tracing directly onto the geometry exposed to the sensor. This ensures pixel-level consistency between visual semantics and electromagnetic response, turning the learning objective into a physically well-posed problem. We demonstrate the platform's capabilities with a diverse, city-scale dataset derived from procedurally generated environments. By combining efficient procedural synthesis with high-fidelity electromagnetic modeling, SynthRM provides a transparent, accessible foundation for developing next-generation mobile systems for environment-aware sensing and communication.
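The abstract contrasts a global volumetric receiver grid with ray-tracing onto the geometry the sensor actually sees. Below is a minimal 2D sketch of that visible-surface idea under stated assumptions: the scene is a top-down set of wall segments, the camera is a fan of rays with one ray per image column, and the nearest wall hit along each ray becomes that column's receiver location. The helper names are hypothetical illustrations, not SynthRM's implementation, and the sketch only selects receiver points; it models no electromagnetics.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]
Segment = Tuple[Point, Point]

def ray_segment_hit(origin: Point, direction: Point, seg: Segment) -> Optional[float]:
    """Distance t >= 0 along the ray origin + t*direction to seg, or None if missed."""
    (ox, oy), (dx, dy) = origin, direction
    (ax, ay), (bx, by) = seg
    ex, ey = bx - ax, by - ay            # segment direction vector
    denom = dx * ey - dy * ex            # 2D cross product direction x e
    if abs(denom) < 1e-12:               # ray parallel to segment: treat as a miss
        return None
    wx, wy = ax - ox, ay - oy            # from ray origin to segment start
    t = (wx * ey - wy * ex) / denom      # parameter along the ray
    s = (wx * dy - wy * dx) / denom      # parameter along the segment (0..1 inside)
    if t >= 0.0 and 0.0 <= s <= 1.0:
        return t
    return None

def visible_surface_points(cam: Point, yaw: float, fov: float, n_cols: int,
                           walls: List[Segment]) -> List[Optional[Point]]:
    """One receiver per image column: the nearest wall hit along that column's ray."""
    points: List[Optional[Point]] = []
    for i in range(n_cols):
        ang = yaw - fov / 2 + fov * (i + 0.5) / n_cols   # ray through column centre
        d = (math.cos(ang), math.sin(ang))
        nearest: Optional[float] = None
        for seg in walls:
            t = ray_segment_hit(cam, d, seg)
            if t is not None and (nearest is None or t < nearest):
                nearest = t                              # keep the first surface hit
        points.append(None if nearest is None
                      else (cam[0] + nearest * d[0], cam[1] + nearest * d[1]))
    return points
```

With walls at x = 5 and x = 8 and a camera at the origin facing +x, every column lands on the nearer wall; the occluded wall contributes no receivers, whereas a volumetric grid would also sample points the camera cannot see.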

💡 Research Summary

SynthRM tackles a core obstacle in vision‑aided wireless sensing for future 6G systems: the lack of a precise geometric correspondence between ego‑centric visual inputs and the radio propagation field. Existing datasets typically pair RGB/Depth images with global, top‑down radio heatmaps or sparse 3D views. This “mismatched” alignment discards crucial vertical information—building facades, street canyons, edges, and corners—that governs blockage, reflection, and diffraction at mmWave/THz frequencies. Consequently, learning a radio map from a single visual frame becomes an ill‑posed inverse problem because the model receives only partial geometric cues (visible surfaces, normals, distances) while the target map depends on hidden structures and full interaction histories.

SynthRM resolves this by introducing a Visible‑Aligned‑Surface (VAS) simulation paradigm. The platform consists of three tightly coupled stages:

- Procedural Scene Composition – A node-based procedural content generation (PCG) engine creates diverse urban layouts (varying building density, street widths, and zoning). The coarse geometry (polygons) is exported for physics simulation, while a high-fidelity Unreal Engine 5 pipeline textures the scene for photorealistic RGB and depth rendering. This dual-stream approach can generate an arbitrary number of city-scale environments on consumer-grade hardware.

- VAS Reconstruction – Using the camera's intrinsic matrix $\mathbf{K}$ and extrinsic pose $\{\mathbf{R}, \mathbf{t}\}$ together with a depth map $D$, each pixel $(u, v)$ is back-projected to a 3D camera-frame point $\mathbf{p}_c = D(u,v)\,\mathbf{K}^{-1}[u,\ v,\ 1]^{\top}$.
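The summary does not describe the internals of the node-based PCG engine in the Procedural Scene Composition stage. As a rough, hypothetical stand-in, the sketch below generates a Manhattan-style grid of rectangular building footprints with heights (a simple form of the "coarse geometry" exported for physics), driven by two of the layout parameters named above: building density and street width. Zoning is omitted, and `Building`, `generate_grid_city`, `block_size`, and `height_range` are illustrative assumptions, not SynthRM's API.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Building:
    footprint: List[Tuple[float, float]]  # rectangle corners, counter-clockwise
    height: float                          # extrusion height (unit assumed: metres)

def generate_grid_city(n_x: int, n_y: int, block_size: float, street_width: float,
                       density: float, height_range: Tuple[float, float],
                       seed: int = 0) -> List[Building]:
    """Hypothetical Manhattan-grid generator: at most one building per lot.

    density      -- probability that a lot receives a building (0..1)
    street_width -- gap between neighbouring lots
    """
    rng = random.Random(seed)            # seeded so layouts are reproducible
    pitch = block_size + street_width    # distance between lot origins
    buildings: List[Building] = []
    for i in range(n_x):
        for j in range(n_y):
            if rng.random() >= density:  # leave the lot empty (e.g. plaza or park)
                continue
            x0, y0 = i * pitch, j * pitch
            x1, y1 = x0 + block_size, y0 + block_size
            h = rng.uniform(*height_range)
            buildings.append(Building([(x0, y0), (x1, y0), (x1, y1), (x0, y1)], h))
    return buildings
```

Each footprint plus its height can be extruded into a prism for a ray tracer, and sweeping `density`, `street_width`, and `seed` across runs yields layout diversity from a single generator.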
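The back-projection in the VAS Reconstruction step can be applied to a whole depth map at once with NumPy. Two assumptions are flagged here and in the code: $D$ is z-depth along the optical axis (a Euclidean range map would need each ray normalised first), and the extrinsics map camera to world as $\mathbf{p}_w = \mathbf{R}\mathbf{p}_c + \mathbf{t}$. The summary does not state SynthRM's convention, and some pipelines use the inverse (world-to-camera) form. The function name is hypothetical.

```python
import numpy as np

def backproject_depth(depth: np.ndarray, K: np.ndarray, R: np.ndarray, t: np.ndarray):
    """Lift every pixel (u, v) of an H x W depth map to 3D points.

    Assumes depth holds z-depth and that (R, t) maps camera to world.
    Returns (p_c, p_w), each of shape (H, W, 3), indexed [v, u].
    """
    H, W = depth.shape
    u, v = np.meshgrid(np.arange(W), np.arange(H))       # u: column, v: row
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).reshape(-1, 3).astype(float)
    rays = pix @ np.linalg.inv(K).T                       # K^{-1} [u, v, 1]^T per pixel
    p_c = rays * depth.reshape(-1, 1)                     # scale each ray by D(u, v)
    p_w = p_c @ R.T + t                                   # assumed: p_w = R p_c + t
    return p_c.reshape(H, W, 3), p_w.reshape(H, W, 3)
```

For a pinhole $\mathbf{K}$ with principal point $(c_x, c_y)$, the pixel at $(c_x, c_y)$ maps onto the optical axis at $(0, 0, D)$, which is a quick sanity check on the intrinsics.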
