Q-Probe: Scaling Image Quality Assessment to High Resolution via Context-Aware Agentic Probing

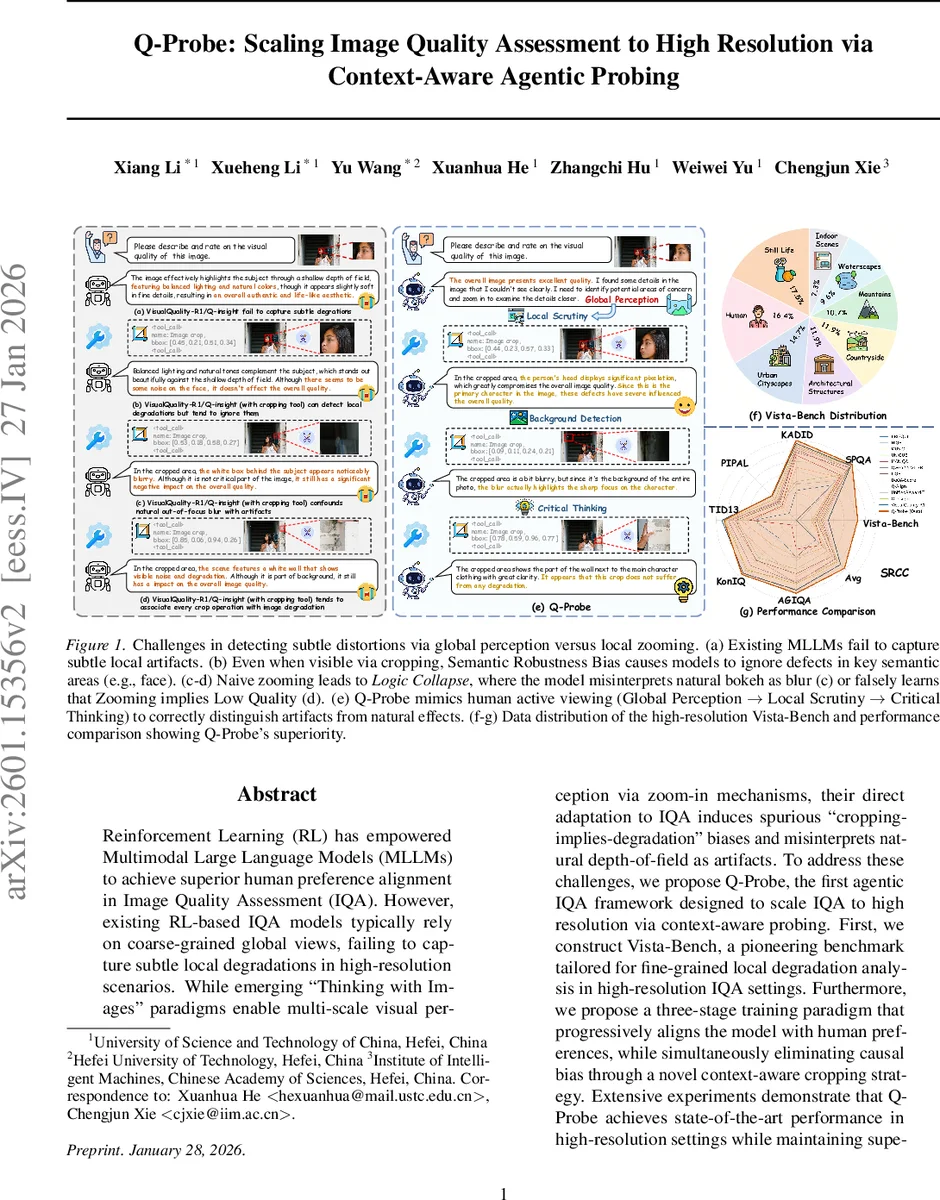

Reinforcement Learning (RL) has empowered Multimodal Large Language Models (MLLMs) to achieve superior human preference alignment in Image Quality Assessment (IQA). However, existing RL-based IQA models typically rely on coarse-grained global views, failing to capture subtle local degradations in high-resolution scenarios. While emerging “Thinking with Images” paradigms enable multi-scale visual perception via zoom-in mechanisms, their direct adaptation to IQA induces spurious “cropping-implies-degradation” biases and misinterprets natural depth-of-field as artifacts. To address these challenges, we propose Q-Probe, the first agentic IQA framework designed to scale IQA to high resolution via context-aware probing. First, we construct Vista-Bench, a pioneering benchmark tailored for fine-grained local degradation analysis in high-resolution IQA settings. Furthermore, we propose a three-stage training paradigm that progressively aligns the model with human preferences, while simultaneously eliminating causal bias through a novel context-aware cropping strategy. Extensive experiments demonstrate that Q-Probe achieves state-of-the-art performance in high-resolution settings while maintaining superior efficacy across resolution scales.

💡 Research Summary

The paper introduces Q‑Probe, an agentic image quality assessment (IQA) system designed to handle high‑resolution images where subtle local degradations are often missed by existing methods. Traditional no‑reference IQA models, especially those based on reinforcement learning (RL) with multimodal large language models (MLLMs), rely heavily on a global view of the image. This coarse perspective makes it difficult to detect fine‑grained artifacts such as noise on a face or compression artifacts on a license plate. Moreover, recent “Thinking with Images” approaches that allow models to zoom in or crop regions introduce a causal bias: models learn to associate any cropping operation with low quality, leading to two major failure modes. First, natural depth‑of‑field effects (bokeh) are mistakenly classified as blur artifacts. Second, even high‑quality regions that are cropped are penalized, causing a logical collapse where the model’s quality score drops simply because a crop was performed.

To overcome these issues, the authors propose three key innovations.

- Vista‑Bench – a new benchmark consisting of over 1,000 high‑resolution (≥ 4 K) images. The authors extract high‑frequency components using wavelet transforms and inject realistic degradations (blur, compression, mosaic) selectively into texture‑rich areas. They then employ Gemini‑2.5 Pro to generate hierarchical quality scores that weight local degradation severity by semantic importance (e.g., faces receive higher weight than background). Human reviewers further filter the data, ensuring reliable ground truth. This benchmark fills a gap in the literature: no existing dataset focuses on fine‑grained local defects in ultra‑high‑resolution images.

- Context‑aware cropping – instead of cropping only the damaged patch, the method deliberately includes both degraded and pristine regions (including natural bokeh) within the same crop. This design breaks the spurious correlation “crop → low quality” that plagues naïve zoom‑in strategies. Crops are invoked only after a global perception stage determines that a region warrants closer inspection, mimicking how a human photographer first looks at the whole scene before zooming.

- Three‑stage training curriculum –

Stage 1 (Pre‑RL): The model is first trained on low‑resolution image pairs from KADID‑10k using Group Relative Policy Optimization (GRPO). A Thurstone‑case‑V probabilistic ranking model treats quality as a relative concept, encouraging the model to distinguish genuine degradations from natural depth‑of‑field.

Stage 2 (Probe‑CoT‑3K SFT): A supervised fine‑tuning phase introduces chain‑of‑thought (CoT) trajectories that follow a “global overview → optional local scrutiny → final synthesis” pattern. The trajectories contain diverse cropping actions (degradation capture, clarity localization, distant view assessment) but always preserve contextual background, reinforcing the context‑aware principle.

Stage 3 (Probe‑RL‑4K): Finally, reinforcement learning is applied on the high‑resolution Vista‑Bench. The reward function is bifurcated: (i) accuracy of local defect detection, (ii) consistency between global and local scores, and (iii) a penalty for unnecessary cropping. This stage jointly optimizes tool‑invocation policy and quality prediction, allowing the model to learn when and where to zoom and how to aggregate the information.

Extensive experiments compare Q‑Probe against state‑of‑the‑art RL‑based IQA models (e.g., Q‑Insight, VisualQuality‑R1) and conventional NR‑IQA networks across multiple benchmarks (SPQA, KADID‑10k, PIPAL, TID13, KonIQ‑10k, AGIQA). Q‑Probe consistently outperforms baselines, achieving SRCC improvements of 4–6 percentage points on average. On the newly introduced Vista‑Bench, Q‑Probe reaches an SRCC of 0.92 and PLCC of 0.90, indicating strong alignment with human judgments. Ablation studies reveal that removing the context‑aware cropping reduces SRCC to 0.84, while skipping Stage 2 leads to poorer global‑local consistency, confirming the necessity of each component.

The authors also analyze failure cases: when the model incorrectly treats natural background blur as a defect, its confidence drops, but the context‑aware design mitigates this by always presenting the surrounding sharp foreground for reference. Human evaluation with 200 participants yields a Pearson correlation of 0.91 with Q‑Probe’s scores, the highest among all tested methods.

In discussion, the paper highlights that Q‑Probe’s human‑like “coarse‑to‑fine” visual strategy can be transferred to other multimodal tasks such as video quality assessment or medical imaging diagnostics, where high‑resolution detail matters. Future work includes model compression for real‑time deployment, expanding the range of synthetic degradations, and integrating online human feedback loops for continual RL refinement.

In summary, Q‑Probe introduces a novel agentic IQA framework that successfully bridges the gap between global aesthetic perception and fine‑grained defect detection in high‑resolution imagery. By constructing Vista‑Bench, employing context‑aware cropping, and following a progressive three‑stage training regimen, the system eliminates the “crop‑implies‑low‑quality” bias and achieves state‑of‑the‑art performance across diverse benchmarks, setting a new standard for high‑resolution image quality assessment.

Comments & Academic Discussion

Loading comments...

Leave a Comment