MGPC: Multimodal Network for Generalizable Point Cloud Completion With Modality Dropout and Progressive Decoding

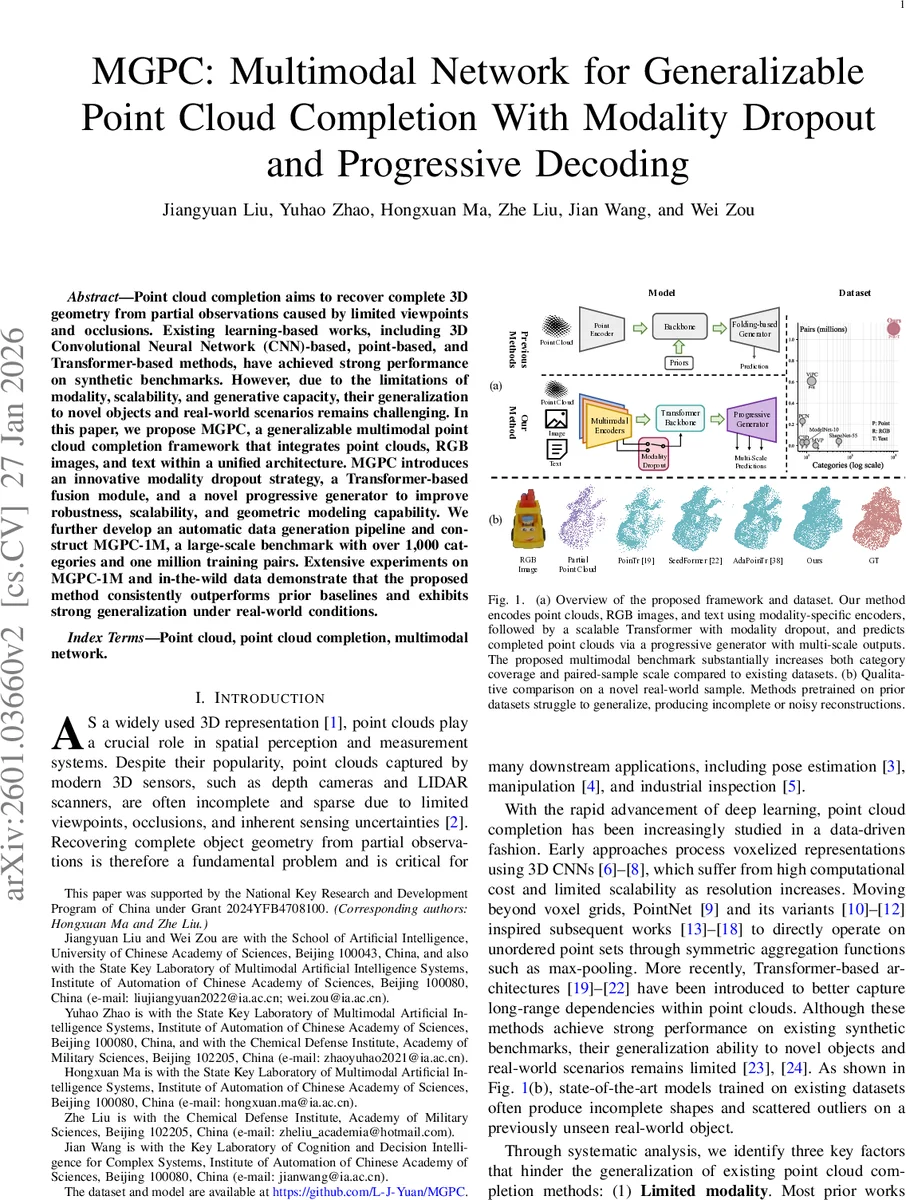

Point cloud completion aims to recover complete 3D geometry from partial observations caused by limited viewpoints and occlusions. Existing learning-based works, including 3D Convolutional Neural Network (CNN)-based, point-based, and Transformer-based methods, have achieved strong performance on synthetic benchmarks. However, due to the limitations of modality, scalability, and generative capacity, their generalization to novel objects and real-world scenarios remains challenging. In this paper, we propose MGPC, a generalizable multimodal point cloud completion framework that integrates point clouds, RGB images, and text within a unified architecture. MGPC introduces an innovative modality dropout strategy, a Transformer-based fusion module, and a novel progressive generator to improve robustness, scalability, and geometric modeling capability. We further develop an automatic data generation pipeline and construct MGPC-1M, a large-scale benchmark with over 1,000 categories and one million training pairs. Extensive experiments on MGPC-1M and in-the-wild data demonstrate that the proposed method consistently outperforms prior baselines and exhibits strong generalization under real-world conditions.

💡 Research Summary

The paper addresses the long‑standing challenge of completing partial 3‑D point clouds, especially when models trained on synthetic benchmarks fail to generalize to real‑world objects. Existing approaches fall into three categories—3‑D CNN‑based, point‑based (e.g., PointNet, PCN), and Transformer‑based methods. While they achieve impressive numbers on datasets such as ShapeNet, they suffer from three fundamental limitations: (1) Single‑modality input – relying only on the sparse point cloud leaves the system vulnerable to shape ambiguity, because many different objects can share similar geometric outlines from a limited viewpoint. (2) Limited scalability – current datasets are relatively small, prompting researchers to embed strong inductive biases (local convolutions, K‑NN graphs, handcrafted priors) that do not scale well when data volume grows. (3) Restricted generative capacity – most decoders are either folding‑based (deforming a 2‑D grid) or single‑shot MLP regressors, which cannot faithfully model complex surface details.

To overcome these issues, the authors propose MGPC (Multimodal Generalizable Point Cloud completion), a unified framework that simultaneously consumes three modalities: a partial point cloud, an RGB image, and a natural‑language description of the object. The architecture consists of three stages:

-

Multimodal Token Extraction – modality‑specific encoders convert each input into a sequence of learnable tokens. The point encoder is a lightweight PointNet‑style network, the image encoder is a Vision‑Transformer (ViT) backbone, and the text encoder leverages a pretrained large‑scale language model (e.g., BERT/CLIP‑text).

-

Modality Fusion with Dropout – tokens are concatenated and processed by a scalable Transformer that interleaves self‑attention (capturing global geometry) and cross‑attention (aligning information across modalities). A novel modality dropout mechanism randomly masks the image and/or text tokens during training, forcing the network to learn robust representations that do not depend on any single auxiliary modality. Consequently, at inference time the model can operate with any subset of modalities, which is crucial for real‑world deployment where cameras may fail or textual annotations may be unavailable.

-

Progressive Generator – instead of a one‑shot decoder, MGPC employs a multi‑scale, coarse‑to‑fine generation pipeline. Several decoder blocks progressively increase the number of output points (e.g., 2 k → 4 k → 8 k). Each scale is supervised with Chamfer Distance and Earth Mover’s Distance losses, encouraging both overall shape fidelity and fine‑grained surface detail. This progressive refinement dramatically improves expressive power compared with folding‑based or plain MLP generators.

A major contribution is the MGPC‑1M dataset, built via an automated pipeline that harvests over 1 000 object categories from Objaverse and GSO, generates realistic RGB images and depth maps using physics‑based rendering, and creates textual captions with a large Vision‑Language Model. The pipeline also adds sensor‑like noise and occlusion patterns to mimic real depth cameras, producing more than one million paired samples (partial point cloud, image, text, complete point cloud). This dataset is an order of magnitude larger and more diverse than prior benchmarks.

Experimental results show that MGPC consistently outperforms strong baselines across multiple metrics. On the MGPC‑1M test split, it reduces Chamfer Distance by 12‑15 % relative to the best single‑modal and multimodal competitors (e.g., PCN, SeedFormer, ViPC, XMFNet). Ablation studies confirm that (i) modality dropout significantly mitigates over‑fitting and improves performance when auxiliary modalities are missing, and (ii) the progressive decoder yields higher-resolution reconstructions than folding‑based decoders. Zero‑shot evaluations on in‑the‑wild data captured by a robot arm demonstrate that MGPC produces smooth, complete shapes, whereas prior methods generate fragmented or noisy outputs.

In summary, MGPC advances point‑cloud completion by (1) leveraging multimodal cues for better generalization, (2) introducing a dropout‑driven robustness to missing modalities, (3) employing a scalable Transformer for flexible fusion, (4) using a progressive, multi‑scale decoder for high‑fidelity geometry, and (5) providing a large, realistic benchmark to drive future research. Potential extensions include incorporating additional sensor modalities (LiDAR, ultrasonic), enabling text‑conditioned shape editing, and optimizing the architecture for real‑time streaming applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment